Clear Sky Science

A multi-strategy framework for enhancing Harris hawks optimization for global optimization problems

Smarter Hunts Through Data

Modern hospitals collect an enormous amount of information about every patient—from blood tests and scans to sensor readings and questionnaires. Hidden inside all those measurements are patterns that can help doctors spot disease earlier and choose better treatments. But when there are far more measurements than patients, traditional computer models can get confused and latch onto noise instead of true signals. This paper presents a new way to help algorithms sift through huge medical datasets to find a small, reliable set of clues that still predicts illness accurately.

Why Too Much Information Can Hurt

Medical datasets today can include dozens or even thousands of measurements per person, while the number of patients remains relatively small. This imbalance makes computer models prone to “overfitting,” where they appear brilliant on past data but fail on new patients. One powerful remedy is feature selection: instead of feeding every lab value and scan measurement into the model, an algorithm first searches for a compact set of the most informative features. A diagnosis tool that relies on a handful of key biomarkers is not only easier to train and faster to run, it is also more transparent for clinicians, who can reason about why a decision was made.

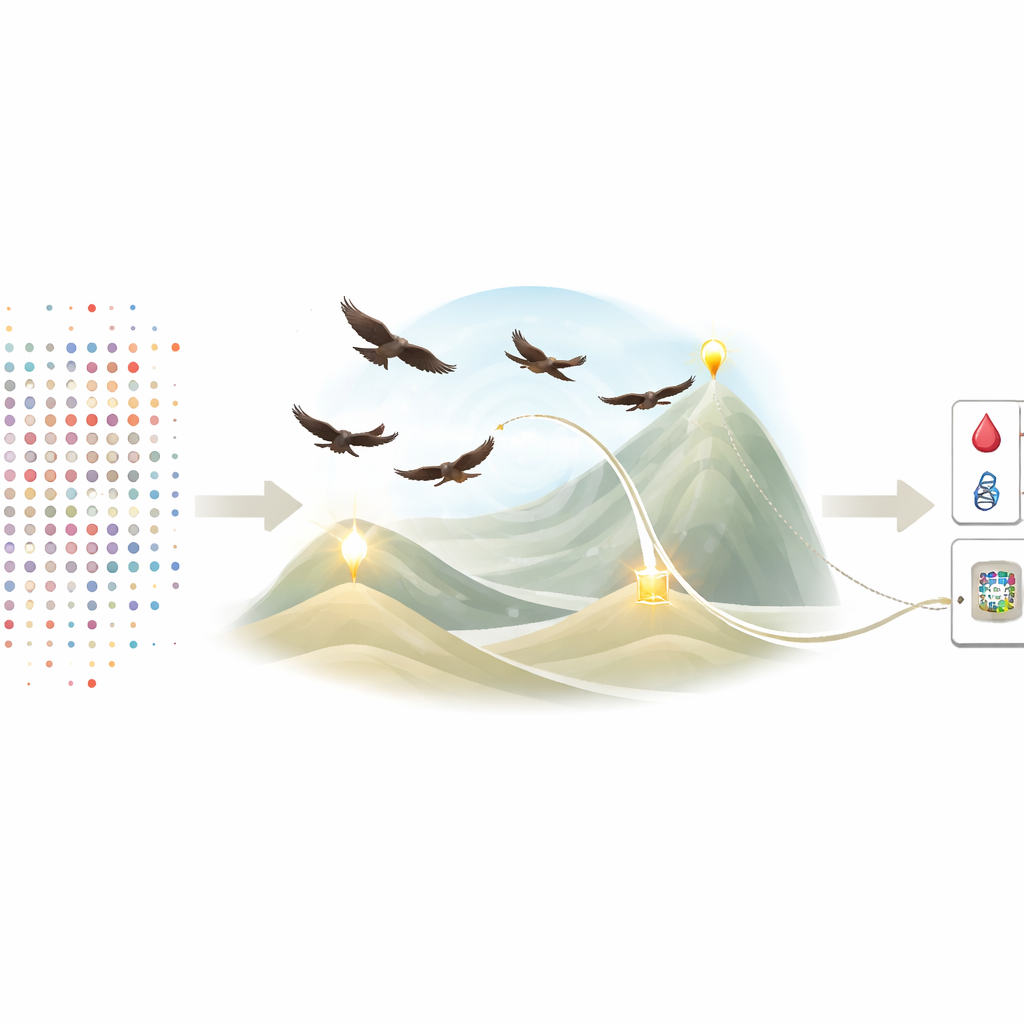

Inspired by Hawks on the Hunt

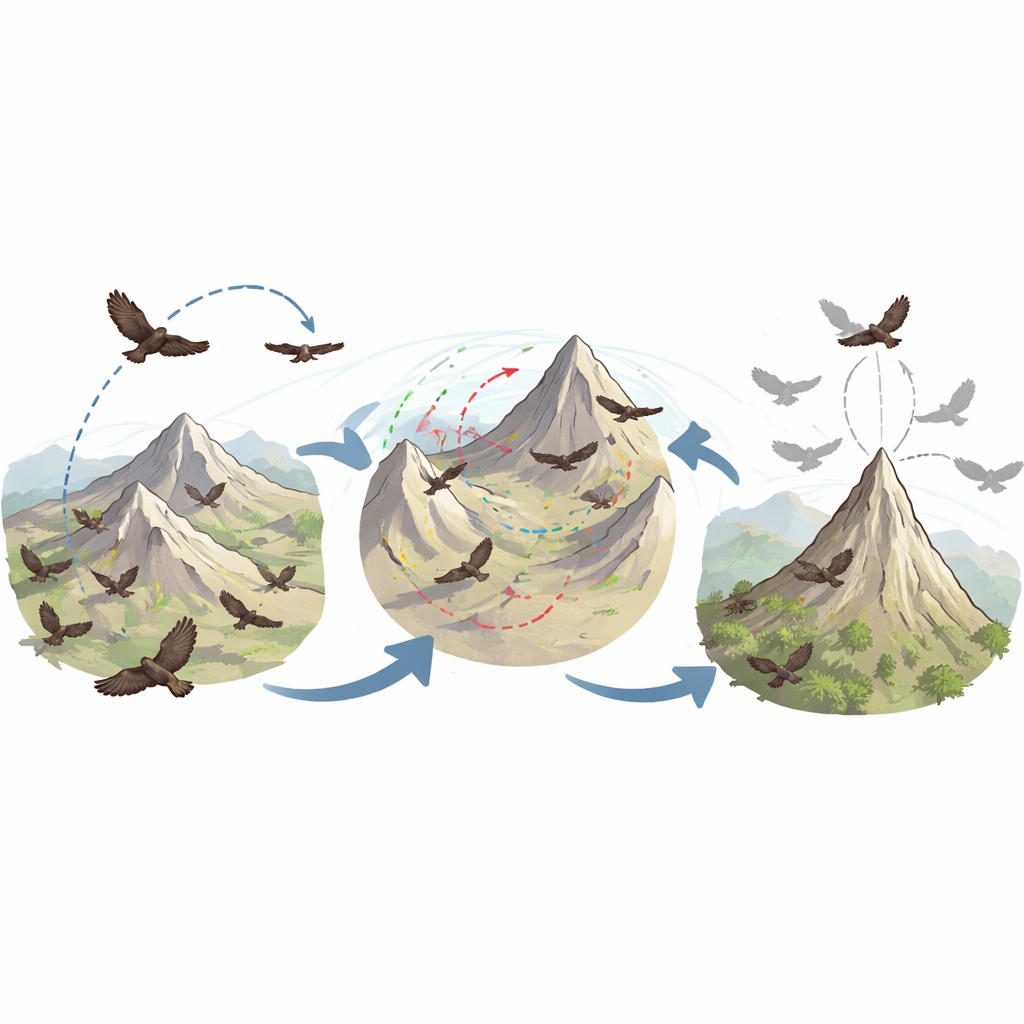

To choose that ideal subset of features, the authors build on a nature-inspired technique called Harris Hawks Optimization. In this metaphor, each possible feature subset is a “perch” for a hawk, and the search for the best subset mimics a coordinated hunt for prey across a landscape of possibilities. Standard Harris Hawks Optimization is good at roaming widely and then closing in on promising regions, but it can still get stuck circling a deceptive hilltop—a solution that looks good locally but is not truly best. These stalls are especially costly in medicine, where each trial solution must be judged by training and testing a classifier, a time‑consuming step.
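One way to picture the "perch" idea in code is shown below. This is a minimal sketch, not the authors' implementation: it assumes each hawk holds a list of numbers between 0 and 1, one per feature, and that a feature counts as selected when its number exceeds 0.5. The function name `position_to_mask`, the threshold, and the fallback for empty subsets are all illustrative assumptions.

```python
import random

def position_to_mask(position, threshold=0.5):
    """Turn a hawk's continuous position into a 0/1 feature mask.

    Assumption: a feature is selected when its coordinate is strictly
    greater than `threshold`. If nothing passes the threshold, the
    feature with the largest coordinate is kept so the subset is never empty.
    """
    mask = [1 if x > threshold else 0 for x in position]
    if sum(mask) == 0:
        best = max(range(len(position)), key=lambda i: position[i])
        mask[best] = 1
    return mask

# A small flock of 4 hawks searching over 6 hypothetical features.
rng = random.Random(0)
flock = [[rng.random() for _ in range(6)] for _ in range(4)]
masks = [position_to_mask(p) for p in flock]
```

Each mask is one candidate feature subset. Moving a hawk changes its numbers, which in turn flips features in or out of the subset.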

Three New Tricks for the Flock

The paper proposes a modified framework, dubbed MHHO, that gives the virtual hawks three new strategies. First, a leader‑guided perching step nudges exploring hawks to pay more attention to the current best solution instead of wandering blindly after random peers, helping the search reach promising regions faster. Second, an adaptive deception factor watches for signs that progress has stalled; when that happens, it temporarily enlarges the hawks’ random leaps so they can vault out of local traps, then shrinks them again once improvement resumes so the search can fine‑tune. Third, a hierarchical attack strategy changes how the final pounce works: instead of every hawk diving straight at the same spot, each one takes guidance from a slightly better “mentor” hawk, so the flock approaches the target from multiple angles and does not clump too tightly too soon.
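The three strategies can be illustrated with a rough sketch. The paper's actual update equations are not reproduced in this summary, so everything below is a simplified stand-in: the names `leader_guided_step`, `AdaptiveLeap`, and `mentor_index`, along with every parameter value (`weight`, `step`, `growth`, `cap`, `patience`), are hypothetical choices made only to show the general shape of each idea. Lower fitness is assumed to be better.

```python
def leader_guided_step(x, best, peer, weight=0.7, step=0.5):
    """Move a hawk toward a blend of the current best and a random peer.

    `weight` (assumed value) controls how strongly the leader dominates.
    """
    return [
        xi + step * (weight * (bi - xi) + (1 - weight) * (pi - xi))
        for xi, bi, pi in zip(x, best, peer)
    ]

class AdaptiveLeap:
    """Leap scale that grows while the best score stalls and resets on improvement."""

    def __init__(self, base=1.0, growth=1.5, cap=4.0, patience=3):
        self.base, self.growth, self.cap, self.patience = base, growth, cap, patience
        self.scale = base
        self.stalled = 0
        self.best = float("inf")

    def update(self, best_fitness):
        if best_fitness < self.best:
            # Progress resumed: remember the new best and return to small leaps.
            self.best = best_fitness
            self.stalled = 0
            self.scale = self.base
        else:
            # No progress: after `patience` stalled rounds, enlarge the leaps.
            self.stalled += 1
            if self.stalled >= self.patience:
                self.scale = min(self.cap, self.scale * self.growth)
        return self.scale

def mentor_index(fitness, i):
    """Index of the hawk ranked just ahead of hawk i; the best hawk mentors itself."""
    order = sorted(range(len(fitness)), key=lambda k: fitness[k])
    rank = order.index(i)
    return order[max(rank - 1, 0)]

leap = AdaptiveLeap()
scales = [leap.update(f) for f in [1.0, 0.8, 0.8, 0.8, 0.8, 0.8, 0.5]]
mentors = [mentor_index([0.4, 0.1, 0.3, 0.2], i) for i in range(4)]
```

In this toy run the leap scale stays at its base value until the best score has failed to improve three times in a row, grows on each further stall, and drops back as soon as a better score appears. The mentor list pairs every hawk with the one ranked immediately above it, rather than sending all of them straight to the leader.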

Testing on Math Puzzles and Real Patients

To see whether these new behaviors actually help, the authors ran two major test campaigns. In the first, MHHO and the original method tackled 23 standard mathematical benchmark problems that are widely used to challenge optimization algorithms. MHHO achieved better results on most of them, converging more quickly and more precisely to the true best value, albeit with somewhat higher computation time. In the second campaign, the team used MHHO as a feature selector on 15 publicly available medical datasets, including breast cancer, heart disease, thyroid disorders, Parkinson’s disease, and diabetic retinopathy. For each trial, a support vector machine classifier evaluated how well a given feature subset could distinguish healthy from sick patients (or separate multiple diagnostic categories).
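To make the scoring step concrete, here is a hedged sketch of how a feature subset might be turned into a single number for the hawks to minimize. The exact formula used in the paper is not given in this summary; the weighted sum below is a common convention in wrapper-style feature selection and is an assumption here, as are the function name `subset_fitness` and the weight `alpha=0.99`. The classifier itself is left out: `error_rate` stands for whatever error the support vector machine reports on held-out patients.

```python
def subset_fitness(error_rate, mask, alpha=0.99):
    """Score a feature subset; lower is better.

    error_rate: classifier error in [0, 1] measured with this subset.
    mask:       0/1 list marking which features are selected.
    alpha:      assumed trade-off weight between accuracy and subset size.
    """
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must lie in [0, 1]")
    n_selected = sum(mask)
    if n_selected == 0:
        # A classifier cannot be trained on zero features.
        return float("inf")
    size_ratio = n_selected / len(mask)
    return alpha * error_rate + (1 - alpha) * size_ratio

# Two subsets with the same error: the smaller one gets the better score.
lean = subset_fitness(0.10, [1, 0, 0, 0])
full = subset_fitness(0.10, [1, 1, 1, 1])
```

With a weight this close to 1, accuracy dominates the score, and subset size mostly acts as a tie-breaker between equally accurate candidates.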

Fewer Tests, Clearer Answers

Across these medical case studies, MHHO typically found very small sets of features—often just one or two measurements—that yielded equal or higher classification accuracy compared with the original approach. It also produced more stable solutions: repeated runs tended to pick similar subsets and reach similar accuracy, an important property when doctors and regulators demand reproducibility. Statistical tests confirmed that each of the three added strategies contributed meaningful improvements, and that together they offered a favorable balance between accuracy and sparsity. In practical terms, this means future diagnostic tools could be built on a lean panel of highly informative tests, reducing cost and complexity while remaining trustworthy.

What This Means for Patients and Practitioners

For non‑specialists, the main message is that better “search strategies” inside machine‑learning systems can make medical AI both smarter and simpler at the same time. By coordinating virtual hawks that explore, escape traps, and refine their aim intelligently, the proposed framework helps computers focus on the few measurements that matter most. Although the method still requires more validation beyond medicine and adds some computational overhead, it demonstrates how carefully designed optimization can move us closer to diagnostic models that are accurate, stable, and easier for clinicians to understand and adopt.

Citation: Al-Adwan, S., Abdullah, S., Alweshah, M. et al. A multi-strategy framework for enhancing Harris hawks optimization for global optimization problems. Sci Rep 16, 10614 (2026). https://doi.org/10.1038/s41598-026-45741-5

Keywords: feature selection, medical diagnosis, metaheuristic optimization, Harris hawks algorithm, high-dimensional data