Clear Sky Science

Investigating performance and key factors for real-world deployment of grain image classification using convolutional neural networks

Why Smarter Grain Checks Matter

Every loaf of bread starts with a tiny wheat kernel. Ensuring those kernels are healthy and free from hidden damage is vital for food quality, fair pricing, and cutting waste across the grain supply chain. Today, much of this checking is still done by eye, which is slow, tiring, and can vary from one inspector to another. This study explores how modern image-based artificial intelligence can reliably sort good from bad wheat kernels in real-world conditions, and what it takes to trust such systems outside the lab.

From Hand Inspection to Machine Eyes

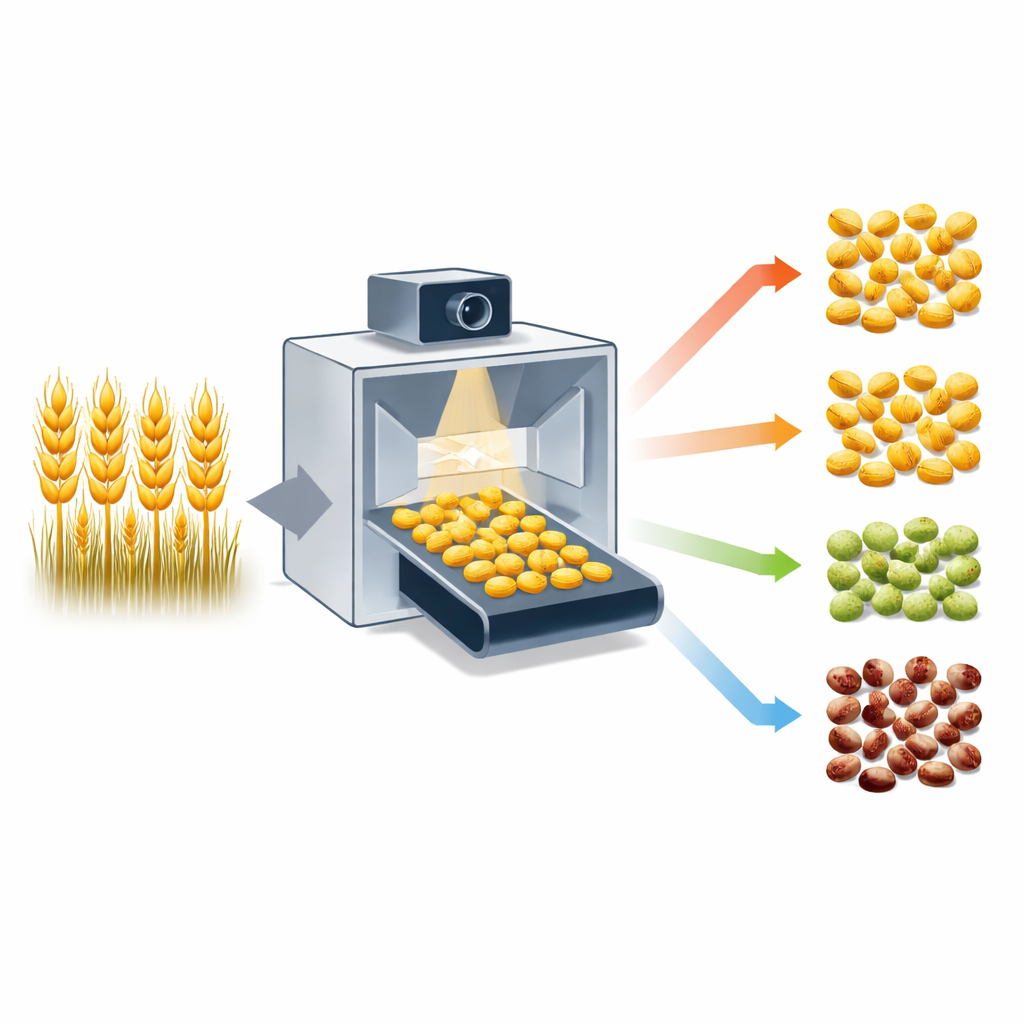

Traditionally, experts judge wheat by visually inspecting bulk samples, looking for defects such as mold, insect damage, or broken kernels. The paper focuses on an automated imaging system that photographs single kernels using mirrors and high-resolution cameras, capturing more than 90% of each kernel’s surface. These images are then fed to deep learning models called convolutional neural networks, or CNNs, which can learn to spot patterns related to quality problems without hand-crafted rules. The authors ask not just whether CNNs can classify kernels, but how reliably they work when faced with messy, real-world data.

Putting Three Smart Models to the Test

The researchers gathered more than 200,000 labeled images of individual wheat kernels, divided into eight categories such as healthy, moldy, sprouted, or insect-damaged. They compared three popular CNN designs—MobileNetV2, EfficientNetV2B0, and ResNet50V2—by training them on most of the images and holding out separate sets taken at different times as test data. Overall, the two larger models, ResNet50V2 and EfficientNetV2B0, reached over 96% accuracy, while the lighter MobileNetV2 performed slightly worse and was less stable from run to run. Yet when the team looked at each class separately, they found that rare defect types, like certain fungal infections, were much harder to classify correctly than the abundant healthy grains.
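To see why a high overall score can hide trouble with rare defects, here is a minimal sketch in Python. The labels and predictions are made-up toy data, not the study's results, and the function names are our own; the point is only that overall accuracy and per-class recall can tell very different stories when one class dominates.

```python
from collections import Counter

def overall_accuracy(y_true, y_pred):
    """Fraction of all kernels whose predicted label matches the true label."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)

def per_class_recall(y_true, y_pred):
    """For each true class, the fraction of its kernels that were recognized."""
    totals = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {label: hits[label] / totals[label] for label in totals}

# Toy data (assumption): 95 healthy kernels and only 5 moldy ones.
y_true = ["healthy"] * 95 + ["moldy"] * 5
# Hypothetical model: every healthy kernel is right, but only 1 of 5 moldy ones.
y_pred = ["healthy"] * 95 + ["moldy"] + ["healthy"] * 4

acc = overall_accuracy(y_true, y_pred)
recall = per_class_recall(y_true, y_pred)
print(f"overall accuracy: {acc:.2f}")
for label, value in recall.items():
    print(f"recall for {label}: {value:.2f}")
```

In this toy case the model scores 96% overall while catching only one in five moldy kernels, which is why class-by-class reporting matters for imbalanced data.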

Why Data Balance and Image Cleanup Matter

To probe the system’s robustness, the authors varied how they split the data into training and test sets, and repeated training with different random seeds. Performance dropped when the test set came from a time point that looked somewhat different from the training data, especially for rare or visually ambiguous defects. By examining misclassified kernels using interactive visual maps, they discovered that some mistakes stemmed from uncertain human labels and from kernels that could plausibly belong to more than one category. They also tested whether the built-in image pre-processing (segmenting kernels from the background, adjusting color, and downscaling images) actually helped. Surprisingly, pre-processed and downsampled images (256×256 pixels) performed as well overall as full high-resolution raw images, and even better for several defect types, though some rare classes still benefited from higher detail.
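The idea of testing on images from a different time point can be sketched as follows. This is an illustration under our own assumptions, not the authors' pipeline: the record fields (`image`, `label`, `session`) and the session names are hypothetical stand-ins for whatever metadata identifies when each kernel was photographed.

```python
def split_by_session(records, test_session):
    """Hold out every record from one acquisition session as the test set."""
    train = [r for r in records if r["session"] != test_session]
    test = [r for r in records if r["session"] == test_session]
    return train, test

# Hypothetical metadata: six kernels photographed in three sessions A, B, C.
records = [
    {"image": "k1.png", "label": "healthy", "session": "A"},
    {"image": "k2.png", "label": "moldy", "session": "A"},
    {"image": "k3.png", "label": "healthy", "session": "B"},
    {"image": "k4.png", "label": "sprouted", "session": "B"},
    {"image": "k5.png", "label": "healthy", "session": "C"},
    {"image": "k6.png", "label": "moldy", "session": "C"},
]

# Train on sessions A and B, test on the unseen session C.
train, test = split_by_session(records, test_session="C")
print(len(train), "training records,", len(test), "test records")

# Rotating the held-out session gives one evaluation per time point.
for session in sorted({r["session"] for r in records}):
    tr, te = split_by_session(records, session)
    print(f"hold out {session}: train={len(tr)} test={len(te)}")
```

Splitting by session rather than at random keeps images from the held-out time point entirely out of training, so the test score reflects how the model copes with conditions it has not seen.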

Designing Systems for the Real World

The findings point to several key ingredients for trustworthy grain inspection by AI. First, having enough representative examples of each defect type is crucial; when some defects are rare or inconsistently labeled, even powerful models struggle. Second, pre-processing that standardizes lighting and isolates kernels tends to boost performance and stability, but there is a trade-off: certain subtle defects require the extra detail preserved in higher-resolution images. The authors suggest that combining information from both pre-processed and full-resolution images, or fusing multiple models, could further improve reliability, especially for tricky cases where damage overlaps between categories.
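One common way to fuse models is to average the class probabilities they output, sometimes called soft voting. The sketch below assumes that approach; the summary does not say which fusion method the authors have in mind, and the class names and probability values are invented for illustration.

```python
def average_probabilities(prob_lists):
    """Average per-class probabilities across models (soft voting)."""
    n_models = len(prob_lists)
    n_classes = len(prob_lists[0])
    return [sum(p[i] for p in prob_lists) / n_models for i in range(n_classes)]

def fuse_and_predict(prob_lists, class_names):
    """Return the class with the highest averaged probability, plus the averages."""
    fused = average_probabilities(prob_lists)
    best = max(range(len(fused)), key=fused.__getitem__)
    return class_names[best], fused

class_names = ["healthy", "moldy", "sprouted"]

# Invented outputs for one kernel from two hypothetical models, for example
# one fed the pre-processed image and one fed the full-resolution image.
model_a = [0.5, 0.4, 0.1]  # leans toward "healthy"
model_b = [0.2, 0.7, 0.1]  # leans toward "moldy"

label, fused = fuse_and_predict([model_a, model_b], class_names)
print("fused prediction:", label)
print("fused probabilities:", [round(p, 2) for p in fused])
```

Here the two models disagree, and the averaged probabilities favor "moldy" because the second model is more confident than the first. Weighted averages or a small model trained on the combined outputs are alternatives, at the cost of extra tuning.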

What This Means for Future Grain Sorting

In everyday terms, the study shows that AI can already match or exceed human-level wheat kernel inspection, provided it is trained on the right kind of data and evaluated carefully. The best-performing models were accurate and consistent overall, but they exposed weak spots where rare or confusing defects and imperfect labels limit reliability. By highlighting the roles of data balance, smart image cleanup, and class-aware testing, the work lays out a roadmap for turning deep learning from a promising lab tool into a dependable partner on the grain elevator floor, helping farmers, millers, and food producers make faster, more objective quality decisions.

Citation: Kumari, R., Persson, J., Eklöf, V.E. et al. Investigating performance and key factors for real-world deployment of grain image classification using convolutional neural networks. Sci Rep 16, 12357 (2026). https://doi.org/10.1038/s41598-026-45314-6

Keywords: wheat grain quality, image-based inspection, deep learning, convolutional neural networks, automated grain sorting