Clear Sky Science · en

A contrastive learning framework with adaptive feature fusion for brain tumor classification

Why smarter reading of brain scans matters

Brain tumors are dangerous not only because they invade vital tissue, but also because they can be hard to tell apart on medical scans. Doctors rely heavily on MRI images to decide how serious a tumor is and which treatment to use. Yet tumors that behave very differently in the body can look surprisingly similar on the screen. This study presents a new computer method that learns to spot subtle differences in MRI images, aiming to support doctors with faster and more reliable tumor classification.

Seeing the challenge inside the skull

Brain tumors arise when cells inside the skull grow out of control, pressing on nearby areas and causing symptoms like headaches, seizures, or vision problems. On MRI scans, three common tumor types (meningioma, glioma, and pituitary tumor) often overlap in appearance. To make matters harder, hospitals image the brain in several planes (axial, coronal, sagittal), so the same tumor can look quite different from one view to the next. Existing deep learning systems can recognize broad patterns in these images, but they often miss the very fine details in shape, boundary, and texture that distinguish one tumor type from another.

A new way to teach computers what to notice

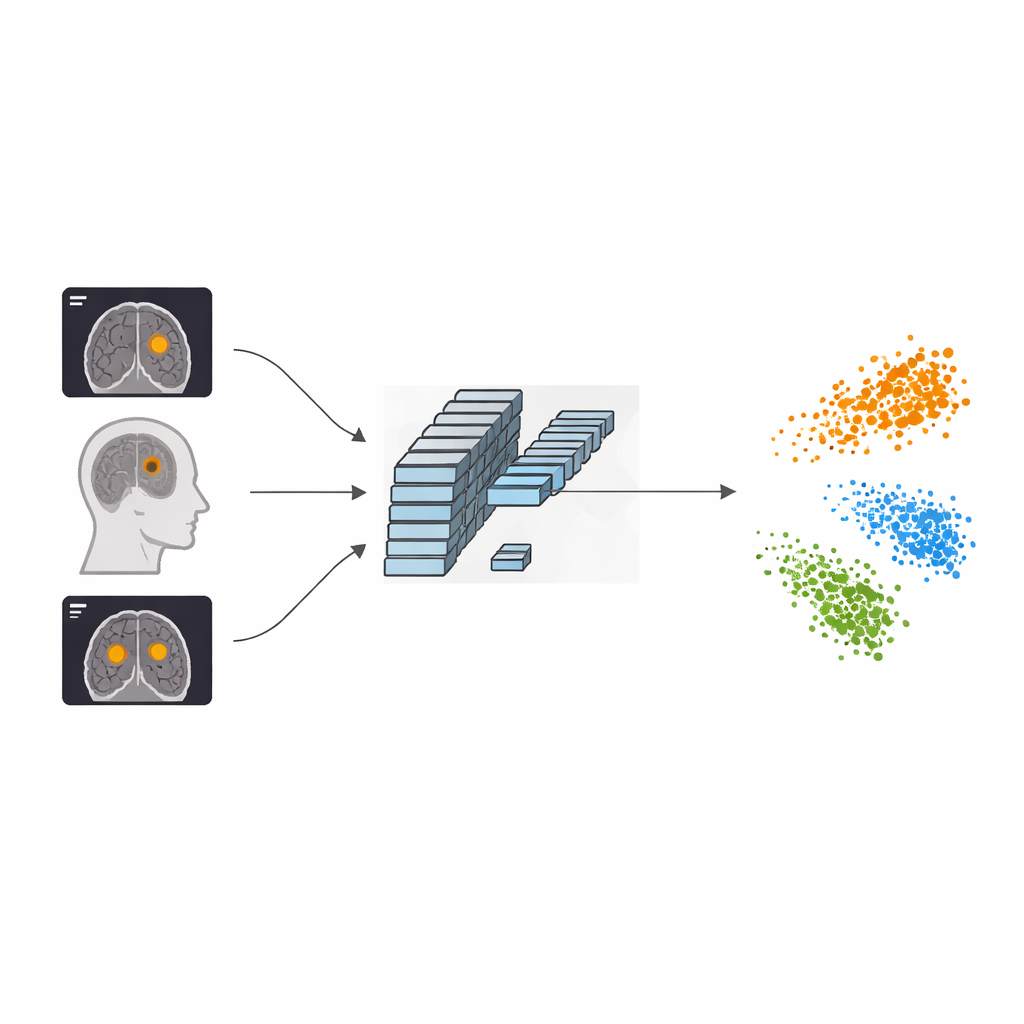

The authors propose a framework called AFF-CL that reshapes how a neural network learns from MRI scans. Instead of only learning to match an image with its label (for example, “glioma”), the system also learns by contrasting images against one another. It builds a kind of memory queue that stores feature fingerprints of many past images along with their tumor types. When a new scan comes in, the model compares its internal fingerprint not just to one partner image, but to many stored examples of the same tumor type and of other types. Features from the same category are pulled closer together, while those from different categories are pushed apart, helping the computer carve out clearer boundaries between tumor classes in its internal “map” of images.
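To make the "pull together, push apart" idea concrete, here is a minimal sketch of a label-aware contrastive loss computed against a memory queue. It is an illustration only, not the authors' implementation: the names `MemoryQueue` and `queue_contrastive_loss`, the temperature value, and the toy two-dimensional features are all assumptions made for this example.

```python
import math
from collections import deque


def l2_normalize(vec):
    """Scale a vector to unit length (a zero vector is returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


class MemoryQueue:
    """Hypothetical fixed-size FIFO of (feature, label) pairs from past images."""

    def __init__(self, max_size):
        # deque with maxlen drops the oldest entry once the queue is full
        self.items = deque(maxlen=max_size)

    def enqueue(self, feature, label):
        self.items.append((l2_normalize(feature), label))


def queue_contrastive_loss(query, label, queue, temperature=0.1):
    """Loss for one query feature compared with every stored feature.

    Stored features that share the query's label count as positives and
    all others as negatives. Returns None if the queue has no positive.
    """
    q = l2_normalize(query)
    logits = [dot(q, feat) / temperature for feat, _ in queue.items]
    positives = [lg for lg, (_, lab) in zip(logits, queue.items) if lab == label]
    if not positives:
        return None
    # Numerically stable log-sum-exp over all queue entries
    top = max(logits)
    log_denom = top + math.log(sum(math.exp(lg - top) for lg in logits))
    # Average negative log-probability assigned to the positives
    return -sum(p - log_denom for p in positives) / len(positives)


queue = MemoryQueue(max_size=4)
queue.enqueue([1.0, 0.0], "glioma")
queue.enqueue([0.9, 0.1], "glioma")
queue.enqueue([0.0, 1.0], "meningioma")
queue.enqueue([-1.0, 0.2], "pituitary")

# A query that sits near the stored glioma features gets a low loss;
# one that sits near the meningioma feature gets a high loss.
loss_near = queue_contrastive_loss([1.0, 0.05], "glioma", queue)
loss_far = queue_contrastive_loss([0.0, 1.0], "glioma", queue)
```

In training, minimizing this quantity would nudge the network to place each new scan's fingerprint close to stored fingerprints of the same tumor type and away from the rest.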

Looking at the brain both wide and close-up

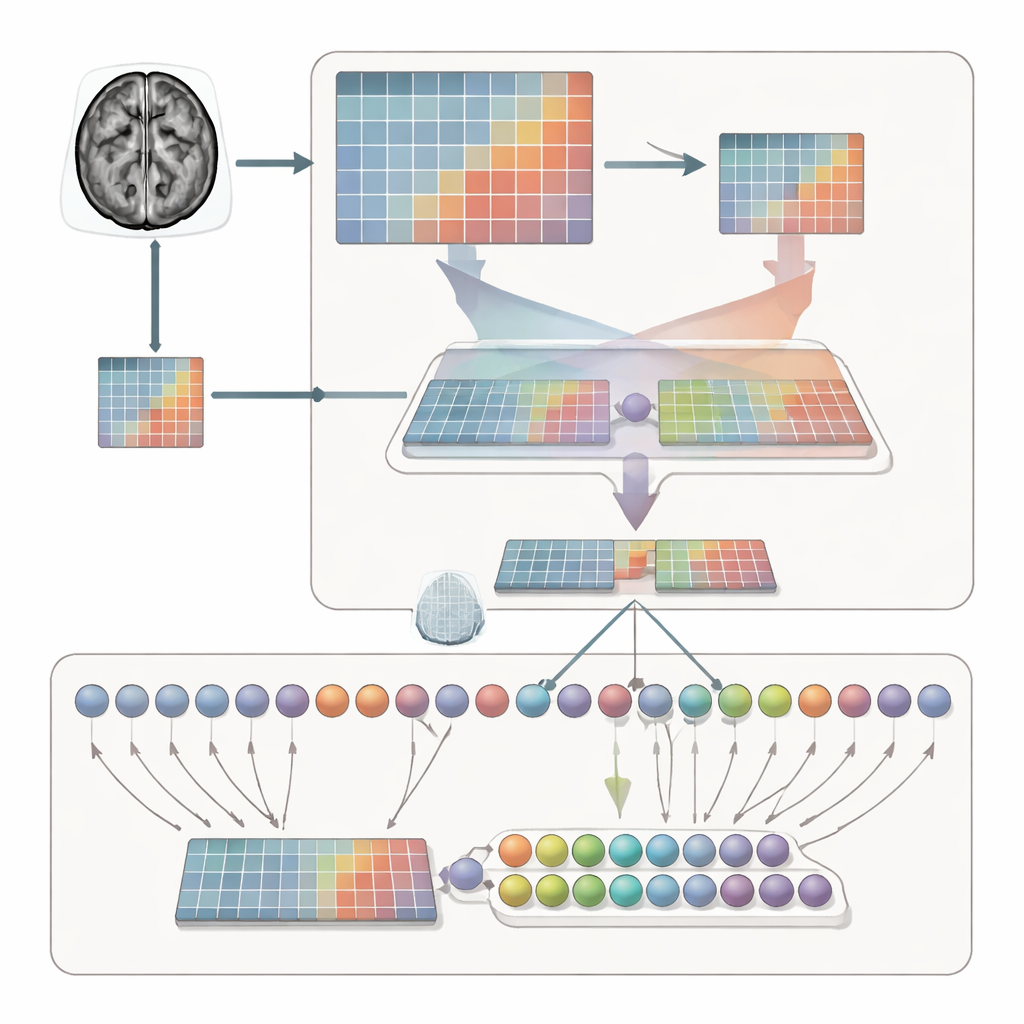

Simply separating categories is not enough; the system must also learn where to look within each MRI slice. To achieve this, the framework processes each scan in two complementary ways. One branch sees the whole brain, capturing overall context, size, and location of the tumor. The other branch zooms in on a cropped region, forcing the model to inspect local structure, edges, and textures. An adaptive feature fusion (AFF) module then acts like a smart mixer, deciding, point by point, how much to trust the broad view versus the close-up. Using a built-in attention mechanism, it strengthens signals where local detail is crucial and reinforces them with the wider anatomical context, producing a richer combined representation for final classification.
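The "smart mixer" can be pictured as a learned gate that weighs the two branches element by element. The sketch below is a simplified stand-in rather than the paper's AFF module: the function `adaptive_fuse`, its per-element weights, and the sigmoid gate are assumptions chosen to show the general idea of attention-weighted blending.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def adaptive_fuse(global_feat, local_feat, w_global, w_local, bias):
    """Blend whole-brain and close-up features element by element.

    Each gate lies between 0 and 1: values near 1 favor the close-up
    (local) branch, values near 0 favor the whole-brain (global) branch.
    The weights and bias are hypothetical learned parameters.
    """
    sizes = {len(global_feat), len(local_feat), len(w_global), len(w_local), len(bias)}
    if len(sizes) != 1:
        raise ValueError("all inputs must have the same length")
    fused, gates = [], []
    for g, l, wg, wl, b in zip(global_feat, local_feat, w_global, w_local, bias):
        gate = sigmoid(wg * g + wl * l + b)
        gates.append(gate)
        fused.append(gate * l + (1.0 - gate) * g)
    return fused, gates


global_feat = [0.2, 0.8, 0.5]
local_feat = [0.9, 0.1, 0.5]
# With zero weights and zero bias every gate is 0.5, so the result is a plain average.
fused, gates = adaptive_fuse(global_feat, local_feat, [0.0] * 3, [0.0] * 3, [0.0] * 3)
```

Because the gate depends on the features themselves, a trained version could lean on the close-up view where texture matters and fall back on the wide view elsewhere.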

Putting the method to the test

The researchers evaluated AFF-CL on a widely used public dataset of 3,064 brain MRI images from 233 patients. The dataset includes the three main tumor types and images taken from multiple viewpoints. After training, the new method reached a reported accuracy of 99.35%, surpassing many recent deep learning approaches that rely on deeper networks or conventional attention alone. The authors also showed that their approach boosts performance across different backbone architectures, from lightweight convolutional networks suitable for smaller clinics to more advanced transformer models. Visualizations of the network’s internal activity indicate that, with AFF-CL, attention concentrates more sharply on the actual tumor regions and the three tumor types separate into well-defined clusters in feature space.
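Because the 3,064 images come from only 233 patients, several images belong to the same person, and a test that mixes one patient's images across training and test sets can look better than it should. The sketch below shows one generic way to split by patient instead of by image. It is not the authors' evaluation protocol: the function `patient_level_split`, the record format, and the 20% test fraction are assumptions for illustration.

```python
import random
from collections import defaultdict


def patient_level_split(records, test_fraction=0.2, seed=0):
    """Split (patient_id, image_id) records so no patient appears in both sets."""
    by_patient = defaultdict(list)
    for patient_id, image_id in records:
        by_patient[patient_id].append(image_id)

    # Shuffle patients, not images, with a fixed seed for repeatability
    patients = sorted(by_patient)
    random.Random(seed).shuffle(patients)

    n_test = max(1, round(len(patients) * test_fraction))
    test_patients = set(patients[:n_test])

    train = [(p, i) for p, i in records if p not in test_patients]
    test = [(p, i) for p, i in records if p in test_patients]
    return train, test


# Toy data: 10 hypothetical patients with 3 images each
records = [(f"patient_{p}", f"img_{p}_{k}") for p in range(10) for k in range(3)]
train, test = patient_level_split(records)
```

Every image from a given patient lands on one side of the split, so the test set measures how well the model handles people it has never seen.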

What this means for patients and clinics

For a non-specialist, the key message is that AFF-CL helps computers learn to recognize brain tumors in MRI scans with high accuracy by both comparing images more intelligently and blending “zoomed-out” and “zoomed-in” views of the brain. While the method currently requires more computing power to train and still needs stricter patient-level testing, the authors report that it already outperforms existing tools on two different datasets. In the long run, such systems could act as a second pair of eyes for radiologists, reducing missed diagnoses, speeding up treatment decisions, and making advanced brain tumor analysis more accessible in a wider range of hospitals.

Citation: Peng, Y., He, S. & Chang, L. A contrastive learning framework with adaptive feature fusion for brain tumor classification. Sci Rep 16, 14504 (2026). https://doi.org/10.1038/s41598-026-44887-6

Keywords: brain tumor MRI, deep learning diagnosis, medical image analysis, contrastive learning, feature fusion