Clear Sky Science · en

A smart monocular vision metrology system based on computer for standing long jump

Why Jumping Distance Matters

The standing long jump is one of the simplest ways schools around the world test students’ fitness: stand at a line, jump as far as you can, and measure the distance. But in crowded schoolyards this supposedly simple test can turn messy. Teachers still bend down with measuring tapes, decisions can be disputed, and recording thousands of jumps is slow and tiring. This study introduces a camera‑based system that can automatically and accurately measure jump distance from ordinary video, promising quicker, more objective testing without expensive specialized hardware.

From Tape Measure to Smart Camera

Today’s automated long‑jump tools often rely on ultrasound sensors, pressure mats, or beams of light stretched across the landing area. While effective, they are costly to install, sensitive to weather, and hard to maintain for large‑scale school use. The authors instead treat the task as a vision problem: if a single camera can see both the athlete’s feet and the jump mat, software should be able to work out how far the person has traveled. They design a complete pipeline that turns raw video into a final distance number, all in real time, using a mix of modern computer vision and careful geometric reasoning.

How the System Sees a Jump

The process begins with a high‑speed camera placed a couple of meters to the side of the jump mat, capturing crisp 240‑frame‑per‑second video. Software first breaks this video into individual frames and uses an off‑the‑shelf detector to quickly find when an athlete enters and leaves the scene. Within this window, it searches for the moment when the heel is highest in the image (the peak of the jump) and then for the later frames where the heel position stops changing, indicating a stable landing. This automatic key‑frame selection means the system does not waste time analyzing every single frame, and it avoids being confused by partial views when the athlete is only half in the picture.
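The key‑frame search described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: it assumes a hypothetical upstream step has already produced one heel height per frame (`heel_y`, in image rows, with `None` when the athlete is not fully in view), it tracks only the vertical heel position, and the `stable_frames` and `tolerance_px` thresholds are made‑up values.

```python
from typing import List, Optional, Tuple


def select_key_frames(
    heel_y: List[Optional[float]],
    stable_frames: int = 12,    # assumed: frames the heel must stay still
    tolerance_px: float = 1.5,  # assumed: allowed heel jitter in pixels
) -> Tuple[int, int]:
    """Return (peak_index, landing_index) from per-frame heel heights.

    heel_y[i] is the heel's image row in frame i (smaller means higher in
    the image), or None when the athlete is only partly visible.
    """
    visible = [i for i, y in enumerate(heel_y) if y is not None]
    if not visible:
        raise ValueError("athlete is never fully visible")

    # Peak of the jump: the frame where the heel is highest in the image.
    peak = min(visible, key=lambda i: heel_y[i])

    # Landing: the first run of consecutive frames after the peak in which
    # the heel position barely changes.
    for start in range(peak + 1, len(heel_y) - stable_frames + 1):
        window = heel_y[start:start + stable_frames]
        if any(y is None for y in window):
            continue  # skip runs that contain partial views
        if max(window) - min(window) <= tolerance_px:
            return peak, start
    raise ValueError("no stable landing found after the peak")
```

Only the returned landing frame needs to go through the heavier segmentation step, which is what keeps the per‑jump cost low.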

Finding Mats, Heels, and True Distance

Once the landing frame is identified, a custom vision model called FastNetSeg takes over. This lightweight network combines two ideas: a Transformer branch that captures the overall layout of the scene and a compact convolutional branch that focuses on local detail. Together, they color in the pixels that belong to the athlete and those that belong to the jump mat. From the mat mask, an algorithm traces its outer boundary, smooths away small irregularities, and distills it down to four reliable corner points. From the athlete mask, another algorithm looks at the outline of the lower‑left body region, filters out irrelevant areas, and pinpoints the heel using curvature—essentially finding the sharp turn where the back of the foot meets the ground. These few key points provide the raw ingredients for measurement.
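To make the mat step concrete, here is a small sketch of how four corners might be pulled out of a binary mat mask. It swaps in a simpler technique than the boundary tracing and smoothing described above: it takes the mask pixels that are most extreme along the two image diagonals. That shortcut assumes the mat appears as a roughly upright quadrilateral in the image, and the function name `mat_corners` is hypothetical.

```python
from typing import List, Tuple

Point = Tuple[int, int]  # (x, y) in pixels, with y growing downward


def mat_corners(mask: List[List[int]]) -> List[Point]:
    """Return corners as [top-left, top-right, bottom-right, bottom-left].

    mask[y][x] is nonzero where the segmentation marked the mat.
    Stand-in heuristic: extreme points along the image diagonals.
    """
    pixels = [(x, y) for y, row in enumerate(mask)
              for x, value in enumerate(row) if value]
    if not pixels:
        raise ValueError("empty mat mask")

    top_left = min(pixels, key=lambda p: p[0] + p[1])      # smallest x + y
    bottom_right = max(pixels, key=lambda p: p[0] + p[1])  # largest x + y
    top_right = max(pixels, key=lambda p: p[0] - p[1])     # largest x - y
    bottom_left = min(pixels, key=lambda p: p[0] - p[1])   # smallest x - y
    return [top_left, top_right, bottom_right, bottom_left]
```

A fixed corner order matters because the later image‑to‑ground mapping pairs each image corner with a known corner of the physical mat.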

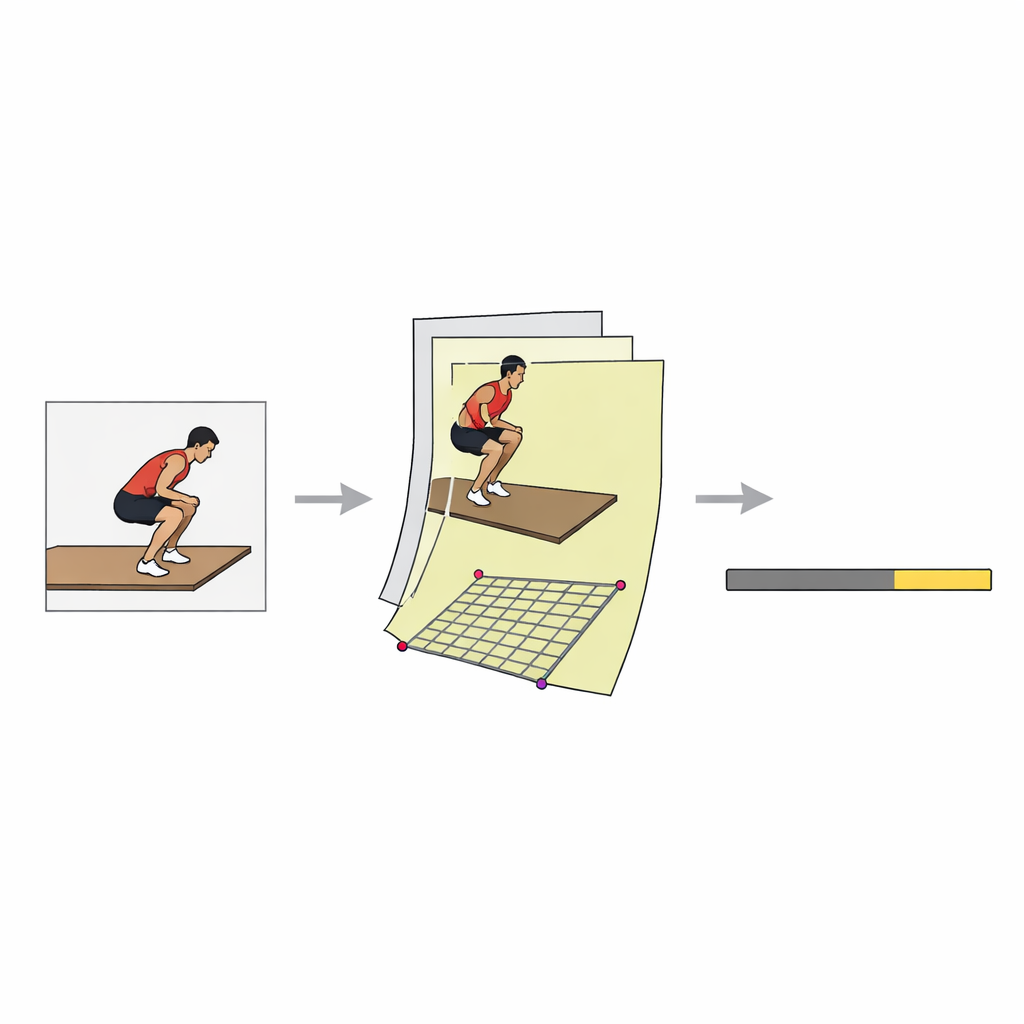
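The curvature‑based heel search in this section can also be illustrated with a short sketch. It assumes the outline of the lower body is already available as an ordered, closed list of points, that the athlete jumps toward the right of the image (so the heel lies in the left half of the outline), and that the heel sits within a few pixels of the lowest point. The names `turning_angle` and `find_heel` and the `step` and `ground_band` defaults are illustrative assumptions, not values from the paper.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) in pixels, with y growing downward


def turning_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in radians at vertex b between the segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1, n2 = math.hypot(*v1), math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        return math.pi  # degenerate neighbours: treat as a straight line
    cos_t = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    return math.acos(max(-1.0, min(1.0, cos_t)))


def find_heel(contour: List[Point], step: int = 1,
              ground_band: float = 3.0) -> Point:
    """Pick the sharpest turn in the lower-left part of a closed outline."""
    n = len(contour)
    xs = [p[0] for p in contour]
    ys = [p[1] for p in contour]
    mid_x = (min(xs) + max(xs)) / 2  # keep only the rear (left) half
    ground_y = max(ys)               # lowest point of the outline

    best, best_angle = None, math.pi + 1
    for i, p in enumerate(contour):
        if p[0] > mid_x or p[1] < ground_y - ground_band:
            continue  # filter out points that cannot be the heel
        angle = turning_angle(contour[(i - step) % n], p,
                              contour[(i + step) % n])
        if angle < best_angle:  # a smaller angle means a sharper turn
            best, best_angle = p, angle
    if best is None:
        raise ValueError("no candidate points near the ground")
    return best
```

On a real outline with many closely spaced points, a larger `step` would likely be needed so that pixel‑level noise is not mistaken for a sharp corner.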

Turning Pixels into Centimeters

Because the camera views the mat at an angle, distances in the image do not directly match real‑world centimeters: a pixel near the far edge of the mat may represent more physical distance than one near the camera. To overcome this, the system computes a mapping from image coordinates to the flat surface of the mat, using a standard geometric tool called a perspective transformation. Because the true length and width of the mat are known, the four detected corner points fix this mapping, and the software can then work out where any visible point, especially the heel, would sit on a top‑down map of the ground. It then applies an extra correction step based on a simple polynomial curve, fitted from calibration jumps, to reduce the small systematic errors that remain near the edges of the camera’s view.
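As a rough sketch of this pixel‑to‑ground conversion, the code below fits a perspective transformation from four image corners to the known mat rectangle, maps a point onto the mat plane, and fits a polynomial correction by least squares. It is written in plain Python under stated assumptions: the function names are hypothetical, distances are in meters, the polynomial degree of 2 is a guess, and the correction is modeled as a function of the raw distance alone, which the source does not specify.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def solve_linear(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_homography(image_pts: Sequence[Point],
                   mat_pts: Sequence[Point]) -> List[float]:
    """Return h11..h33 (row-major, h33 fixed to 1) from four point pairs."""
    a, b = [], []
    for (x, y), (u, v) in zip(image_pts, mat_pts):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    return solve_linear(a, b) + [1.0]


def to_mat_plane(h: Sequence[float], point: Point) -> Point:
    """Map an image point to top-down mat coordinates."""
    x, y = point
    w = h[6] * x + h[7] * y + h[8]
    return ((h[0] * x + h[1] * y + h[2]) / w,
            (h[3] * x + h[4] * y + h[5]) / w)


def fit_poly_correction(raw: Sequence[float], truth: Sequence[float],
                        degree: int = 2) -> List[float]:
    """Least-squares polynomial from raw distances to tape-measured ones."""
    n = degree + 1
    ata = [[sum(r ** (i + j) for r in raw) for j in range(n)]
           for i in range(n)]
    atb = [sum(t * r ** i for r, t in zip(raw, truth)) for i in range(n)]
    return solve_linear(ata, atb)  # coefficients, lowest power first


def apply_poly(coeffs: Sequence[float], d: float) -> float:
    """Evaluate the fitted correction polynomial at distance d."""
    return sum(c * d ** i for i, c in enumerate(coeffs))
```

If the take‑off line is placed at the mat coordinate 0 along the jump direction (an assumption here), the heel's first coordinate from `to_mat_plane` is the raw jump distance, and `apply_poly` then yields the corrected value.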

How Well It Works in the Real World

To test the system under realistic conditions, the researchers built a dedicated dataset: 1,200 jumps from 200 university students, filmed outdoors at different times of day and in varying weather. Human annotators drew pixel‑level outlines of athletes and mats to train and evaluate the model. On modern but readily available GPU hardware, the complete system processes about 23 frames per second, fast enough for live use during school tests. Crucially, when its distance estimates are compared to careful tape measurements, the average error is only about 0.71 centimeters—less than the width of a finger. Removing any of the key modules, such as the incomplete‑athlete filter, the single‑view mapping, or the precise heel‑finding step, causes accuracy to drop sharply, underscoring the importance of the full design.

A Clearer, Fairer Jump Test

In plain terms, this work shows that a single smart camera can replace manual measuring tapes and costly sensor setups for the standing long jump, without sacrificing accuracy. By combining fast video analysis, precise outlining of the jumper and mat, careful geometric conversion from image to ground, and a final error‑smoothing step, the system keeps its average error under a centimeter while running in real time. With shared code and calibration tools, schools and sports programs could deploy this approach widely, making fitness testing faster, fairer, and less dependent on human judgment.

Citation: Kuang, G., Li, S., Liu, Y. et al. A smart monocular vision metrology system based on computer for standing long jump. Sci Rep 16, 14611 (2026). https://doi.org/10.1038/s41598-026-44523-3

Keywords: standing long jump, computer vision, sports measurement, deep learning, fitness testing