Clear Sky Science

Multimodal generative adversarial networks for piano fingering correction and performance expressiveness modeling through audio-visual feature fusion

Smarter Practice for Everyday Piano Players

Learning the piano usually means years of lessons with a watchful teacher who listens to every note and studies every hand movement. This research explores how artificial intelligence can share some of that load, turning an ordinary piano, a microphone, and a camera into a digital coach that spots awkward fingering and flat, mechanical playing, then offers gentle course corrections almost in real time.

Why Watching Matters as Much as Listening

Most music software focuses on sound alone, judging which notes you hit and how accurate your rhythm is. Human teachers, by contrast, care just as much about how you move: which finger you choose, how your wrist travels across the keys, and how your touch shapes the tone. The authors argue that a useful piano assistant must do both at once. Their system listens to the audio while also analyzing video of the hands, learning how physical gestures and resulting sounds line up. This dual view lets the computer notice, for example, when you play the correct note but use an awkward finger that could limit speed, comfort, or expression later on.

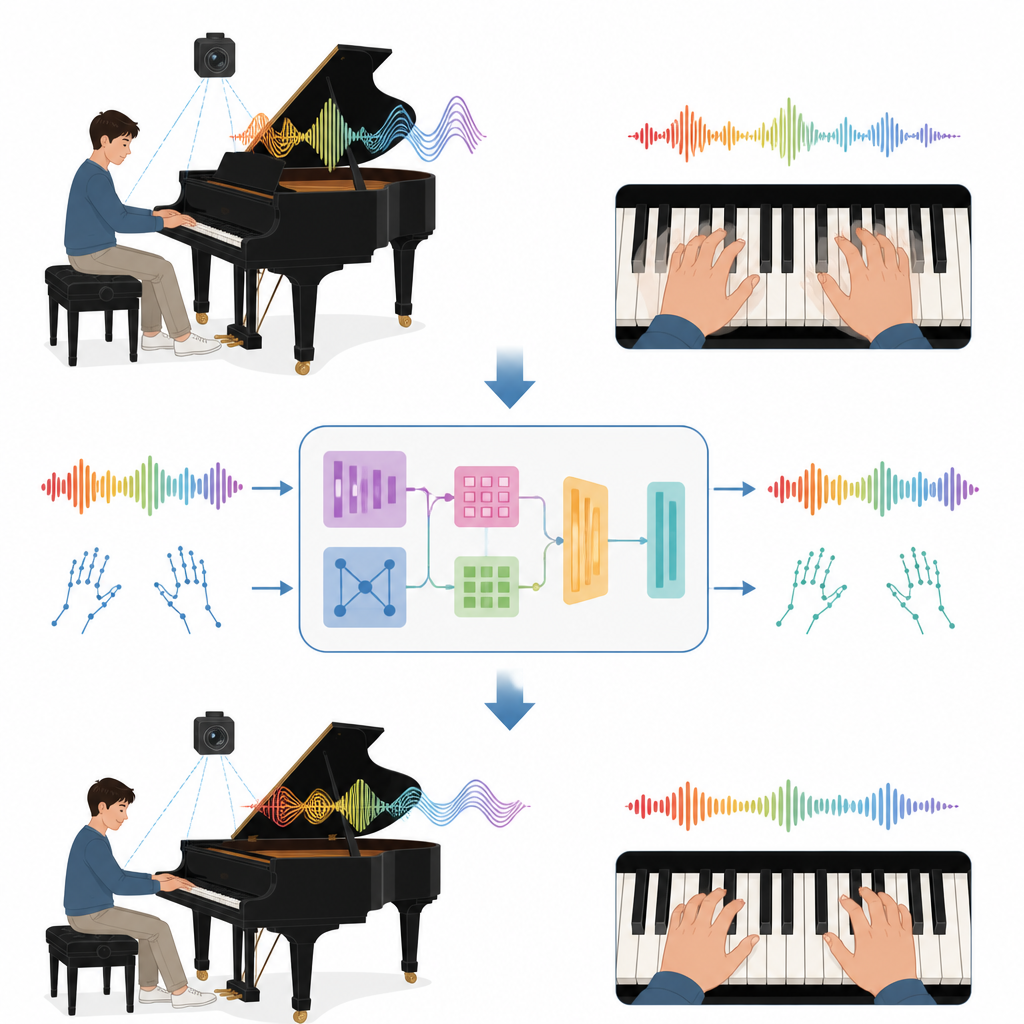

How the Digital Coach Sees and Hears You

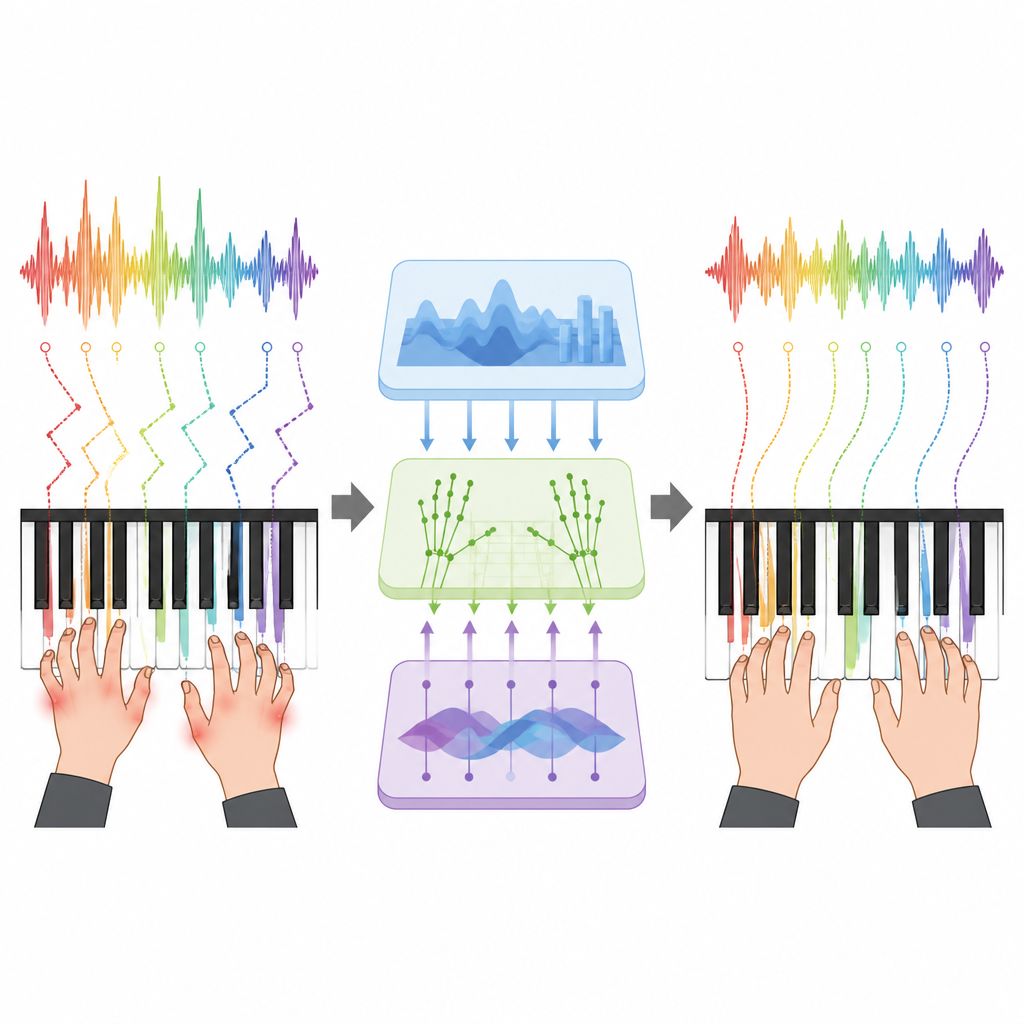

Behind the scenes, the system breaks sound and video into tiny slices and then learns patterns over time. From the audio, it extracts rich fingerprints of each moment, capturing pitch, loudness, and brightness of tone. From the video, it tracks the positions of 21 points on each hand, following how fingers travel over the keyboard. A special alignment step links each note’s sound with the instant a finger presses a key. A central “fusion” module then decides how much to trust each source at every moment, giving more weight to the camera when the hands are clear, or to the sound when fingers are hidden or the video is noisy. This blended picture becomes the system’s best guess at what the player is actually doing.
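The idea of trusting each source more or less from moment to moment can be illustrated with a small sketch. The summary does not say how the fusion module computes its weights, so the softmax gating below, the function names `fusion_weights` and `fuse`, and the per-frame confidence scores are all assumptions made for illustration, not the authors' implementation.

```python
import math


def fusion_weights(audio_conf, video_conf):
    """Turn two confidence scores into weights that sum to 1 (softmax).

    The confidence scores are hypothetical: for example, a hand-tracking
    quality score for video and a signal-quality score for audio.
    """
    peak = max(audio_conf, video_conf)  # subtract the max for numerical stability
    exp_audio = math.exp(audio_conf - peak)
    exp_video = math.exp(video_conf - peak)
    total = exp_audio + exp_video
    return exp_audio / total, exp_video / total


def fuse(audio_feat, video_feat, audio_conf, video_conf):
    """Blend two equal-length feature vectors for one time slice."""
    if len(audio_feat) != len(video_feat):
        raise ValueError("feature vectors must have the same length")
    w_audio, w_video = fusion_weights(audio_conf, video_conf)
    return [w_audio * a + w_video * v for a, v in zip(audio_feat, video_feat)]


# Hands clearly visible: the video features dominate the blend.
clear_hands = fuse([1.0, 0.0], [0.0, 1.0], audio_conf=0.0, video_conf=5.0)

# Fingers hidden or video noisy: the audio features dominate instead.
hidden_hands = fuse([1.0, 0.0], [0.0, 1.0], audio_conf=5.0, video_conf=0.0)
```

In a trained system the confidence scores would typically be learned from the data rather than set by hand; the sketch only shows how such scores could shift the balance between the two sources.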

Teaching Better Fingering and More Expressive Playing

To turn this understanding into help for students, the authors build a generative model that does more than label right and wrong. Instead of picking a single “correct” finger number, it learns the range of fingerings expert pianists use for a passage, taking into account comfort and musical flow. In tests on a collection of 3,847 recorded performances, the system matched expert fingering choices nearly 90 percent of the time at the level of individual notes and stayed close even on long, difficult phrases. The model also studied aspects of expression such as timing flexibility, changes in loudness, and subtle differences in tone, and its predictions of how vivid a performance sounds correlated strongly with the ratings of expert judges.
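A note-level match rate of this kind can be computed in a few lines. The sketch below is one plausible reading of the metric, not the paper's exact definition: it assumes fingers are numbered 1 to 5 and that each note may have more than one finger accepted by experts. The function name `note_match_rate` and the example data are hypothetical.

```python
def note_match_rate(predicted, expert_options):
    """Fraction of notes whose predicted finger is among the expert choices.

    predicted:      one finger number (1-5) per note
    expert_options: one set of acceptable finger numbers per note
    """
    if len(predicted) != len(expert_options):
        raise ValueError("need exactly one set of expert options per note")
    if not predicted:
        raise ValueError("cannot score an empty passage")
    hits = sum(finger in options for finger, options in zip(predicted, expert_options))
    return hits / len(predicted)


# Five notes; the last prediction (finger 2) is not an expert choice.
rate = note_match_rate(
    [1, 2, 3, 1, 2],
    [{1}, {2}, {3, 4}, {1}, {3}],
)
```

Scoring against a set of options rather than a single answer reflects the point made above: several fingerings can be equally valid for the same passage.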

From Lab Prototype to Practice Room Assistant

Because the algorithms are efficient, they can process about one second of music in under two tenths of a second, fast enough to give feedback at the end of each phrase during real practice. The authors tested various ways to present this guidance, from simple color signals about posture to more detailed diagrams showing suggested finger changes and how to shape a crescendo or relax a too-strict tempo. Teachers who reviewed the system’s suggestions judged most of them to be not only physically practical but also musically sensible, though they noted that the tool sometimes recommends advanced solutions that may be too challenging for beginners.
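The speed figure can be read as a real-time factor: processing time divided by the duration of the music processed. The short calculation below uses the upper bound quoted above (0.2 seconds per second of music) and assumes, for illustration only, that cost grows linearly with phrase length and that no processing starts until the phrase ends. The function names and the 8-second phrase are hypothetical.

```python
def real_time_factor(processing_seconds, audio_seconds):
    """Processing time per second of audio; below 1.0 is faster than real time."""
    if audio_seconds <= 0:
        raise ValueError("audio duration must be positive")
    return processing_seconds / audio_seconds


def worst_case_feedback_delay(phrase_seconds, rtf):
    """Delay after a phrase ends if all processing waits until then."""
    return phrase_seconds * rtf


rtf = real_time_factor(0.2, 1.0)             # upper bound taken from the text
delay = worst_case_feedback_delay(8.0, rtf)  # hypothetical 8-second phrase
```

Under these assumptions the delay stays well below the length of the phrase itself, which is consistent with feedback arriving at phrase boundaries rather than note by note.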

What This Means for Future Music Learning

The study shows that by jointly watching and listening, a computer can capture some of the subtle link between how a pianist moves and how the music feels. While it does not replace a human mentor and still struggles outside controlled recording conditions, the approach points toward widely accessible practice tools that offer personalized fingering advice and gentle nudges toward more expressive playing. For students without regular access to expert teachers, such systems could make practice more informed, safer for the hands, and more rewarding musically.

Citation: Li, J. Multimodal generative adversarial networks for piano fingering correction and performance expressiveness modeling through audio-visual feature fusion. Sci Rep 16, 15076 (2026). https://doi.org/10.1038/s41598-026-44473-w

Keywords: piano fingering, music education, audio-visual learning, performance expressiveness, generative adversarial networks