Clear Sky Science

Graph attention network-based multimodal approach for lung diseases classification

Why smarter lung checks matter

Lung diseases are among the leading causes of death worldwide, yet many of them can be treated if caught early. Doctors usually rely on chest X‑rays plus written notes about a patient’s symptoms to decide what is wrong. Reading all this information by hand is slow and error‑prone, especially when different diseases look similar on the scan or share the same cough and fever. This study introduces an artificial intelligence system designed to read X‑rays and clinical text together, helping clinicians spot several kinds of lung problems more accurately and consistently.

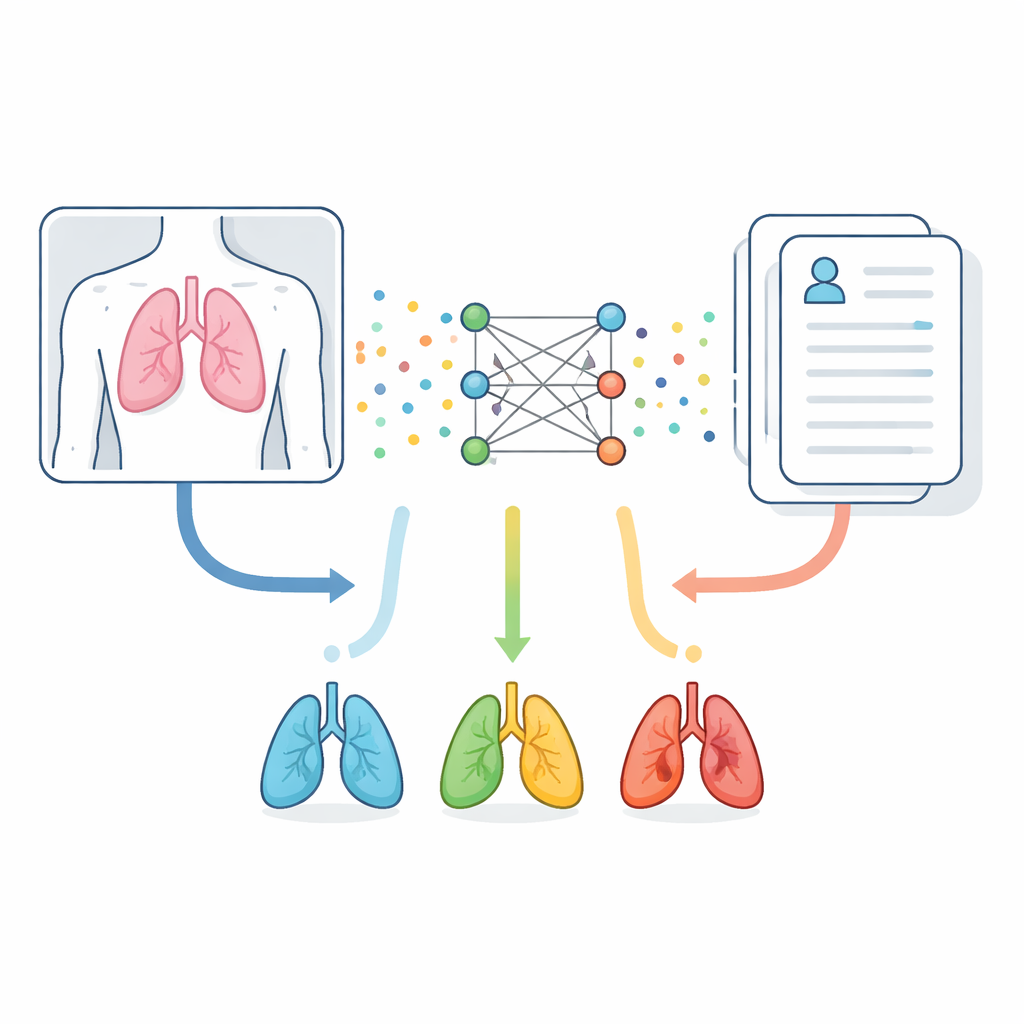

Seeing and reading at the same time

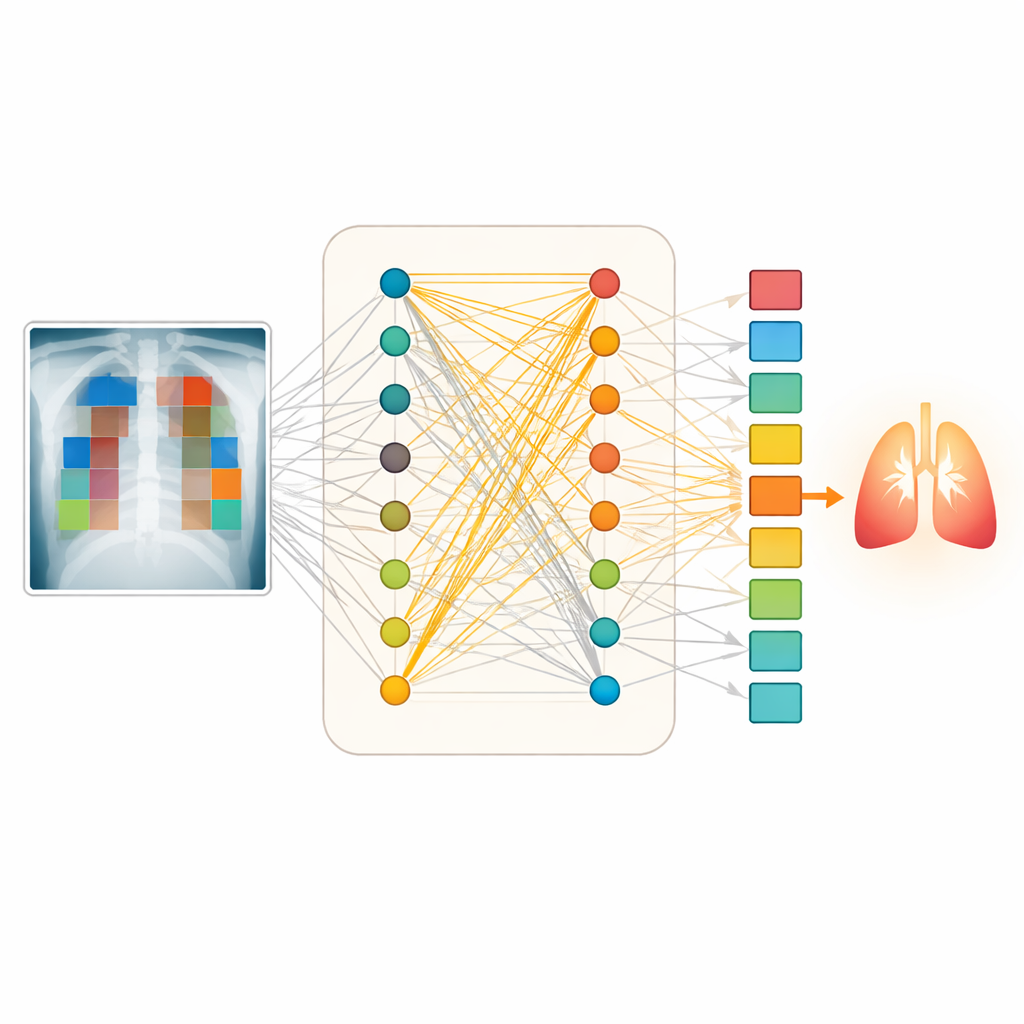

The researchers start from a simple idea: the body’s story is told both in images and in words. Chest X‑rays reveal shapes, shadows, and densities inside the chest, while clinical notes list complaints such as shortness of breath or chest pain. Instead of treating these as separate clues, the new system blends them. It uses a vision model trained specifically on medical images to turn each X‑ray into many small numeric pieces that capture visual patterns. In parallel, a language model tuned to medical writing converts each word in the clinical description into its own numeric representation. Together, these two streams of numbers form a shared picture of what is happening in a patient’s lungs.
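As a rough illustration of the two streams, the sketch below cuts a toy image into small tiles and looks up one vector per word. The summary does not name the actual vision or language models, so the random projection and the random word table here are hypothetical stand-ins for pretrained encoders, and the sizes (16x16 image, 4x4 tiles, 8-dimensional features) are arbitrary assumptions.

```python
import numpy as np

def image_to_patches(image, patch=4):
    """Cut a 2-D image into non-overlapping patch x patch tiles, one row per tile."""
    h, w = image.shape
    assert h % patch == 0 and w % patch == 0, "image must divide evenly into tiles"
    tiles = image.reshape(h // patch, patch, w // patch, patch).swapaxes(1, 2)
    return tiles.reshape(-1, patch * patch)

rng = np.random.default_rng(0)
xray = rng.random((16, 16))                      # toy stand-in for a chest X-ray
note = "shortness of breath and chest pain".split()

patches = image_to_patches(xray)                 # 16 tiles, 16 pixels each
# Hypothetical encoders: a random projection for tiles, a random table for words.
region_feats = patches @ rng.normal(size=(16, 8))
vocab = {word: i for i, word in enumerate(sorted(set(note)))}
word_table = rng.normal(size=(len(vocab), 8))
word_feats = word_table[[vocab[word] for word in note]]

print(region_feats.shape, word_feats.shape)      # one row per region, one row per word
```

Both outputs share the same feature width, which is what lets image regions and words be compared directly in later steps.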

Building a web of connections

Simply stacking image and text information often misses subtle connections, such as a small cloudy region on an X‑ray that matters only when the note mentions recent infection. To address this, the authors represent the combined data as a graph—a web of points and links. Each point corresponds either to a specific region in the X‑ray or to a specific word in the clinical text. The system then measures how closely every image region relates to every word and keeps only the strongest relationships. This produces a sparse but meaningful network that links, for example, a bright patch near the lung edge with a mention of chest pain or fluid.
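The idea of scoring every region against every word and keeping only the strongest links can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes cosine similarity as the relatedness measure and a fixed number `k` of links per region, neither of which is stated in the summary, and the function name `build_cross_modal_edges` is made up for this example.

```python
import numpy as np

def build_cross_modal_edges(region_feats, word_feats, k=2):
    """Link each image region to its k most similar words (cosine similarity)."""
    # Normalise rows so that the dot product equals cosine similarity.
    r = region_feats / np.linalg.norm(region_feats, axis=1, keepdims=True)
    w = word_feats / np.linalg.norm(word_feats, axis=1, keepdims=True)
    sim = r @ w.T                                # shape: (n_regions, n_words)
    # Column indices of the k largest similarities in each row.
    top = np.argsort(-sim, axis=1)[:, :k]
    n_regions = region_feats.shape[0]
    # Nodes 0..n_regions-1 are regions; word j becomes node n_regions + j.
    edges = [(i, n_regions + int(j)) for i in range(n_regions) for j in top[i]]
    return sim, edges

rng = np.random.default_rng(0)
regions = rng.normal(size=(4, 8))                # 4 toy image regions
words = rng.normal(size=(5, 8))                  # 5 toy words
sim, edges = build_cross_modal_edges(regions, words, k=2)
print(len(edges), "edges kept out of", sim.size, "possible region-word pairs")
```

Keeping only the top few links per region is what makes the graph sparse: most region-word pairs are dropped, so the later attention step only weighs pairs that already look related.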

Letting attention guide the diagnosis

Once this network is built, it is processed by a graph attention model. In this setup, each point in the graph “looks” at its neighbors and decides how much weight to give them, much like a doctor focusing on the most relevant combination of scan features and symptoms. Multiple attention “heads” examine different patterns in parallel, capturing varied ways that text and image can support each other. The model then pools together the most informative signals from the whole graph and feeds them into a final decision layer that predicts which of eight lung conditions—or a normal finding—is most likely for that case.
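A compact way to see how neighbor weighting, multiple heads, pooling, and the final decision layer fit together is the NumPy sketch below. It follows the standard graph attention formulation with random, untrained weights. The mean pooling, the layer sizes, and the function names are assumptions made for illustration, and the nonlinearity usually applied after each head is omitted for brevity.

```python
import numpy as np

def gat_head(h, adj, W, a, slope=0.2):
    """One attention head. h: (N, F) features, adj: (N, N) boolean with self-loops."""
    z = h @ W                                             # projected features (N, F')
    f = z.shape[1]
    # a^T [z_i || z_j] splits into a term for node i plus a term for node j.
    scores = (z @ a[:f])[:, None] + (z @ a[f:])[None, :]  # (N, N)
    scores = np.where(scores > 0, scores, slope * scores) # LeakyReLU
    scores = np.where(adj, scores, -np.inf)               # ignore non-neighbours
    scores = scores - scores.max(axis=1, keepdims=True)   # numerical stability
    alpha = np.exp(scores)
    alpha = alpha / alpha.sum(axis=1, keepdims=True)      # softmax over neighbours
    return alpha @ z, alpha

def classify_graph(h, adj, heads, W_out):
    """Concatenate all heads, mean-pool the nodes, then apply a softmax classifier."""
    node_out = np.concatenate([gat_head(h, adj, W, a)[0] for W, a in heads], axis=1)
    graph_vec = node_out.mean(axis=0)                     # assumed pooling: mean
    logits = graph_vec @ W_out
    exp = np.exp(logits - logits.max())
    return exp / exp.sum()

rng = np.random.default_rng(0)
n_nodes, in_dim, head_dim, n_heads, n_classes = 6, 8, 4, 3, 9  # 8 diseases + normal
h = rng.normal(size=(n_nodes, in_dim))
adj = np.eye(n_nodes, dtype=bool)                         # self-loops
for i, j in [(0, 3), (0, 4), (1, 4), (2, 5)]:             # toy region-word links
    adj[i, j] = adj[j, i] = True
heads = [(rng.normal(size=(in_dim, head_dim)), rng.normal(size=2 * head_dim))
         for _ in range(n_heads)]
W_out = rng.normal(size=(n_heads * head_dim, n_classes))

probs = classify_graph(h, adj, heads, W_out)
_, alpha = gat_head(h, adj, *heads[0])
print(probs.round(3))
```

Each row of `alpha` holds the weights one node assigns to its neighbors, and they sum to one. Entries for unconnected pairs are exactly zero, so a node never draws on regions or words it is not linked to.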

Putting the system to the test

The team trained and evaluated their method on a large public dataset of about 80,000 chest X‑rays, each paired with a short clinical description and labeled as one of eight lung disease categories or as normal. They split and cleaned the data so that near‑duplicate cases could not leak between training and testing. On unseen test images and texts, the approach classified lung conditions correctly in about 96 out of 100 cases, outperforming several strong competitors that either merged the two data types more crudely or used simpler graph methods. Its probability scores were also well calibrated, meaning its stated confidence closely matched how often it was right. When tested on a dataset from a different hospital with different disease frequencies, performance dropped, as expected, but the system still distinguished diseases well, which suggests it may hold up in real‑world settings.
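One common way to check whether a model's confidence matches how often it is right is the expected calibration error (ECE), which groups predictions by confidence and compares each group's average confidence with its accuracy. The summary does not say which calibration measure the authors used, so the sketch below is only an illustration of the idea, with made-up toy numbers and an assumed ten equal-width bins.

```python
import numpy as np

def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between confidence and accuracy across confidence bins."""
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=float)
    bins = np.linspace(0.0, 1.0, n_bins + 1)
    ece = 0.0
    for lo, hi in zip(bins[:-1], bins[1:]):
        in_bin = (confidences > lo) & (confidences <= hi)
        if in_bin.any():
            gap = abs(correct[in_bin].mean() - confidences[in_bin].mean())
            ece += in_bin.mean() * gap           # weight by share of samples in bin
    return ece

# Toy case 1: the model says 90% and is right 9 times out of 10.
well_calibrated = expected_calibration_error([0.9] * 10, [1] * 9 + [0])
# Toy case 2: the model says 90% but is right only 5 times out of 10.
overconfident = expected_calibration_error([0.9] * 10, [1] * 5 + [0] * 5)
print(round(well_calibrated, 3), round(overconfident, 3))
```

A value near zero means confidence and accuracy agree. The second toy case scores about 0.4 because the model claims 90% confidence while being right only half the time.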

What this means for patients and doctors

In everyday terms, this work shows that an AI system can learn to “read” both the picture and the chart together, much like an experienced radiologist who considers the scan in light of the patient’s story. By focusing on the most meaningful links between image regions and specific symptoms, the model can reduce missed or wrong diagnoses and flag uncertain cases for closer review. While further testing in real clinics is needed, especially with richer and more varied reports, the study points toward decision‑support tools that could make lung disease diagnosis faster, more consistent, and more accessible in hospitals that lack expert readers.

Citation: Rahman, M., YongZhong, C. & Bin, L. Graph attention network-based multimodal approach for lung diseases classification. Sci Rep 16, 10914 (2026). https://doi.org/10.1038/s41598-026-44282-1

Keywords: lung disease diagnosis, chest X-ray, medical AI, multimodal learning, graph neural networks