Clear Sky Science

BigEye: a clinically interpretable deep learning framework for diabetic retinopathy detection and stage prediction

Why a simple eye photo can save sight

For many people with diabetes, vision loss creeps in silently. A single eye photograph taken at the doctor’s office can reveal early damage long before symptoms appear, but specialists are needed to interpret these images. This study introduces “BigEye,” a computer system designed not only to spot diabetic eye disease from these photos, but also to explain its decisions in terms doctors already use, potentially making screening faster, more consistent, and easier to trust.

How diabetes quietly harms the eye

Diabetic retinopathy is a complication of diabetes that damages the light-sensing tissue at the back of the eye, the retina. High blood sugar weakens the tiny blood vessels that nourish this tissue, causing them to leak, clog, or grow in abnormal ways. Over time, this damage shows up as distinct signs—tiny bulges in vessels, small spots of bleeding, yellowish deposits of fat and proteins, fluffy white patches of lost nerve tissue, and fragile new vessels that can pull the retina loose. Doctors examine these changes in special photographs called fundus images and use an international five‑stage scale to classify how far the disease has progressed, from no disease to advanced sight‑threatening stages.

Why doctors want machines they can question

Computer programs based on deep learning already match eye doctors at spotting diabetic eye disease in photographs, and some are approved for clinical use. But many of these systems behave like “black boxes”: they can be highly accurate while offering little insight into how they arrived at a particular decision. For physicians who must explain diagnoses, weigh treatment risks, and guard against rare but serious errors, this lack of transparency is a major barrier. What they really want are tools that think in similar terms to human experts—tools that can say, in effect, “I called this case moderate disease because I see many tiny bulges and some fatty deposits in these parts of the retina.”

What BigEye does differently

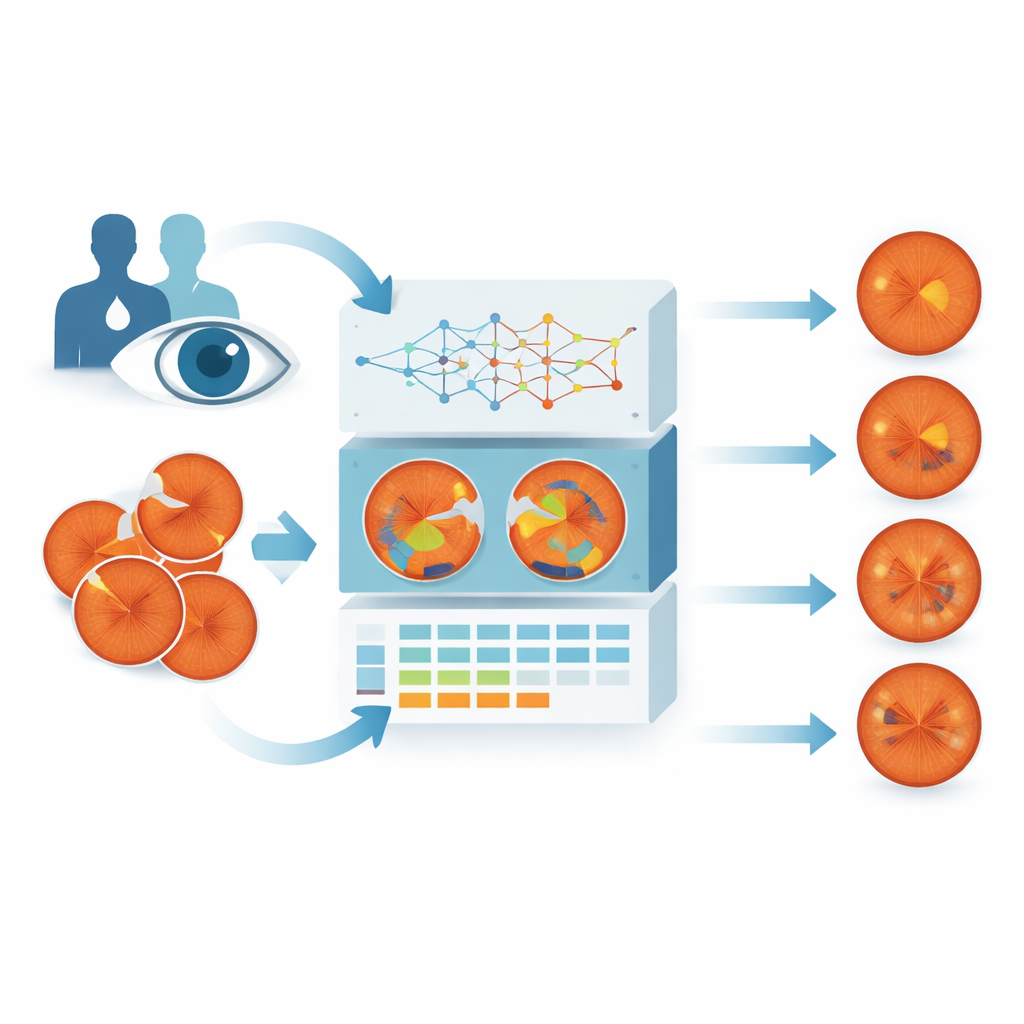

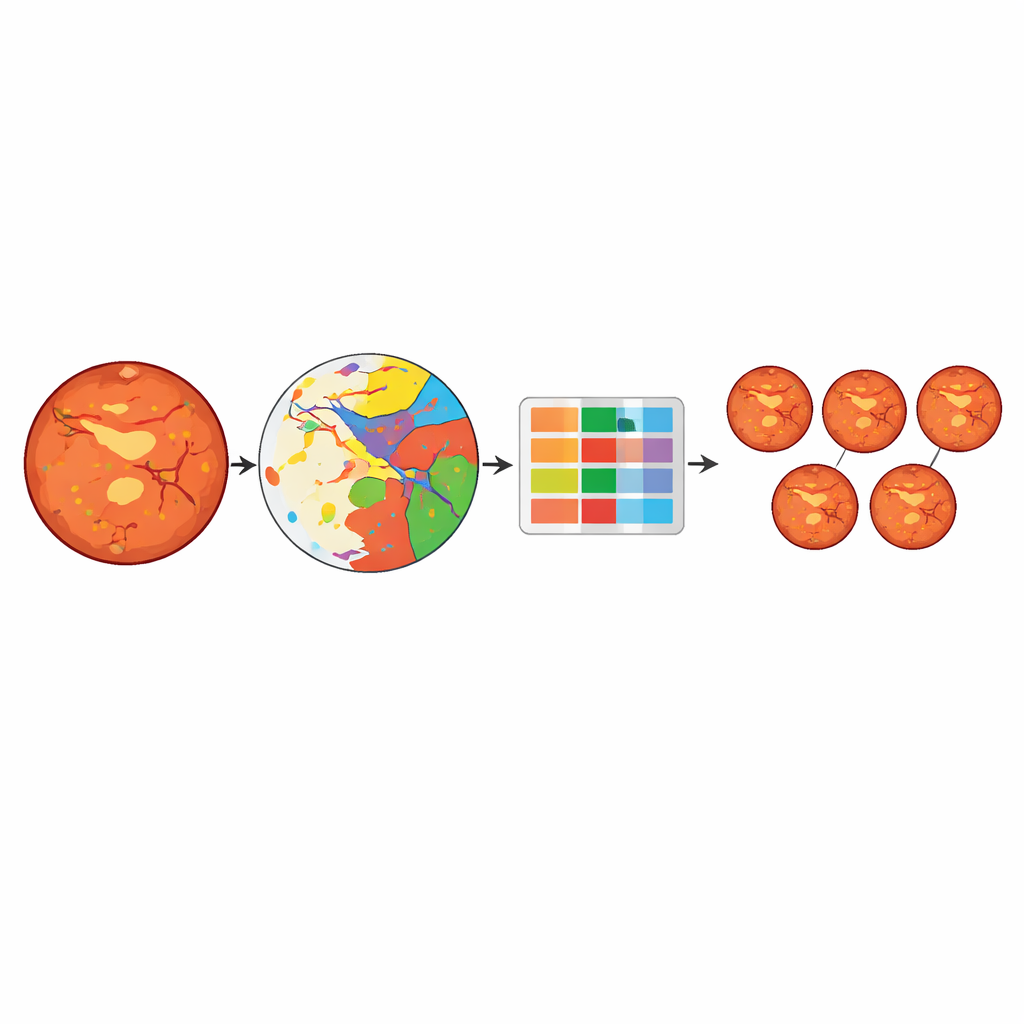

BigEye was built to mimic how clinicians think about retinal damage. The researchers combined eye photographs from a local hospital and a public French screening program, collecting over 600 images that covered all five stages of disease. Specialists painstakingly traced six key types of damage on these images: tiny bulges in vessels, bleeding areas, fatty deposits, fluffy white nerve patches, abnormal new vessels, and scars from laser treatment. A deep learning model was then trained to reproduce these outlines automatically—essentially learning to color in each kind of damage on its own when it sees a new photograph.
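To make the "coloring in" idea concrete, here is a minimal Python sketch built on stated assumptions. It supposes the segmentation model outputs a per-pixel probability map for each of the six lesion types, and that each map is cut at a threshold to give a yes/no mask. The mask is then compared with a specialist's tracing using the Dice coefficient, a common overlap score for segmentation. The lesion identifiers, the 0.5 cutoff, the function names, and the use of Dice are illustrative assumptions. The summary does not say how BigEye's outputs are thresholded or scored.

```python
# Hypothetical identifiers for the six lesion types the specialists traced
# (clinical names for the everyday terms used in this article).
LESION_TYPES = (
    "microaneurysm",       # tiny bulges in vessels
    "hemorrhage",          # bleeding areas
    "hard_exudate",        # fatty deposits
    "cotton_wool_spot",    # fluffy white nerve patches
    "neovascularization",  # abnormal new vessels
    "laser_scar",          # scars from laser treatment
)


def threshold(prob_map, cutoff=0.5):
    """Turn a per-pixel probability map (a list of rows) into a 0/1 mask."""
    return [[1 if p >= cutoff else 0 for p in row] for row in prob_map]


def dice(pred_mask, true_mask):
    """Dice overlap 2 * |A & B| / (|A| + |B|); 1.0 means identical outlines."""
    overlap = sum(
        p & t
        for p_row, t_row in zip(pred_mask, true_mask)
        for p, t in zip(p_row, t_row)
    )
    total = sum(map(sum, pred_mask)) + sum(map(sum, true_mask))
    # Two empty masks agree perfectly (no lesion predicted, none traced).
    return 1.0 if total == 0 else 2 * overlap / total


# Toy 2x2 example for a single lesion type.
prob = [[0.9, 0.2], [0.7, 0.1]]
expert = [[1, 0], [0, 0]]
mask = threshold(prob)  # [[1, 0], [1, 0]]
print(dice(mask, expert))  # 2 * 1 / (2 + 1), about 0.667
```

Tiny lesions cover only a handful of pixels, so a single missed or extra pixel moves an overlap score like this sharply. That is one plausible reason the smallest lesions are the hardest to outline well.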

Turning spots and scars into a stage

Once BigEye can highlight these damaged areas, the system converts them into simple measurements: how many of each type of lesion are present, and how much area they cover relative to the retina. These measurements form a table of numbers that is fed into several different machine‑learning models, each trained to predict the official disease stage. The best‑performing model, a tree‑based gradient boosting method called LightGBM (Light Gradient Boosting Machine), correctly classified stages about 83% of the time. It performed especially well at distinguishing eyes without disease from those with more serious damage, though it sometimes confused neighboring stages, such as moderate versus severe disease, when their patterns looked very similar.
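The step from highlighted areas to a table of numbers can be sketched in a few lines of Python. This is a minimal illustration under assumptions, not BigEye's code. Each lesion mask and a mask of the visible retina are 0/1 grids. A "lesion" is taken to be one group of touching pixels, counting diagonal neighbors (8-connectivity). The function and feature names are hypothetical. Each returned dictionary is one row of the kind of table a classifier such as LightGBM would receive; the classifier itself is not shown.

```python
from collections import deque


def count_lesions(mask):
    """Count separate lesions: groups of touching 1-pixels (8-connectivity)."""
    rows = len(mask)
    cols = len(mask[0]) if rows else 0
    seen = [[False] * cols for _ in range(rows)]
    blobs = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] and not seen[r][c]:
                blobs += 1  # found a new lesion; flood-fill all of its pixels
                seen[r][c] = True
                queue = deque([(r, c)])
                while queue:
                    y, x = queue.popleft()
                    for dy in (-1, 0, 1):
                        for dx in (-1, 0, 1):
                            ny, nx = y + dy, x + dx
                            if (0 <= ny < rows and 0 <= nx < cols
                                    and mask[ny][nx] and not seen[ny][nx]):
                                seen[ny][nx] = True
                                queue.append((ny, nx))
    return blobs


def lesion_features(masks, retina_mask):
    """One table row: count and retina-relative area for each lesion type."""
    retina_area = sum(map(sum, retina_mask))
    row = {}
    for name, mask in masks.items():
        # Keep only lesion pixels that lie inside the visible retina.
        inside = [[m & r for m, r in zip(m_row, r_row)]
                  for m_row, r_row in zip(mask, retina_mask)]
        row[f"{name}_count"] = count_lesions(inside)
        row[f"{name}_area_frac"] = (
            sum(map(sum, inside)) / retina_area if retina_area else 0.0
        )
    return row


retina = [[1] * 4 for _ in range(4)]  # toy 4x4 image, all retina (16 pixels)
masks = {
    "microaneurysm": [[1, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 1], [0, 0, 0, 0]],
    "hemorrhage":    [[0, 0, 0, 0], [0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0]],
}
print(lesion_features(masks, retina))
# {'microaneurysm_count': 2, 'microaneurysm_area_frac': 0.125,
#  'hemorrhage_count': 1, 'hemorrhage_area_frac': 0.25}
```

Dividing by the retina's area rather than the whole photo keeps the numbers comparable across images whose circular field of view fills different amounts of the frame.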

Peeking inside the model’s reasoning

To test whether BigEye reasons like a clinician, the team used a technique called SHAP (SHapley Additive exPlanations) to see which lesion measurements most strongly influenced each stage prediction. SHAP gives every measurement a score for how far it pushed a particular prediction toward or away from a given stage. The patterns closely mirrored the medical rulebook. Images labeled as disease‑free were associated with very low counts of all lesions. Mild disease was driven mainly by tiny bulges in vessels, just as human graders expect. Moderate disease showed a mix of these bulges and fatty deposits, while severe disease was linked to larger numbers of bleeding spots and fluffy white patches. The most advanced stage depended heavily on signs of new vessel growth and the scars left by laser treatment—again matching how specialists judge these eyes.
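SHAP builds on Shapley values from game theory: a feature's credit is its extra contribution to the prediction, averaged over every order in which the features could be added. The brute-force Python sketch below computes that definition exactly for a toy model. The "moderate-stage score" function, its weights, and the use of a single all-zero baseline to stand in for "missing" features are hypothetical simplifications. For tree models like LightGBM, the SHAP library uses faster tree-specific algorithms and background data instead. Enumerating every subset grows exponentially with the number of features, so this approach only suits a handful of them.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values for model(x); features outside a coalition use baseline values."""
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):  # coalition sizes 0 .. n-1 drawn from the other features
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i when added to coalition s.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical score for the "moderate" stage from two features:
# [microaneurysm count, hard exudate count]. The product term is an interaction.
def moderate_score(z):
    return 0.3 * z[0] + 0.1 * z[0] * z[1]


x = [4, 2]
baseline = [0, 0]
phi = shapley_values(moderate_score, x, baseline)
print(phi)  # approximately [1.6, 0.4]
print(sum(phi), moderate_score(x) - moderate_score(baseline))  # both about 2.0
```

The two scores add up to the gap between the prediction and the baseline (the "additive" in SHAP). The 1.2 from the microaneurysm term goes entirely to that feature, while the 0.8 from the interaction is split evenly between the two.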

What this means for people with diabetes

BigEye shows that a computer can grade diabetic eye disease using the same visible warning signs that doctors trust, rather than relying on mysterious internal patterns. Although the study used a modest‑sized dataset and struggled somewhat with the smallest and most subtle lesions, the approach demonstrates a promising direction: systems that can rapidly screen large numbers of patients while clearly showing which types of damage drove each decision. For people living with diabetes, that could mean earlier detection, more consistent care, and better conversations with their doctors about what is happening inside their eyes and how to protect their sight.

Citation: Gill, H.M., Salem, D.H., Omoru, O.B. et al. BigEye: a clinically interpretable deep learning framework for diabetic retinopathy detection and stage prediction. Sci Rep 16, 12574 (2026). https://doi.org/10.1038/s41598-026-43573-x

Keywords: diabetic retinopathy, retinal imaging, explainable AI, medical deep learning, ophthalmology