Clear Sky Science

A framework of large language model commander agent for spatial reasoning in combat simulation

Smarter Maps for High-Stakes Decisions

Modern artificial intelligence can write essays and pass exams, but it still struggles with decisions that depend on geography, such as where to place troops on a battlefield or how to move safely through complex terrain. This paper introduces “Geo-Commander,” an AI system that teaches large language models not just to read and reason, but to “think with maps,” turning them into assistants that can suggest tactically sound positions in detailed combat simulations.

Why Words Alone Are Not Enough

Large language models excel at reasoning with text, yet real-world decisions often hinge on where things are, what the ground looks like, and how conditions change over time. In military simulations, a poor choice of position can mean being exposed to enemy fire or missing a crucial opportunity. Previous systems either relied on rigid hand-crafted rules or focused on long-term planning without fine-grained control over specific locations. Visual language models can look at map images, but they tend to treat them as static pictures, missing the deeper spatial relationships and changing lines of sight that matter in combat. This gap between verbal reasoning and spatial understanding limits how useful today’s AI can be for geography-heavy tasks.

Turning Terrain into a Structured Playground

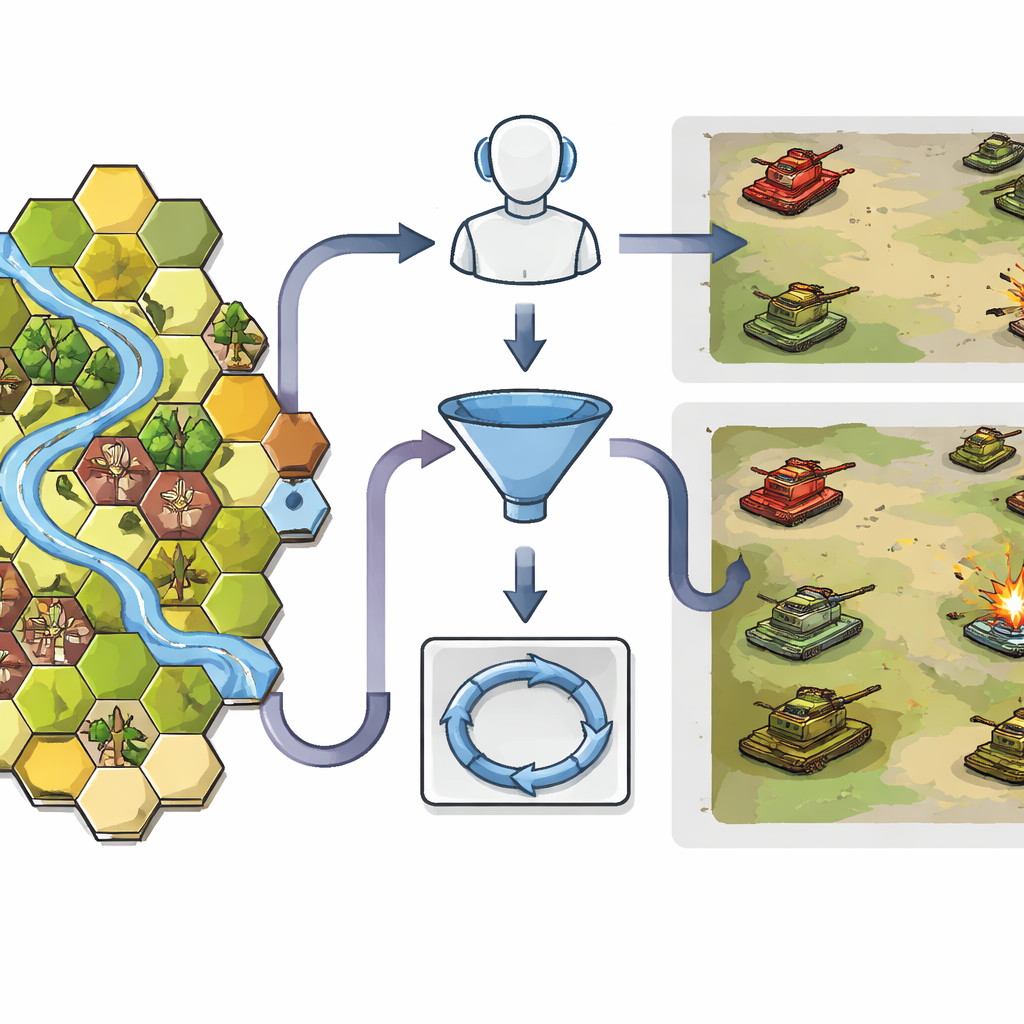

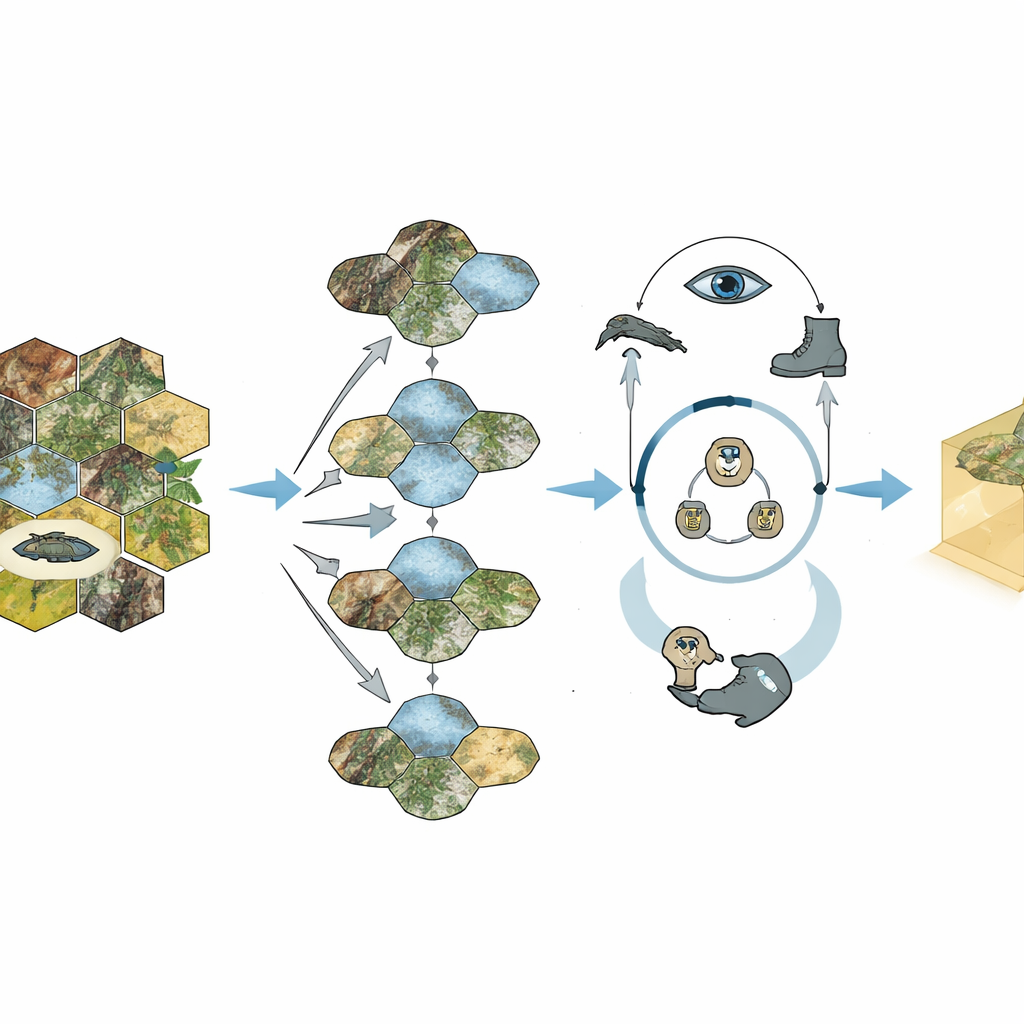

Geo-Commander tackles this problem by giving the AI a highly structured view of the battlefield. The terrain is converted into a hexagonal grid, a familiar format from war games, where each cell carries simple but rich information: its position, its height, and its terrain type, such as open fields, forest, buildings, or rivers. This structure helps the AI work out who can see whom and who can move where. A first module, called Geo-Choice, acts like a smart filter. Instead of forcing the model to consider thousands of possible locations, it uses basic tactical knowledge to narrow the map down to at most ten promising candidate spots that fit the current task, whether that is hiding from the enemy, sniping from long range, or charging in for a close assault.
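To make the idea concrete, here is a minimal Python sketch of a hexagonal grid cell and a task-aware filter that keeps at most ten candidates. It is an illustration only, not the authors' code: the `HexCell` fields, the axial coordinate scheme, the `COVER` values, the scoring formulas, and the choice to treat rivers as impassable are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HexCell:
    q: int          # axial column (assumed coordinate scheme)
    r: int          # axial row
    elevation: int  # height, in metres (assumed unit)
    terrain: str    # "open", "forest", "building", or "river"


def hex_distance(a: HexCell, b: HexCell) -> int:
    """Number of hex steps between two cells in axial coordinates."""
    dq = a.q - b.q
    dr = a.r - b.r
    return (abs(dq) + abs(dr) + abs(dq + dr)) // 2


# Hypothetical cover values per terrain type; higher means better concealment.
COVER = {"open": 0, "forest": 2, "building": 3, "river": 0}


def score(cell: HexCell, enemy: HexCell, task: str) -> float:
    """Toy tactical score; the real system's rules are not given in this article."""
    dist = hex_distance(cell, enemy)
    cover = COVER[cell.terrain]
    if task == "hide":
        return cover * 2 + dist                 # favour cover and distance
    if task == "snipe":
        return cell.elevation / 10 + cover - abs(dist - 8)  # favour height at range
    if task == "assault":
        return cover - dist                     # favour closing in, with some cover
    raise ValueError(f"unknown task: {task}")


def geo_choice(cells, enemy, task, limit=10):
    """Return at most `limit` candidate cells, best first."""
    passable = [c for c in cells if c.terrain != "river"]  # assumption: rivers block
    ranked = sorted(passable, key=lambda c: score(c, enemy, task), reverse=True)
    return ranked[:limit]
```

The point of the filter is to shrink the search space before any language model is involved, so the later reasoning step only has to compare a handful of cells.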

Letting the AI Reason Through Each Move

Once the map has been narrowed down, a second component, the Spatialized ReAct Chain, allows the AI to think through its options in an explicit step-by-step loop. The language model examines each candidate point, calls specialized tools to measure how far it is from enemies, how long it would take friendly units to reach it, and how wide its field of view would be. After each round of calculations, it revises its judgment, much like a human commander who checks a map, asks for range estimates, and then reconsiders. Crucially, this process produces an interpretable trail of reasoning: the system can explain, in plain language, why a chosen grid cell offers better cover, visibility, or maneuvering potential than the alternatives.
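The loop described above can be sketched in a few lines of Python. This is a simplified stand-in under stated assumptions, not the published system: `stub_policy` replaces the language model with a fixed rule that requests each tool once, the three tool functions and the one-turn-per-hex travel model are hypothetical, and the final score is a hand-written formula where the real system would rely on the model's own judgment.

```python
Cell = tuple[int, int]  # axial (q, r) hex coordinates


def hex_distance(a: Cell, b: Cell) -> int:
    dq, dr = a[0] - b[0], a[1] - b[1]
    return (abs(dq) + abs(dr) + abs(dq + dr)) // 2


def make_tools(enemy: Cell, friendly: Cell, view_width: dict):
    """Hypothetical measurement tools the reasoning loop can call."""
    return {
        "enemy_distance": lambda c: hex_distance(c, enemy),
        "travel_time": lambda c: hex_distance(c, friendly),  # assumed: one turn per hex
        "field_of_view": lambda c: view_width.get(c, 0),
    }


def stub_policy(observations: dict, tool_names: list):
    """Stand-in for the LLM: ask for the next unused tool, or None to stop."""
    for name in tool_names:
        if name not in observations:
            return name
    return None


def react_select(candidates, tools, policy=stub_policy):
    """Reason -> act (call a tool) -> observe, for each candidate cell."""
    trace = []  # human-readable record of every step
    best, best_score = None, float("-inf")
    for cell in candidates:
        observations = {}
        while (action := policy(observations, list(tools))) is not None:
            observations[action] = tools[action](cell)
            trace.append(f"{cell}: {action} -> {observations[action]}")
        # Assumed scoring: wide view and stand-off distance, minus travel cost.
        total = (observations.get("field_of_view", 0)
                 + observations.get("enemy_distance", 0)
                 - observations.get("travel_time", 0))
        trace.append(f"{cell}: score {total}")
        if total > best_score:
            best, best_score = cell, total
    return best, trace
```

The `trace` list plays the role of the interpretable reasoning trail: every tool call and its result is recorded, so the final choice can be explained step by step.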

Putting the System to the Test

The researchers evaluated Geo-Commander inside a professional-grade tank combat simulation. They designed both “static” tasks, where the AI simply had to pick the best hiding, sniping, or assault point on a fixed map, and “dynamic” battles, where red and blue tank detachments maneuvered and fought over varied terrain. Human military experts first created a detailed rating table of which grid cells were tactically superior, providing a tough benchmark. The full Geo-Commander system, combining the Geo-Choice filter and the reasoning loop, consistently chose better positions than standard visual language models, simplified versions of itself, and an existing rule-based commander. In full simulated battles it even outperformed a state-of-the-art reinforcement learning agent that had been trained through a million self-play games.
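As a rough illustration of how picks might be checked against an expert rating table, the sketch below averages the expert rating of the cell chosen for each task. The table layout, the rating scale, and the rule that unrated cells count as zero are assumptions for this example; the article does not describe the exact metric the researchers used.

```python
def mean_expert_rating(choices: dict, rating_table: dict) -> float:
    """Average expert rating of the chosen cells, one choice per task.

    `choices` maps a task name to the chosen (q, r) cell;
    `rating_table` maps a (q, r) cell to its expert rating.
    Cells missing from the table count as 0 (an assumption).
    """
    ratings = [rating_table.get(cell, 0.0) for cell in choices.values()]
    return sum(ratings) / len(ratings)


# Hypothetical ratings and picks, for demonstration only.
rating_table = {(1, 2): 5.0, (3, 4): 2.0}
choices = {"hide": (1, 2), "snipe": (3, 4), "assault": (9, 9)}
average = mean_expert_rating(choices, rating_table)
```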

From War Games to Wider Worlds

Geo-Commander shows that language models can become competent “map thinkers” when given the right spatial structure and tools, not just more text. By blending grid-based terrain encoding with an explicit cycle of reasoning, action, and observation, the system turns opaque AI judgments into traceable, tactically sensible recommendations. While the study focuses on tank combat simulations and remains safely confined to virtual scenarios, the same ideas could apply to disaster response planning, search-and-rescue routing, or any task where decisions depend on where to go next. In simple terms, the work demonstrates a path for AI to move from talking about the world to navigating it, with humans still firmly in command.

Citation: Chen, Yb., Ping, Y., Zhou, S. et al. A framework of large language model commander agent for spatial reasoning in combat simulation. Sci Rep 16, 13431 (2026). https://doi.org/10.1038/s41598-026-43365-3

Keywords: spatial reasoning, combat simulation, large language models, decision support, geospatial AI