Clear Sky Science

UltraReporter for transforming spoken diagnostic cues into structured ultrasound reports with large language models

Turning Talk into Time Saved

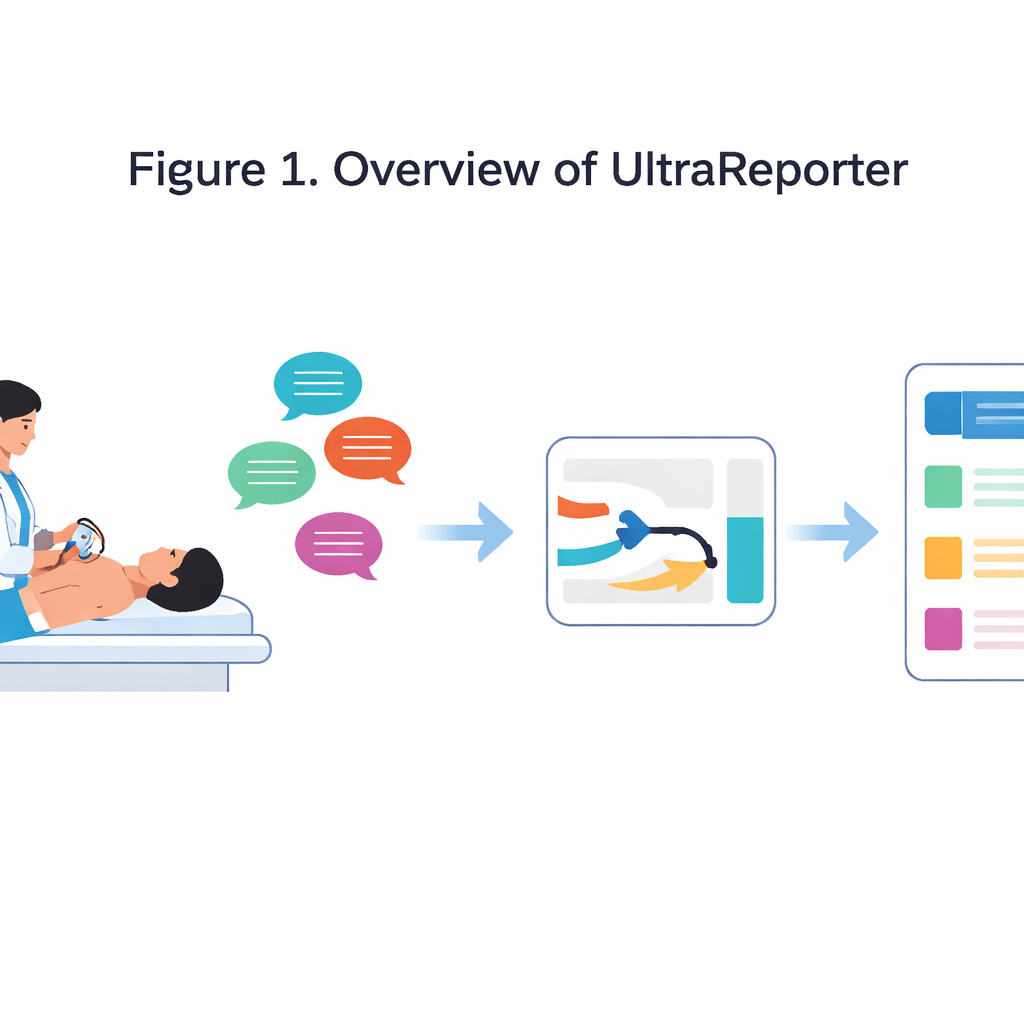

When doctors perform an ultrasound, they must juggle two demanding tasks: carefully scanning the patient and hurriedly typing or clicking their way through a detailed report. This paperwork can take longer than the scan itself and is vulnerable to fatigue and small but important mistakes. The study introduces UltraReporter, an artificial intelligence system that listens to the short phrases doctors already say during an exam and automatically turns them into a polished, structured report in about one second. For patients, this promises faster visits and more consistent documentation; for clinicians, it offers a way to reclaim time and reduce burnout.

A New Helper in the Ultrasound Room

In many hospitals, ultrasound is the workhorse imaging tool, used for the liver, gallbladder, kidneys, thyroid, and other organs. Its speed and safety have driven exam volumes so high that sonographers and radiologists face intense reporting pressure. Traditionally, attempts to automate reporting have either transcribed long dictated paragraphs or tried to interpret images directly. Both approaches struggle in real clinics: full dictation still takes minutes and needs editing, while image-only systems often misread noisy ultrasound pictures. UltraReporter instead fits into what doctors already do. As they scan, they naturally call out short cues like “liver cyst, one point two by one point one.” UltraReporter listens, converts those spoken cues to text, and then expands them into a full, template-style report that can be checked and signed.

Building Data from Thin Air

Designing such a system faces a key problem: there are almost no existing pairs of real spoken cues matched to final ultrasound reports. The researchers solved this with a multi-agent artificial intelligence pipeline that effectively manufactures realistic training data from existing text reports. One AI “cue-simulator” learns to shrink full reports into brief, doctor-like bullet phrases. A second AI “report-generator” learns to expand such cues back into well-structured narratives. A third “quality-evaluator” grades each synthetic pair on accuracy, completeness, clarity, and other factors, throwing out any that fall short. This process produced more than 21,000 high-quality cue–report pairs spanning hundreds of body sites and thousands of diseases, giving the system a rich foundation without requiring extra manual annotation.
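The filtering step of this pipeline can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the `CueReportPair` type, the 1-to-5 score scale, the `min_score` threshold, and the rule-based `toy_evaluator` (a stand-in for the AI quality-evaluator) are all assumptions made for the example.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List


@dataclass
class CueReportPair:
    cue: str      # short, doctor-like phrase (synthetic)
    report: str   # expanded structured report (synthetic)


# Grading dimensions named in the article; the scale and cutoff are hypothetical.
CRITERIA = ("accuracy", "completeness", "clarity")


def filter_pairs(
    pairs: Iterable[CueReportPair],
    evaluate: Callable[[CueReportPair], Dict[str, float]],
    min_score: float = 4.0,
) -> List[CueReportPair]:
    """Keep only pairs whose score meets the cutoff on every criterion."""
    kept = []
    for pair in pairs:
        scores = evaluate(pair)
        # A missing criterion counts as a failure.
        if all(scores.get(name, 0.0) >= min_score for name in CRITERIA):
            kept.append(pair)
    return kept


def toy_evaluator(pair: CueReportPair) -> Dict[str, float]:
    """Crude rule-based stand-in for an LLM grader (illustration only)."""
    cue_numbers = re.findall(r"\d+(?:\.\d+)?", pair.cue)
    # Substring matching is deliberately simple; a real check would parse measurements.
    numbers_kept = all(n in pair.report for n in cue_numbers)
    return {
        "accuracy": 5.0 if numbers_kept else 1.0,
        "completeness": 5.0 if len(pair.report) > len(pair.cue) else 1.0,
        "clarity": 5.0 if pair.report.strip().endswith(".") else 1.0,
    }
```

Under these assumptions, a pair whose report changes a measurement from the cue (for instance 1.2 becoming 2.1) fails the accuracy check and is discarded, which mirrors the "throw out any that fall short" behavior described above.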

Teaching the System Hospital Habits

Beyond general medical knowledge, real-world reports must follow local habits: familiar headings, favorite phrases, and specific ways of describing common findings. To capture this, the team added a second training stage called template-augmented fine-tuning. Here, UltraReporter learns not only from cues and reports, but also from a library of nearly 200 real institutional templates matched to the organ and disease in question. This nudges the model to use standard wording and layout, improving consistency across patients and providers. A final training step, called defect-oriented preference optimization, teaches the system to spot and correct its own subtle mistakes. When the model confuses a measurement or omits a key detail, another AI flags the defect and creates training examples that explicitly prefer the corrected version, sharpening the model’s clinical reasoning.
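The two training ideas in this section can be pictured as two data shapes: a prompt that carries a matched template, and a preference record that ranks a corrected report above a flawed draft. The sketch below is illustrative only. The `TEMPLATES` dictionary, its example wording, the prompt layout, and the `PreferencePair` fields are assumptions, not details taken from the study.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

# Hypothetical template library keyed by (organ, finding); the study uses
# nearly 200 real institutional templates, none of which are reproduced here.
TEMPLATES: Dict[Tuple[str, str], str] = {
    ("liver", "cyst"): "Liver: an anechoic lesion measuring <size> is seen in the <location>.",
}


def build_prompt(cue: str, organ: str, finding: str) -> str:
    """Attach the matching template, if one exists, to the recognized cue."""
    parts = [f"Spoken cue: {cue}"]
    template = TEMPLATES.get((organ, finding))
    if template is not None:
        parts.append(f"Institutional template: {template}")
    parts.append("Write the structured report.")
    return "\n".join(parts)


@dataclass(frozen=True)
class PreferencePair:
    prompt: str
    chosen: str    # corrected report, preferred during training
    rejected: str  # original draft containing the flagged defect


def make_preference_pair(prompt: str, draft: str, corrected: str) -> Optional[PreferencePair]:
    """Build a training example only when the correction actually changed the draft."""
    if draft.strip() == corrected.strip():
        return None  # no defect was fixed, so there is nothing to prefer
    return PreferencePair(prompt=prompt, chosen=corrected, rejected=draft)
```

Records of this chosen/rejected form are the usual input to preference-optimization methods; which specific objective the authors optimize is not stated in this summary.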

From Speech to Report in One Second

To work in a busy exam room, the system must handle messy real speech. The authors pair a noise-tolerant speech recognizer with a language model tuned on medical Chinese so that phrases like “portal vein” are not misheard as everyday words. The recognized cue is then passed to the trained UltraReporter model, which produces a structured report covering findings and impressions almost instantly. Safety is built in: the system calculates how confident it is about each piece of text, especially numbers and diagnoses. Any low-confidence segment is highlighted in the doctor’s interface, drawing attention to spots that deserve a second look. In reader studies, independent specialists often judged UltraReporter’s reports equivalent to or better than those written by physicians, and in routine use most generated reports were rated on par with originals.
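The highlighting of low-confidence segments can be sketched as a simple thresholding pass. This is a hedged illustration: it assumes the model exposes a probability per generated token, and the two thresholds, the stricter cutoff for numeric tokens, and the `[[...]]` marker are choices made for the example rather than details reported by the authors.

```python
import re
from typing import List, Tuple

# Any token containing a digit is treated as a measurement (an assumption).
NUMERIC = re.compile(r"\d")


def flag_low_confidence(
    tokens: List[Tuple[str, float]],
    threshold: float = 0.80,
    numeric_threshold: float = 0.95,
) -> List[int]:
    """Return indices of tokens whose probability falls below its cutoff.

    Numeric tokens use a stricter cutoff, since a wrong measurement is
    costlier than an awkward word.
    """
    flagged = []
    for index, (text, prob) in enumerate(tokens):
        limit = numeric_threshold if NUMERIC.search(text) else threshold
        if prob < limit:
            flagged.append(index)
    return flagged


def render(tokens: List[Tuple[str, float]], flagged: List[int]) -> str:
    """Wrap flagged tokens in [[...]] so a reviewer's eye lands on them."""
    marked = set(flagged)
    return " ".join(
        f"[[{text}]]" if index in marked else text
        for index, (text, _) in enumerate(tokens)
    )
```

With this design, a measurement generated at 0.90 probability would be flagged even though an ordinary word at the same probability would pass, reflecting the article's emphasis on numbers and diagnoses.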

What This Means for Patients and Clinicians

UltraReporter shows that a relatively compact language model, far smaller than many headline-grabbing systems, can match or even exceed expert performance on a focused, practical task when fed the right data and trained with care. By turning the brief phrases doctors already say into complete, standardized reports, it has the potential to cut documentation time to seconds without taking control away from clinicians. For patients, this could mean more face-to-face time and fewer clerical delays. For health systems, it offers a blueprint: use multi-step AI frameworks, grounded in local templates and human oversight, to transform everyday clinical routines in safe and scalable ways.

Citation: Hao, P., Zhang, J., Zhang, S. et al. UltraReporter for transforming spoken diagnostic cues into structured ultrasound reports with large language models. Sci Rep 16, 13662 (2026). https://doi.org/10.1038/s41598-026-41439-w

Keywords: ultrasound reporting, medical AI, speech-to-report, clinical documentation, large language models