Clear Sky Science

Pre-trained multi-scale RWKV-GCN for multivariate time series forecasting

Why smarter forecasts matter

From predicting rush-hour traffic to balancing the power grid, many modern systems rely on looking ahead in time. These systems collect streams of measurements from many sources at once—temperatures, road sensors, electricity meters, currency rates, and more. Making accurate forecasts from this tangled web of signals is difficult: each series changes over time, and the series also influence one another. This paper introduces a new forecasting method, called PMSRWKV-GCN, that is designed to untangle these relationships so computers can make more accurate and stable predictions about the future.

Many signals, many hidden patterns

In real applications, forecasters rarely deal with a single curve. A city’s traffic network, an energy system, or the world’s currency markets all produce many time series at once. To predict what happens next, a model must understand how each individual signal evolves over time and how different signals affect one another. Classic statistical tools work well for one series at a time but struggle when dozens or hundreds of series are intertwined. Newer deep learning models, such as recurrent networks and Transformers, have improved the situation but often require heavy computation and can be confused by long histories and noisy relationships among signals.

Finding rhythms before learning connections

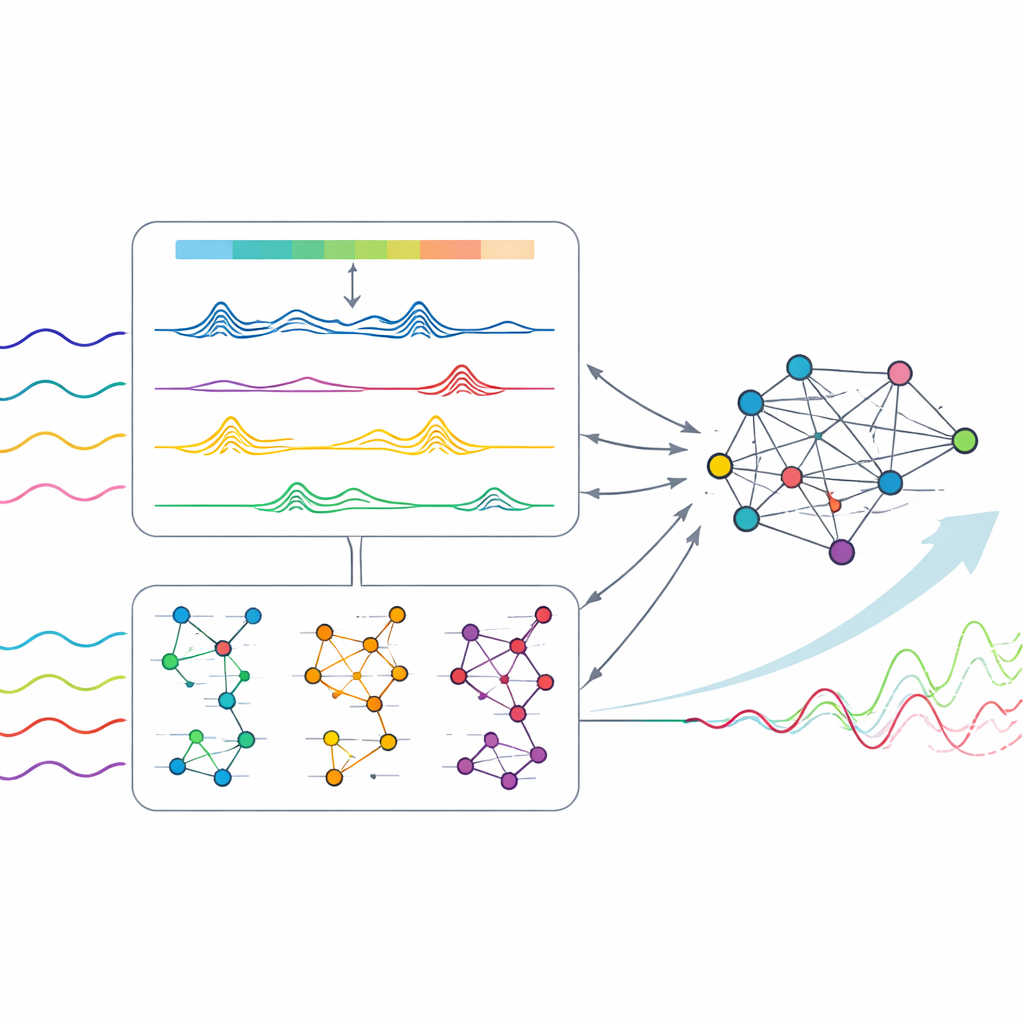

The authors argue that a major difficulty lies in the order in which a model learns: if it tries to learn relationships between series too early, weak or spurious links can drown out the genuine rhythms hidden in each signal. Their solution is to split the task into two stages. First, the method concentrates on uncovering clean temporal patterns within each individual series. It does this by applying the Fast Fourier Transform, a fast mathematical tool that reveals the dominant cycles in the data, such as daily, weekly, or seasonal rhythms. Guided by these cycles, a redesigned "time-mixing" component looks at each series over several time scales at once, from short ripples to long waves. In this first, pre-training stage, each channel is handled independently so that cross-talk between series cannot inject noise.
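To make the cycle-finding step concrete, here is a minimal sketch of how the Fast Fourier Transform can expose the dominant rhythms in a single series. It is an illustration only, not the authors' code: the function name `dominant_periods`, the parameter `k`, and the synthetic hourly signal are all assumptions introduced for this example.

```python
import numpy as np

def dominant_periods(series, k=3):
    """Return the k strongest periods (in time steps) and their amplitudes.

    Hypothetical helper for illustration; not taken from the paper.
    """
    x = np.asarray(series, dtype=float)
    x = x - x.mean()                       # remove the constant offset
    amplitudes = np.abs(np.fft.rfft(x))    # strength of each frequency bin
    # Skip bin 0 (the zero-frequency term) and pick the k strongest bins.
    top = np.argsort(amplitudes[1:])[::-1][:k] + 1
    periods = len(x) / top                 # bin f corresponds to period n / f
    return periods, amplitudes[top]

# Synthetic hourly signal (an assumption): a strong daily cycle of 24 steps
# plus a weaker weekly cycle of 168 steps, observed for four weeks.
t = np.arange(24 * 7 * 4)
hourly = np.sin(2 * np.pi * t / 24) + 0.5 * np.sin(2 * np.pi * t / 168)

periods, strengths = dominant_periods(hourly, k=2)
print(periods)    # the daily period (24) ranks first, the weekly (168) second
print(strengths)  # the daily cycle has the larger amplitude
```

In a multi-scale design, periods recovered this way could set the time scales the model examines, and the amplitudes could indicate how much each scale matters. Each series is analyzed on its own here, mirroring the channel-independent handling described above.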

Letting the network learn a map of influences

Once the model has learned reliable temporal patterns for each series, it moves to the second stage, where relationships between series are finally introduced. Here, the method treats the collection of signals as a network: each series is a node, and connections represent how strongly two series influence each other. Instead of assuming a fixed map of links, the model learns this map directly from data using a graph-based neural network. Crucially, it does this at multiple time scales that match the previously discovered cycles. For each scale, the network refines both the node features and the pattern of connections, then blends them together using weights derived from the strength of the underlying cycles. This multi-scale graph design lets the model emphasize the most informative relationships while downplaying weaker, less useful ones.
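The idea of a learned map of influences, blended across time scales, can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the paper's formulation: the adjacency built from two node-embedding tables, the softmax used to turn cycle strengths into blend weights, and every name below (`learned_adjacency`, `multi_scale_graph_mix`, the random inputs) are hypothetical. In a real model the embeddings would be trained by gradient descent; here they are random so the example runs on its own.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)   # subtract max for stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def learned_adjacency(src_emb, dst_emb):
    """Build a dense influence map from node embeddings (assumed form)."""
    scores = np.maximum(src_emb @ dst_emb.T, 0.0)  # keep non-negative affinities
    return softmax(scores, axis=1)                 # each row sums to 1

def multi_scale_graph_mix(features_per_scale, embeddings_per_scale, cycle_strengths):
    """Propagate features over one learned graph per scale, then blend."""
    # Assumption: blend weights are a softmax over the cycle strengths.
    weights = softmax(np.asarray(cycle_strengths, dtype=float))
    mixed = np.zeros_like(features_per_scale[0])
    for w, h, (src, dst) in zip(weights, features_per_scale, embeddings_per_scale):
        adj = learned_adjacency(src, dst)   # (series, series) influence map
        mixed += w * (adj @ h)              # each series gathers from the others
    return mixed, weights

rng = np.random.default_rng(0)
num_series, feat_dim, emb_dim, num_scales = 5, 8, 4, 3

# Stand-ins for per-scale temporal features and per-scale node embeddings.
features = [rng.normal(size=(num_series, feat_dim)) for _ in range(num_scales)]
embeddings = [
    (rng.normal(size=(num_series, emb_dim)), rng.normal(size=(num_series, emb_dim)))
    for _ in range(num_scales)
]

mixed, weights = multi_scale_graph_mix(features, embeddings, cycle_strengths=[2.0, 1.0, 0.5])
print(mixed.shape)  # one blended feature vector per series
print(weights)      # stronger cycles receive larger blend weights
```

Because a separate influence map is built for each scale, two series may be strongly linked at one rhythm and nearly unrelated at another, and the blend weights let the scales with stronger cycles contribute more to the final features.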

Testing the method in the real world

The researchers evaluated PMSRWKV-GCN on eight public datasets that reflect practical forecasting challenges: electricity demand from hundreds of clients, highway traffic in California, weather measurements, power transformer temperatures, and foreign exchange rates among major economies. Across a range of forecasting horizons—from a few steps ahead to long-range predictions—the new model typically produced lower errors than several strong baselines, including Transformer-based and other graph-based approaches. An ablation analysis showed that both stages were important: removing the multi-scale time-mixing weakened the model’s sense of temporal structure, while removing the multi-scale graph severely hurt performance on datasets with strong interactions between series. Pre-training the temporal module on its own further improved accuracy and stability by a few percent across datasets.

What this means for everyday forecasting

To a non-specialist, the key message is that the authors have built a forecasting system that separates "what each signal tends to do" from "how signals influence one another," and then recombines these insights in a carefully controlled way. By first learning clean rhythms for each series and only afterward learning a flexible network of influences, PMSRWKV-GCN avoids being misled by noisy or weakly related data. The result is a model that can more reliably track sharp changes and complex cycles in domains like energy, transportation, weather, and finance. This stage-by-stage, multi-scale approach offers a blueprint for future forecasting tools that are both more accurate and more robust when faced with the messy data of the real world.

Citation: Hao, J., Liu, F. & Zhang, W. Pre-trained multi-scale RWKV-GCN for multivariate time series forecasting. Sci Rep 16, 10250 (2026). https://doi.org/10.1038/s41598-026-41091-4

Keywords: time series forecasting, multivariate data, deep learning, graph neural networks, temporal patterns