Clear Sky Science

MCI detection from handwritten drawing test using residual vision transformer

Why simple drawings can reveal hidden memory problems

Imagine that a doctor could spot early warning signs of dementia just by looking at how you draw a clock, a cube, or a line of connected circles. These quick sketches are already used in clinics, but they are scored by hand and depend heavily on a doctor’s judgment. This paper shows how an artificial intelligence (AI) system called ResViT can “read” these drawings automatically, turning pen strokes into an early alert for mild cognitive impairment (MCI), a stage between normal aging and dementia when treatment and planning can still make a big difference.

From pen-and-paper tests to smart screening

Mild cognitive impairment often shows up first in everyday tasks that require planning, attention, and a sense of space—exactly what drawing tests are designed to probe. Doctors commonly ask patients to draw a clock that shows a certain time, copy a three‑dimensional cube, or connect scattered numbers and letters in sequence. In the past, each drawing had to be graded by eye, which is slow and can vary from one clinician to another. The authors set out to build a more objective system that looks at all three drawings together, using a computer to spot patterns that even trained eyes might miss. Their goal is not to replace doctors, but to give them a fast, consistent second opinion.

Blending two ways of seeing: details and the big picture

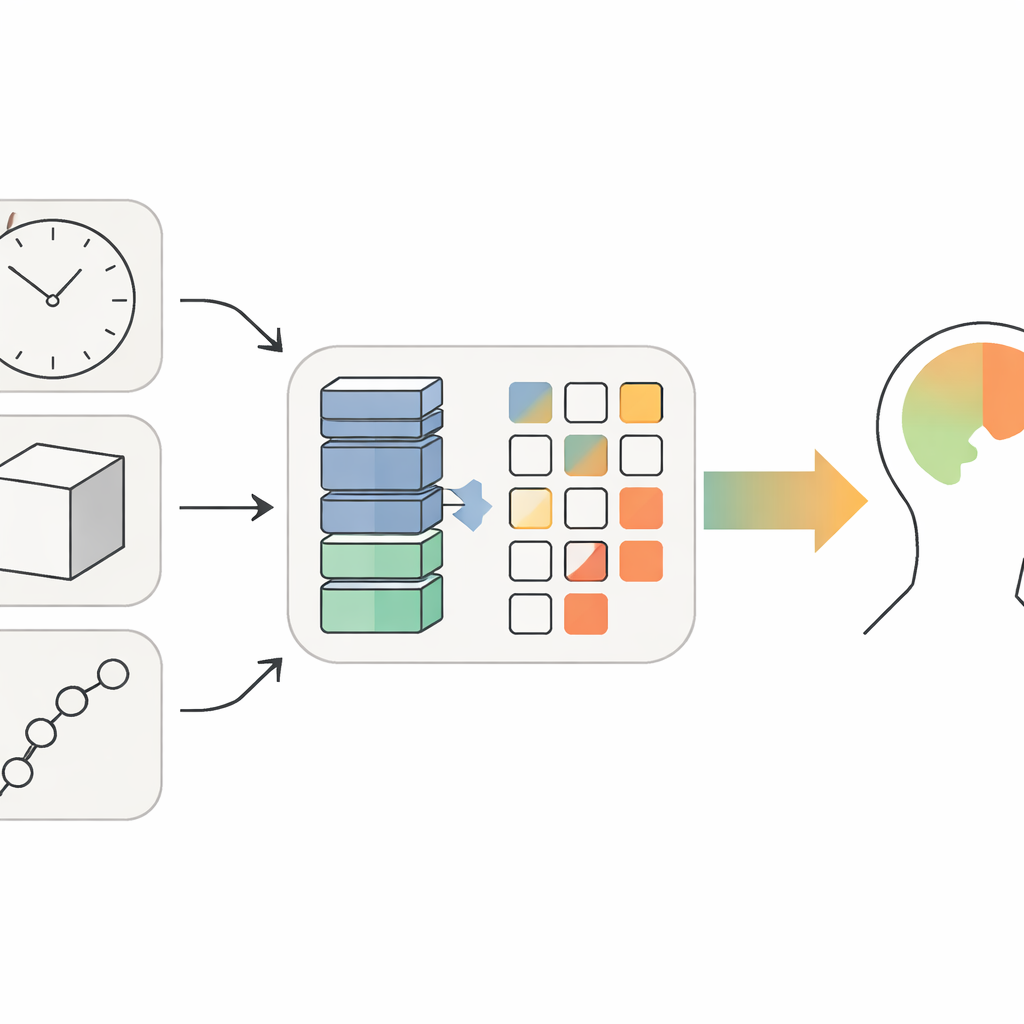

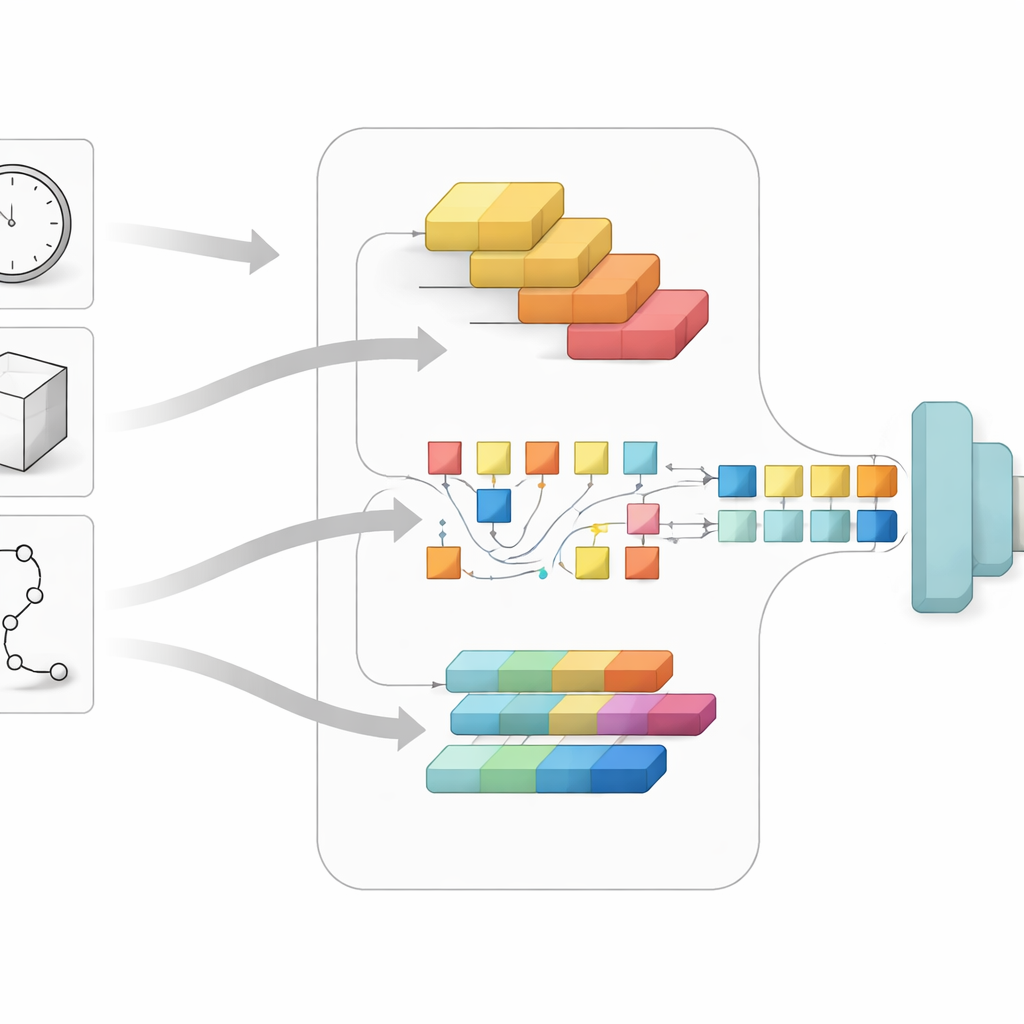

The core of the study is a hybrid AI model called ResViT, designed to combine two complementary styles of image analysis. One part, based on a technique known as ResNet, is especially good at spotting fine details such as edges, corners, and small distortions in the lines of a drawing. The other part, a Vision Transformer, excels at understanding the overall layout—how the pieces of a clock, cube, or trail fit together across the page. Instead of feeding the drawings through these components one after another, the system runs them in parallel, then fuses the two streams of information into a single, richer picture of a person’s cognitive state.
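The parallel design described above can be pictured with a toy sketch. This is not the authors' implementation: the two functions below are hypothetical stand-ins for the ResNet and Vision Transformer branches, and joining their outputs by simple concatenation is an assumption made for illustration, since the summary does not say how the fusion is done.

```python
# Toy illustration of parallel two-branch fusion. This is NOT the authors' code:
# both branches are hypothetical stand-ins, and concatenation is an assumed fusion rule.

def local_branch(image):
    """Stand-in for the ResNet-style branch: one edge-strength value per row,
    computed as the mean absolute difference between neighbouring pixels."""
    return [
        sum(abs(row[i + 1] - row[i]) for i in range(len(row) - 1)) / (len(row) - 1)
        for row in image
    ]


def global_branch(image):
    """Stand-in for the transformer-style branch: summaries of the whole image
    (mean intensity and intensity range)."""
    pixels = [p for row in image for p in row]
    return [sum(pixels) / len(pixels), max(pixels) - min(pixels)]


def fuse(image):
    """Run both branches on the same input, then concatenate their features."""
    return local_branch(image) + global_branch(image)


def score(features, weights, bias=0.0):
    """Hypothetical linear head mapping the fused features to a single score."""
    return sum(f * w for f, w in zip(features, weights)) + bias


# A tiny 2x3 "drawing" (1 = ink, 0 = blank paper), purely illustrative.
drawing = [
    [0, 1, 0],
    [1, 1, 1],
]
fused = fuse(drawing)
print(len(fused))  # 4: two per-row features plus two whole-image features
```

The point of the sketch is only the data flow: both branches receive the same image, neither waits on the other, and the classifier sees the combined feature list rather than either view alone.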

How the system learns from real patient drawings

To test their idea, the researchers used a public collection of drawings from 918 people, each of whom had completed the clock, cube, and trail‑making tasks. Each person’s cognitive status had already been assessed with a standard clinical test, providing a ground‑truth label of either “healthy” or “MCI.” The team converted the drawings into grayscale images, resized them, and applied simple augmentations such as rotations and brightness changes to make the model more robust. During training, ResViT repeatedly compared its predictions to the known labels and adjusted its internal settings. Two safeguards kept it from memorizing the training data instead of learning general rules: early stopping, which halts training once performance on held‑out data stops improving, and dropout, which randomly switches off parts of the network during training.
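Two of the steps in this paragraph, image preparation and early stopping, are easy to sketch in a few lines. The code below is a minimal illustration under stated assumptions, not the study's pipeline: the function names, the `patience` value, and the validation-loss numbers are all hypothetical, and the grayscale weights are the common ITU-R BT.601 luma coefficients rather than anything the paper specifies.

```python
def to_grayscale(rgb_image):
    """Convert rows of (r, g, b) pixels to 0-255 gray values.
    Assumption: standard BT.601 luma weights."""
    return [
        [round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
        for row in rgb_image
    ]


def adjust_brightness(gray_image, factor):
    """Brightness augmentation: scale every pixel, then clamp to 0-255."""
    return [
        [min(255, max(0, round(p * factor))) for p in row]
        for row in gray_image
    ]


class EarlyStopping:
    """Signal a stop after `patience` consecutive epochs without improvement."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def epochs_run(val_losses, patience=3):
    """Return how many epochs would run before early stopping triggers."""
    stopper = EarlyStopping(patience=patience)
    for epoch, loss in enumerate(val_losses, start=1):
        if stopper.step(loss):
            return epoch
    return len(val_losses)


# Made-up validation losses, for illustration only.
losses = [0.9, 0.7, 0.6, 0.65, 0.61, 0.62, 0.5]
print(epochs_run(losses, patience=3))  # 6: three epochs in a row fail to beat 0.6
```

In this made-up run the loss would have improved again at the seventh epoch, which shows the trade-off behind the `patience` setting: a small value saves time and limits overfitting but can stop too soon.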

How well it works and what it reveals

When evaluated on people it had never seen before, ResViT correctly distinguished healthy individuals from those with MCI about three‑quarters of the time, with an accuracy of 74.09% and an F1 score (a measure that balances precision and recall) of around 0.67. This outperformed several strong alternatives, including versions that used only the ResNet part, only the Vision Transformer, or another popular network called EfficientNet. The hybrid approach, with about one third as many internal parameters as a large standalone transformer, proved especially good at balancing sensitivity to disease with avoidance of false alarms. Using heat‑map visualizations, the authors also showed that the model tends to focus on clinically meaningful regions, such as clock digits, cube edges, and branching points in trails, suggesting that it is paying attention to much the same cues as human experts.
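For readers unfamiliar with these scores, the sketch below shows how accuracy and F1 are computed from a list of true labels and predictions. The labels are invented for illustration and are not taken from the study; the function name `binary_metrics` is likewise hypothetical.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for a two-class problem."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    accuracy = (tp + tn) / len(pairs)
    # Precision: of those flagged as MCI, how many truly have it.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall (sensitivity): of those with MCI, how many were flagged.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1: harmonic mean of precision and recall.
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Invented example: 1 = MCI, 0 = healthy.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 0, 1, 0, 1]
metrics = binary_metrics(y_true, y_pred)
print(metrics["accuracy"], metrics["f1"])  # 0.75 0.75
```

Accuracy and F1 happen to coincide in this small example, but they need not. F1 ignores correctly identified healthy cases, so it can fall below accuracy when the model misses or over-flags MCI cases.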

Limits today and possibilities for tomorrow

The authors stress that their system is not yet ready to be a universal screening tool. The dataset is modest in size, skewed toward older adults, and lacks important background information such as education level and cultural background, both of which can influence how people draw. The model can also be computationally demanding for low‑power devices. Still, because ResViT can be adapted with relatively few new examples, it could be extended to other cognitive disorders or new drawing tasks as more data become available. Integrating larger and more diverse datasets, and building leaner versions of the model, will be crucial steps toward everyday use.

What this means for patients and families

In plain terms, this work shows that carefully designed AI can turn simple pen‑and‑paper sketches into a practical tool for catching early signs of memory and thinking problems. While a 74% accuracy rate is not perfect, it is promising for a first line of defense that is cheap, quick, and easy to repeat over time. In the future, a scanned drawing from a clinic, or even a tablet at home, could quietly flag subtle changes long before they are obvious in daily life, giving doctors and families more time to respond. Rather than replacing human judgment, systems like ResViT could make that judgment more consistent and timely, bringing earlier help to people at risk of dementia.

Citation: Sirshar, M., Matloob, I., Tayyabah, A. et al. MCI detection from handwritten drawing test using residual vision transformer. Sci Rep 16, 10334 (2026). https://doi.org/10.1038/s41598-026-40716-y

Keywords: mild cognitive impairment, drawing tests, deep learning, vision transformer, early dementia detection