
Mixed attention mechanism multi-task learning for fetal abdominal standard plane recognition and key anatomical structure detection

Helping Doctors See Before Birth

Prenatal ultrasound is one of the first windows parents and doctors have into a baby’s health. But reading those grainy black‑and‑white images is a skill that takes years to master, and small details can make the difference between catching a problem early and missing it. This study introduces a computer system designed to help: an artificial‑intelligence tool that can quickly recognize standard views of a baby’s abdomen and highlight the key organs doctors look for during routine pregnancy scans.

Why Standard Views Matter

When an expectant mother has an ultrasound in mid‑pregnancy, the clinician doesn’t just “look around.” They deliberately search for a set of standard views of the fetus. For the abdomen, these include cross‑sections used to measure abdominal size, views focused on the bladder and the umbilical cord, and several angles on the kidneys and spine. Together, these planes let doctors estimate growth, check for structural problems, and assess risks around birth. Acquiring each standard view requires precise hand movements and a strong grasp of anatomy, and similar‑looking slices can easily be confused, especially by less experienced staff or when images are noisy.

Turning Ultrasound Images into Data

To train a computer to assist with this task, the researchers first assembled a large collection of real‑world images. Over four years, they gathered more than 6,700 abdominal ultrasound pictures from over 3,100 pregnant women at several hospitals, using many different machines. Experienced ultrasound doctors carefully traced 14 key anatomical structures on each image—such as the stomach, bladder, kidneys, major blood vessels, spine, ribs, and umbilical cord—and labeled which of seven standard abdominal views each image represented. This labor‑intensive labeling process created a rich “map” connecting pixel patterns to clinically meaningful structures.

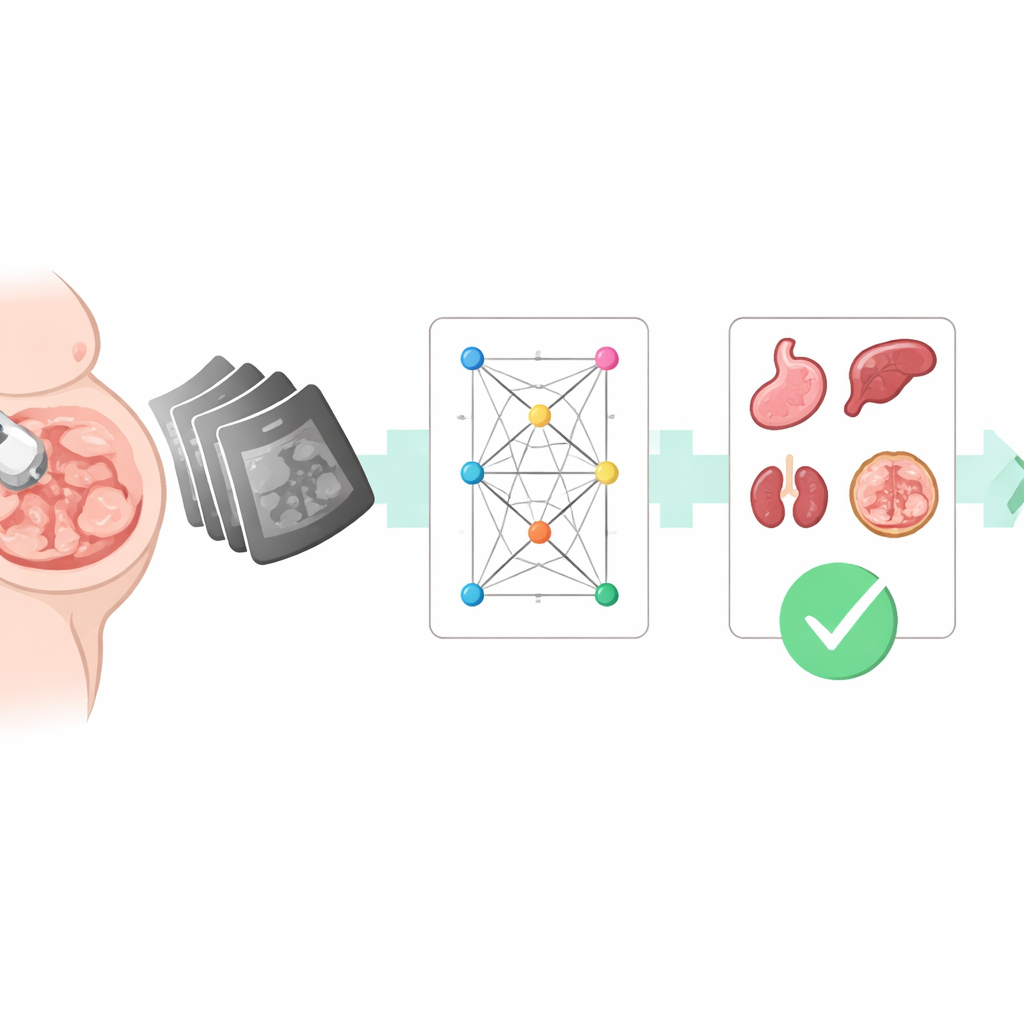

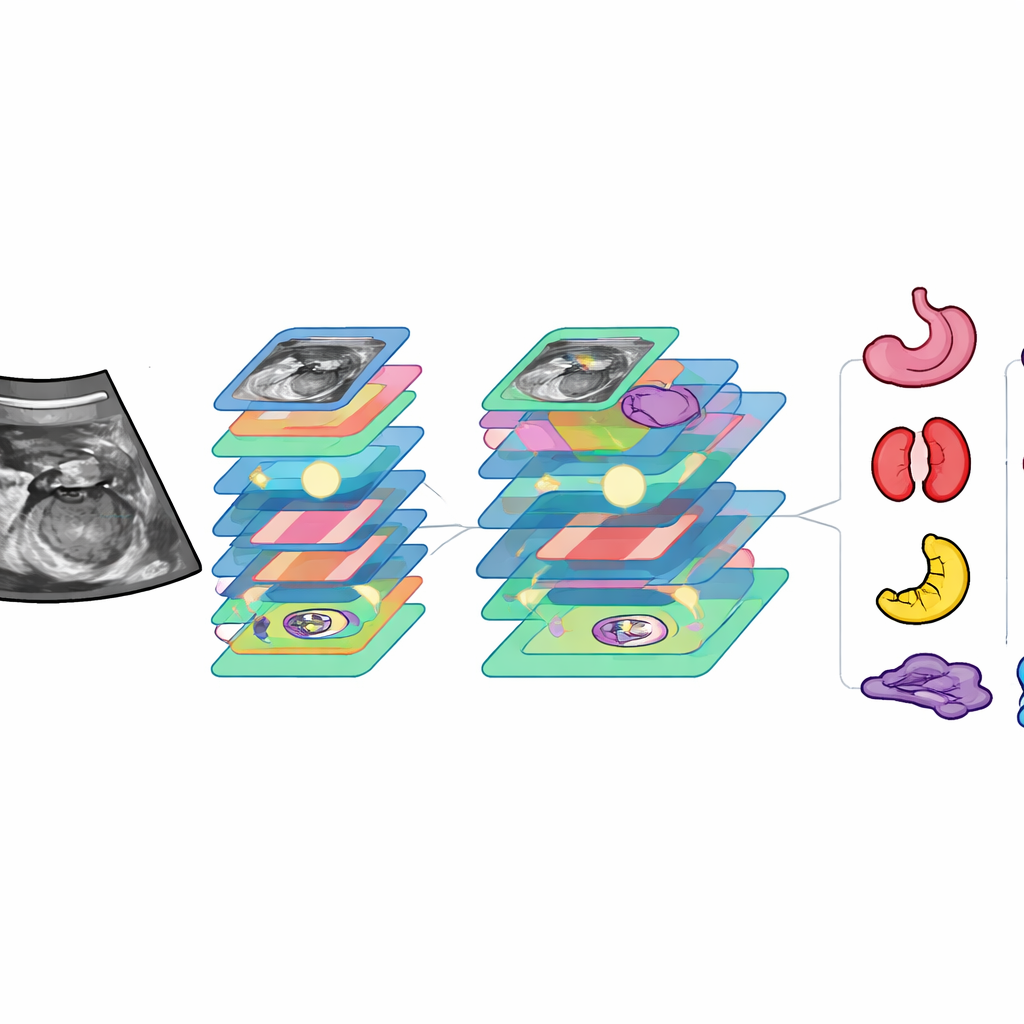

A Dual‑Purpose AI with Focused Attention

Building on this dataset, the team developed a deep‑learning model they call FAUSP‑NET. Unlike systems that perform only one job, FAUSP‑NET is a multi‑task network: in a single pass it both draws boxes around key organs and decides which standard view the image belongs to. Under the hood, the model uses a modern object‑detection backbone, enhanced with several “attention” components that help it focus on the most informative regions and scales. Some attention blocks emphasize subtle differences between look‑alike structures (for example, left versus right kidney, or umbilical cord versus nearby vessels), while others adapt to organs of very different sizes. A specially designed loss function helps the system learn from rare or hard‑to‑see structures, improving how precisely it outlines them.
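For readers curious how a loss function can push a model toward rare or hard‑to‑see structures, here is a minimal sketch. This summary does not spell out FAUSP‑NET's actual loss, so the example swaps in focal loss, a well‑known technique with a similar goal. The function names and the `gamma` value are illustrative assumptions, not the authors' implementation.

```python
import math

# Illustrative only: focal loss is a stand-in here, NOT the loss used in FAUSP-NET.

def cross_entropy(p_true: float) -> float:
    """Ordinary loss for one example, given the probability assigned to the correct class."""
    p = min(max(p_true, 1e-12), 1.0)  # clamp to avoid log(0)
    return -math.log(p)

def focal_loss(p_true: float, gamma: float = 2.0) -> float:
    """Cross-entropy scaled by (1 - p)^gamma, which shrinks the loss of easy examples."""
    p = min(max(p_true, 1e-12), 1.0)
    return ((1.0 - p) ** gamma) * cross_entropy(p)

# An "easy" structure the model already finds confidently, and a "hard" faint one.
easy, hard = 0.95, 0.20
easy_weight = focal_loss(easy) / cross_entropy(easy)  # fraction of the loss kept
hard_weight = focal_loss(hard) / cross_entropy(hard)
print(f"easy example keeps {easy_weight:.4f} of its loss")
print(f"hard example keeps {hard_weight:.4f} of its loss")
```

With `gamma = 2`, the confidently detected example keeps only a tiny fraction of its original loss, while the faint one keeps most of it, so training effort shifts toward the structures the model still struggles with.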

How Well the System Performs

Once trained, FAUSP‑NET was tested on held‑out images and compared with 24 existing computer‑vision models, including popular object detectors and image classifiers. It proved more accurate than all of them in both detecting organs and recognizing standard views. For organ detection, it correctly matched doctors’ markings in the vast majority of cases and handled most structures with near‑perfect reliability; only the faintest, smallest features, such as certain vessels and the diaphragm, remained challenging. For view recognition, the system correctly classified images as one of the seven abdominal planes more than 97% of the time. On standard hardware, it processed each image in about 24 milliseconds—fast enough for real‑time use during an exam.
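Two of the ideas above can be made concrete with a short sketch. Whether a predicted box "matches" a doctor's marking is commonly judged by intersection over union (IoU), the overlap between the two boxes divided by their combined area; this summary does not state which threshold the study used, so none is assumed here. The second function simply converts the reported per‑image time into images per second. The function names and example coordinates are hypothetical.

```python
# Illustrative sketch: the box coordinates are made up, not taken from the study.

def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)  # zero if boxes do not overlap
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def images_per_second(ms_per_image):
    """Convert a per-image processing time in milliseconds to a throughput."""
    return 1000.0 / ms_per_image

predicted = (10, 10, 50, 50)      # hypothetical model output
doctor_marked = (20, 20, 60, 60)  # hypothetical expert annotation
print(f"IoU: {iou(predicted, doctor_marked):.3f}")
print(f"Throughput at 24 ms per image: {images_per_second(24):.1f} images/s")
```

At about 24 milliseconds per image, the throughput works out to roughly 40 images per second, which is why the processing speed is described as suitable for real‑time use.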

Comparing AI with Human Experts

To see how this tool stacks up against real clinicians, the researchers evaluated it on an entirely separate set of images from another hospital and compared its performance with junior, mid‑level, and senior ultrasound doctors. FAUSP‑NET’s accuracy in detecting structures and identifying standard views approached or matched that of experienced senior physicians, and clearly surpassed that of less experienced doctors. Crucially, the system completed its analysis in less than one hundredth of the time a senior doctor needed to review the same cases. Visualization techniques showed that the model tends to focus on the same regions human experts rely on, supporting its interpretability and potential acceptance in clinical practice.

What This Means for Expectant Families

In everyday terms, this work shows that a carefully trained AI system can act as a second set of eyes during prenatal scans of a baby’s abdomen. FAUSP‑NET can quickly flag whether the right abdominal view has been captured and whether crucial organs are clearly visible, helping less‑experienced sonographers avoid mistakes and freeing specialists to focus on complex cases. While further testing across more hospitals and machines is still needed, the technology points toward a future in which routine pregnancy ultrasounds are more consistent, faster, and less dependent on who happens to be holding the probe, potentially improving care for mothers and babies alike.

Citation: Li, Y., Huang, Y., Liu, Z. et al. Mixed attention mechanism multi-task learning for fetal abdominal standard plane recognition and key anatomical structure detection. Sci Rep 16, 12324 (2026). https://doi.org/10.1038/s41598-026-40590-8

Keywords: prenatal ultrasound, fetal abdomen, deep learning, standard plane detection, medical image analysis