Clear Sky Science

An underwater image dataset for occlusion-aware fish instance segmentation

Why Counting Fish Underwater Is Hard

Fish farms are turning into high-tech operations, where cameras and algorithms quietly watch over thousands of animals. Yet a surprisingly basic task—simply telling one fish from another in a crowded tank—turns out to be very difficult. Fish swim over and under each other, block the camera’s view, and appear only in pieces at the edge of an image. This paper introduces a new underwater image collection, the Fish Occlusion Dataset (FOD), built to help computers recognize individual fish even when they are partly hidden. That ability is key to automating feeding, health checks, and stock assessment in modern aquaculture.

A New Picture Library for Busy Fish Tanks

The heart of this work is a large, carefully curated set of underwater photos of crucian carp, a common farmed fish. The researchers recorded 66 fish in a tank using a specialized underwater camera mounted above the water, then extracted still frames from the videos. After removing near-duplicate images, they ended up with over a thousand single-fish images and hundreds of multi-fish scenes. Each visible fish was outlined by hand at the level of individual pixels, giving computers access to precise shapes instead of rough boxes. In total, once the synthetic scenes described below are added, FOD contains 14,376 images and 144,894 meticulously labeled fish, making it one of the most detailed public resources of its kind.

Teaching Computers to See Through Overlap

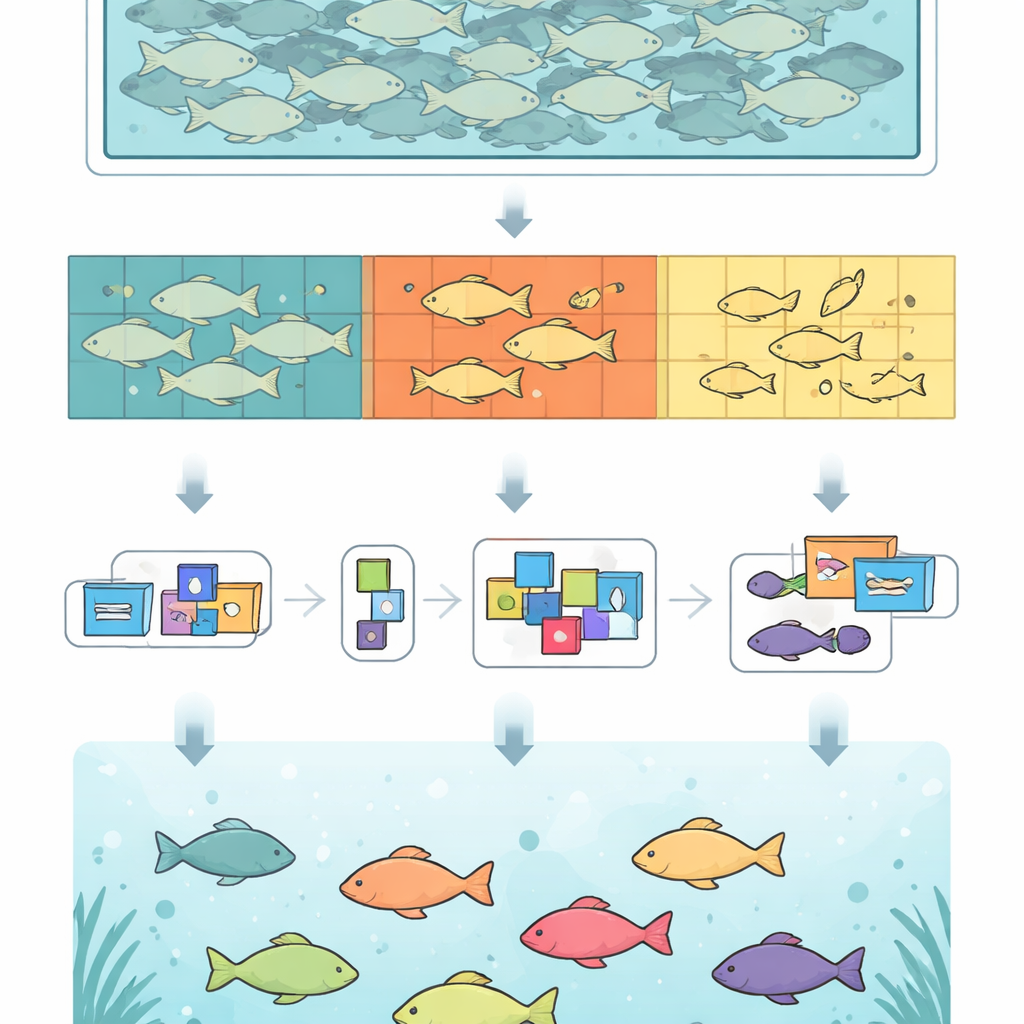

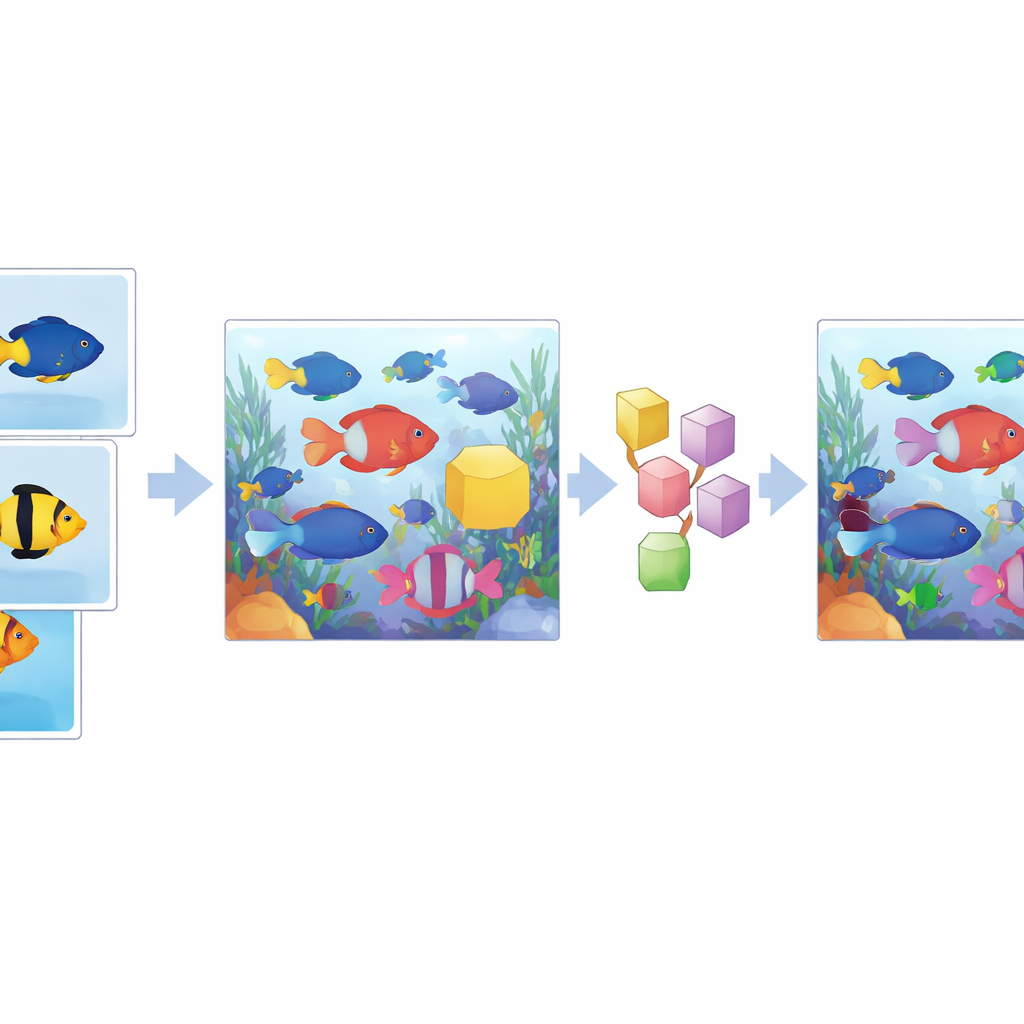

To truly test how well algorithms handle crowding, the team needed many examples where fish overlap. Drawing detailed outlines in such scenes is extremely time-consuming, so they adopted a clever shortcut. First, they generated high-quality masks for individual fish. Then they digitally cut those fish out and pasted them onto background images in new arrangements. By rotating, scaling, and shifting fish, and by limiting how much they can cover one another, they created 13,000 synthetic images with realistic, dense schools and controlled overlap. Smooth blending at the edges keeps these composites looking natural. The final dataset mixes original and synthetic scenes, giving both variety and realism.
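The cut-and-paste idea can be illustrated with a small sketch. This is not the authors' code: the function names, the 50% occlusion cap, and the toy rectangular "fish" are all assumptions made for illustration, and rotation, scaling, and edge blending are left out. Each fish is represented as a set of (row, column) pixels, and a placement is rejected if it would hide too much of any fish already in the scene.

```python
import random


def shift(mask, dy, dx):
    """Translate a mask (a set of (row, col) pixels) by (dy, dx)."""
    return {(r + dy, c + dx) for r, c in mask}


def compose_scene(fish_masks, height, width, max_occlusion=0.5, tries=50, seed=0):
    """Paste fish one at a time; later fish sit on top of earlier ones.

    A candidate position is rejected if the fish would leave the frame or
    would hide more than `max_occlusion` of any fish already placed.
    Returns a list of (full_mask, visible_mask) pairs, one per placed fish.
    `max_occlusion` and `tries` are illustrative values, not from the paper.
    """
    rng = random.Random(seed)
    full, visible = [], []
    for mask in fish_masks:
        for _ in range(tries):
            cand = shift(mask, rng.randrange(height), rng.randrange(width))
            if any(not (0 <= r < height and 0 <= c < width) for r, c in cand):
                continue  # fish would stick out of the frame
            # What would remain visible of each earlier fish after pasting.
            trimmed = [v - cand for v in visible]
            hidden = [1 - len(v) / len(f) for v, f in zip(trimmed, full)]
            if all(h <= max_occlusion for h in hidden):
                full.append(cand)
                visible = trimmed + [set(cand)]
                break  # placement accepted; move on to the next fish
    return list(zip(full, visible))


# Toy usage: six identical 2x4 "fish" on an 8x10 canvas.
fish = {(r, c) for r in range(2) for c in range(4)}
scene = compose_scene([fish] * 6, height=8, width=10, max_occlusion=0.5)
```

Because every pasted fish keeps both its full outline and its visible part, the composite comes with pixel-level labels at no extra annotation cost, which is the appeal of the shortcut.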

Grading How Hidden Each Fish Is

Not all occlusion is equal: a fish that is fully visible is much easier to recognize than one that appears only as a few scattered patches. To capture this, the authors sorted every fish into three simple groups. “Whole” fish are completely visible, “part” fish are partially blocked by others, and “fragment” fish appear only in separated pieces. This extra layer of labeling lets researchers see exactly where their algorithms struggle. When they analyzed the numbers, they found that most fish in the dataset fall into the “part” group, reflecting what really happens in crowded tanks. They also showed that traditional summary scores can hide failures on tiny fragments, so reporting results by occlusion level gives a clearer picture of model strengths and weaknesses.
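One plausible way to assign the three labels automatically is sketched below. The rule is an assumption, not the authors' documented procedure: a fish is "whole" if its visible pixels match its full outline, "part" if the visible pixels form a single connected region, and "fragment" if they split into several regions. Masks are again sets of (row, column) pixels, and the function names are hypothetical.

```python
from collections import deque


def count_components(pixels):
    """Count 8-connected regions in a set of (row, col) pixels."""
    remaining = set(pixels)
    count = 0
    while remaining:
        count += 1
        queue = deque([remaining.pop()])
        while queue:  # flood-fill one region
            r, c = queue.popleft()
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    neighbour = (r + dr, c + dc)
                    if neighbour in remaining:
                        remaining.remove(neighbour)
                        queue.append(neighbour)
    return count


def occlusion_level(full_mask, visible_mask):
    """Label a fish 'whole', 'part', or 'fragment' (assumed rule)."""
    if not visible_mask:
        raise ValueError("fish is fully hidden; nothing to label")
    if visible_mask == full_mask:
        return "whole"
    return "part" if count_components(visible_mask) == 1 else "fragment"


# Toy usage: a fish that is a single row of six pixels.
fish = {(0, c) for c in range(6)}
print(occlusion_level(fish, fish))                                # whole
print(occlusion_level(fish, {(0, 0), (0, 1), (0, 2), (0, 3)}))    # part
print(occlusion_level(fish, {(0, 0), (0, 1), (0, 4), (0, 5)}))    # fragment
```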

How Current Algorithms Measure Up

To demonstrate what FOD can do, the team tested eight popular image-segmentation methods, including both long-standing detection-based models and newer “proposal-free” designs that work more directly with image regions. All achieved high average accuracy on the dataset, and one method, Mask2Former, stood out for producing the sharpest outlines, especially when fish overlapped. Yet even the best models faltered when fish were broken into fragments—performance there dropped noticeably compared with fully visible fish. An extra experiment showed why FOD’s mixture of real and synthetic data matters: training only on real scenes led to poor handling of occlusion, while training only on synthetic ones missed some details of real images. Combining both produced the most robust models.
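To see why a single average can mask weak performance on fragments, consider a minimal sketch that scores predictions separately for each occlusion group. It uses mean intersection-over-union as a simple stand-in for the benchmark's actual metrics, and the masks are toy values invented for illustration, not results from the paper.

```python
from collections import defaultdict


def iou(pred, truth):
    """Intersection-over-union of two masks given as sets of pixels."""
    union = pred | truth
    return len(pred & truth) / len(union) if union else 1.0


def mean_iou_by_level(records):
    """Average IoU per occlusion level, plus an overall 'all' average.

    `records` is an iterable of (level, predicted_mask, true_mask).
    """
    scores = defaultdict(list)
    for level, pred, truth in records:
        score = iou(pred, truth)
        scores[level].append(score)
        scores["all"].append(score)
    return {level: sum(v) / len(v) for level, v in scores.items()}


# Toy data: three well-segmented whole fish and one poorly segmented fragment.
whole = {(0, 0), (0, 1), (0, 2), (0, 3)}
records = [("whole", whole, whole)] * 3
records.append(("fragment", {(0, 0)}, {(0, 0), (0, 3)}))
report = mean_iou_by_level(records)
print(report)  # {'whole': 1.0, 'all': 0.875, 'fragment': 0.5}
```

In this toy case the overall score looks healthy, while the fragment score shows that the one hard example was segmented poorly.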

What This Means for Smarter Fish Farms

In practical terms, this new dataset offers a proving ground for computer vision systems that must work in real fish farms, where clear views are the exception rather than the rule. By deliberately focusing on overlapping fish and by sharing both the images and the code used to build them, the authors provide a foundation for more reliable, occlusion-aware monitoring tools. While the current collection covers only one species in a controlled tank, the same approach can be extended to other fish and more challenging environments. As these techniques spread, fish farmers could gain continuous, precise information about stock size, behavior, and growth—helping them use feed more efficiently, spot health problems early, and run more sustainable operations.

Citation: Wang, X., Yu, H., Zhang, C. et al. An underwater image dataset for occlusion-aware fish instance segmentation. Sci Data 13, 526 (2026). https://doi.org/10.1038/s41597-026-06898-w

Keywords: underwater imaging, fish farming, computer vision, instance segmentation, occlusion