Clear Sky Science

Duration between rewards controls the rate of behavioral and dopaminergic learning

Why the Pace of Rewards Matters

Teachers warn against last-minute cramming, and animal trainers space out treats—but why does taking breaks help us learn? This study asks a surprisingly simple question with big implications: when you are trying to learn that a signal predicts a reward, does it help more to get many quick rewards or fewer rewards spaced farther apart? By carefully timing drops of sugar water for mice and measuring both their behavior and brain chemistry, the researchers uncover a mathematical rule showing that the time between rewards, not the raw number of trials, controls how fast learning happens.

Learning with Fewer but Better-Spaced Treats

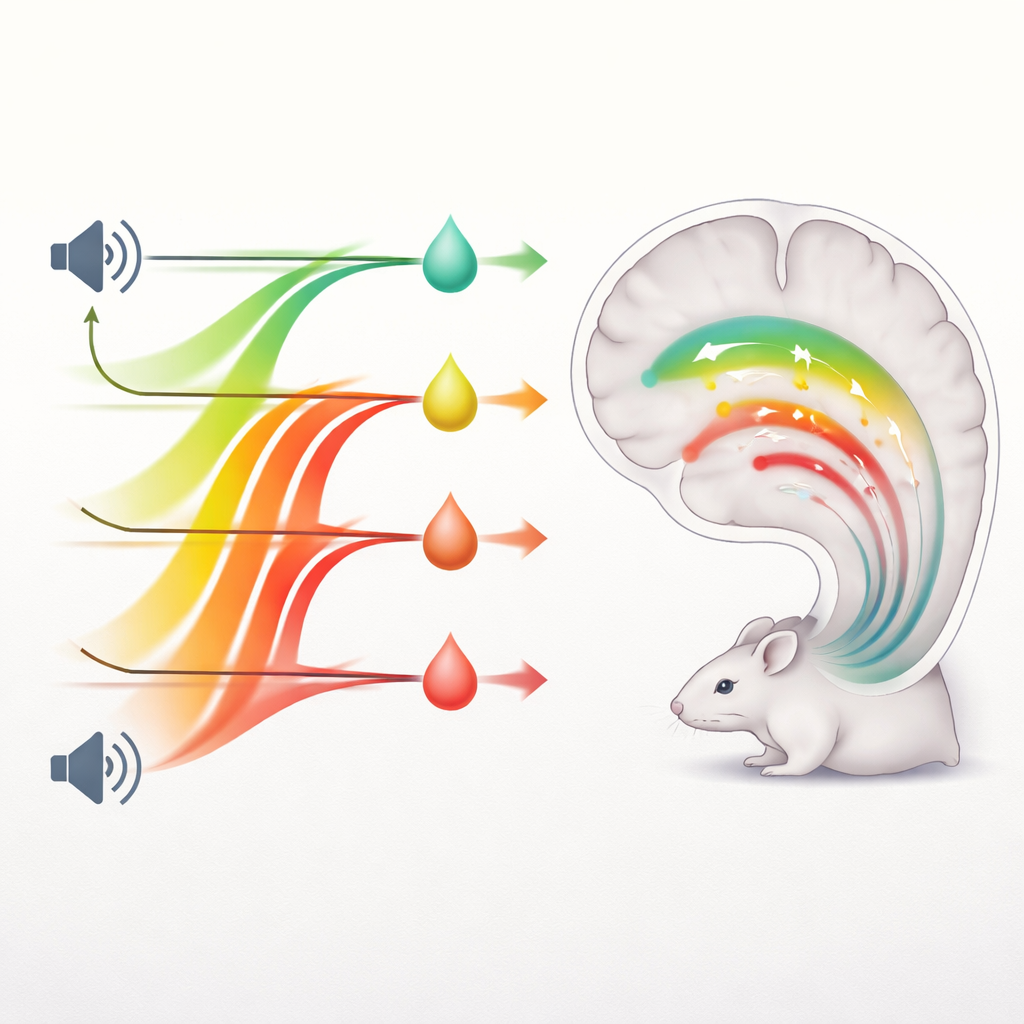

The team trained thirsty, head-fixed mice to associate a brief tone with a tiny sip of sweet liquid. All mice heard the same sound and got the same reward shortly afterward, but the time until the next tone-and-reward cycle varied dramatically: from half a minute up to ten minutes, and in one group an hour. Mice with short breaks experienced many cue–reward pairings per day, while those with long breaks experienced only a handful. Intuitively, one might expect the “busy” schedule to produce faster learning. Instead, the opposite happened: when the breaks were ten times longer, mice needed only about one-tenth as many cue–reward experiences to learn the association.

Same Learning in the Same Time, No Matter How Many Trials

Although the spaced-out mice needed far fewer experiences, they did not actually learn more quickly in real time. When the researchers calculated how many minutes of conditioning had passed before each mouse began reliably licking in anticipation of the reward, the total time to learn was nearly identical across groups whose breaks varied 20-fold. In other words, stretching out the interval between rewards made each individual experience more potent for learning, in direct proportion to the waiting time. Removing nine out of ten trials from a dense training schedule had essentially no effect on how long it took for the association to form, as long as the total elapsed time in the training setting stayed the same.
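The arithmetic behind this trade-off can be sketched in a few lines of Python. This is only an illustration: the 300-minute learning time and the specific intervals are hypothetical values chosen for round numbers, not figures reported in the study, and the sketch treats the interval between rewards as the full length of one tone-and-reward cycle.

```python
# Hypothetical value for illustration only; not a number reported in the study.
ASSUMED_MINUTES_TO_LEARN = 300.0


def pairings_to_learn(interval_min, minutes_to_learn=ASSUMED_MINUTES_TO_LEARN):
    """Cue-reward pairings experienced before learning, assuming learning
    takes a fixed amount of conditioning time regardless of spacing."""
    return minutes_to_learn / interval_min


if __name__ == "__main__":
    # Illustrative intervals (in minutes) between successive rewards.
    for interval in (0.5, 5.0, 10.0):
        n = pairings_to_learn(interval)
        print(f"{interval:>4} min between rewards -> about {n:.0f} pairings")
```

Under the fixed-time assumption, the number of pairings needed is simply inversely proportional to the interval, so a tenfold longer gap means roughly a tenth as many pairings.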

Dopamine Signals Follow the Same Rule

To see what was happening inside the brain, the scientists used a fluorescent sensor to track dopamine, a chemical messenger long thought to signal reward prediction errors, that is, the difference between expected and actual rewards. As training progressed, brief surges of dopamine gradually shifted from the reward itself to the predictive tone. Crucially, these dopamine responses showed the same timing rule as behavior: when rewards were spaced ten times farther apart, the dopamine surge to the cue appeared after about one-tenth as many cue–reward experiences, yet after about the same amount of clock time. The pattern held not only for pleasant rewards but also when the tone predicted a mild shock, suggesting that learning about rewarding and aversive outcomes shares the same time-based rule.

A New Way the Brain Computes Cause and Effect

Classic theories portray learning as a trial-by-trial process in which each experience nudges an internal value up or down by some fixed fraction. In these “trial-based” models, seeing more pairings of cue and outcome in a given period should always speed learning. The new results contradict that idea and instead support a different framework, called ANCCR, in which the brain updates its beliefs only when an outcome actually occurs and then works backward in time to credit earlier cues. Because these updates are triggered at each reward, the model predicts that the change per reward should grow in direct proportion to how long it has been since the previous reward. This mathematically explains why longer gaps between rewards make each experience count more, while leaving overall learning after a fixed duration unchanged.
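The contrast between the two kinds of model can be illustrated with a toy simulation. The sketch below is not the ANCCR model itself. It is a deliberately simplified stand-in that keeps only one feature described above, namely that the update per reward grows in proportion to the time since the previous reward. The function names, the learning criterion of 0.5, and both rate constants are hypothetical choices made for illustration.

```python
def trials_to_criterion(rate_per_trial, criterion=0.5, max_trials=1_000_000):
    """Count cue-reward pairings until associative strength reaches criterion.

    Each pairing moves strength a fixed fraction of the way toward 1.0.
    """
    strength = 0.0
    for trial in range(1, max_trials + 1):
        strength += rate_per_trial * (1.0 - strength)
        if strength >= criterion:
            return trial
    raise ValueError("criterion not reached within max_trials")


def compare_models(intervals_min, fixed_rate=0.01, rate_per_minute=0.001):
    """Compare a fixed per-trial update with an interval-scaled update.

    fixed_rate and rate_per_minute are hypothetical constants.
    """
    rows = []
    for interval in intervals_min:
        # Trial-based toy model: same update size on every pairing.
        n_fixed = trials_to_criterion(fixed_rate)
        # Time-scaled toy model: update size proportional to the interval.
        n_scaled = trials_to_criterion(min(1.0, rate_per_minute * interval))
        rows.append({
            "interval_min": interval,
            "fixed_trials": n_fixed,
            "fixed_minutes": n_fixed * interval,
            "scaled_trials": n_scaled,
            "scaled_minutes": n_scaled * interval,
        })
    return rows


if __name__ == "__main__":
    for row in compare_models([0.5, 5.0, 10.0]):
        print(row)
```

In this toy setting the fixed-rate learner needs the same number of pairings at every spacing, so its time to learn grows with the interval. The interval-scaled learner needs fewer pairings as the interval grows, and its total time to learn stays roughly constant, which is the qualitative pattern the article describes.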

Rethinking “Practice Makes Perfect”

By showing that the duration between rewards—not the sheer number of trials—governs both behavioral and dopaminergic learning rates, this work challenges the common assumption that more repetitions automatically mean faster learning. For simple associations between signals and outcomes, packing in extra trials may offer little benefit if the rewards come too close together. Instead, well-timed spacing can allow the brain’s dopamine system to make larger, more informative updates from each outcome. The findings call for a reevaluation of how we model learning in the brain and suggest that in many situations, smarter spacing of experience may be just as important as, or more important than, practicing more often.

Citation: Burke, D.A., Taylor, A., Jeong, H. et al. Duration between rewards controls the rate of behavioral and dopaminergic learning. Nat Neurosci 29, 825–839 (2026). https://doi.org/10.1038/s41593-026-02206-2

Keywords: dopamine, reward learning, spacing effect, associative conditioning, reinforcement learning