Clear Sky Science

Low-overhead fault-tolerant quantum computation by gauging logical operators

Keeping Quantum Computers Honest

Future quantum computers promise to crack problems that stump today’s supercomputers, but they are also notoriously fragile: tiny disturbances can scramble their calculations. This paper introduces a new way to keep large quantum computations on track using fewer extra quantum bits than previously thought necessary, bringing practical, error-resilient quantum machines closer to reality.

Why Quantum Bits Need Protection

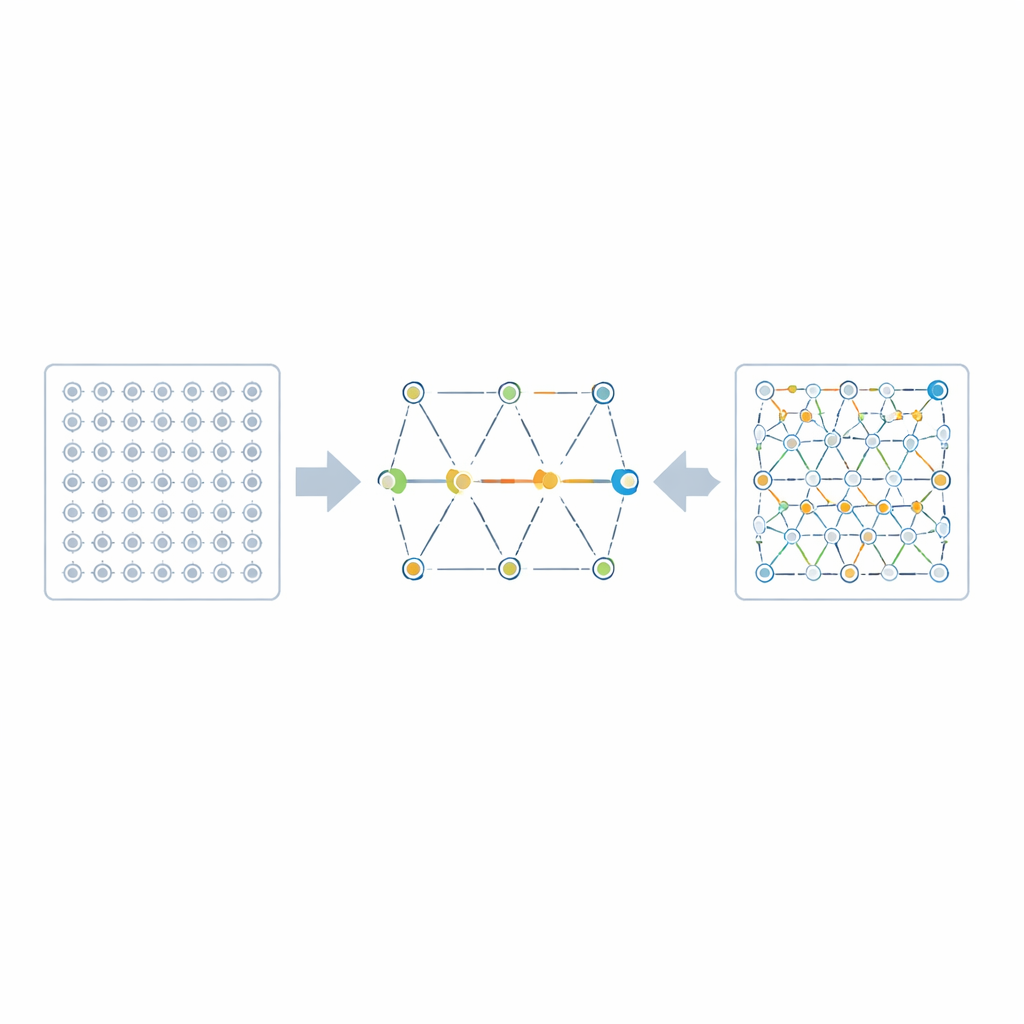

Quantum bits, or qubits, can store information in delicate superpositions that are easily disrupted by their surroundings. To safeguard them, researchers bundle many physical qubits together into a logical qubit using quantum error-correcting codes. A powerful class of such codes, called quantum low-density parity-check (qLDPC) codes, can protect many logical qubits while using relatively few physical ones and only sparse connections between them. However, while these codes are excellent for storing quantum information, carrying out the logical operations needed for full-scale computation has often required large extra “helper” systems, eroding their efficiency advantage.

Measuring Without Breaking the Code

Many quantum algorithms can be implemented if we can reliably measure certain collective properties of the encoded qubits, known as logical operators. Doing this carelessly can damage the protective code or demand an enormous number of extra qubits. The authors tackle this challenge by reinterpreting a logical operator as a kind of symmetry of the system, a concept borrowed from physics. Instead of measuring the global quantity directly, they introduce a web of simple, local measurements whose combined outcomes reveal the same information. This idea, known as “gauging” a symmetry, has a long history in theories of matter and forces, but here it is repurposed as a practical tool for quantum computing.

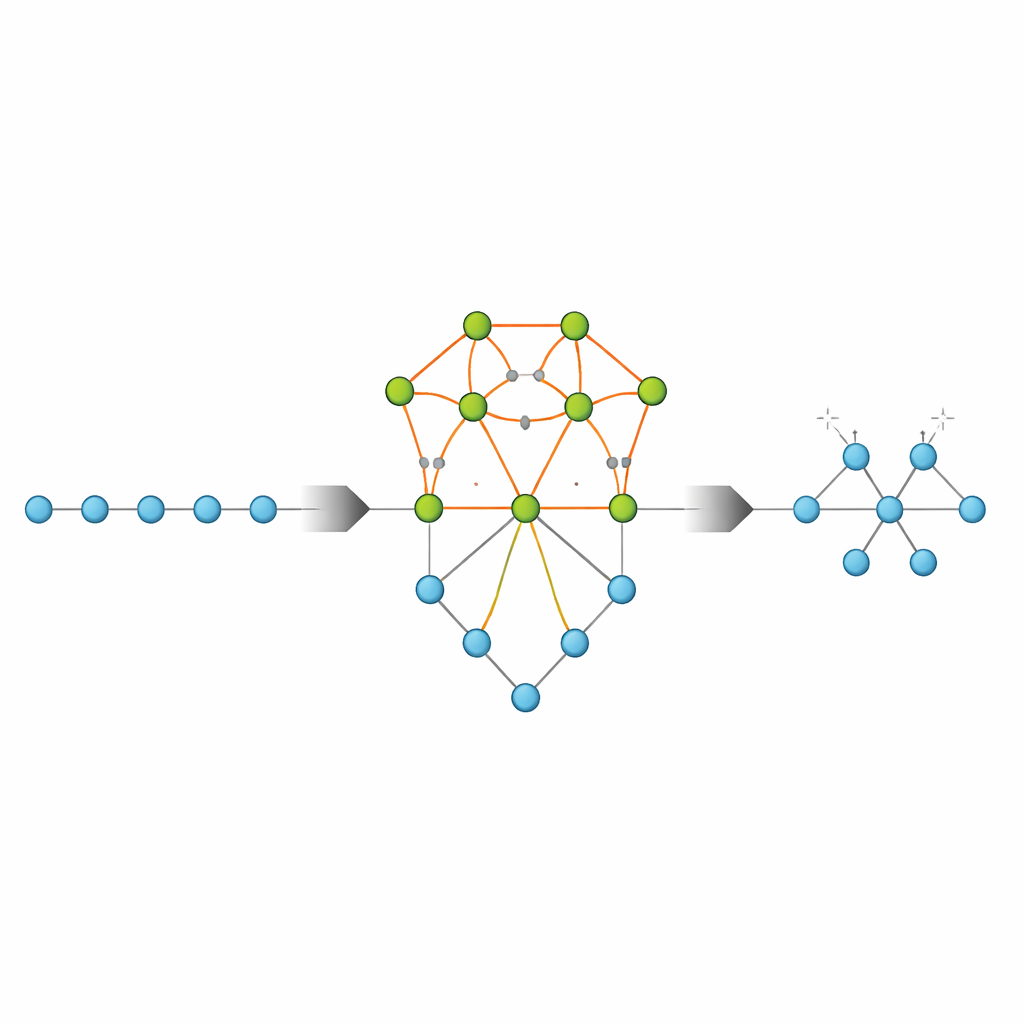

Turning a Global Question into Local Checks

The core of the method is to attach an auxiliary graph to the group of qubits involved in the logical operator. In the basic construction, each vertex of the graph corresponds to one of those data qubits, and each edge hosts a new auxiliary qubit. Simple, local tests are then performed, each involving one data qubit and the edge qubits that meet at its vertex. These tests enforce rules reminiscent of Gauss’s law in electromagnetism, tying together the behavior of qubits around each vertex. Although each individual outcome looks random, the product of all the outcomes encodes the value of the original logical operator, because every edge qubit enters exactly two tests and so cancels out of the product. After this “gauging measurement,” the auxiliary qubits are themselves removed by further measurements, a process called “ungauging,” which returns the system to a form equivalent to the original code.
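The way local tests combine into one global answer can be checked with a small classical sketch. It assumes the X-type form of the tests, in which the test at a vertex acts on that vertex's data qubit and on every edge qubit touching it. The example graph, the function names, and the random-sign toy model of measurement outcomes are illustrative assumptions, not the authors' implementation.

```python
import math
import random

def gauss_check_supports(vertices, edges):
    """Support of each vertex test: its data qubit plus all incident edge qubits.

    Qubits are labelled ("v", vertex) for data and ("e", edge) for auxiliary.
    """
    supports = {}
    for v in vertices:
        support = {("v", v)}
        for e in edges:
            if v in e:
                support.add(("e", e))
        supports[v] = support
    return supports

def product_support(supports):
    """Multiply X-type operators: a qubit hit an even number of times drops out."""
    total = set()
    for s in supports:
        total ^= s  # symmetric difference = addition mod 2
    return total

def sample_outcomes(data_signs, edges, rng):
    """Toy model (assumption): each edge contributes a hidden random sign,
    so every individual outcome looks random while the product stays fixed."""
    edge_signs = {e: rng.choice([1, -1]) for e in edges}
    outcomes = {}
    for v, sign in data_signs.items():
        for e in edges:
            if v in e:
                sign *= edge_signs[e]
        outcomes[v] = sign
    return outcomes

# Hypothetical example: a logical operator on 4 data qubits, graph with 5 edges.
vertices = [0, 1, 2, 3]
edges = [(0, 1), (1, 2), (2, 3), (3, 0), (0, 2)]

supports = gauss_check_supports(vertices, edges)
logical_support = product_support(supports.values())

data_signs = {0: 1, 1: -1, 2: -1, 3: -1}  # assumed X eigenvalues of the data qubits
outcomes = sample_outcomes(data_signs, edges, random.Random(7))
logical_value = math.prod(outcomes.values())
```

Here `logical_support` contains only the four data qubits, because each edge qubit appears in exactly two tests. Likewise `logical_value` equals the product of the assumed data signs regardless of the random seed.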

Designing Efficient Auxiliary Networks

Not every auxiliary graph works equally well. To keep error rates low and overhead modest, the network must remain sparse, retain good protective distance, and avoid creating overly complicated loops. The authors identify clear design rules that guarantee these properties and show how to systematically construct suitable graphs for any logical operator. Using tools from modern graph theory and topology, including so‑called expander graphs and a process called decongestion, they prove that the number of extra qubits needed scales essentially linearly with how many qubits the logical operator touches, up to modest polylogarithmic factors. This is a dramatic improvement over earlier approaches, where overhead could grow faster than the size of the encoded system itself.
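To make the sparsity requirement concrete, the following sketch counts the edge qubits and independent loops of a simple bounded-degree graph. The graph family (a ring with chords across it) and all function names are hypothetical choices for illustration. It is not an expander and not the authors' construction, and it ignores the decongestion step that gives rise to the polylogarithmic factors.

```python
from collections import Counter

def ring_with_chords(w):
    """Hypothetical example graph: a ring on w vertices plus chords across it.

    Requires even w >= 4 so that no chord duplicates a ring edge.
    """
    if w < 4 or w % 2:
        raise ValueError("w must be even and at least 4")
    ring = {frozenset((i, (i + 1) % w)) for i in range(w)}
    chords = {frozenset((i, i + w // 2)) for i in range(w // 2)}
    return ring | chords

def max_degree(edges):
    """Largest number of edges meeting at any single vertex."""
    degree = Counter(v for e in edges for v in e)
    return max(degree.values())

def cycle_rank(n_vertices, edges, n_components=1):
    """Number of independent loops of a graph: E - V + (connected components)."""
    return len(edges) - n_vertices + n_components

for w in (8, 16, 32):
    g = ring_with_chords(w)
    # One auxiliary qubit per edge: the count grows in proportion to w.
    print(w, len(g), max_degree(g), cycle_rank(w, g))
```

In this family every vertex has degree 3, so the number of edge qubits is 3w/2 and the number of independent loops is w/2 + 1. Both grow linearly with the number w of qubits the logical operator touches.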

Fault Tolerance in Space and Time

Real devices suffer not only from qubit errors but also from faulty measurements. The authors analyze how their gauging procedure behaves under such imperfections by viewing the entire sequence of space- and time-local operations as a larger “spacetime code.” They show that, provided the auxiliary graph is chosen according to their rules and the relevant checks are repeated enough times, the overall scheme retains the same level of protection as the original qLDPC code. In other words, the logical measurement itself becomes fault tolerant, resisting both physical errors on qubits and misreported outcomes, without dramatically increasing the number of qubits or the duration of the protocol.

What This Means for Future Quantum Machines

For a non-specialist, the key message is that the authors have found a way to ask a global question about many qubits, a step that is crucial for running quantum algorithms, by cleverly arranging a series of local, easily implemented checks that do not overload the hardware. Their framework unifies and generalizes several earlier “code surgery” techniques and offers a clear recipe for extending them to new families of quantum codes. If refined and integrated with practical decoders, this gauging-based approach could become a standard building block for large-scale, error-resilient quantum processors, allowing powerful computations to be carried out with hardware resources that are realistically within reach.

Citation: Williamson, D.J., Yoder, T.J. Low-overhead fault-tolerant quantum computation by gauging logical operators. Nat. Phys. 22, 598–603 (2026). https://doi.org/10.1038/s41567-026-03220-8

Keywords: fault-tolerant quantum computation, quantum error correction, quantum LDPC codes, logical measurements, code surgery