Clear Sky Science

Comprehensive benchmarking of metagenomic binning tools reveals key factors for improved genome recovery

Why tiny neighbors in your gut deserve a closer look

The microbes that live in our guts, soils, and oceans quietly shape our health, food systems, and climate. Yet most of them cannot be grown in the lab, so scientists rely on powerful DNA sequencing to peek into these hidden worlds. This study asks a deceptively simple question with big consequences: when we turn raw DNA data into draft genomes of microbes, which computer tools work best, and under what conditions do they succeed or fail?

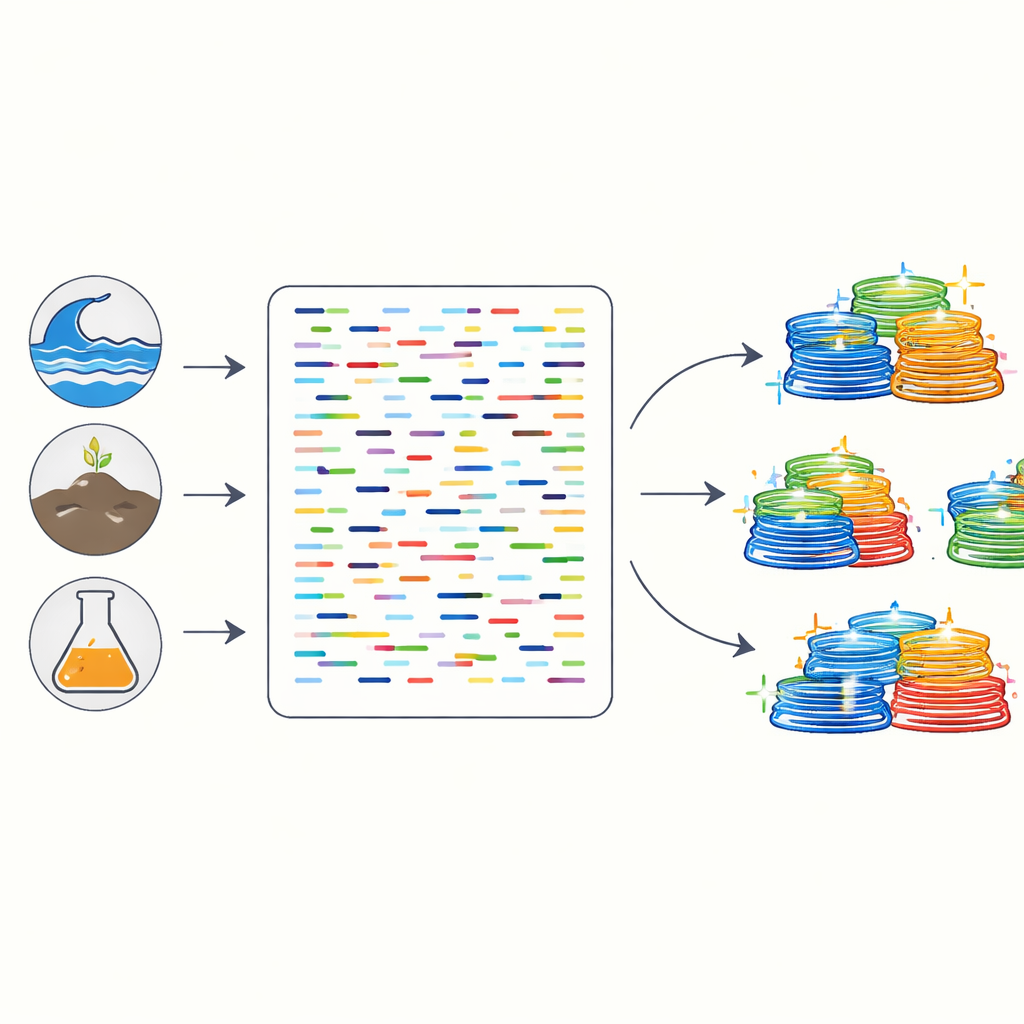

Piecing together genomes from a genetic jigsaw

Modern sequencers turn a scoop of soil or a stool sample into billions of short DNA fragments mixed from hundreds or thousands of species. Researchers first stitch these pieces into longer stretches called contigs, then use “binning” tools to group contigs that likely come from the same microbe, forming what are known as metagenome-assembled genomes. Many different binning programs exist, built on distinct mathematical and machine-learning ideas. The authors systematically compared nine popular tools, plus three methods that refine and combine their output, using a mix of simulated communities and real DNA data from human gut, ocean, and soil samples.
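To make the idea of grouping contigs concrete, here is a minimal sketch of one signal that binning tools commonly draw on: the short-word (k-mer) composition of each contig. It is a toy illustration, not the algorithm of any tool in the study. The contig names and sequences are invented, and `k=2` is used only because the example sequences are tiny; real tools typically use longer words and combine composition with read coverage.

```python
from collections import Counter
from itertools import product
from math import sqrt


def kmer_profile(seq, k=2):
    """Return normalized k-mer frequencies in a fixed A/C/G/T word order."""
    seq = seq.upper()
    kmers = ["".join(p) for p in product("ACGT", repeat=k)]
    counts = Counter(seq[i:i + k] for i in range(len(seq) - k + 1))
    total = sum(counts[m] for m in kmers)
    if total == 0:
        return [0.0] * len(kmers)
    return [counts[m] / total for m in kmers]


def cosine(a, b):
    """Cosine similarity between two equal-length frequency vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical contigs: two AT-rich, one GC-rich.
contigs = {
    "contig_a": "ATATTATAATATTAAT",
    "contig_b": "TATAATTATATAATAT",
    "contig_c": "GCGGCCGCGCCGGCGC",
}
profiles = {name: kmer_profile(seq) for name, seq in contigs.items()}

sim_ab = cosine(profiles["contig_a"], profiles["contig_b"])
sim_ac = cosine(profiles["contig_a"], profiles["contig_c"])
print(f"a vs b: {sim_ab:.2f}, a vs c: {sim_ac:.2f}")
```

Contigs with similar composition score close to 1 and are candidates for the same bin, while contigs with no words in common score 0.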

How community complexity and sequencing depth tip the scales

The team found that two basic features of a dataset strongly shape binning success: how many species are present and how deeply the sample is sequenced. When communities contained only a few dozen species, most tools did reasonably well. But as the number of species climbed into the hundreds or thousands—levels closer to real gut or soil microbiomes—many older methods faltered, failing to recover complete genomes. More sequencing always helped, especially above about 7 gigabases per sample, but could not fully rescue tools that were not designed for high complexity. In contrast, a newer generation of neural network–based binning programs maintained high performance in these crowded communities, particularly when plenty of sequencing data were available.

Newer smart algorithms and the hidden problem of chimeras

A standout finding is that neural network tools such as COMEBin, SemiBin2, and VAMB (especially when they use information from multiple samples at once) consistently recovered more high-quality genomes than traditional approaches. However, the authors also looked beyond simple counts and asked how many reconstructed genomes were “chimeric”—artificial hybrids mistakenly built from pieces of different species. Using a specialized check for this kind of contamination, they showed that chimeric rates varied widely between tools. Some methods that looked strong by standard measures turned out to produce many hybrid genomes, while others, including certain neural network tools, kept chimeras relatively low. This highlights that quality checks must go beyond simple completeness and error rates.
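The gap between "looks good by standard measures" and "is actually a clean genome" can be sketched as a simple filter. The example below assumes the widely used cutoffs of more than 90% completeness and less than 5% contamination for a high-quality genome; this summary does not state the thresholds the authors applied, so treat them as placeholders. The `chimeric` flag is assumed to come from a separate chimera check, and all bin names and values are invented.

```python
from dataclasses import dataclass


@dataclass
class GenomeBin:
    name: str
    completeness: float   # percent, from a standard quality estimator
    contamination: float  # percent, from the same estimator
    chimeric: bool        # assumed output of a separate chimera check


def is_high_quality(b, min_completeness=90.0, max_contamination=5.0):
    """Apply assumed completeness/contamination cutoffs to one bin."""
    return b.completeness > min_completeness and b.contamination < max_contamination


def summarize(bins):
    """Count bins that pass the standard cutoffs, with and without chimeras."""
    high_quality = [b for b in bins if is_high_quality(b)]
    clean = [b for b in high_quality if not b.chimeric]
    return {
        "total": len(bins),
        "high_quality": len(high_quality),
        "high_quality_non_chimeric": len(clean),
    }


# Hypothetical results from one binning tool.
example_bins = [
    GenomeBin("bin_1", 95.0, 1.0, False),
    GenomeBin("bin_2", 92.0, 3.0, True),   # passes cutoffs but is a hybrid
    GenomeBin("bin_3", 70.0, 2.0, False),  # too incomplete
    GenomeBin("bin_4", 98.0, 8.0, False),  # too contaminated
]
summary = summarize(example_bins)
print(summary)
```

Comparing the last two counts across tools shows how much of a tool's apparent yield survives the chimera check.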

Why many samples and paired reads matter

The study also tackled two practical design choices for microbiome projects: how many samples to group when doing “multi-sample” binning, and whether to use cheaper single-end sequencing or more informative paired-end reads. For tools that can learn from coverage patterns across multiple samples, performance improved as more samples were added, but only up to around 20. Smaller groups gave little benefit, and much larger groups could even hurt results or waste computing power. Separately, the authors showed that datasets sequenced with single-end reads consistently yielded poorer assemblies and far fewer good genomes than paired-end data, even when the total amount of DNA sequenced was similar, because the missing pairing information leads to more fragmented contigs.
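For a project with many samples, one way to act on the "around 20" guideline is to split the samples into evenly sized groups before multi-sample binning. The helper below is a hypothetical sketch of that bookkeeping only. The function name and the even-split rule are assumptions, and in practice groups should hold related samples (same site, host, or time series) rather than arbitrary slices of a list.

```python
def batch_samples(sample_ids, target_size=20):
    """Split sample IDs into evenly sized batches close to target_size."""
    n = len(sample_ids)
    if n == 0:
        return []
    # Choose the batch count that brings the average batch size nearest the target.
    n_batches = max(1, round(n / target_size))
    base, extra = divmod(n, n_batches)
    batches, start = [], 0
    for i in range(n_batches):
        size = base + (1 if i < extra else 0)  # spread the remainder evenly
        batches.append(sample_ids[start:start + size])
        start += size
    return batches


# Hypothetical project with 65 samples.
samples = [f"sample_{i:03d}" for i in range(65)]
batch_sizes = [len(b) for b in batch_samples(samples)]
print(batch_sizes)
```

An even split avoids leaving a small leftover group that would gain little from multi-sample coverage information.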

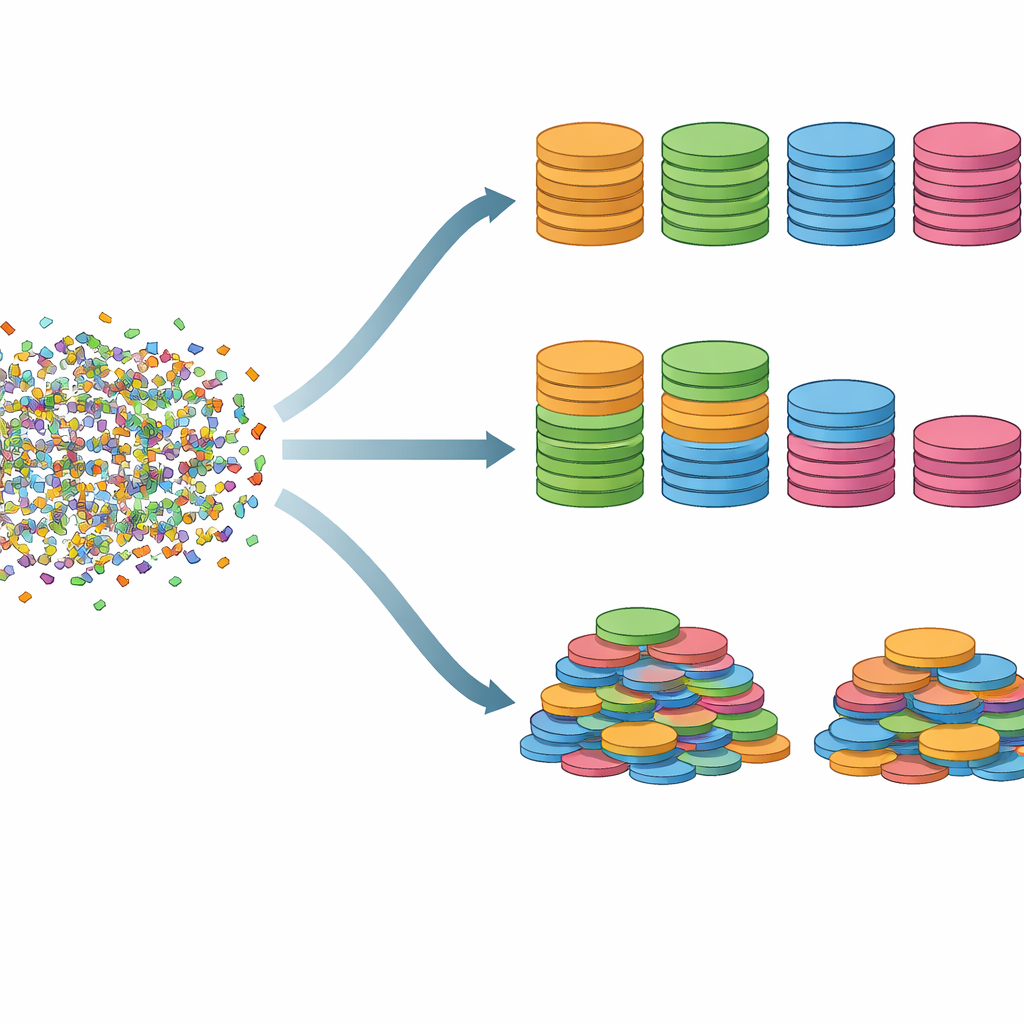

Combining tools to build better microbial catalogs

Because different programs tend to excel on different microbes, the authors tested whether an ensemble approach could do better than any single tool. By integrating genome bins from three top-performing neural network methods and then refining them with a careful post-processing step, they recovered over 30% more high-quality genomes than widely used older pipelines that combine traditional binning tools. These extra genomes were not just more of the same: they expanded the tree of life represented in the data and included more hard-to-capture regions such as 16S ribosomal RNA genes, which are important for naming and placing microbes on the microbial family tree.

What this means for future microbiome studies

For non-specialists, the core message is straightforward: the way we turn raw DNA reads into draft genomes greatly affects what we think lives in a given environment. This benchmarking work shows that deeper sequencing, paired-end reads, careful use of about 20 related samples, and modern neural network–based binning tools—ideally combined in an ensemble strategy—can greatly boost both the number and the reliability of recovered microbial genomes. In turn, that means more accurate maps of the invisible communities shaping our bodies and planet, and a stronger foundation for future discoveries in medicine, ecology, and biotechnology.

Citation: Kim, J., Kim, N., Cha, J.H. et al. Comprehensive benchmarking of metagenomic binning tools reveals key factors for improved genome recovery. Nat Commun 17, 3467 (2026). https://doi.org/10.1038/s41467-026-71521-w

Keywords: metagenomics, microbiome, genome reconstruction, machine learning tools, benchmarking study