Clear Sky Science

Evolution of object identity information in sensorimotor cortex throughout grasp

How the Brain Knows What We’re Holding

Every time you pick up a coffee mug in the dark or fish your phone out of your pocket without looking, your brain somehow knows what you’re grabbing. Yet the signals rushing in from your hand and arm change dramatically the instant you touch the object. This study asks a simple but deep question: how does the brain keep track of what object is in your hand as you move from reaching toward it to actually holding it?

From Reaching to Holding

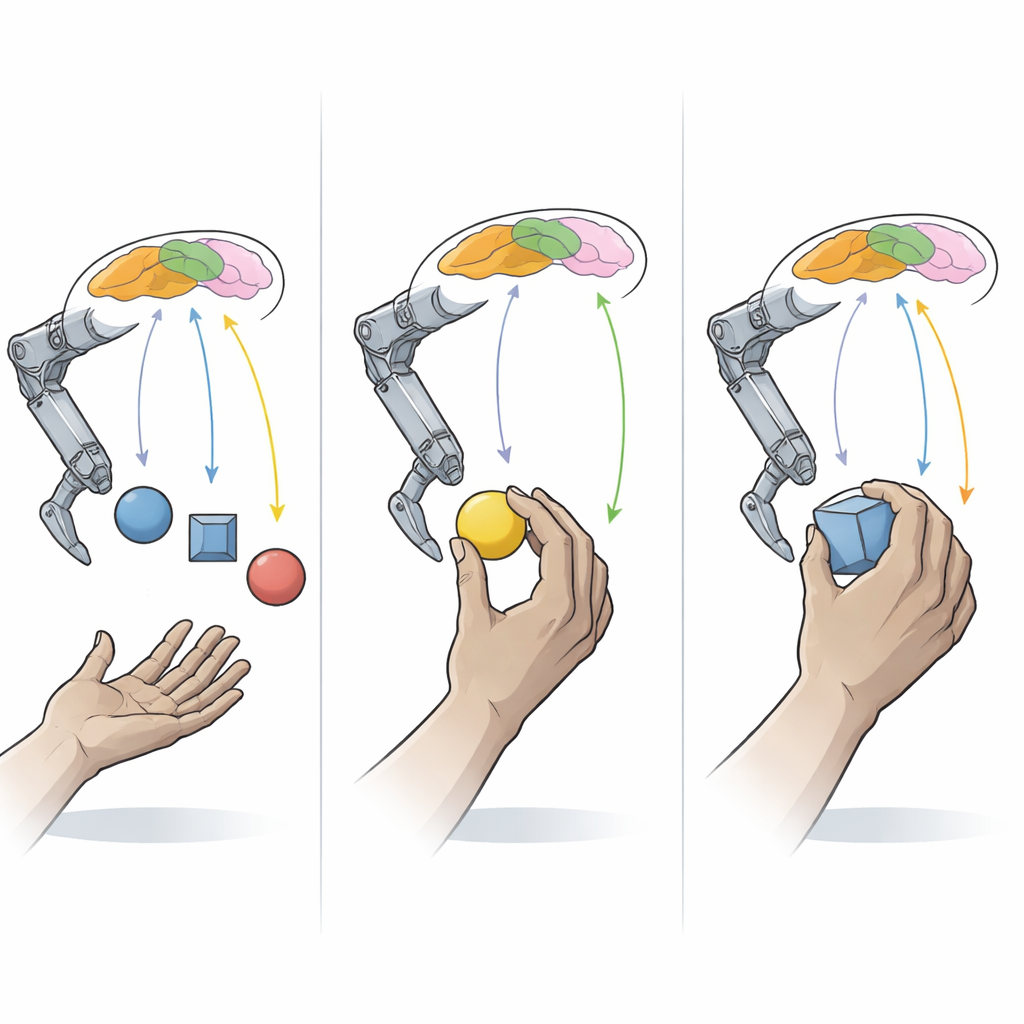

To explore this, researchers worked with macaque monkeys trained to grasp a set of everyday-shaped objects that varied in size, shape, and orientation. A robotic arm brought one object at a time directly to the monkey’s hand so that the arm and shoulder could stay almost still. Before contact, the hand naturally opened and shaped itself to match the object; after contact, the fingers closed with enough force to break a magnetic link and hold the object. Throughout this behavior, the team recorded electrical activity from hundreds of individual brain cells in several brain areas involved in hand movement and touch.

Different Brain Areas, Different Moments

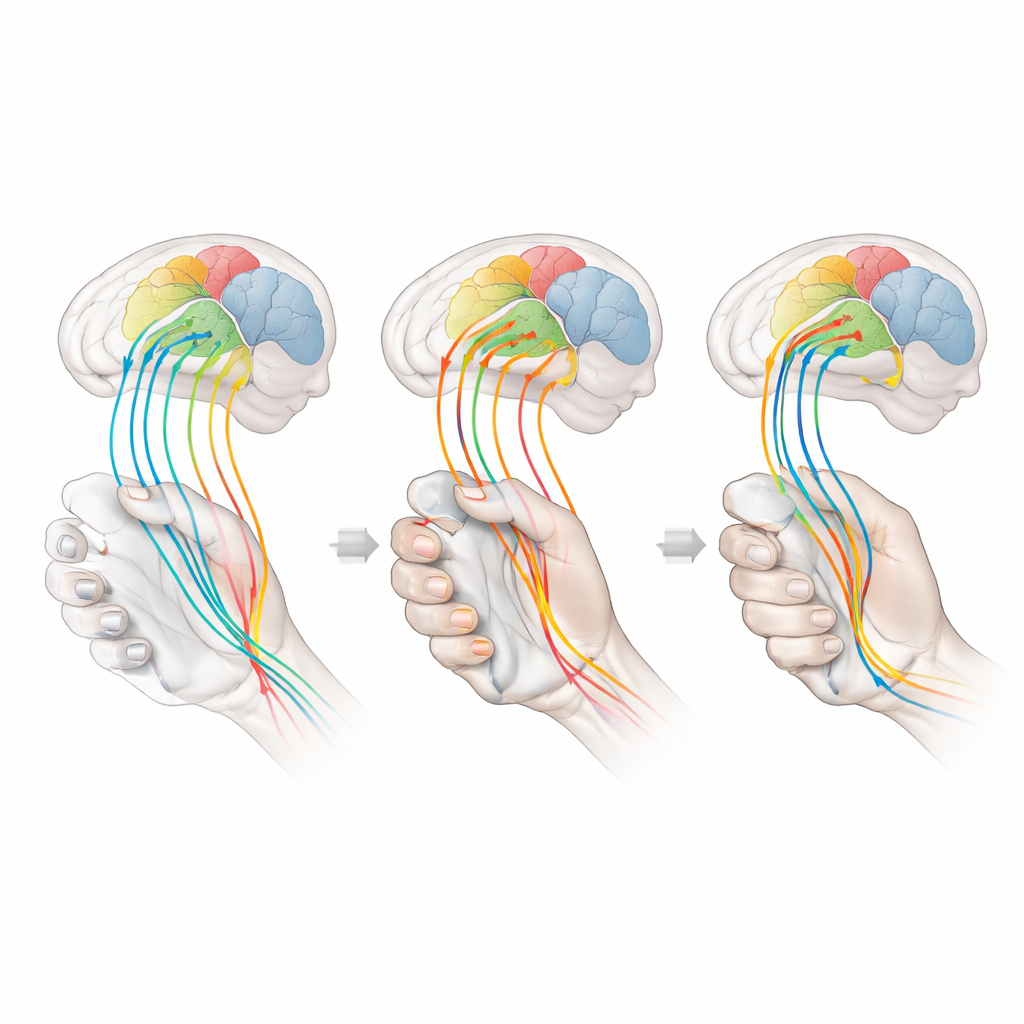

The recordings came from four neighboring regions along the central groove of the brain. One is the primary motor cortex, which helps drive muscle activity. Three lie in the primary touch area: one that mainly receives signals from muscles and tendons about joint angles, another that receives signals from the skin, and a third that combines both types of input. Before the hand made contact, brain cells in the motor and muscle-sensing regions were most active and already carried information that distinguished which object was about to be grasped. In contrast, the skin-focused regions were relatively quiet and carried little or no information about object identity during this “pre-shaping” phase.

What Changes at the Moment of Touch

When the fingers met the object, the pattern flipped in an unexpected way. Overall firing dropped in many regions after contact, even though the monkeys were still squeezing the objects. Yet the amount of object-specific information actually increased in the skin-based touch areas and the combined-input region, and stayed high in motor and muscle-sensing cortex. In other words, fewer electrical spikes carried more meaningful information. Analyses that measured how much object information each neuron conveyed relative to how much it fired showed that object identity information consolidated around the moment of contact and then remained stable, even as raw activity levels declined.

Shifting Codes Rather Than Static Maps

A key insight came from comparing how activity patterns before and after contact related to each other. If the brain used the same “code” for object identity across the whole movement, then a decoder (a computer algorithm trained to identify the object from neural activity) built on pre-contact activity should also work on post-contact activity, and vice versa. Instead, these cross-epoch decoders, trained on one phase and tested on the other, performed poorly in every region, especially in the skin-based touch areas and the combined-input area. Only when the decoder was trained on data from both phases could it recover a unified, though still imperfect, readout of hand posture and object identity. This shows that, even where information about what is being grasped is present both before and after contact, the way it is represented in brain activity changes sharply when the hand begins to feel and squeeze the object.

Why This Matters for Hands and Machines

These results paint a picture of the sensorimotor cortex as a flexible communication hub rather than a static map. Before contact, motor and muscle-sensing regions primarily reflect how the hand is shaped and moving, allowing the brain to “guess” the object from posture alone. After contact, touch-sensitive regions suddenly become rich in information about which surfaces of the hand are loaded and how the object presses on the skin, while motor and muscle regions blend posture with the forces needed to hold the object. The takeaway is that your brain does not store a single fixed fingerprint of each object. Instead, it constantly rewrites its internal description as your fingers close in and make contact, weaving together movement and touch so seamlessly that you simply experience the feeling of having a solid object in your grasp.

Citation: Yan, Y., Sobinov, A.R., Goodman, J.M. et al. Evolution of object identity information in sensorimotor cortex throughout grasp. Nat Commun 17, 2784 (2026). https://doi.org/10.1038/s41467-026-69502-0

Keywords: grasping, sensorimotor cortex, touch and proprioception, object recognition by hand, neural coding