Clear Sky Science

Readability assessment of English translations of Chinese classics: a study based on XGBoost and BP neural networks

Why ancient wisdom still needs clear English

Confucius’s Analects has shaped Chinese thought for more than two millennia, yet many English readers still find it hard to follow. Different translations try to stay true to the original while also being readable, but it is not obvious which versions are easier for today’s audiences to understand. This article uses modern language technology and machine learning to measure how readable several English translations of The Analects are, offering a data-driven way to think about how classic works travel across languages and cultures.

Many voices for one classic book

The study focuses on five complete English translations of The Analects, produced between the nineteenth and twenty-first centuries by James Legge, William Jennings, D. C. Lau, Edward Slingerland, and Burton Watson. All five translators worked from the same Classical Chinese original, but they made different stylistic and interpretive choices. To compare them fairly, the authors broke each translation into 1,412 short lines that roughly match the traditional division of sayings in the Chinese text. Three translations (those by Legge, Jennings, and Lau) were used to train the models, and two (those by Slingerland and Watson) were held back to test how well those models could judge new passages.

Turning sentences into measurable signals

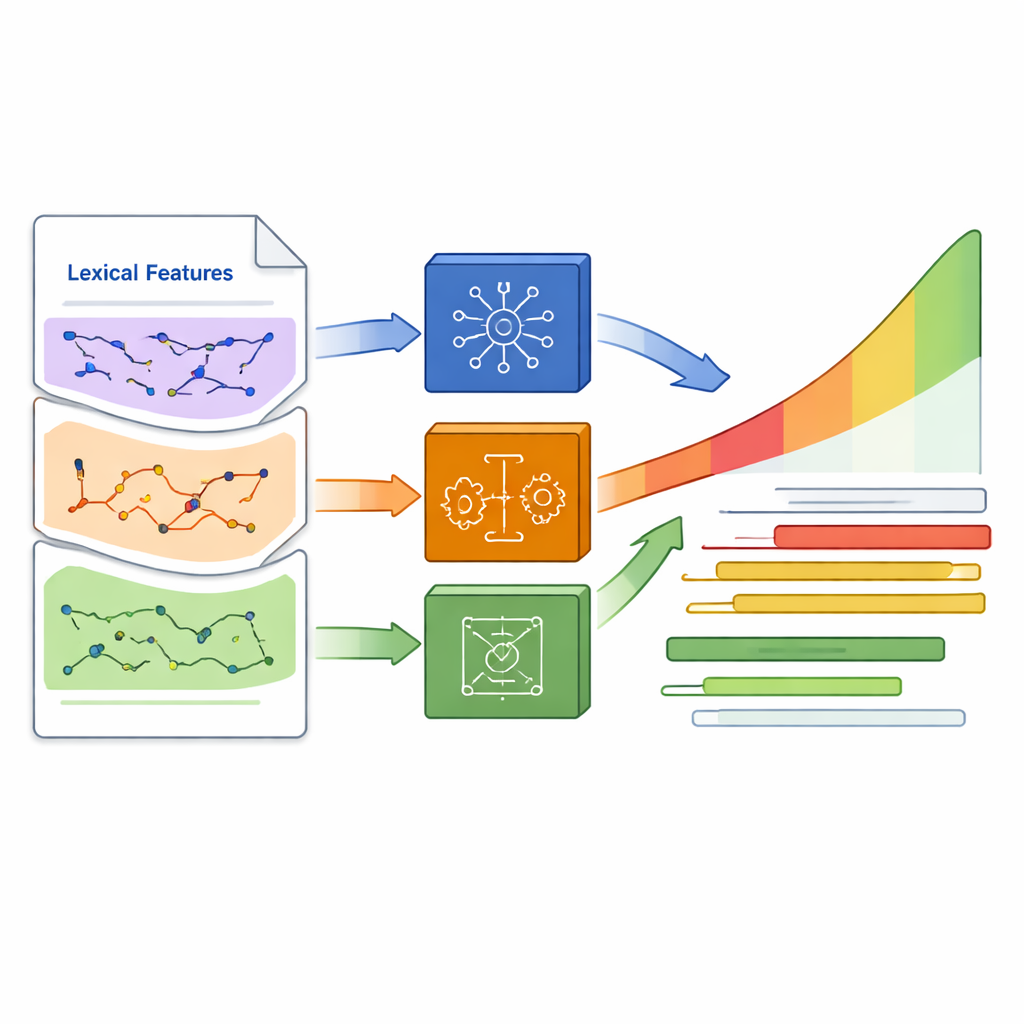

Instead of relying on a single familiar formula like Flesch Reading Ease, the researchers built a much richer set of 114 indicators for every line in the corpus. Some were traditional readability formulas that look at basic traits such as sentence length and average word length. Others captured vocabulary features like how many long or rare words appear, how varied the word choices are, and how dense the information is. A third group described sentence structure, for instance how many clauses a sentence contains or how often certain grammatical patterns occur. Finally, they added a modern twist: a pretrained language model (BERT) estimated how semantically “typical” each line is compared with the rest of the corpus, providing a compact index of meaning-level coherence.
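To make the idea of per-line indicators concrete, here is a minimal Python sketch that computes a handful of surface features for one line of text. It is an illustration only, not the authors' feature extractor: the function names, the seven-letter threshold for a "long" word, and the vowel-group syllable heuristic are all assumptions made for this example. The Flesch Reading Ease formula itself is the standard published one.

```python
import re

def count_syllables(word: str) -> int:
    """Rough heuristic (an assumption): count vowel groups, drop a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)

def line_features(text: str, long_word_len: int = 7) -> dict:
    """Return a few illustrative surface indicators for one line of text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    n_sent = max(len(sentences), 1)   # guard against division by zero
    n_words = max(len(words), 1)
    syllables = sum(count_syllables(w) for w in words)
    return {
        "n_words": len(words),
        "words_per_sentence": len(words) / n_sent,
        "avg_word_length": sum(len(w) for w in words) / n_words,
        # Lexical variety: distinct words divided by total words.
        "type_token_ratio": len({w.lower() for w in words}) / n_words,
        # Share of words at or above the (hypothetical) length threshold.
        "long_word_share": sum(len(w) >= long_word_len for w in words) / n_words,
        # Standard Flesch Reading Ease; higher values mean easier text.
        "flesch_reading_ease": 206.835
        - 1.015 * (len(words) / n_sent)
        - 84.6 * (syllables / n_words),
    }

# Made-up sample text, not taken from any of the five translations.
sample = "The cat sat. The dog ran."
features = line_features(sample)
for name, value in features.items():
    print(f"{name}: {value:.3f}")
```

A full pipeline of the kind described would add many more indicators, including syntactic counts from a parser and an embedding-based typicality score, which are beyond what a few lines of standard-library code can show.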

Teaching machines to sense difficulty

Using these indicators, the authors trained two machine learning models, an XGBoost model and a simple backpropagation neural network, to predict a composite readability score for each line. Those scores were based on the combined output of nine traditional formulas, giving the models a stable target to learn from. Before training, the authors examined how strongly each indicator correlated with the scores. Lines packed with long, multi-syllable, or technically difficult words tended to be rated harder, as did lines with more total characters and more complex sentence structures. In contrast, some fine-grained grammatical counts played only a modest role. Both models predicted the scores of held-out data extremely well, suggesting that this blend of features captures much of what makes a passage from The Analects easy or hard to read.
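The screening step, checking how strongly each indicator tracks the composite score, can be sketched in a few lines of Python. The summary does not say how the nine formulas were combined, so the approach below (z-score each formula across lines, flip the sign of formulas where higher means easier, then average) is an assumption chosen for illustration. The function names and the toy numbers are likewise invented; only two formulas and four lines are used to keep the example small.

```python
from statistics import mean, pstdev

def zscores(values):
    """Standardise a list of numbers to mean 0 and unit (population) deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma if sigma else 0.0 for v in values]

def composite_scores(formula_outputs, harder_when_higher):
    """Average z-scored formulas so that a higher composite means harder text.

    Assumed combination rule, not taken from the paper.
    """
    columns = []
    for name, values in formula_outputs.items():
        sign = 1.0 if harder_when_higher[name] else -1.0
        columns.append([sign * z for z in zscores(values)])
    return [mean(row) for row in zip(*columns)]

def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either input is constant."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5 if var_x and var_y else 0.0

# Invented toy values for four lines of text.
formula_outputs = {
    "flesch_reading_ease": [90.0, 70.0, 50.0, 30.0],  # higher = easier
    "grade_level": [2.0, 6.0, 10.0, 14.0],            # higher = harder
}
harder_when_higher = {"flesch_reading_ease": False, "grade_level": True}
indicators = {
    "avg_word_length": [3.8, 4.2, 4.9, 5.5],
    "n_commas": [1, 2, 2, 1],
}

target = composite_scores(formula_outputs, harder_when_higher)
correlations = {name: pearson(vals, target) for name, vals in indicators.items()}
for name, r in correlations.items():
    print(f"{name}: r = {r:+.3f}")
```

In this toy data the word-length indicator rises with the composite score while the comma count does not, which mirrors the qualitative pattern reported: vocabulary and length features matter a lot, some fine-grained counts much less. The composite would then serve as the regression target for whichever model is trained.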

Comparing translators at a glance and up close

Once trained, the models were turned loose on the two test translations by Slingerland and Watson. On a broad level, the researchers grouped predicted scores into bands from easiest to hardest and counted how many lines from each translation fell into each band. Watson’s rendering came out slightly easier overall: more of his lines landed in the high-readability bands, while Slingerland’s used longer sentences and more elaborate wording more often. At a finer level, the team looked at individual sayings where the two translators diverged sharply. In these cases, harder lines typically combined several factors—longer sentences, nested clauses, abstract or rare vocabulary, and dense commentary packed into a single line—while easier lines tended to favor shorter, more direct phrasing and simpler word choices.
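Both views of the comparison, the band counts and the sharply diverging lines, are simple to express in code. The Python sketch below is a hedged illustration: the cut points, the band names, the neutral labels `translation_A` and `translation_B`, and every score are invented for the example and do not reproduce the study's bands or results. Scores are assumed to be oriented so that higher means harder.

```python
from bisect import bisect_right
from collections import Counter

BAND_EDGES = [-0.5, 0.5]                 # hypothetical cut points
BAND_NAMES = ["easy", "medium", "hard"]  # one more name than edges

def band_of(score: float) -> str:
    """Map a predicted difficulty score to its band."""
    return BAND_NAMES[bisect_right(BAND_EDGES, score)]

def band_counts(predictions: dict) -> dict:
    """Count how many lines of each translation fall into each band."""
    return {
        name: Counter(band_of(s) for s in scores)
        for name, scores in predictions.items()
    }

def largest_gaps(scores_a, scores_b, k: int = 2) -> list:
    """Indices of the k aligned lines whose predicted scores differ most."""
    gaps = [(abs(a - b), i) for i, (a, b) in enumerate(zip(scores_a, scores_b))]
    return [i for _, i in sorted(gaps, reverse=True)[:k]]

# Invented predicted scores for four aligned lines in two translations.
predictions = {
    "translation_A": [-1.2, -0.1, 0.7, 0.2],
    "translation_B": [-0.9, -0.8, 0.1, 1.4],
}

counts = band_counts(predictions)
for name, counter in counts.items():
    print(name, {band: counter[band] for band in BAND_NAMES})

divergent = largest_gaps(predictions["translation_A"], predictions["translation_B"])
print("lines to inspect up close:", divergent)
```

Because the lines of each translation are aligned to the same sayings, the indices returned by `largest_gaps` point to passages where a close reading of both renderings side by side is most likely to be informative.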

What the findings mean for readers and translators

For non-specialist readers who want to approach Confucius in English, the study suggests that some translations offer a smoother path than others, at least in terms of raw reading effort. For translators and scholars, it shows how quantitative tools can complement traditional close reading by making patterns of difficulty visible across thousands of lines. The authors stress that readability is only one aspect of a good translation; faithfulness to the original meaning and literary style also matter. Still, by revealing how sentence length, structure, and word choice shape the experience of reading The Analects in English, this work points toward more accessible editions of Chinese classics and, ultimately, toward clearer cross-cultural conversations.

Citation: Yang, L., Zhou, G. Readability assessment of English translations of Chinese classics: a study based on XGBoost and BP neural networks. Humanit Soc Sci Commun 13, 588 (2026). https://doi.org/10.1057/s41599-026-06878-w

Keywords: text readability, machine learning, Confucius Analects, literary translation, natural language processing