Clear Sky Science

Distributional reservoir state analysis for real-time anomaly detection in multivariate time series data

Why spotting odd behavior in data matters

From keeping spacecraft healthy to catching cyberattacks and equipment failures, our world quietly depends on computers that watch streams of numbers and cry out when something looks wrong. These time-stamped measurements, known as time series, can change quickly, and problems may last only seconds before causing lasting damage. The challenge is building detectors that learn fast, run on ordinary hardware, and still react almost instantly when something unusual starts, or stops, happening. This paper introduces a new method, called MD-RS, that aims to become a practical workhorse for such real-time anomaly detection.

A faster way to listen to data streams

Many existing tools scan data using a moving window: they look at the last chunk of points, treat them all equally, and decide whether that window is normal or suspicious. This simple idea breaks down in practice. If the window is long, the detector reacts late when trouble starts and keeps shouting long after the problem is gone. If the window is short, it reacts quickly but struggles with patterns that unfold slowly, such as gradual drifts or changes in rhythm. Deep learning methods, such as modern transformer networks, can model richer patterns but often require long training times on powerful graphics cards, making them hard to update on the fly when a system’s behavior changes.
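The window trade-off can be made concrete with a toy detector. This is a minimal sketch, not any of the baselines the paper tested: `window_scores` and `alarm_span` are hypothetical helpers, and the score (how far the mean of the last `w` points sits from a known normal mean) is an assumption chosen for simplicity. A 10-step anomaly is scored with a short and a long window.

```python
import numpy as np

def window_scores(x, w, mu, sigma):
    """Hypothetical window detector: score each step by how far the mean of the
    last w points lies from the normal mean mu, in units of the normal std sigma."""
    scores = np.zeros(len(x))
    for t in range(len(x)):
        window = x[max(0, t - w + 1): t + 1]  # every point in the window counts equally
        scores[t] = abs(window.mean() - mu) / sigma
    return scores

def alarm_span(scores, threshold):
    """Hypothetical helper: first and last steps at which the score exceeds the threshold."""
    above = np.flatnonzero(scores > threshold)
    return int(above[0]), int(above[-1])

x = np.zeros(300)
x[100:110] = 5.0  # a 10-step anomaly at t = 100..109

for w in (5, 50):
    print(w, alarm_span(window_scores(x, w, mu=0.0, sigma=1.0), threshold=0.5))
# 5 (100, 113): alarm starts on time and clears 4 steps after the anomaly ends
# 50 (105, 153): alarm starts 5 steps late and lingers 44 steps after it ends
```

The long window averages the anomaly away at first and then keeps remembering it, which is exactly the late-start, late-release behavior described above.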

A dynamic memory instead of a rigid window

The MD-RS method replaces rigid windows with a dynamic, brain-inspired memory known as a reservoir. Imagine feeding a stream of measurements into a fixed web of simple, randomly interconnected units. As new values arrive, this web stirs and settles into a shifting pattern of activity that naturally remembers recent events while gradually forgetting the distant past. Because the internal connections never change, only a small part of the model needs to be trained, which keeps learning fast even on standard computers. This moving “echo” of the data provides a rich summary of recent history without requiring a fixed window length to be chosen by hand.
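The reservoir idea can be sketched with a leaky echo state network, one common form of reservoir. This is a minimal sketch under stated assumptions, not the paper's exact model: the reservoir size of 100, the spectral radius of 0.9, the leak rate of 0.3, and the helper names `make_reservoir` and `run_reservoir` are hypothetical choices. The weights are drawn once at random and never updated, and a single input pulse leaves an echo in the state that fades over time.

```python
import numpy as np

def make_reservoir(n_inputs, n_units=100, spectral_radius=0.9, seed=0):
    """Hypothetical helper: draw fixed random input and recurrent weights (never trained)."""
    rng = np.random.default_rng(seed)
    w_in = rng.uniform(-1.0, 1.0, size=(n_units, n_inputs))
    w = rng.normal(0.0, 1.0, size=(n_units, n_units))
    # Rescale so the largest eigenvalue magnitude equals spectral_radius (< 1 lets old inputs fade)
    w *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w)))
    return w_in, w

def run_reservoir(u, w_in, w, leak=0.3):
    """Hypothetical helper: x_t = (1 - leak) * x_{t-1} + leak * tanh(W_in u_t + W x_{t-1})."""
    x = np.zeros(w.shape[0])
    states = np.empty((len(u), w.shape[0]))
    for t, u_t in enumerate(u):
        x = (1.0 - leak) * x + leak * np.tanh(w_in @ u_t + w @ x)
        states[t] = x
    return states

u = np.zeros((400, 3))
u[50] = 1.0  # a single pulse on all three input channels at t = 50
w_in, w = make_reservoir(n_inputs=3)
states = run_reservoir(u, w_in, w)
norms = np.linalg.norm(states, axis=1)
print(norms[50], norms[399])  # the echo of the pulse has faded by the end
```

Nothing here is fitted; the state sequence `states` is the raw material that later steps summarize.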

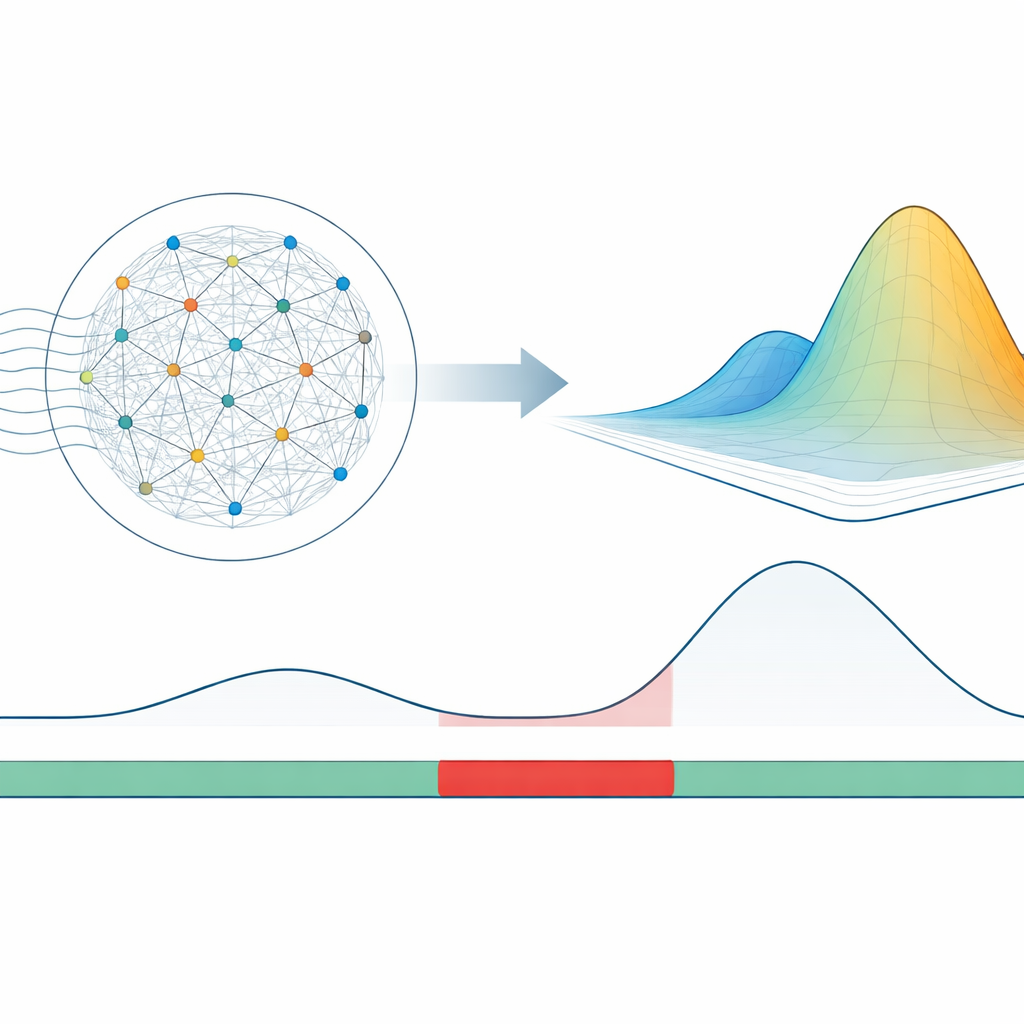

Measuring how far states drift from normal

Instead of trying to reconstruct the original signal and using the reconstruction error as an alarm, MD-RS looks directly at the patterns formed by the reservoir itself. During training, the method is shown only normal behavior and records how the reservoir’s activity typically clusters in its high-dimensional space. It then fits a simple statistical shape to this cluster, summarized by its average position and the way it spreads. When new data arrive, the method measures how far the current reservoir pattern has drifted from this learned “cloud” of normal activity, using a distance measure that accounts for both position and spread (the Mahalanobis distance). Large distances signal that the system has entered an unfamiliar regime. Because this score depends on the reservoir’s internal state rather than noisy raw measurements, it changes smoothly over time, making it easier to set stable thresholds and avoid jittery alarms.
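The scoring step can be sketched as follows: estimate the mean and covariance of states seen during normal operation, then compute the Mahalanobis distance $d(\mathbf{x}) = \sqrt{(\mathbf{x}-\boldsymbol{\mu})^\top \Sigma^{-1} (\mathbf{x}-\boldsymbol{\mu})}$ for each new state. This is a minimal sketch, not the authors' code: synthetic Gaussian vectors stand in for reservoir states, and the names `fit_normal_cloud` and `mahalanobis_scores`, as well as the small `ridge` term that keeps the covariance invertible, are hypothetical.

```python
import numpy as np

def fit_normal_cloud(states, ridge=1e-6):
    """Hypothetical helper: mean and inverse covariance of states from normal operation."""
    mu = states.mean(axis=0)
    cov = np.cov(states, rowvar=False)
    # Assumed ridge term so the covariance stays invertible (not taken from the paper)
    cov += ridge * np.eye(cov.shape[0])
    return mu, np.linalg.inv(cov)

def mahalanobis_scores(states, mu, cov_inv):
    """Hypothetical helper: distance of each state from the normal cloud (position and spread)."""
    diff = states - mu
    return np.sqrt(np.einsum("ij,jk,ik->i", diff, cov_inv, diff))

rng = np.random.default_rng(0)
# Stand-in for normal reservoir states: wide spread on four axes, narrow on the fifth
normal_states = rng.normal(0.0, [1.0, 1.0, 1.0, 1.0, 0.1], size=(2000, 5))
mu, cov_inv = fit_normal_cloud(normal_states)

# Two new states, each 0.5 from the centre but in different directions
new_states = np.array([[0.5, 0, 0, 0, 0], [0, 0, 0, 0, 0.5]])
scores = mahalanobis_scores(new_states, mu, cov_inv)
print(scores)  # the second state scores much higher: it left the narrow direction
```

A plain Euclidean distance would rate both new states as equally unusual; accounting for spread is what separates them.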

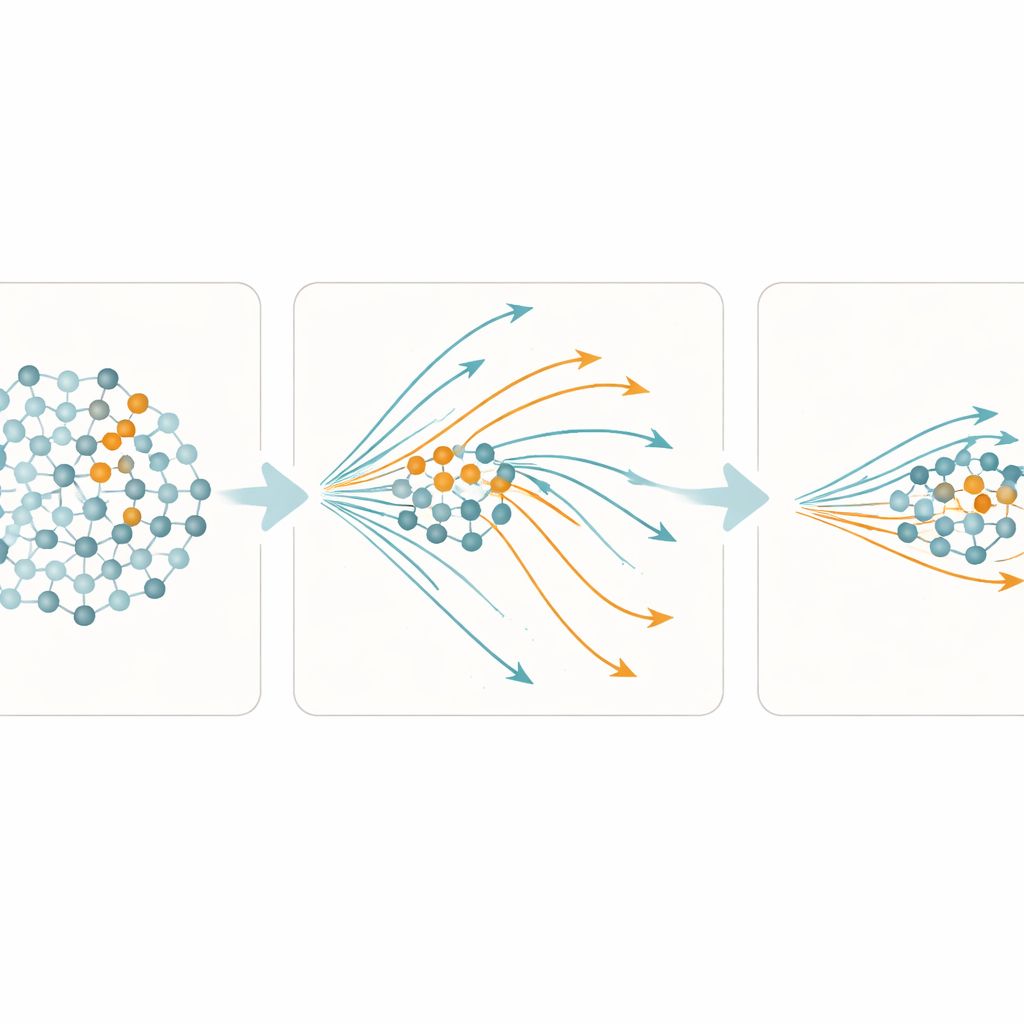

Combining quick and slow reactions

A further twist in MD-RS is to mix two types of units in the reservoir: most respond slowly and keep a long memory, while a smaller fraction respond quickly and forget just as fast. The slow units are good at capturing extended patterns and trends, which helps when anomalies stretch over many time steps or alter long-term rhythms. The fast units, in contrast, allow the system to snap back quickly once conditions return to normal, sharply reducing the time the detector remains on high alert after an event ends. By choosing the mix carefully (about nine slow units for every fast one), the authors show that the model can detect both short and long anomalies with high temporal precision, without having to retune settings for each new dataset.
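One way to picture the slow/fast mix is to give each unit of a leaky reservoir its own leak rate. This sketch assumes that formulation rather than the paper's exact one: the 9:1 ratio follows the text, but the leak values 0.05 (slow) and 0.9 (fast), the reservoir size, and the helper names `mixed_leak_rates` and `run_mixed_reservoir` are hypothetical. After a long input event stops, the fast units move much further within a few steps than the slow ones.

```python
import numpy as np

def mixed_leak_rates(n_units=100, fast_fraction=0.1, slow_leak=0.05, fast_leak=0.9, seed=0):
    """Hypothetical helper: most units get a slow leak (long memory), a few get a fast one."""
    rng = np.random.default_rng(seed)
    leaks = np.full(n_units, slow_leak)
    fast = rng.choice(n_units, size=int(round(fast_fraction * n_units)), replace=False)
    leaks[fast] = fast_leak
    return leaks, fast

def run_mixed_reservoir(u, w_in, w, leaks):
    """Hypothetical helper: leaky reservoir update with a separate leak rate per unit."""
    x = np.zeros(w.shape[0])
    states = np.empty((len(u), w.shape[0]))
    for t, u_t in enumerate(u):
        x = (1.0 - leaks) * x + leaks * np.tanh(w_in @ u_t + w @ x)
        states[t] = x
    return states

rng = np.random.default_rng(1)
n_units, n_inputs = 100, 3
w_in = rng.uniform(-1.0, 1.0, size=(n_units, n_inputs))
w = rng.normal(size=(n_units, n_units))
w *= 0.9 / np.max(np.abs(np.linalg.eigvals(w)))  # fixed random recurrent weights
leaks, fast = mixed_leak_rates(n_units)
slow = np.setdiff1d(np.arange(n_units), fast)

u = np.zeros((300, n_inputs))
u[:200] = 1.0  # an "event" lasting 200 steps, then it stops
states = run_mixed_reservoir(u, w_in, w, leaks)

# How far each unit moves in the first 3 steps after the event ends
moved = np.abs(states[202] - states[199])
print(moved[fast].mean(), moved[slow].mean())  # fast units move much further
```

The slow units still carry a trace of the event, which helps with long anomalies, while the fast units already reflect that it is over.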

Proving real-time performance in practice

To test MD-RS, the researchers compared it against classical window-based methods, several advanced deep learning systems, and other reservoir-based approaches on a large collection of benchmark datasets. These include univariate archives with tiny fractions of anomalies and complex multivariate streams from spacecraft, servers, and industrial plants. They evaluated not only whether anomalies were detected, but also how quickly detectors reacted when an anomaly started and how soon they relaxed when it ended, using a specialized metric that rewards good timing. Across most datasets and evaluation measures, MD-RS matched or outperformed the best existing techniques, while training in seconds to minutes on a single CPU—often orders of magnitude faster than deep learning models that rely on GPUs.

What this means for real systems

In simple terms, this work shows that you do not need a massive, slow-to-train neural network to get high-quality, real-time anomaly detection. By using a fixed, efficiently simulated dynamic memory and tracking how its internal activity drifts away from learned normal behavior, MD-RS provides timely, stable alarms that are practical to deploy and update. Its ability to handle both quick glitches and slow-burning problems, combined with its modest hardware needs, suggests it could serve as a new standard approach for monitoring everything from medical sensors and server farms to spacecraft and industrial plants.

Citation: Tamura, H., Fujiwara, K., Aihara, K. et al. Distributional reservoir state analysis for real-time anomaly detection in multivariate time series data. npj Artif. Intell. 2, 41 (2026). https://doi.org/10.1038/s44387-026-00090-6

Keywords: time series anomaly detection, real-time monitoring, reservoir computing, Mahalanobis distance, streaming data