Clear Sky Science

Quantum hyperdimensional computing: a foundational paradigm for quantum neuromorphic architectures

Why this new kind of computing matters

Computers are everywhere, yet many of today’s toughest problems – from decoding genomes to finding new drugs – still strain even the fastest machines. At the same time, quantum computers are beginning to move from lab curiosities toward practical tools. This paper introduces a way to bring these threads together: a framework called quantum hyperdimensional computing (QHDC), which is designed from the ground up to let quantum hardware think in a brain‑like, pattern‑based way.

From long numbers to rich patterns

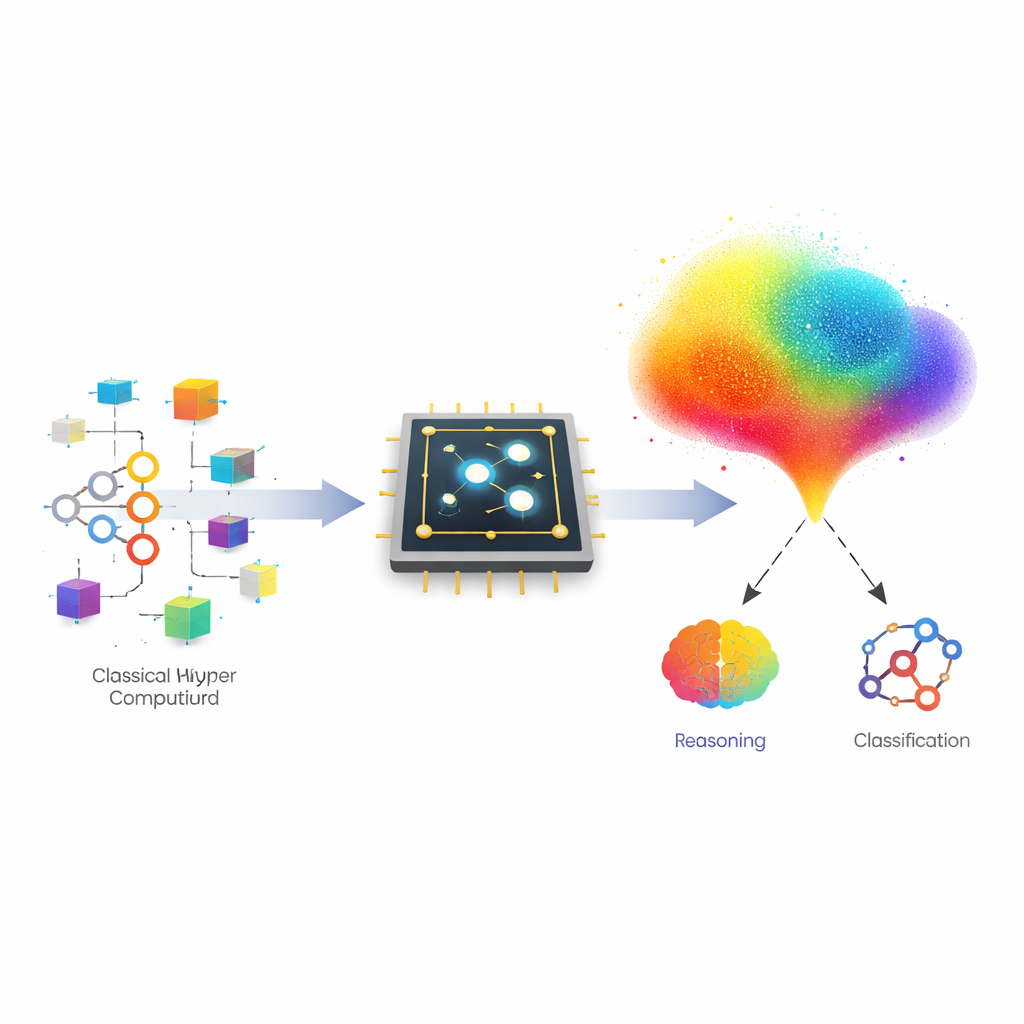

Classical computers usually treat information as precise numbers processed step by step. Hyperdimensional computing (HDC) takes a different route: it represents information as very long vectors – think of them as giant arrows in space – where each arrow encodes a concept, an image, or even a sentence. Simple operations on these arrows can bind concepts together (like linking a country to its currency), bundle many examples into a prototype, or shuffle components to record order in a sequence. Because the information is spread out across many components, these representations are naturally robust to noise and well suited to fast learning from few examples.
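The three operations described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' code: it assumes bipolar vectors with entries of +1 or -1, a dimension of 10,000 (the article does not state one), and a majority vote for bundling. All function and variable names are made up for the example.

```python
import random

DIM = 10_000  # assumed dimension; the article does not specify one


def random_hv(rng, dim=DIM):
    """Random bipolar hypervector with entries in {-1, +1}."""
    return [rng.choice((-1, 1)) for _ in range(dim)]


def bind(a, b):
    """Bind two concepts by element-wise multiplication."""
    return [x * y for x, y in zip(a, b)]


def bundle(*vectors):
    """Bundle examples by element-wise majority vote (ties go to +1)."""
    return [1 if sum(column) >= 0 else -1 for column in zip(*vectors)]


def permute(a, shift=1):
    """Record order by cyclically shifting the components."""
    shift %= len(a)
    return a[-shift:] + a[:-shift]


def similarity(a, b):
    """Normalized dot product: 1.0 for identical vectors, near 0 for unrelated ones."""
    return sum(x * y for x, y in zip(a, b)) / len(a)


rng = random.Random(0)
country, currency, other = (random_hv(rng) for _ in range(3))

pair = bind(country, currency)                # link a country to its currency
recovered = bind(pair, country)               # bipolar binding undoes itself
prototype = bundle(country, currency, other)  # stays similar to each input

print(recovered == currency)
print(round(similarity(prototype, country), 2))
print(round(similarity(country, currency), 2))
```

Because every component carries only a sliver of the information, flipping a small fraction of entries barely changes the similarity scores, which is where the robustness to noise comes from.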

Marrying brain‑like codes with quantum machines

The authors show that the core tricks of HDC line up surprisingly well with how quantum computers naturally work. In QHDC, each high‑dimensional vector is realized as a quantum state spread over the basic configurations of a few qubits. Because n qubits have 2^n such configurations, a handful of qubits is enough to hold a very long vector. Positive and negative entries in the vector are turned into different quantum phases, so the machine can combine two vectors by applying one phase pattern on top of the other, which amounts to multiplying the vectors entry by entry. Operations that bundle many examples into one prototype are carried out with advanced quantum routines that carefully add together multiple quantum states, while order information is handled by a quantum version of a shuffle based on the quantum Fourier transform.
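To make the phase picture concrete, here is a small classical simulation of the amplitudes, written in plain Python. It is a sketch under stated assumptions rather than the paper's circuits: it assumes 3 qubits (8 components), treats binding as a diagonal sign flip, and builds the shift from the textbook identity that a cyclic shift is a Fourier transform, a phase ramp, and an inverse Fourier transform. The helper names are hypothetical.

```python
import cmath
import math

N_QUBITS = 3          # assumed size for illustration
DIM = 2 ** N_QUBITS   # 3 qubits hold an 8-component vector


def encode(hv):
    """Store a bipolar vector as signed, equal-magnitude amplitudes."""
    norm = math.sqrt(len(hv))
    return [complex(x) / norm for x in hv]


def bind(state, hv):
    """Apply a second vector as a phase pattern (sign flip where hv is -1)."""
    return [amp * x for amp, x in zip(state, hv)]


def qft(state, inverse=False):
    """Dense discrete Fourier transform using the QFT sign convention."""
    dim = len(state)
    sign = -1 if inverse else 1
    return [
        sum(state[m] * cmath.exp(sign * 2j * math.pi * m * k / dim)
            for m in range(dim)) / math.sqrt(dim)
        for k in range(dim)
    ]


def shift(state, steps=1):
    """Cyclic shift built as QFT, phase ramp, inverse QFT."""
    dim = len(state)
    freq = qft(state)
    ramped = [amp * cmath.exp(2j * math.pi * steps * k / dim)
              for k, amp in enumerate(freq)]
    return qft(ramped, inverse=True)


a = [1, -1, 1, 1, -1, -1, 1, -1]
b = [1, 1, -1, 1, -1, 1, -1, -1]

state_a = encode(a)
bound = bind(state_a, b)   # amplitudes now carry the product a*b
shifted = shift(state_a)   # amplitudes of a moved one slot to the right

print([round(x.real * math.sqrt(DIM)) for x in bound])
print([round(x.real * math.sqrt(DIM)) for x in shifted])
```

On real hardware the sign flips and the phase ramp would be implemented as gates rather than list operations, and the circuit used in the paper may be organized differently.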

How the new framework is put to the test

To move beyond theory, the team implemented QHDC using IBM’s Qiskit software and ran it both in simulation and on a 156‑qubit IBM Heron quantum processor. They first tested a classic puzzle‑style task: analogical reasoning of the form “USA is to Dollar as Mexico is to ?”. Using only quantum versions of the basic HDC operations, the system correctly recovered “Peso” as the missing piece in ideal simulations, matching classical results. This demonstrated that quite subtle reasoning could be reproduced using quantum states and interference alone, even though the full circuit was too deep to run reliably on today’s noisy devices.
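For readers curious how such an analogy can fall out of vector arithmetic, the following classical sketch follows a well-known hyperdimensional construction: each country is stored as a bundle of role-and-value pairs, and multiplying the two country records produces a mapping from one to the other. The summary does not spell out the paper's exact encoding, so the roles (NAME, CAPITAL, CURRENCY), the capital-city entries, the dimension, and all names here are assumptions for illustration.

```python
import random

DIM = 10_000  # assumed dimension
rng = random.Random(42)


def random_hv():
    """Random bipolar hypervector with entries in {-1, +1}."""
    return [rng.choice((-1, 1)) for _ in range(DIM)]


def bind(a, b):
    """Element-wise multiplication."""
    return [x * y for x, y in zip(a, b)]


def bundle(*vectors):
    """Element-wise majority vote (ties go to +1)."""
    return [1 if sum(column) >= 0 else -1 for column in zip(*vectors)]


def similarity(a, b):
    """Normalized dot product."""
    return sum(x * y for x, y in zip(a, b)) / len(a)


# Hypothetical role vectors and a small vocabulary of values.
NAME, CAPITAL, CURRENCY = (random_hv() for _ in range(3))
values = {word: random_hv() for word in
          ("USA", "Washington", "Dollar", "Mexico", "Mexico City", "Peso")}


def record(name, capital, currency):
    """One country as a bundle of role-value bindings."""
    return bundle(bind(NAME, values[name]),
                  bind(CAPITAL, values[capital]),
                  bind(CURRENCY, values[currency]))


usa = record("USA", "Washington", "Dollar")
mexico = record("Mexico", "Mexico City", "Peso")

mapping = bind(usa, mexico)               # relates USA entries to Mexico entries
query = bind(values["Dollar"], mapping)   # "what plays the Dollar's role in Mexico?"

scores = {word: similarity(query, hv) for word, hv in values.items()}
answer = max(scores, key=scores.get)
print(answer, round(scores[answer], 2))
```

The winning score is well below 1 because the query is a noisy copy of the target, but it stands far above the near-zero scores of the unrelated words, which is all the lookup needs.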

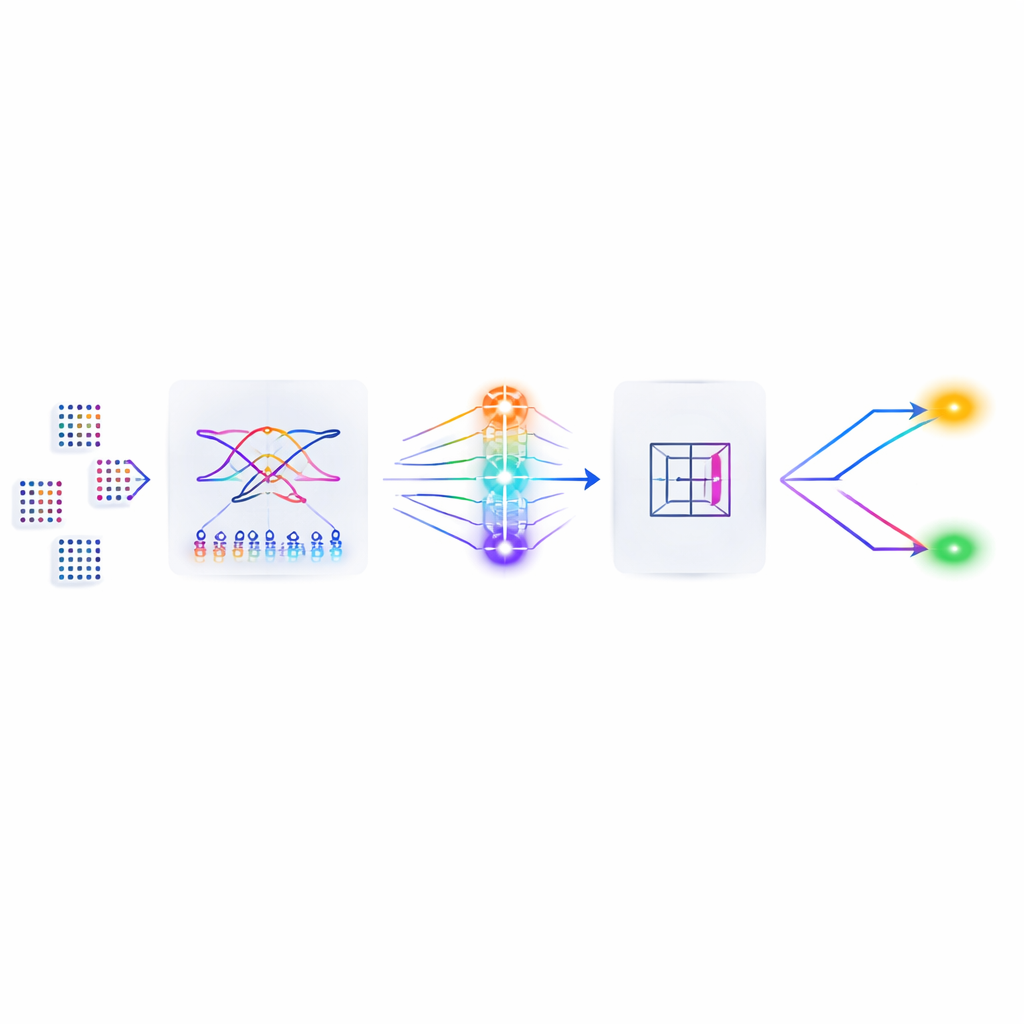

Teaching a quantum system to recognize numbers

The second test asked whether QHDC could learn from real data: distinguishing images of the handwritten digits 3 and 6 from the MNIST dataset. To fit within current hardware limits, the images were shrunk to tiny 4×4 black‑and‑white patterns. Each image was encoded into a small set of quantum feature states, then combined into a prototype for each class. Because the most ambitious fully quantum version of this bundling step would require extremely deep circuits, the authors adopted a hybrid approach: they performed the bundling as ordinary vector addition on a classical computer, then re‑encoded the summed vector as a quantum state. A quantum routine known as the Hadamard test, which estimates the overlap between two quantum states, then compared each new image’s state to the two prototypes to decide the label.
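The hybrid pipeline can be mimicked classically to see how the pieces fit. The sketch below is a simplified stand-in, not the authors' implementation: it uses two hand-made 4×4 toy patterns instead of MNIST digits, assumes each pixel maps directly to a +1 or -1 entry, and simulates only the measurement statistics of a Hadamard test, whose ancilla reads 0 with probability (1 + overlap) / 2. All names and the shot count are assumptions.

```python
import math
import random


def to_bipolar(image):
    """Flatten a 4x4 binary image into 16 entries in {-1, +1} (assumed encoding)."""
    return [1 if pixel else -1 for row in image for pixel in row]


def bundle_sum(vectors):
    """Classical bundling: plain element-wise addition."""
    return [sum(column) for column in zip(*vectors)]


def amplitude_encode(vec):
    """Re-encode a real vector as a normalized state over 4 qubits."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def hadamard_test(psi, phi, shots, rng):
    """Estimate the real overlap by sampling simulated ancilla outcomes."""
    overlap = sum(a * b for a, b in zip(psi, phi))
    p_zero = (1 + overlap) / 2
    zeros = sum(rng.random() < p_zero for _ in range(shots))
    return 2 * zeros / shots - 1


def flip(image, row, col):
    """Copy an image with one pixel inverted, as a crude noise model."""
    noisy = [list(r) for r in image]
    noisy[row][col] = 1 - noisy[row][col]
    return noisy


def classify(image, prototypes, shots, rng):
    """Pick the class whose prototype overlaps most with the query state."""
    query = amplitude_encode(to_bipolar(image))
    scores = {label: hadamard_test(query, proto, shots, rng)
              for label, proto in prototypes.items()}
    return max(scores, key=scores.get)


# Toy stand-ins for the two digit classes.
VERTICAL = [[0, 1, 1, 0], [0, 1, 1, 0], [0, 1, 1, 0], [0, 1, 1, 0]]
HORIZONTAL = [[0, 0, 0, 0], [1, 1, 1, 1], [1, 1, 1, 1], [0, 0, 0, 0]]

training = {
    "vertical": [VERTICAL, flip(VERTICAL, 0, 0), flip(VERTICAL, 3, 3)],
    "horizontal": [HORIZONTAL, flip(HORIZONTAL, 0, 0), flip(HORIZONTAL, 3, 3)],
}
prototypes = {
    label: amplitude_encode(bundle_sum([to_bipolar(img) for img in images]))
    for label, images in training.items()
}

rng = random.Random(7)
prediction = classify(flip(VERTICAL, 1, 0), prototypes, shots=2000, rng=rng)
print(prediction)
```

Training here is a single pass of addition per class, with no iterative parameter tuning. The price is paid at prediction time, where each comparison needs many repeated measurements to estimate the overlap.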

Performance today and hopes for tomorrow

On a conventional computer, this HDC‑style classifier achieved accuracy comparable to standard machine‑learning approaches. In ideal quantum simulations, the hybrid QHDC version came very close, while also revealing a key trade‑off: higher‑dimensional quantum states improved pattern separation but demanded deeper circuits that current hardware struggles to run without losing information. When executed on the real IBM device, accuracy dropped as noise accumulated, but the results still clearly reflected the intended behavior – and they did so with far less training effort than popular quantum machine‑learning models that require many rounds of parameter tuning.

What this could mean for future discoveries

Viewed in plain terms, this work shows that there is a natural way for quantum computers to store and manipulate rich, brain‑like patterns instead of just numbers and logic. The authors argue that as quantum hardware improves, QHDC could power fast, noise‑tolerant tools for tasks such as matching genomic sequences, screening huge chemical libraries, or fusing many kinds of medical data into a single patient‑specific fingerprint. For now, the study establishes that these ideas are not just mathematical curiosities: they can be programmed, run, and measured on real machines, laying a practical foundation for quantum neuromorphic computing.

Citation: Cumbo, F., Li, RH., Raubenolt, B. et al. Quantum hyperdimensional computing: a foundational paradigm for quantum neuromorphic architectures. npj Unconv. Comput. 3, 21 (2026). https://doi.org/10.1038/s44335-026-00064-6

Keywords: quantum computing, hyperdimensional computing, neuromorphic algorithms, quantum machine learning, pattern recognition