Clear Sky Science

Determinants of visual ambiguity resolution

Mystery in Everyday Sight

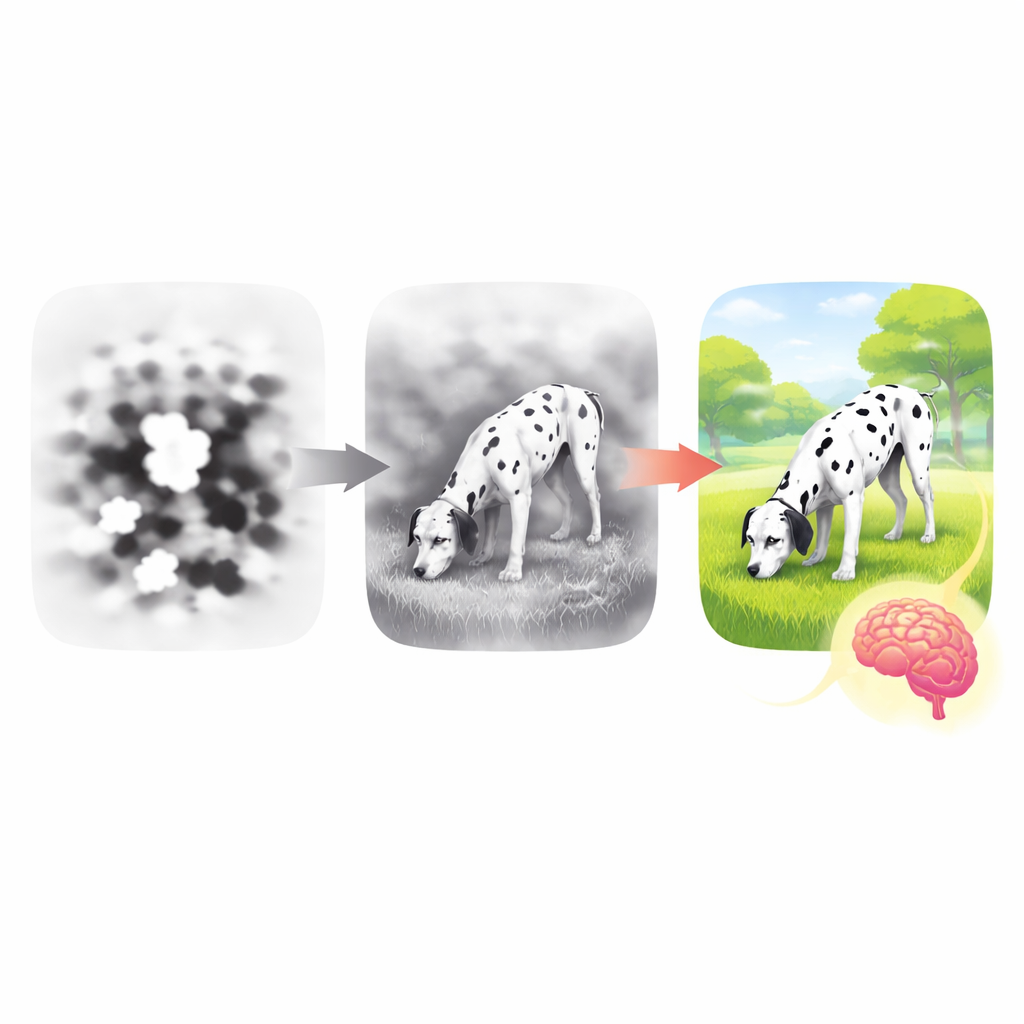

Have you ever stared at a blurry black-and-white picture that suddenly “pops” into a recognizable object once someone tells you what it is? This study digs into that everyday magic. The researchers ask why some fuzzy images stay stubbornly confusing while others snap into focus in our minds, and what actually changes in our brains once we finally “get” what we are looking at.

Turning Clear Pictures into Visual Riddles

To probe these questions, the team created a huge collection of visual riddles. They started with 1,854 photos of everyday objects, from birds and tools to fruits and vehicles, and converted them into stark black-and-white “Mooney” images. These images keep only broad patches of dark and light, stripping away fine detail and shading. More than 900 volunteers viewed the pictures online. For each image, participants first reported whether they could identify the object and then chose its name from a list. Crucially, every ambiguous image was shown twice: once before and once after participants briefly viewed the original, clear grayscale photo. This design let the researchers track how perception changed as people gained more information.
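One common way to make a Mooney-style image is to smooth a grayscale photo and then threshold it into pure black and white. The summary does not describe the study's exact procedure, so the box blur, the mean-intensity threshold, and the `mooney` helper below are illustrative assumptions, not the authors' pipeline.

```python
from statistics import mean


def box_blur(img: list[list[float]], radius: int = 1) -> list[list[float]]:
    """Average each pixel with its neighbours in a (2r+1)x(2r+1) window,
    clipping the window at the image border."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            vals = [
                img[yy][xx]
                for yy in range(max(0, y - radius), min(h, y + radius + 1))
                for xx in range(max(0, x - radius), min(w, x + radius + 1))
            ]
            out[y][x] = sum(vals) / len(vals)
    return out


def mooney(img: list[list[float]], radius: int = 1) -> list[list[int]]:
    """Hypothetical helper: smooth a grayscale image, then threshold it
    into pure black (0) and white (1), discarding all shading."""
    smoothed = box_blur(img, radius)
    # Assumed threshold: the mean smoothed intensity (real pipelines may choose it differently).
    threshold = mean(v for row in smoothed for v in row)
    return [[1 if v > threshold else 0 for v in row] for row in smoothed]


# Toy 4x4 "photo": dark on the left, bright on the right.
photo = [[0, 0, 10, 10] for _ in range(4)]
for row in mooney(photo):
    print(row)
```

Every intermediate gray level is forced to either black or white, which is what leaves only the broad patches described above. A larger `radius` erases more fine detail and typically makes the result harder to read.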

What Makes a Picture Hard to See?

To understand why some images felt more ambiguous than others, the researchers turned to an artificial neural network whose processing stages loosely parallel those of the human visual system. They compared how similar each clear image and its Mooney counterpart looked to this model at successive stages, from simple edge detection to complex object recognition. The Mooney transformation mostly damaged the higher-level stages, which carry information about what the object is, while lower-level features such as edges and coarse shapes were relatively well preserved. Images that retained more of these high-level features were the ones people found easier to recognize. In other words, what makes an image confusing is less the loss of raw detail than the loss of the abstract structure that signals “this is a dog” or “this is a chair.”
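A minimal sketch of the stage-by-stage comparison, assuming each image's activations at a given stage can be flattened into a vector and compared with cosine similarity. The summary names neither the network nor the similarity measure, so `layerwise_similarity`, the layer names, and the toy activation values are all hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two activation vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def layerwise_similarity(
    clear: dict[str, list[float]], mooney: dict[str, list[float]]
) -> dict[str, float]:
    """Compare the clear and Mooney versions of one image, stage by stage."""
    return {layer: cosine_similarity(clear[layer], mooney[layer]) for layer in clear}


# Hypothetical activations for one image at an early and a late stage:
# the early pattern survives the transformation, the late one does not.
clear_acts = {"early": [1.0, 0.0, 2.0], "late": [3.0, 0.0, 0.0]}
mooney_acts = {"early": [1.0, 0.0, 2.0], "late": [0.0, 3.0, 0.0]}
print(layerwise_similarity(clear_acts, mooney_acts))
```

In this toy case the early-stage similarity is close to 1 and the late-stage similarity is 0, mirroring the pattern the study reports. Relating each image's late-stage score to how often people recognized it is one way the link to recognizability could then be tested.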

How Learning Changes the Way We Look

Seeing the clear version of an image, a step called “disambiguation,” had a powerful effect. Afterward, people were faster and more confident in reporting that they recognized the Mooney image, and they named it correctly much more often. But which features mattered also shifted. Before disambiguation, recognition depended strongly on whether the image preserved those high-level, object-like patterns. Afterward, lower-level visual features such as shapes and contours played a bigger role. It is as if, once people had seen the answer, they began matching the Mooney image's patches of black and white against a newly formed internal template of the clear image, relying on the precise layout of the picture rather than guessing from vague impressions.

From Wild Guesses to Shared Meaning

The team also examined the words people used to name each object. They measured how “far” each label was from the true object’s meaning in a semantic space built from language data, and how varied people’s labels were for the same image. Before disambiguation, guesses were scattered and inconsistent: some were loosely related (“horse” for “zebra”), others wildly off. After viewing the clear image, people’s labels moved closer in meaning to the true object and became more similar to one another. Interestingly, recognition did not improve in a simple straight line with the amount of information gained from the clear image. Instead, the relationship was U-shaped: people did best either when the new information strongly confirmed what they already suspected or when it clearly overturned a wrong guess. Moderate, ambiguous corrections were less helpful.
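The two label measures above can be sketched as follows, assuming each word has a vector in some embedding space, that “distance” means cosine distance, and that label variety is captured by the mean pairwise distance between labels. The summary does not say which embedding model or variability measure the study used; the 2-D `toy_vectors` and the `label_stats` helper are hypothetical, chosen only to make the arithmetic visible.

```python
import math
from itertools import combinations


def cosine_distance(a: list[float], b: list[float]) -> float:
    """1 - cosine similarity: 0 for identical directions, 1 for orthogonal ones.
    Assumes both vectors are non-zero."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def label_stats(
    labels: list[str], truth: str, vectors: dict[str, list[float]]
) -> tuple[float, float]:
    """Return (mean distance from the labels to the true concept,
    mean pairwise distance between labels, used here as a spread measure)."""
    to_truth = [cosine_distance(vectors[w], vectors[truth]) for w in labels]
    pairs = [cosine_distance(vectors[a], vectors[b]) for a, b in combinations(labels, 2)]
    spread = sum(pairs) / len(pairs) if pairs else 0.0
    return sum(to_truth) / len(to_truth), spread


# Hypothetical 2-D word vectors; real embeddings have hundreds of dimensions.
toy_vectors = {"zebra": [1.0, 0.0], "horse": [0.8, 0.6], "kettle": [0.0, 1.0]}

before = label_stats(["horse", "kettle", "zebra"], "zebra", toy_vectors)
after = label_stats(["zebra", "zebra", "horse"], "zebra", toy_vectors)
print(f"before: distance={before[0]:.2f}, spread={before[1]:.2f}")
print(f"after:  distance={after[0]:.2f}, spread={after[1]:.2f}")
```

With these made-up labels both numbers drop after disambiguation: the answers sit closer to “zebra” and closer to each other, which is the direction of change the study describes.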

How Our Minds Tame Visual Confusion

This work suggests that we resolve visual confusion through a flexible dance between broad guesses and precise matching. At first, our brains lean on high-level expectations: we try to fit vague shapes to familiar objects. Once we are shown the answer, we switch to checking whether the exact arrangement of edges and patches matches the object we now “know” is there. At the same time, our mental description of the object becomes both sharper and more widely shared across people. The finding that more information is not always better, and that clear confirmation or clear contradiction can be most helpful, offers a richer picture of how we extract meaning from incomplete views—a process at the heart of how we see in the messy, ambiguous real world.

Citation: Linde-Domingo, J., Ortiz-Tudela, J., Völler, J. et al. Determinants of visual ambiguity resolution. Commun Psychol 4, 78 (2026). https://doi.org/10.1038/s44271-026-00441-8

Keywords: visual perception, ambiguity, object recognition, predictive processing, Mooney images