Clear Sky Science · en

Impact of using artificial intelligence as a second reader in breast screening including arbitration

Why this study matters for women and families

Breast screening is one of the most widely used tools for catching cancer early, when treatment is more effective and less aggressive. Yet the system depends on a large, highly trained workforce of specialists who examine mammogram images by eye. In the United Kingdom, there are not enough radiologists to comfortably meet demand, raising concerns about delays and missed cancers. This study asks a question that affects millions of women: can an artificial intelligence (AI) system safely take on part of the reading work alongside human experts without sacrificing accuracy—and possibly even help find cancers earlier?

How breast screening works today

In the National Health Service Breast Screening Programme, women aged 50 to 70 are invited for a mammogram every three years. Each set of images is normally read independently by two trained mammography readers, such as radiologists or specialist radiographers. If the two disagree, or if local policy requires it, the case goes to a special discussion called arbitration, where readers together decide whether the woman should be recalled for further tests. This double-reading system is designed to balance two goals: catching as many cancers as possible while avoiding unnecessary recalls that cause anxiety, extra tests, and cost.
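The double-reading rule described above can be sketched in a few lines of code. This is a simplified illustration under stated assumptions, not the NHS programme's actual protocol. It assumes each read is a plain recall or no-recall opinion and that any disagreement goes to arbitration. The "local policy" case is modelled by a hypothetical flag, `policy_requires_arbitration`. The function name `double_read` and the `arbitrate` callback are illustrative names only.

```python
from typing import Callable


def double_read(
    first_recall: bool,
    second_recall: bool,
    arbitrate: Callable[[], bool],
    policy_requires_arbitration: bool = False,  # hypothetical stand-in for local policy
) -> bool:
    """Return True if the woman should be recalled for further tests.

    Simplified sketch: the second reader may be a human or, in the
    AI-assisted pathway, the AI system; the decision rule is the same.
    """
    if first_recall != second_recall or policy_requires_arbitration:
        # Readers disagree (or policy asks for a joint review):
        # the arbitration step makes the final call.
        return arbitrate()
    # Readers agree, so their shared opinion stands.
    return first_recall


# Both readers say "no recall": arbitration is never consulted.
print(double_read(False, False, arbitrate=lambda: True))   # prints False
# Readers disagree: the arbitration outcome decides.
print(double_read(True, False, arbitrate=lambda: True))    # prints True
```

The point of the sketch is that swapping the second human reader for AI changes only who supplies `second_recall`. Arbitration still has the final say whenever the two opinions differ.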

What the researchers set out to test

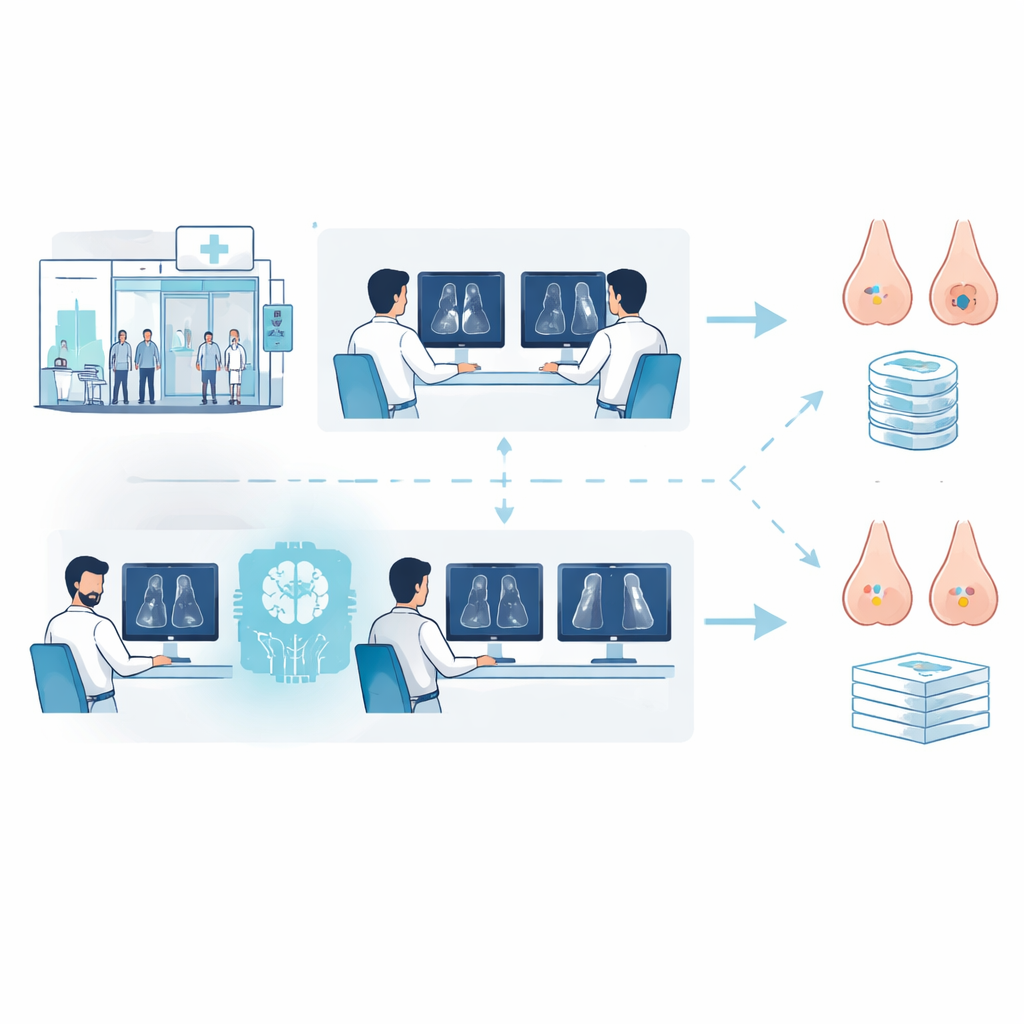

The team studied mammograms and clinical records from 50,000 women who had been screened at two hospital services in London. Because these women had several years of follow-up, the researchers could see not only which cancers were caught at screening, but also which appeared later, either between screens (so-called interval cancers) or at the next routine visit. They compared two pathways using the same historical images: the standard approach with two human readers, and an AI-assisted approach in which the first reader was human and the second was the Google-developed AI system. Cases that needed a final decision went to arbitration, carried out by 22 experienced readers working as they would in real clinics.

How well AI stacked up against human experts

Overall, the pathway with AI as the second reader performed at least as well as standard double reading by two humans, once arbitration decisions were taken into account. It showed very similar ability to flag women who truly had cancer (sensitivity) and to reassure those who did not (specificity), meeting strict statistical criteria for “noninferiority”: that is, it was shown not to be worse than standard care by more than a margin set in advance. In fact, specificity was slightly higher with AI, meaning fewer women were recalled unnecessarily, and the cancer detection rate was virtually identical between the two approaches. Because AI replaced one of the two human reads for most cases, the number of routine human reads fell by about half. The researchers estimate that this would cut total reader time by 36–44%, even after accounting for the extra effort needed at arbitration.
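To make the two accuracy measures concrete, the sketch below computes them from a table of screening outcomes. Sensitivity is the share of women with cancer whom a pathway recalls. Specificity is the share of women without cancer whom it does not recall. The class name `Outcomes` and all counts are invented for illustration and are not the study's results.

```python
from dataclasses import dataclass


@dataclass
class Outcomes:
    """Counts of screening decisions against true cancer status (hypothetical)."""
    recalled_with_cancer: int      # true positives
    not_recalled_with_cancer: int  # false negatives (missed cancers)
    recalled_no_cancer: int        # false positives (unnecessary recalls)
    not_recalled_no_cancer: int    # true negatives

    def sensitivity(self) -> float:
        # Fraction of women who had cancer and were recalled.
        with_cancer = self.recalled_with_cancer + self.not_recalled_with_cancer
        return self.recalled_with_cancer / with_cancer

    def specificity(self) -> float:
        # Fraction of women without cancer who were not recalled.
        no_cancer = self.recalled_no_cancer + self.not_recalled_no_cancer
        return self.not_recalled_no_cancer / no_cancer


# Invented counts for 10,000 screened women (not data from the study).
example = Outcomes(
    recalled_with_cancer=70,
    not_recalled_with_cancer=10,
    recalled_no_cancer=300,
    not_recalled_no_cancer=9620,
)
print(f"sensitivity = {example.sensitivity():.3f}")  # prints sensitivity = 0.875
print(f"specificity = {example.specificity():.3f}")  # prints specificity = 0.970
```

A slightly higher specificity, as reported for the AI-assisted pathway, corresponds to moving some women from `recalled_no_cancer` to `not_recalled_no_cancer`. This reduces unnecessary recalls without changing the sensitivity calculation at all.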

Where AI helped—and where it fell short

Before arbitration, the AI system was more likely than individual human readers to flag cancers that later appeared between screens or at the next routine visit, suggesting it can sometimes spot subtle changes that humans miss. However, many of these potential early warnings were overturned during arbitration, especially when readers were influenced by older images that the AI did not analyze or by extra AI-highlighted spots that turned out to be harmless. In a small but important subset of 93 women, the AI correctly judged that something was wrong, yet the arbitration panels decided against recall; most of these women later developed interval or next-round cancers. At the same time, human arbitration correctly cancelled many AI-triggered recalls in women who were ultimately cancer free, improving overall specificity. Across different age groups, ethnicities, breast densities, and cancer types, the AI-assisted pathway generally matched the performance of standard care, though results for some smaller subgroups were less certain.

What this could mean for future screening

The study suggests that AI can safely take on the role of a second reader in breast screening without reducing the quality of care, while easing pressure on a stretched workforce. Yet it also highlights a limitation of the current setup: on its own, the AI showed promise in flagging cancers earlier, but this advantage was diluted when human arbitrators, understandably cautious, overruled some of its prompts. The authors argue that improving how AI explains its suggestions, reducing distracting false alarms, and training clinicians on when to trust or question the tool could unlock more of its potential. If these human–machine partnerships are refined and carefully tested in real-world trials, AI-supported screening may not only sustain current programs but also help more women have their cancers caught at a stage when treatment is most likely to succeed.

Citation: Warren, L.M., Venton, J., Young, K.C. et al. Impact of using artificial intelligence as a second reader in breast screening including arbitration. Nat Cancer 7, 507–521 (2026). https://doi.org/10.1038/s43018-026-01128-z

Keywords: breast cancer screening, mammography, artificial intelligence, radiology workload, medical arbitration