Clear Sky Science

Prospective evaluation of artificial intelligence integration into breast cancer screening in multiple workflow settings: the GEMINI study

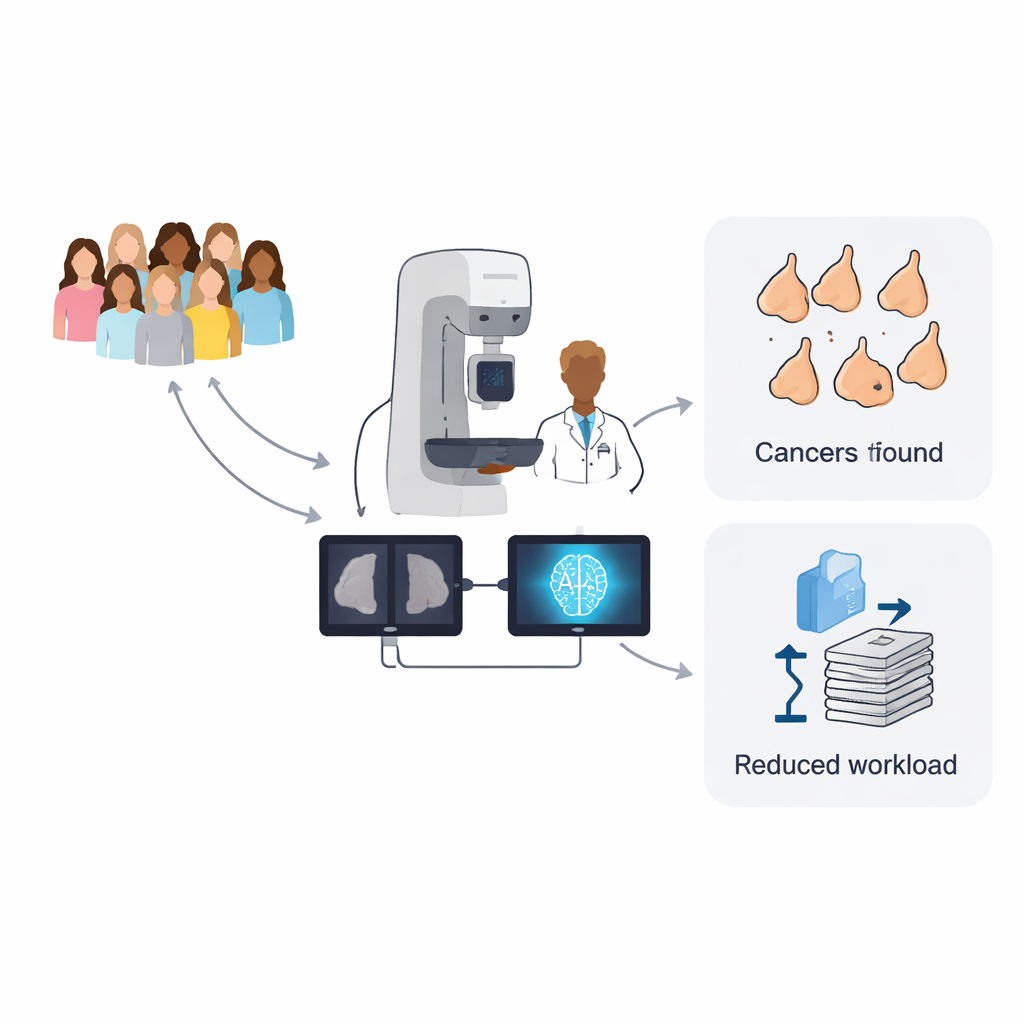

Why this matters for women and clinics

Breast screening saves lives, but it also places heavy demands on specialist doctors and can cause anxiety when women are called back unnecessarily. This study, carried out in a real National Health Service (NHS) breast screening program in Scotland, asked a practical question: can artificial intelligence (AI) help doctors find more cancers while keeping call‑backs and workload under control? Instead of testing a single setup, the researchers explored many different ways to weave AI into day‑to‑day screening, offering a roadmap for clinics that have different pressures and priorities.

How breast screening works today

In the UK, women aged 50 to 71 are invited for a mammogram every three years. Each set of breast X‑rays is read independently by two trained human readers. If they disagree, a third expert makes the final decision. This "double reading" approach improves safety but demands large amounts of specialist time. At the same time, health systems face rising numbers of women coming for screening and shortages of radiologists. Any new tool must therefore do more than just spot cancers; it must also fit into the existing system and help manage limited staff time.

What the GEMINI study set out to test

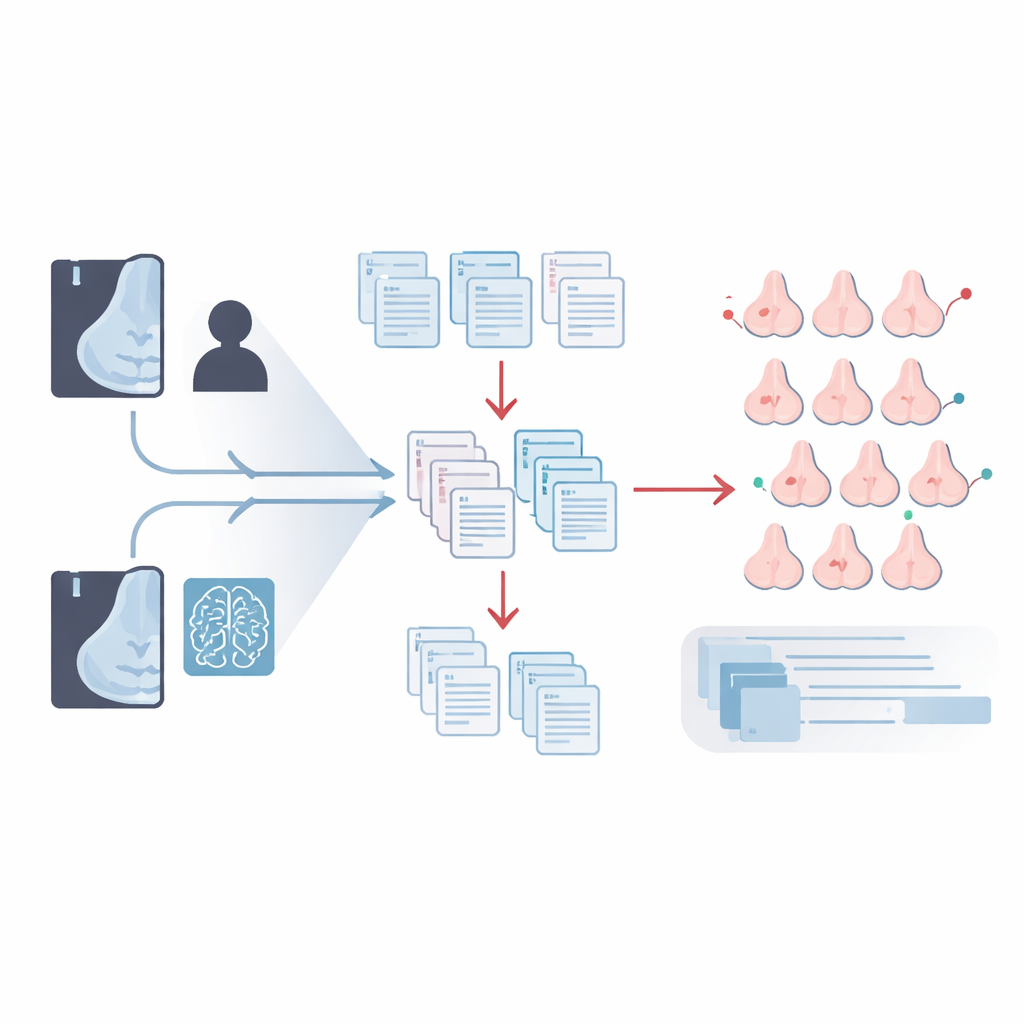

The GEMINI study followed 10,889 women who attended routine screening in one UK region in 2023. Everyone received standard care: their mammograms were read by two human experts, with arbitration if needed. In the background, a commercial AI system called Mia also analyzed the images and quietly stored its opinion. The research team then explored two main ideas. First, they tried AI as an "extra safety net": if AI suggested recalling a woman but the screening team did not, her images were sent for an additional human review. Second, they used the stored AI outputs to simulate different "triage" setups, in which AI would stand in for the second human reader in some cases to save time. In total, they modeled 17 distinct workflows that mixed and matched these approaches.

What happened when AI acted as an extra reader

When AI disagreed with the routine decision not to recall, senior readers took another look at those mammograms. Out of 1,345 such cases, 55 women were called back for further tests, and 11 extra breast cancers were found that would otherwise have been missed at that visit. Most of these were invasive cancers, and many were relatively small. Importantly, reviewing these extra cases was quick: roughly nine in ten were read in under a minute, similar to or faster than a typical mammogram read. This suggests that experienced readers were able to use the AI prompts efficiently, confirming real concerns while confidently dismissing harmless changes when they compared the images with past mammograms.
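The extra-reader step boils down to a simple routing rule for each screening episode. The Python sketch below is illustrative only and rests on assumptions the summary does not spell out: the function name is hypothetical, the AI's opinion is reduced to a yes/no recall flag (in practice this depends on the threshold chosen), and the senior readers' review is represented as a callable that returns their decision.

```python
from typing import Callable


def final_decision_with_ai_safety_net(
    routine_recall: bool,
    ai_recall: bool,
    senior_review: Callable[[], bool],
) -> tuple[bool, bool]:
    """Return (recall, was_reviewed) for one screening episode.

    Hypothetical sketch: the routine double-reading decision stands unless
    it says "no recall" while the AI says "recall"; only those discordant
    cases are sent for an extra senior human review.
    """
    if ai_recall and not routine_recall:
        # AI flags a case the screening team did not recall:
        # a senior reader takes another look and makes the final call.
        return senior_review(), True
    # All other cases keep the routine decision unchanged.
    return routine_recall, False


if __name__ == "__main__":
    # Discordant case where the senior reader decides to recall.
    print(final_decision_with_ai_safety_net(False, True, lambda: True))   # (True, True)
    # A routine recall stands regardless of the AI's opinion.
    print(final_decision_with_ai_safety_net(True, False, lambda: False))  # (True, False)
```

In this rule the AI can only trigger an extra human look; it never cancels a recall made by the human readers, and the final decision on flagged cases always rests with a person.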

How triage with AI can lighten the load

The team then asked how AI could safely replace some of the second human reads. In "triage" workflows, when the first human reader and AI agreed, that agreement stood, and a second human read was not needed for those cases. In "triage negatives" workflows, this shortcut was used only when both agreed that no recall was needed, so any case that either the first reader or the AI wanted to recall still went to a second human reader; this guards against extra call‑backs while still saving work. Depending on the decision thresholds set for the AI, these simulated strategies could cut the total reading workload by about one‑third to nearly one‑half. Some versions slightly reduced the number of cancers found, while others preserved cancer detection and even reduced the proportion of women called back for more tests.
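The two triage rules can be written out as a per-case decision that also counts human reads. The Python sketch below is a simplified model, not the study's simulation code: the function names and toy cases are hypothetical, the AI output is a yes/no flag at some chosen threshold, and it assumes that any case not settled by the shortcut goes through the usual second read and, if the two humans disagree, arbitration.

```python
from typing import Optional

# (first_reader_recall, ai_recall, second_reader_recall, arbiter_recall)
Case = tuple[bool, bool, bool, Optional[bool]]


def double_read(first: bool, second: bool, arbiter: Optional[bool]) -> tuple[bool, int]:
    """Standard double reading; returns (recall, number_of_human_reads)."""
    if first == second:
        return first, 2
    if arbiter is None:
        raise ValueError("readers disagree, so an arbitration decision is needed")
    return arbiter, 3  # a third expert settles the disagreement


def triage_read(
    first: bool,
    ai_recall: bool,
    second: bool,
    arbiter: Optional[bool],
    negatives_only: bool = False,
) -> tuple[bool, int]:
    """AI stands in for the second reader when it agrees with the first reader.

    With negatives_only=True ("triage negatives") the shortcut is used only
    when both say "no recall"; every potential recall goes to a second human.
    """
    if first == ai_recall and (not negatives_only or not first):
        return first, 1  # agreement stands: one human read for this case
    # Assumption: otherwise the usual second read (and arbitration) applies.
    return double_read(first, second, arbiter)


def relative_workload(cases: list[Case], negatives_only: bool) -> float:
    """Human reads under triage divided by human reads under double reading."""
    triage = sum(
        triage_read(f, ai, s, arb, negatives_only)[1] for f, ai, s, arb in cases
    )
    standard = sum(double_read(f, s, arb)[1] for f, _ai, s, arb in cases)
    return triage / standard


# Hypothetical toy cases for illustration only (not study data).
TOY_CASES: list[Case] = [
    (False, False, False, None),  # everyone says no recall
    (True, True, True, None),     # everyone says recall
    (False, True, False, None),   # AI disagrees, both humans say no recall
    (True, False, False, True),   # humans disagree, arbiter recalls
]

if __name__ == "__main__":
    print(round(relative_workload(TOY_CASES, negatives_only=False), 3))  # 0.778
    print(round(relative_workload(TOY_CASES, negatives_only=True), 3))   # 0.889
```

On these four made-up cases, triage uses 7 of the 9 reads that double reading would need and triage negatives uses 8 of 9; the numbers only illustrate the mechanics and are not study results. The gap shows the design trade-off: triage negatives spends an extra human read when reader and AI both want a recall, in exchange for a second human opinion on every potential recall.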

Finding the best balance for each clinic

The main workflow the authors favoured combined a "triage negatives" setup with the extra‑reader role for AI. In this configuration, all cancers found by routine screening were still detected, plus the 11 additional cancers highlighted by AI. This raised the cancer detection rate by about 10 percent—roughly one extra cancer per 1,000 women screened—while keeping the recall rate essentially unchanged and cutting human reading workload by up to 31 percent. Other tested workflows offered different trade‑offs, such as larger workload savings and fewer false alarms, at the cost of missing a small number of cancers that the primary workflow would have caught.
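The "roughly one extra cancer per 1,000 women" figure can be checked directly from numbers reported in this summary: 11 additional cancers among the 10,889 women in the study. The short Python snippet below does only that division; the helper name is illustrative, and it does not reproduce the study's own detection-rate analysis.

```python
def per_thousand(count: int, population: int) -> float:
    """Express a count as a rate per 1,000 people screened."""
    return 1000 * count / population


extra_cancers = 11       # cancers found only via the AI extra-reader step
women_screened = 10_889  # women in the GEMINI cohort

print(f"{per_thousand(extra_cancers, women_screened):.2f} extra cancers per 1,000 women")
# prints "1.01 extra cancers per 1,000 women"
```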

What this means for future breast screening

To a non‑specialist, the key message is that AI is not poised to replace doctors in breast screening but can serve as a flexible assistant. In this real‑world evaluation, one AI system, used carefully, helped find more cancers without increasing the number of women called back and while freeing up a sizeable fraction of expert reading time. Because the study explored many possible setups, health services can choose the configuration that best fits their needs, whether that is maximising cancer detection, minimising unnecessary recalls, or easing staff shortages. Further work in other regions, with different AI tools and longer follow‑up, will be needed, but this study shows that thoughtfully integrated AI could make breast screening both sharper and more sustainable.

Citation: de Vries, C.F., Lip, G., Staff, R.T. et al. Prospective evaluation of artificial intelligence integration into breast cancer screening in multiple workflow settings: the GEMINI study. Nat Cancer 7, 484–493 (2026). https://doi.org/10.1038/s43018-026-01126-1

Keywords: breast cancer screening, artificial intelligence, mammography, radiology workload, cancer detection