Clear Sky Science · en

A case study comparing anonymized and synthetic health insurance claims data for medication safety assessments

Why this matters for everyday health data

Whenever you visit a doctor or pick up a prescription, digital traces of your care end up in large insurance databases. These records are gold mines for finding rare drug side effects and improving treatment guidelines—but they are also deeply personal. This study asks a simple but crucial question: when we try to protect patient privacy by altering these data, can researchers still trust the medical findings they get back?

Two different ways to hide in the crowd

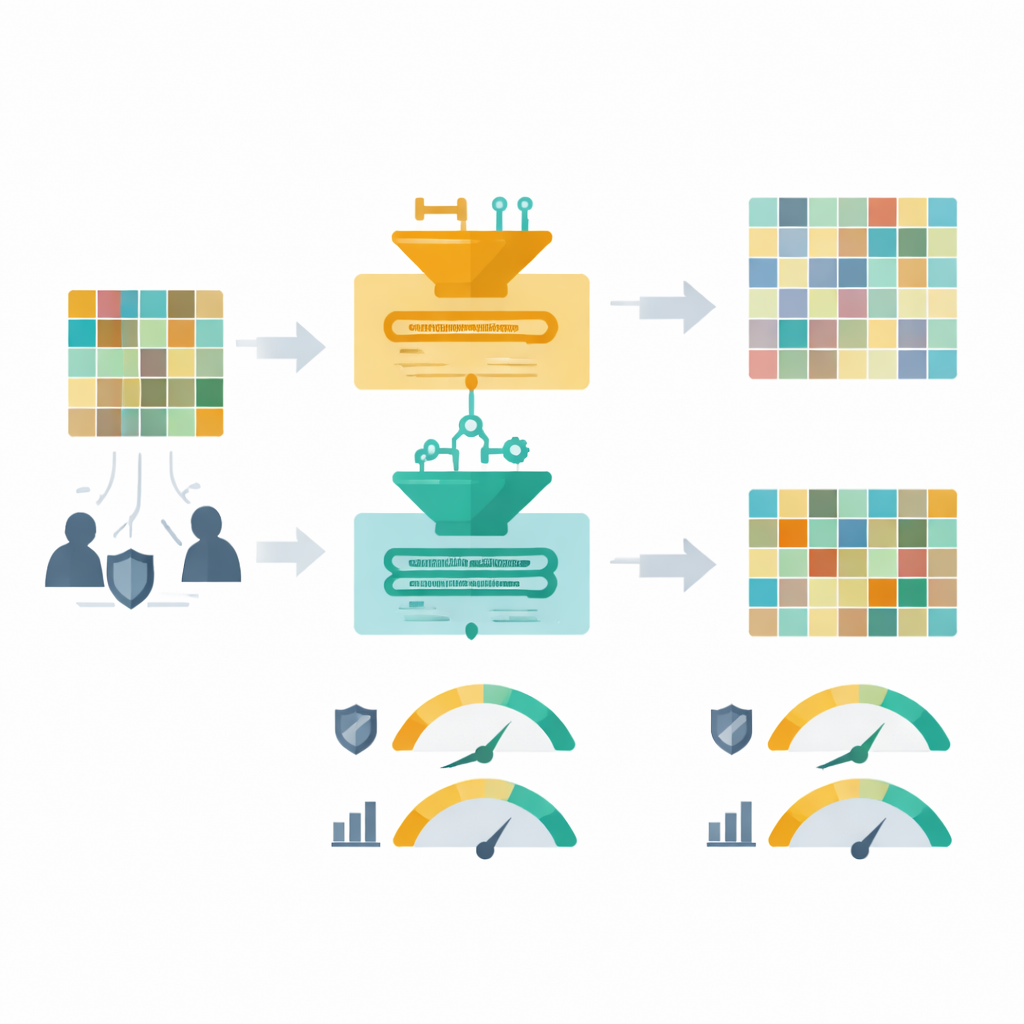

The researchers focused on a real insurance claims dataset about people treated for blood clots in the veins (venous thromboembolism) who took blood thinners plus antiplatelet drugs. One method, called anonymization, keeps the real records but blurs or removes details so individuals are harder to pick out. The other, synthetic data, trains a computer model on the original records and then fabricates an entirely new dataset that follows the same overall patterns without reproducing exact people. The team created three protected versions of the same data: a very cautious anonymized version that protected every variable, a more targeted anonymized version based on a detailed risk analysis, and a fully synthetic version.
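To make the idea of "blurring or removing details" concrete, here is a minimal sketch of one common anonymization approach: generalize exact ages into bands, then drop any record whose combination of attributes is still too rare. The records, the field names, the function names `age_band` and `anonymize`, and the threshold `k` are all illustrative assumptions; the summary does not say which specific techniques or settings the study used.

```python
from collections import Counter

def age_band(age, width=10):
    """Generalize an exact age into a band such as '60-69'."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"

def anonymize(records, k=2):
    """Blur ages into bands, then drop records whose (age band, sex)
    combination is shared by fewer than k people."""
    blurred = [{**r, "age": age_band(r["age"])} for r in records]
    counts = Counter((r["age"], r["sex"]) for r in blurred)
    return [r for r in blurred if counts[(r["age"], r["sex"])] >= k]

# Entirely made-up toy records, not taken from the study.
records = [
    {"age": 61, "sex": "F", "drug": "DOAC"},
    {"age": 67, "sex": "F", "drug": "VKA"},
    {"age": 64, "sex": "M", "drug": "DOAC"},
    {"age": 68, "sex": "M", "drug": "VKA"},
    {"age": 93, "sex": "F", "drug": "DOAC"},  # unusual record, alone in its group
]

protected = anonymize(records, k=2)
print(len(records), "->", len(protected))
```

In this toy example the one unusual record is removed because nobody else shares its age band and sex. That is the basic reason stricter settings cost more records: the more attributes must blend in, the more people end up alone in their group.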

How closely did the copies match the real patients?

To see how much the protected datasets still resembled the original, the authors compared basic features such as age, sex, and common illnesses, and also looked at how variables were related to one another. The very cautious anonymized version lost more than a third of all patient records and dropped many health indicators entirely, which distorted the balance between treatment groups. The more targeted, threat-based anonymization removed fewer records and preserved most patterns better. The synthetic data kept the original number of patients and captured many patterns well, but sometimes shifted proportions for certain conditions or drug exposures. When the team used more advanced statistical checks, the threat-based anonymization and the synthetic data both showed strong overall similarity to the original, while the very strict anonymization looked least like the source data.
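One simple way to put a number on "shifted proportions" is to compare the share of each category in the original and in the protected data. The sketch below uses the total variation distance, which is our choice for illustration and not necessarily one of the checks the authors ran; the data and the function names are made up.

```python
from collections import Counter

def proportions(values):
    """Share of each category among the values."""
    counts = Counter(values)
    total = len(values)
    return {category: n / total for category, n in counts.items()}

def total_variation_distance(original, protected):
    """0 means identical category shares, 1 means no overlap at all."""
    p, q = proportions(original), proportions(protected)
    categories = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0) - q.get(c, 0)) for c in categories)

# Made-up example: sex recorded in an original and a synthetic dataset.
original_sex = ["F"] * 50 + ["M"] * 50
synthetic_sex = ["F"] * 55 + ["M"] * 45

tvd = total_variation_distance(original_sex, synthetic_sex)
print(f"total variation distance: {tvd:.2f}")
```

A value near zero says the protected copy keeps roughly the same mix for that variable. It says nothing about relationships between variables, which need separate checks.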

Could the original safety study be reproduced?

The original clinical question behind these data was whether one class of blood thinners, called direct oral anticoagulants, was safer or riskier than older vitamin K antagonists when combined with antiplatelet drugs. The study looked at two outcomes: deaths from any cause and episodes of major bleeding. Using each protected dataset, the researchers re-ran the same time-to-event analyses that estimate how much one treatment changes risk compared with the other. All hazard ratio estimates that could be calculated landed inside the original study’s uncertainty range, suggesting they did not fundamentally reverse the medical conclusion. But the strict anonymization version lost so many events that some bleeding risks could not be estimated at all, and the statistical uncertainty ballooned. The targeted anonymization and synthetic data did better but still nudged the risk estimates and widened the error bars, especially for rare bleeding events.
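The two checks described here, whether a re-estimated hazard ratio falls inside the original uncertainty range and how much the error bars widen, can be sketched in a few lines. All numbers below are hypothetical placeholders chosen for illustration; they are not results from the study, and the helper names `inside` and `log_width` are our own.

```python
import math

def inside(estimate, interval):
    """True if the estimate lies within the (low, high) interval."""
    low, high = interval
    return low <= estimate <= high

def log_width(interval):
    """Interval width on the log scale, the usual scale for hazard ratios."""
    low, high = interval
    return math.log(high) - math.log(low)

# Hypothetical values for illustration only, not taken from the study.
original_hr, original_ci = 0.80, (0.60, 1.07)
protected_hr, protected_ci = 0.90, (0.55, 1.47)

consistent = inside(protected_hr, original_ci)
widening = log_width(protected_ci) / log_width(original_ci)

print(f"inside original interval: {consistent}")
print(f"interval is {widening:.2f} times as wide on the log scale")
```

Passing the first check is a fairly weak test. An estimate can sit inside the original range and still be too imprecise to support a conclusion on its own, which is why the widening of the interval matters as well.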

How safe are the protected datasets from prying eyes?

Next, the team asked how hard it would be for a determined attacker to re-identify someone or to infer sensitive health details. They used state-of-the-art “red team” tests that try to link records to outside information, single out individuals, guess missing attributes, or detect whether a person’s record was used to build the dataset in the first place. Against the original data, these attacks were highly successful, underscoring the need for extra protection before any wider sharing. All three protected versions sharply reduced these privacy risks under both a realistic, limited-attacker scenario and an aggressive, worst-case scenario. The strict anonymization offered the strongest protection overall but at the cost of the greatest information loss. The threat-based anonymization and the synthetic data provided a more balanced trade-off, though each showed small pockets where certain attributes or unusual records were a bit more exposed.
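A very rough proxy for the "singling out" risk is the share of records that are unique on a handful of attributes an outsider might plausibly know. The sketch below is only that proxy, using made-up records and a hypothetical helper `share_unique`; the attack tests used in the study are more elaborate and are not reproduced here.

```python
from collections import Counter

def share_unique(records, keys):
    """Fraction of records whose combination of the given attributes is unique."""
    combos = [tuple(r[k] for k in keys) for r in records]
    counts = Counter(combos)
    return sum(1 for c in combos if counts[c] == 1) / len(records)

# Made-up records: exact ages versus the same people with ages blurred into bands.
raw = [
    {"age": 61, "sex": "F"},
    {"age": 67, "sex": "F"},
    {"age": 64, "sex": "M"},
    {"age": 68, "sex": "M"},
]
blurred = [
    {"age": "60-69", "sex": "F"},
    {"age": "60-69", "sex": "F"},
    {"age": "60-69", "sex": "M"},
    {"age": "60-69", "sex": "M"},
]

raw_risk = share_unique(raw, ["age", "sex"])
blurred_risk = share_unique(blurred, ["age", "sex"])
print(raw_risk, blurred_risk)
```

With exact ages, every toy record is unique; after blurring, none is. Real datasets land somewhere in between, and the unusual records that remain unique are the "small pockets" where exposure is higher.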

What this means for using protected health data

For this small but complex claims dataset, no single protection strategy clearly won on every front. Stronger privacy almost always came with weaker scientific signal, especially for rare events that matter in safety studies. The authors conclude that both carefully designed anonymization and well-executed synthetic data can make insurance data much safer to share, but protected datasets of this size are best suited for testing methods and running feasibility checks, not for drawing final clinical conclusions. Whenever possible, key medical findings should still be confirmed on the original, tightly governed data, using protected versions as complementary tools rather than complete replacements.

Citation: Halilovic, M., Meurers, T., Alibone, M. et al. A case study comparing anonymized and synthetic health insurance claims data for medication safety assessments. npj Digit. Med. 9, 321 (2026). https://doi.org/10.1038/s41746-026-02622-5

Keywords: health data privacy, synthetic data, data anonymization, insurance claims research, medication safety