Clear Sky Science

Multimodal multi-instance learning for cardiopulmonary exercise testing performance prediction

Why this matters for people with weak hearts

For people living with heart failure, one of the biggest questions is, “How much time do I have, and what can doctors still do for me?” The best medical test for answering that today is a demanding treadmill or bike exam that measures how much oxygen the body can use during intense exercise. But this test is hard to get and isn’t available at many hospitals. This study shows how doctors might instead use common heart ultrasound scans and information already in the medical record, combined with modern artificial intelligence, to estimate the same crucial number and flag patients who may need life‑saving advanced therapies.

The challenge of spotting danger early

Heart failure affects millions of Americans and often steals more than a decade of life. At its most advanced stage, survival can be worse than for many cancers, yet only a small fraction of patients receive treatments like heart transplantation or mechanical pumps in time. A key tool for deciding who should be referred for these therapies is cardiopulmonary exercise testing, which measures “peak VO₂,” the maximum oxygen the body can use during exercise. Low peak VO₂ is a strong warning sign, but the test requires special equipment, trained staff, and space, so many centers, especially smaller or rural hospitals, cannot offer it. In contrast, standard heart ultrasound scans (transthoracic echocardiography, or TTE) and electronic health records (EHRs) are widely available but, on their own, have not been very good at predicting who is at highest risk.

Teaching computers to read across tests

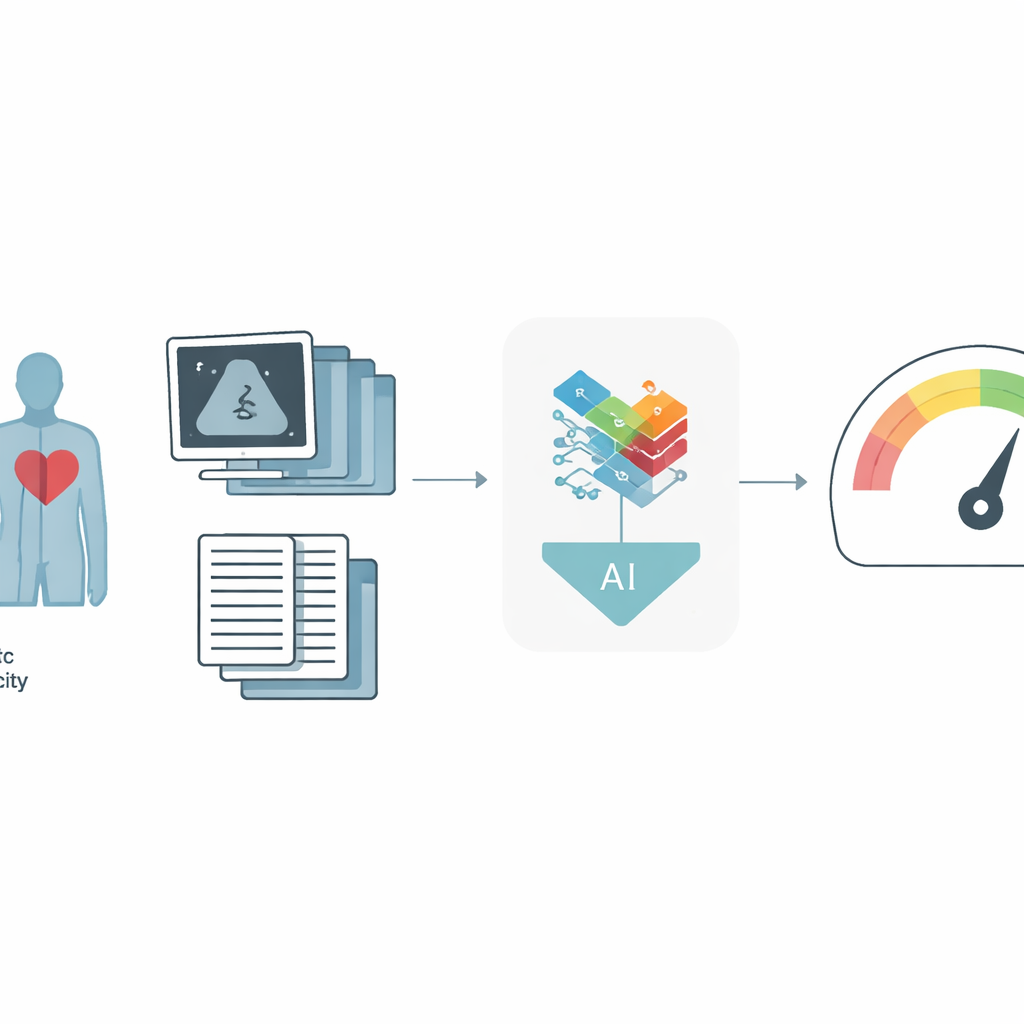

The researchers built a new artificial intelligence system that learns from two major sources of information: moving ultrasound images of the heart and detailed data from the EHR, such as age, weight, medications, and standard heart measurements. Each ultrasound exam contains many clips and specialized views, so instead of treating each picture separately, the model reviews all of them together, more like a physician would. It uses a “multi‑instance” strategy: first turning each image or clip into a compact description, then combining them with an attention mechanism that lets the model focus on the most informative parts. In parallel, a specialized neural network trained on many kinds of table‑shaped medical data converts the EHR information into its own summary. A final fusion step blends the ultrasound and EHR summaries into a single portrait of the patient, from which the system predicts peak VO₂ and whether the person falls below a critical safety threshold.
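
For readers who want to see the idea in code, the sketch below shows one common form of attention-based multi-instance pooling, followed by a simple fusion step. It is a minimal illustration and not the study's implementation: the function names, the tanh scoring form, the random stand-in embeddings, and the use of plain concatenation for fusion are all assumptions made for this example.

```python
import numpy as np

def attention_pool(instances, V, w):
    """Combine per-clip embeddings into one summary using attention weights.

    instances: (n_clips, d) array, one compact description per clip
    V: (d, h) projection matrix, w: (h,) scoring vector
    (V and w stand in for learned parameters; hypothetical here)
    """
    scores = np.tanh(instances @ V) @ w      # one relevance score per clip
    scores = scores - scores.max()           # stabilise the softmax
    weights = np.exp(scores) / np.exp(scores).sum()
    summary = weights @ instances            # weighted average of clips
    return summary, weights

def fuse(echo_summary, ehr_summary):
    """Blend the two summaries; concatenation is an assumed, simple choice."""
    return np.concatenate([echo_summary, ehr_summary])

rng = np.random.default_rng(0)
clips = rng.normal(size=(12, 8))   # 12 clips, 8 numbers each (toy data)
V = rng.normal(size=(8, 4))
w = rng.normal(size=4)

echo_summary, weights = attention_pool(clips, V, w)
ehr_summary = rng.normal(size=5)   # stand-in for the EHR network's output
patient = fuse(echo_summary, ehr_summary)
```

The attention weights sum to one, so a clip with a larger weight contributes more to the ultrasound summary. In a real system a prediction layer would sit on top of `patient` to output peak VO₂ and the high-risk flag.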

How well the system performs

The team trained and tested their approach on data from four large hospitals in the New York–Presbyterian network, using 1,000 patients for development and 127 patients from separate sites for external validation. Compared with an earlier, simpler AI model that handled ultrasound and EHR data more independently, the new framework was clearly more accurate. It explained about 60% of the variation in peak VO₂ in the main test group, versus about 53% before, and its typical error shrank by roughly half a metabolic equivalent of task (about 1.75 mL of oxygen per kilogram of body weight per minute), a clinically meaningful improvement. When the goal was simply to identify high-risk patients, meaning those with especially low exercise capacity, the system reached an area under the curve of 0.85 in the development group and 0.87 in the external hospitals, outperforming all models that used only ultrasound or only EHR data. In practical terms, at a fixed, clinically reasonable trade-off between missed cases and false alarms, the new system correctly flagged more of the truly high-risk patients.
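
The two headline numbers, "variation explained" (R²) and area under the curve (AUC), can be computed as in the sketch below. The data are made-up toy values, not study data, and the cutoff `HIGH_RISK_CUTOFF` is a hypothetical placeholder, since this summary does not state the threshold the authors used.

```python
import numpy as np

def r_squared(y_true, y_pred):
    """Share of the variation in y_true that the predictions account for."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)         # leftover error
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total variation
    return 1.0 - ss_res / ss_tot

def auc(labels, scores):
    """Chance that a random high-risk patient gets a higher risk score
    than a random lower-risk patient (ties count as half)."""
    labels = np.asarray(labels, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    pos, neg = scores[labels], scores[~labels]
    diff = pos[:, None] - neg[None, :]              # every pos/neg pair
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()
    return float(wins) / (len(pos) * len(neg))

# Toy peak VO2 values in mL/kg/min (illustrative only)
measured = np.array([10.0, 14.0, 18.0, 22.0])
predicted = np.array([12.0, 13.0, 19.0, 20.0])

HIGH_RISK_CUTOFF = 14.0                  # hypothetical threshold
is_high_risk = measured <= HIGH_RISK_CUTOFF
risk_score = -predicted                  # lower predicted VO2 = higher risk

r2 = r_squared(measured, predicted)
area = auc(is_high_risk, risk_score)
```

An R² of 0.60 means the predictions account for 60% of the patient-to-patient variation in measured peak VO₂. An AUC of 0.5 is no better than a coin flip, and 1.0 is a perfect ranking of high-risk above lower-risk patients.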

Looking inside the black box

To check that the model was paying attention to sensible features, the authors created visual maps over the ultrasound images showing which regions most influenced the predictions. The maps tended to highlight heart chambers, their movement, and blood‑flow waveforms—features that cardiologists already rely on—suggesting that the system is learning meaningful patterns rather than noise. In the EHR data, measures like age, body mass index, and left‑ventricular pumping strength emerged as especially important, again matching clinical expectations. The researchers also examined how well the model worked in different subgroups. Performance was similar for men and women and for white and non‑white patients when predicting the exact peak VO₂ value, though some gaps appeared in older adults and in high‑risk classification across races, underscoring the need for more diverse data and fairness‑focused refinement.

From research to bedside care

Because the system uses information already collected in routine care—standard echocardiograms and existing EHR data—it could, in principle, be embedded directly into hospital software. After a scan is read, the AI could quietly estimate peak VO₂ and highlight patients whose predicted exercise capacity is dangerously low, prompting doctors to order formal exercise testing or refer them to advanced heart failure specialists. The study’s results, including strong performance on hospitals not used in training, suggest that such a tool could help catch more patients in trouble who might otherwise be overlooked. While prospective trials and broader testing are still needed, this work points toward a future where powerful but scarce tests are complemented by AI systems that make smarter use of the data most hospitals already have.

Citation: Huang, Z., Pan, W., Alishetti, S. et al. Multimodal multi-instance learning for cardiopulmonary exercise testing performance prediction. npj Digit. Med. 9, 304 (2026). https://doi.org/10.1038/s41746-026-02493-w

Keywords: heart failure, cardiopulmonary exercise testing, echocardiography, artificial intelligence, risk prediction