Clear Sky Science

Artificial intelligence-enabled ultrasound diagnosis and stratification of follicular thyroid neoplasms: a multi-center study

Why this matters for people with thyroid nodules

Many people discover thyroid nodules during routine checkups and then face anxious weeks waiting to learn whether the growth is harmless or cancerous. This study explores whether artificial intelligence (AI) can read ultrasound images of a specific group of thyroid growths—called follicular neoplasms—more accurately than human experts, helping patients avoid unnecessary surgery while making sure dangerous tumors are not missed.

When harmless and harmful look the same

Follicular thyroid tumors come in two main forms: adenomas, which are benign, and carcinomas, which can invade blood vessels and spread. Both under the microscope and on ultrasound scans, these tumors often look strikingly similar. Even experienced radiologists and pathologists can struggle to tell them apart before surgery, which means many patients undergo removal of half or all of the thyroid just to get a definitive diagnosis. The stakes are high: some carcinomas are only mildly invasive and carry a good outlook, whereas others are more aggressive, so knowing the exact type can shape how much surgery and follow-up a patient truly needs.

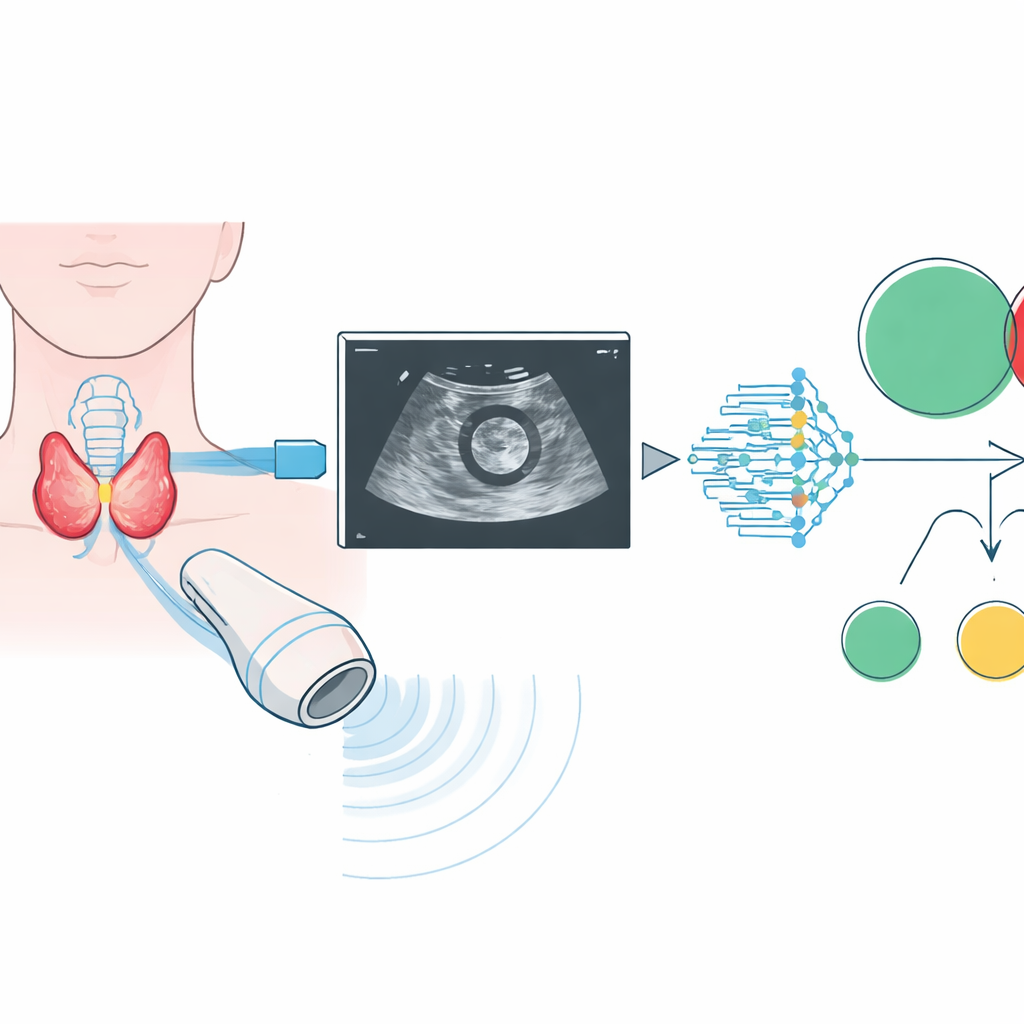

Teaching a computer to read thyroid ultrasounds

The researchers assembled one of the largest collections of follicular thyroid ultrasound images to date, drawing on 2,567 patients from 31 hospitals across China. For each nodule, radiologists outlined the relevant region on standard black-and-white ultrasound images. A modern deep learning system, based on a visual architecture known as ConvNeXt, was then trained in stages. First it learned to distinguish benign adenomas from carcinomas. Next, among the carcinomas, it learned to sort them into less invasive and more invasive subtypes, which roughly correspond to low-, intermediate-, and high-risk disease. The team tested different kinds of ultrasound information and found that simple B‑mode images—standard gray-scale scans—were more reliable for the AI than color blood-flow images, which varied too much in quality.
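
For readers who think in code, the staged decision described above can be pictured as a simple cascade. This is a minimal sketch, not the study's implementation: the function name `stratify`, the two probability inputs, and the 0.5 cutoffs are all assumptions made for illustration, and the article does not state how the real system combines its two stages.

```python
def stratify(p_carcinoma, p_more_invasive, cut_stage1=0.5, cut_stage2=0.5):
    """Two-stage cascade over hypothetical model outputs.

    p_carcinoma: stage-1 probability that the nodule is a carcinoma.
    p_more_invasive: stage-2 probability that a carcinoma is the more
        invasive subtype (only consulted if stage 1 says carcinoma).
    The cutoffs are illustrative assumptions, not values from the study.
    """
    for p in (p_carcinoma, p_more_invasive):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")

    # Stage 1: benign adenoma versus carcinoma.
    if p_carcinoma < cut_stage1:
        return "adenoma"

    # Stage 2: among carcinomas, less invasive versus more invasive.
    if p_more_invasive < cut_stage2:
        return "minimally invasive carcinoma"
    return "more invasive carcinoma"


# Made-up example values, not patient data.
print(stratify(0.20, 0.90))  # stage 1 stops here, stage 2 is ignored
print(stratify(0.80, 0.30))
print(stratify(0.80, 0.70))
```

The point of the cascade is that the second question is only asked once the first has been answered "carcinoma", which mirrors the order in which the system was trained.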

How well the AI performed in the real world

To see if the system would hold up outside the labs where it was built, the authors tested it on three independent groups of patients from other hospitals, each with different mixes of benign and malignant tumors. Across these centers, the AI showed consistently strong performance when separating adenomas from carcinomas, with area-under-the-curve (AUC) values of about 0.82 to 0.85, on a scale where 0.5 is no better than chance and 1.0 is perfect separation. It also did well at its more demanding task: ranking tumors into three groups (benign, minimally invasive cancer, and more aggressive invasive cancer), again keeping high performance across all hospitals. Importantly, the model worked about equally well for men and women, for different surgical approaches, and across most geographic regions, suggesting that it could be useful in a wide range of clinical settings.
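
To make the AUC figure more concrete, here is a small sketch of how such a value can be computed. It uses the standard pairwise interpretation: the AUC is the fraction of (carcinoma, adenoma) pairs in which the carcinoma receives the higher score, with ties counted as half. The function name `auc`, the 1/0 label coding, and the toy numbers are assumptions for illustration only; none of the data below comes from the study.

```python
def auc(labels, scores):
    """Area under the ROC curve via pairwise comparison.

    labels: 1 for carcinoma, 0 for adenoma (hypothetical coding).
    scores: a model's predicted probability of carcinoma.
    """
    if len(labels) != len(scores):
        raise ValueError("labels and scores must have the same length")

    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one carcinoma and one adenoma")

    # Count pairs where the carcinoma outranks the adenoma; ties count half.
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Made-up toy data: three carcinomas and four adenomas.
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.1]
print(round(auc(labels, scores), 3))
```

Read this way, an AUC near 0.85 means that in roughly 85 of every 100 such pairs the model scores the carcinoma above the adenoma.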

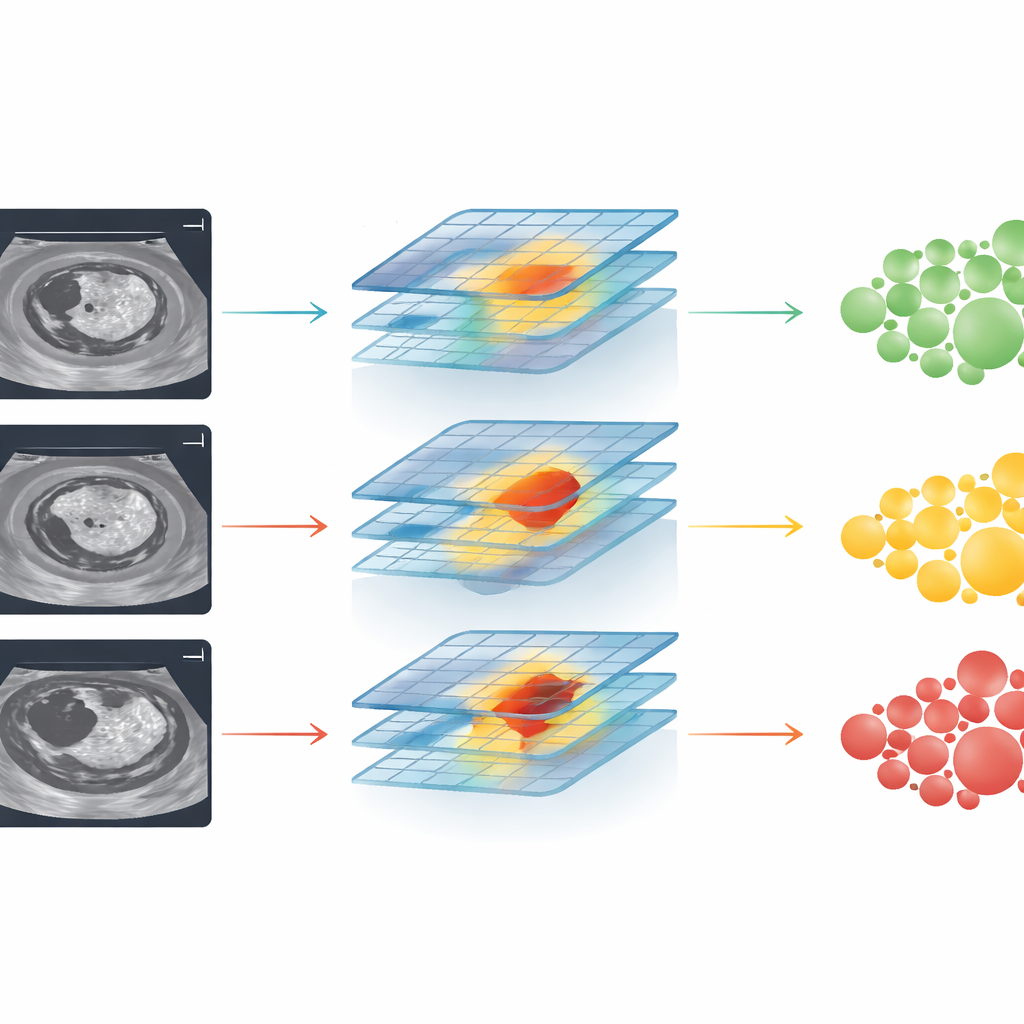

Working alongside, not instead of, radiologists

The study also asked a practical question: does this AI actually help doctors make better decisions? When radiologists used only established scoring systems for thyroid ultrasound, their performance in identifying carcinomas was noticeably worse than the AI’s. When shown the AI’s output and its highlighted “attention maps” on the same images, their accuracy climbed and in some cases nearly matched the computer’s. Junior doctors benefited the most, but even seasoned specialists gained an edge. At the same time, analysis of cases the AI got wrong revealed a key weakness: in those images, the system tended to focus on areas outside the true tumor rather than on the suspicious internal features, hinting at where further refinements are needed.

What this could mean for patients

In plain terms, this work suggests that a well-trained AI can serve as a second set of highly consistent eyes when doctors read thyroid ultrasounds. For patients with follicular thyroid nodules, such a tool could improve the odds that a dangerous carcinoma is recognized before surgery, while reducing the chance that a harmless adenoma triggers overly aggressive treatment. The model is not ready to replace expert judgment, and it still needs testing in other countries and more varied patient groups. But as part of an ultrasound workstation or scanner, it could soon help tailor surgery and follow-up to each person’s true level of risk, turning a once murky gray area of thyroid diagnosis into a clearer path forward.

Citation: Li, J., Zhang, H., Zheng, H. et al. Artificial intelligence-enabled ultrasound diagnosis and stratification of follicular thyroid neoplasms: a multi-center study. npj Digit. Med. 9, 313 (2026). https://doi.org/10.1038/s41746-026-02489-6

Keywords: thyroid ultrasound, follicular neoplasm, artificial intelligence, cancer risk stratification, medical imaging