Clear Sky Science

A novel intelligent hybrid reinforcement learning framework for autonomous decision making in complex health cognitive systems

Smarter Help for Patients Who Need Constant Care

For people with complex movement problems such as mixed cerebral palsy, every small decision about posture, medication, or alarms can affect safety and comfort. This study explores how a brain-inspired computer system can assist caregivers by watching many signals at once, thinking ahead about risks, and still behaving in ways doctors can understand and trust.

Why Current Hospital Computers Fall Short

Many hospital systems already use artificial intelligence, but most struggle in messy real-world settings. They often need huge amounts of training data, have trouble planning several steps into the future, and cannot easily explain why they make a recommendation. In health care these weaknesses are serious, because you cannot freely "try and see" with real patients, and every wrong move may harm someone. The authors argue that instead of a single rule set or one monolithic learning model, clinical support tools should mimic how the human brain mixes quick habits with slower planning and constant checks on safety and fairness.

A Brain Inspired Decision Partner

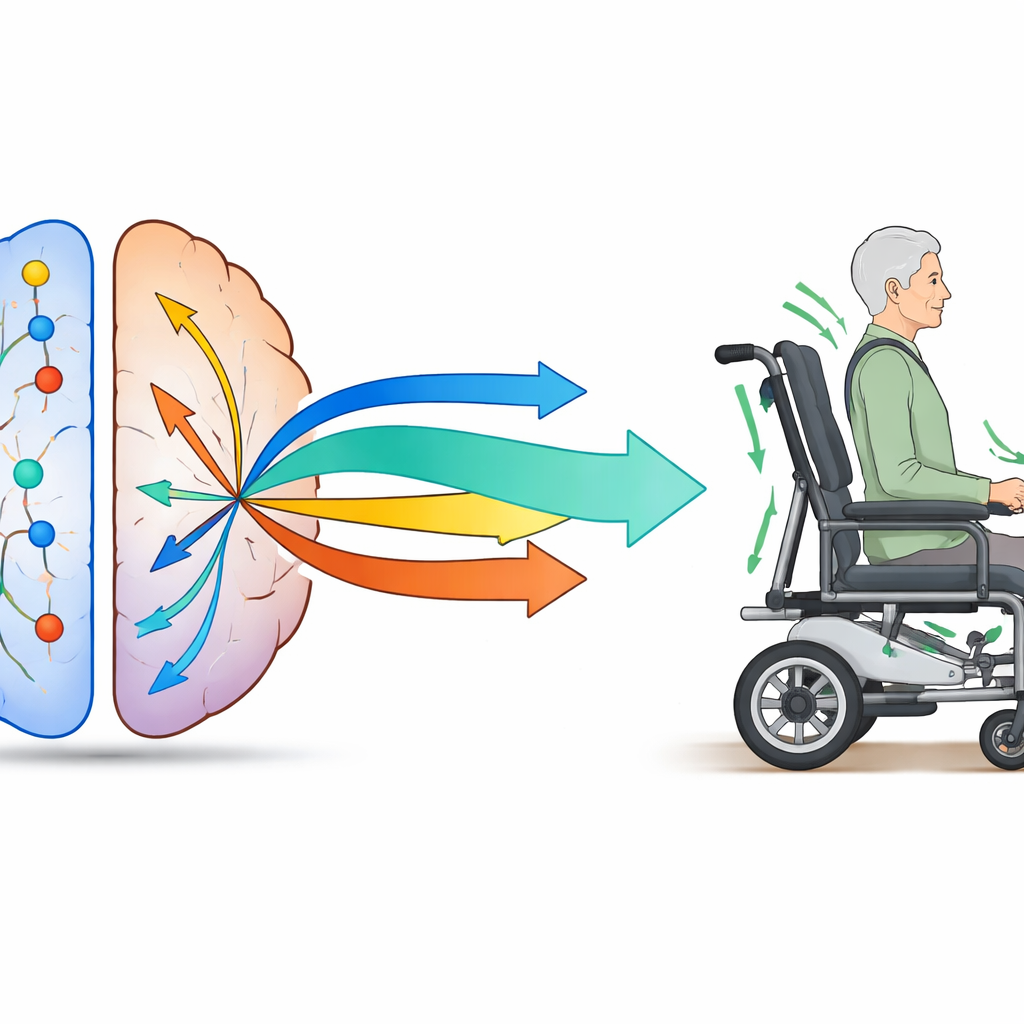

The team designs a hybrid learning framework that mirrors several parts of the brain. One part behaves like quick reflexes: it reacts fast using patterns it has seen before, for example gently changing a wheelchair angle when sensors detect minor discomfort. Another part behaves like deliberate planning: it mentally tests different options before acting, such as comparing the likely effects of giving medicine versus simply repositioning the patient. A higher-level "meta controller" constantly decides which style to use in each moment, based on how uncertain or risky the situation appears, much like the human brain switches between habit and careful thought.
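The summary describes the meta controller only in outline. The Python sketch that follows assumes one simple arbitration rule: hand control to the planner whenever the fast path's uncertainty exceeds a threshold that shrinks as risk rises. The function names, the threshold formula, and the action names and scores are hypothetical illustrations, not the authors' method.

```python
from typing import Callable, Dict, List, Tuple


def habit_action(cached_values: Dict[str, float]) -> str:
    """Fast 'reflex' path: reuse the best remembered action value, with no lookahead."""
    return max(cached_values, key=cached_values.get)


def plan_action(actions: List[str], predict_outcome: Callable[[str], float]) -> str:
    """Slow 'deliberate' path: score every option with a predictive model before acting."""
    return max(actions, key=predict_outcome)


def meta_controller(uncertainty: float, risk: float, base_threshold: float = 0.5) -> str:
    """Hypothetical arbitration rule. The threshold shrinks as risk grows, so risky
    moments go to the planner even when the fast path is only mildly unsure."""
    threshold = base_threshold * (1.0 - risk)
    return "plan" if uncertainty > threshold else "habit"


def decide(
    cached_values: Dict[str, float],
    predict_outcome: Callable[[str], float],
    uncertainty: float,
    risk: float,
) -> Tuple[str, str]:
    """Return (mode, action) using whichever path the meta controller selects."""
    mode = meta_controller(uncertainty, risk)
    if mode == "habit":
        return mode, habit_action(cached_values)
    return mode, plan_action(list(cached_values), predict_outcome)


if __name__ == "__main__":
    # Hypothetical remembered values and model predictions for three care actions.
    cached = {"tilt_chair": 0.8, "reposition": 0.6, "give_medication": 0.3}
    predicted = {"tilt_chair": 0.4, "reposition": 0.7, "give_medication": 0.5}

    print(decide(cached, predicted.__getitem__, uncertainty=0.1, risk=0.2))
    # ('habit', 'tilt_chair')
    print(decide(cached, predicted.__getitem__, uncertainty=0.3, risk=0.6))
    # ('plan', 'reposition')
```

In the first call a calm, familiar moment keeps the cached "habit" choice. In the second, higher risk lowers the threshold, so the decision goes to the planner, which prefers repositioning. A real system would learn the value estimates, the predictive model, and the uncertainty signal rather than hard-coding them.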

Blending Medical Knowledge with Data

To keep the system trustworthy, the authors weave formal medical knowledge directly into the learning process. The model takes in hospital sensor streams, treatment records, facial expressions, and environmental readings, but it also consults accepted clinical references such as WHO guidelines and ICD-10 diagnostic codes. These symbolic rules help it recognize known conditions, suggest approved therapies, and justify why a given step matches established practice. The framework also runs "what if" simulations, asking how a patient would likely have fared if the team had chosen a different action. This lets it learn safely from past records instead of through risky trial and error on real patients. Meanwhile, an ethical module watches for drops in performance on vulnerable subgroups and nudges the policy back toward fair behavior.
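The summary does not say how the ethical module measures or corrects unfairness. One common pattern, sketched here in Python under that assumption, is to compare each subgroup's average outcome with the overall average and subtract a penalty from the training objective when any group falls too far behind. The group labels, the tolerance, and the penalty weight are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def subgroup_gaps(outcomes: Dict[str, List[float]]) -> Dict[str, float]:
    """Each subgroup's mean outcome minus the overall mean (negative means worse off)."""
    all_values = [x for values in outcomes.values() for x in values]
    overall = mean(all_values)
    return {group: mean(values) - overall for group, values in outcomes.items()}


def fairness_penalty(
    outcomes: Dict[str, List[float]], tolerance: float = 0.05, weight: float = 1.0
) -> float:
    """Grows with how far each subgroup falls more than `tolerance` below average."""
    gaps = subgroup_gaps(outcomes)
    shortfall = sum(max(0.0, -gap - tolerance) for gap in gaps.values())
    return weight * shortfall


def adjusted_objective(
    mean_reward: float,
    outcomes: Dict[str, List[float]],
    tolerance: float = 0.05,
    weight: float = 1.0,
) -> float:
    """Hypothetical objective the policy maximizes: reward minus the fairness penalty,
    so updates that leave a subgroup behind score worse and are discouraged."""
    return mean_reward - fairness_penalty(outcomes, tolerance, weight)


if __name__ == "__main__":
    # Hypothetical per-patient outcome scores for two subgroups.
    outcomes = {"group_a": [0.9, 0.8], "group_b": [0.5, 0.6]}
    print(round(fairness_penalty(outcomes), 3))  # 0.1
    print(round(adjusted_objective(1.0, outcomes), 3))  # 0.9
```

Here group_b averages about 0.15 below the overall mean, which is 0.10 beyond the tolerance, so the objective drops from 1.0 to 0.9. A policy update that closed the gap would recover that score, which is the "nudge" in this sketch.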

Testing the System in a Virtual Ward

The researchers tested their design in detailed computer simulations of a ward treating 86 patients with mixed cerebral palsy. Virtual agents represent patients, caregivers, and environmental hazards such as high room temperature or smoke. The system monitors body sensors, facial cues such as crying, wheelchair tilt, and clinicians' decisions, then issues care instructions or triggers automatic safety actions. Compared with conventional learning methods, the hybrid system reaches near-optimal performance with about half as much training data, responds more reliably in unusual edge cases, and cuts simulated falls by about 40 percent. It also scores very highly on explainability measures, meaning its decisions can be traced back to recognizable signals and medical rules.

Beyond One Disease and One Hospital

To see whether their ideas transfer beyond this single case, the authors also test the trained framework, with its core decision logic left unchanged, on publicly available datasets, including children’s activity traces, signals from a hand exoskeleton, and robotic control tasks. With only light adaptation at the input stage, the core still performs well, suggesting that the same brain-inspired layout could support many different health and control scenarios. This consistent performance across tasks, together with the simulation results and statistical checks, points toward tools that can share knowledge across conditions without being retrained from scratch each time.
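The summary says only that the input stage is lightly adapted while the core decision logic stays fixed. A minimal Python sketch of that idea follows, assuming the adaptation is nothing more than a column mapping plus per-feature standardization fitted on the new dataset. The `frozen_core` rule, the core feature names, and the exoskeleton-style columns are hypothetical stand-ins, not the authors' model.

```python
from statistics import mean, pstdev
from typing import Callable, Dict, List

Sample = Dict[str, float]


def frozen_core(x: Sample) -> str:
    """Hypothetical stand-in for the trained decision core; transfer never changes it."""
    return "intervene" if x["motion_z"] > 1.0 or x["strain_z"] > 1.0 else "monitor"


def fit_input_adapter(samples: List[Sample], mapping: Dict[str, str]) -> Callable[[Sample], Sample]:
    """Fit only the input stage for a new dataset.

    `mapping` links each core input name to a column in the new data. Each mapped
    column is z-scored with statistics from the new data, so the frozen core sees
    inputs on the scale it expects.
    """
    stats = {}
    for core_name, column in mapping.items():
        values = [s[column] for s in samples]
        sd = pstdev(values) or 1.0  # constant column: avoid dividing by zero
        stats[core_name] = (column, mean(values), sd)

    def adapt(sample: Sample) -> Sample:
        return {name: (sample[col] - mu) / sd for name, (col, mu, sd) in stats.items()}

    return adapt


if __name__ == "__main__":
    # Hypothetical readings from a new source, e.g. a hand exoskeleton.
    samples = [
        {"finger_velocity": 1.0, "grip_force": 10.0},
        {"finger_velocity": 2.0, "grip_force": 20.0},
        {"finger_velocity": 3.0, "grip_force": 30.0},
    ]
    adapt = fit_input_adapter(samples, {"motion_z": "finger_velocity", "strain_z": "grip_force"})
    print([frozen_core(adapt(s)) for s in samples])
    # ['monitor', 'monitor', 'intervene']
```

Only the mapping and two statistics per column change between datasets; the decision rule itself is reused as is, which is the sense in which the core stays "frozen" in this sketch.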

What This Means for Future Patient Care

In plain terms, the study introduces a digital assistant that watches over patients much like a careful nurse whose instincts are backed by textbooks and the ability to rehearse choices in her head. By blending fast reactions, thoughtful planning, medical guidelines, and fairness checks, the framework offers a path toward safer, more understandable automation in rehabilitation and other complex care settings. While it still needs real-world trials, the work outlines how future bedside systems might quietly adjust chairs, flag problems, and suggest treatments in ways that support rather than replace human clinicians.

Citation: Abdullah, Fatima, Z., Ather, M.A. et al. A novel intelligent hybrid reinforcement learning framework for autonomous decision making in complex health cognitive systems. Sci Rep 16, 14721 (2026). https://doi.org/10.1038/s41598-026-50418-0

Keywords: reinforcement learning, cerebral palsy, clinical decision support, healthcare AI, autonomous agents