Clear Sky Science

Automated landscape element recognition and layout optimization based on image segmentation and object detection

Smarter Parks and Green Spaces

Cities everywhere are racing to add more trees, parks, and walking paths, but designing these spaces well is surprisingly hard. Planners must juggle beauty, comfort, safety, and climate while combing through thousands of photos, maps, and site visits. This paper introduces a way to let computers do much of the heavy lifting: automatically reading landscape images, understanding what is in them, and suggesting better layouts for future parks and public spaces.

Why Urban Landscapes Need a New Toolkit

Traditional landscape design relies on experts walking sites, taking notes, and sketching options by hand. This is slow, expensive, and can lead to inconsistent results from one designer or project to the next. As cities grow denser and climate pressures mount, planners need tools that can quickly scan large areas, recognize where trees, lawns, water, paths, and buildings already are, and test many design ideas before anyone pours concrete or plants a single shrub. The authors argue that advances in computer vision and artificial intelligence now make it possible to move from handcrafted drawings to data-driven design support.

Teaching Computers to Read the City

To make this work, the researchers first built a large image collection of real landscapes: over 17,000 photos from parks, gardens, campuses, rooftops, and waterfronts. Experts carefully labeled each image so that a computer could learn to spot key elements such as deciduous and evergreen trees, shrubs, grass, water, pathways, buildings, benches, playgrounds, lights, flower beds, and rocks. On this dataset they trained visual models for two complementary tasks. The first assigns a category to every pixel (image segmentation), outlining the precise shapes of lawns, ponds, and tree canopies. The second draws a separate outline around each individual object, such as a bench or a piece of playground equipment, while also locating it in space (object detection).
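To make the pixel-labeling output concrete, here is a minimal sketch in Python. It assumes a segmentation model has already produced a label map (one integer category per pixel) and shows how such a map turns into a simple measurement: the share of the image covered by each element. The category list, the toy map, and the function name `area_shares` are hypothetical illustrations, not the authors' code.

```python
from collections import Counter

# Hypothetical subset of the categories named above; list index = pixel label.
CLASSES = ["grass", "tree", "water", "pathway", "bench"]

def area_shares(label_map: list[list[int]], classes: list[str]) -> dict[str, float]:
    """Return the fraction of pixels assigned to each category.

    label_map holds one integer label per pixel, the kind of output a
    semantic segmentation model produces for a photo.
    """
    counts = Counter(label for row in label_map for label in row)
    total = sum(counts.values())
    return {name: counts.get(i, 0) / total for i, name in enumerate(classes)}

# A toy 4 x 5 "photo" that has already been labeled pixel by pixel.
toy_map = [
    [1, 1, 0, 0, 2],
    [1, 1, 0, 0, 2],
    [3, 3, 3, 3, 3],
    [0, 0, 4, 0, 0],
]
shares = area_shares(toy_map, CLASSES)
for name, share in shares.items():
    print(f"{name:8s} {share:.0%}")
```

Summing such shares over many photos is one way the "measuring and counting" work described later could be automated.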

From Raw Photos to Recognized Elements

The heart of the system is a new image analysis network designed specifically for messy outdoor scenes. It looks at each photo at several scales at once, so it can notice both fine details like the edge of a path and big structures like a building or pond. Special "attention" mechanisms help the network focus on the most important regions and ignore cluttered backgrounds. Another part of the design pays extra attention to boundaries, so that the border between a shrub and a lawn, or between a path and a flower bed, is drawn sharply. In tests, this approach proved noticeably more accurate than several well-known computer vision methods originally built for streets or generic objects, especially for tricky categories such as water edges and irregular vegetation.
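The summary does not spell out how the boundary component works. One common way to make a segmentation network care about edges, sketched here as an assumption rather than the authors' design, is to derive a boundary map from the labeled image and up-weight those pixels in the training loss. The function names, the four-neighbour rule, and the boundary weight of 5 are illustrative choices.

```python
import math

def boundary_map(label_map: list[list[int]]) -> list[list[int]]:
    """Return 1 for pixels whose up/down/left/right neighbour has a different
    category label and 0 elsewhere, i.e. a thin band along every region edge."""
    h, w = len(label_map), len(label_map[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            for dy, dx in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                ny, nx = y + dy, x + dx
                if 0 <= ny < h and 0 <= nx < w and label_map[ny][nx] != label_map[y][x]:
                    out[y][x] = 1
                    break
    return out

def weighted_pixel_loss(probs, labels, boundary, boundary_weight=5.0):
    """Weighted mean cross-entropy. probs[y][x][c] is the predicted probability
    of class c at pixel (y, x); boundary pixels count boundary_weight times."""
    total = weight_sum = 0.0
    for y, row in enumerate(labels):
        for x, label in enumerate(row):
            w = boundary_weight if boundary[y][x] else 1.0
            total += -w * math.log(probs[y][x][label])
            weight_sum += w
    return total / weight_sum

# Toy scene: lawn (label 0) on the left, pathway (label 3) on the right.
labels = [
    [0, 0, 3, 3],
    [0, 0, 3, 3],
    [0, 0, 3, 3],
]
edges = boundary_map(labels)
uniform = [[[0.25] * 4 for _ in row] for row in labels]  # an uninformed prediction
print(edges)
print(weighted_pixel_loss(uniform, labels, edges))
```

With the weight above 1, a model that is confident in region interiors but vague at the lawn-path edge is penalized more than an unweighted loss would penalize it, which pushes training toward sharper borders.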

Letting Algorithms Try Out New Layouts

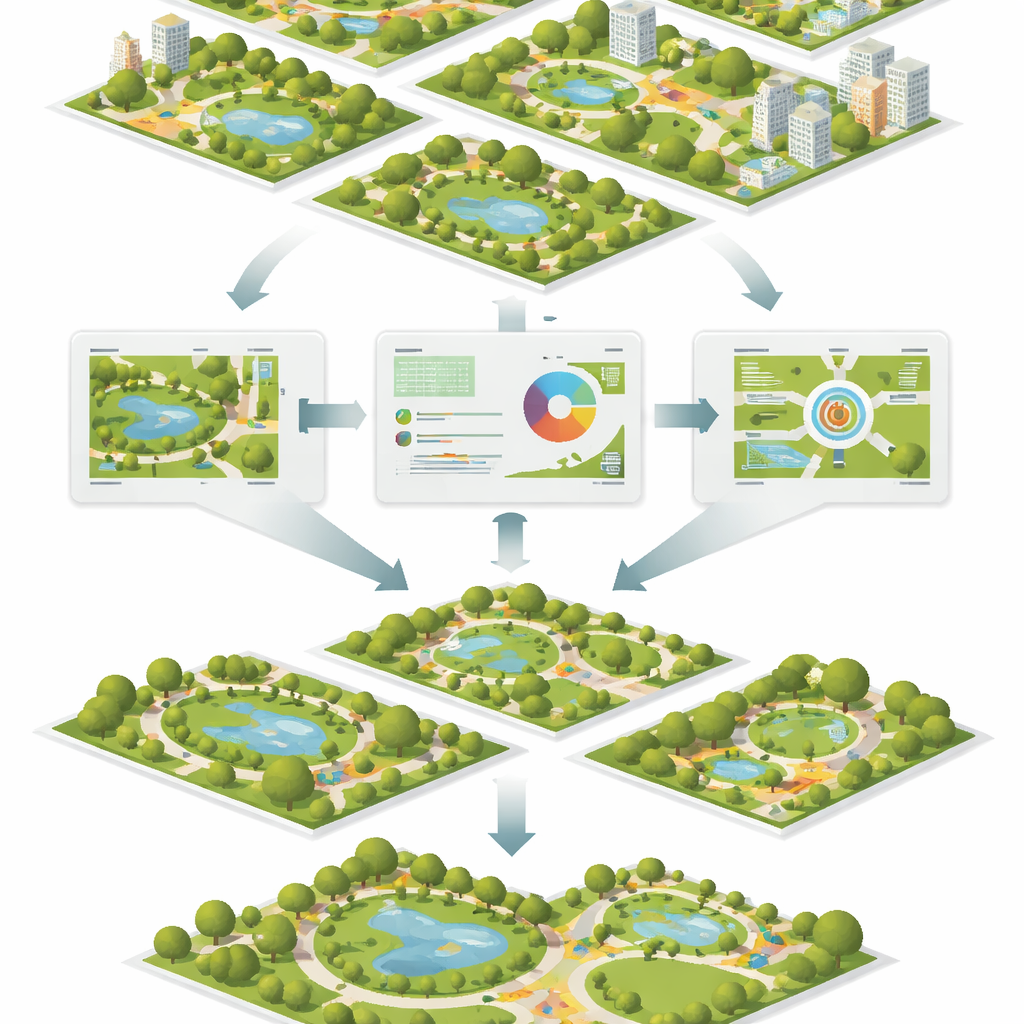

Once the system has mapped out all the elements in a space, a separate optimization engine starts to rearrange them virtually. It encodes common design rules—such as keeping safe distances between incompatible uses, linking related spaces with clear walking routes, and balancing open lawns with shaded areas—into a set of mathematical constraints. Two search strategies, inspired by evolution and flocking behavior, then work together to shuffle and refine layouts, seeking arrangements that score better on space efficiency, walkability, and visual balance. The computer can generate many alternatives in a short time, giving designers a menu of improved options to consider rather than a single hand-drawn plan.
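The summary does not give the authors' constraints, weights, or algorithms. The sketch below assumes the two strategies are a genetic algorithm and particle swarm optimization, which "evolution" and "flocking" suggest but the text does not name. It encodes three invented rules: a playground must stay at least 20 m from a pond, related elements should be a short walk apart, and the layout should be centred on the site. Every element is reduced to a single point, and all names, distances, and weights are hypothetical.

```python
import math
import random

SITE = 50.0  # hypothetical square site, 50 m x 50 m
ELEMENTS = ["playground", "pond", "bench", "lawn"]
INCOMPATIBLE = [("playground", "pond", 20.0)]  # (a, b, minimum distance in m)
RELATED = [("bench", "pond"), ("bench", "playground"), ("lawn", "playground")]
IDX = {name: i for i, name in enumerate(ELEMENTS)}

def position(layout, name):
    i = IDX[name]
    return layout[2 * i], layout[2 * i + 1]

def score(layout):
    """Higher is better: short walks between related elements, a centred
    layout, and a heavy penalty for every metre of violated separation."""
    walk = sum(math.dist(position(layout, a), position(layout, b)) for a, b in RELATED)
    xs, ys = layout[0::2], layout[1::2]
    centroid = (sum(xs) / len(xs), sum(ys) / len(ys))
    balance = math.dist(centroid, (SITE / 2, SITE / 2))
    violation = sum(max(0.0, d_min - math.dist(position(layout, a), position(layout, b)))
                    for a, b, d_min in INCOMPATIBLE)
    return -walk - balance - 100.0 * violation

def clip(v):
    return min(SITE, max(0.0, v))  # keep coordinates on the site

def hybrid_search(pop_size=30, iters=200, seed=0):
    rng = random.Random(seed)
    dim = 2 * len(ELEMENTS)
    pos = [[rng.uniform(0, SITE) for _ in range(dim)] for _ in range(pop_size)]
    vel = [[0.0] * dim for _ in range(pop_size)]
    pbest = [p[:] for p in pos]
    gbest = max(pos, key=score)[:]
    for _ in range(iters):
        # Swarm step: pull each layout toward its own best and the swarm's best.
        for i in range(pop_size):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (0.7 * vel[i][d]
                             + 1.5 * r1 * (pbest[i][d] - pos[i][d])
                             + 1.5 * r2 * (gbest[d] - pos[i][d]))
                pos[i][d] = clip(pos[i][d] + vel[i][d])
        # Evolutionary step: replace the worst half with mutated crossovers of the best half.
        order = sorted(range(pop_size), key=lambda i: score(pos[i]), reverse=True)
        elite = order[: pop_size // 2]
        for i in order[pop_size // 2:]:
            a, b = rng.sample(elite, 2)
            pos[i] = [clip(rng.choice((pos[a][d], pos[b][d])) + rng.gauss(0, 1.0))
                      for d in range(dim)]
            vel[i] = [0.0] * dim
        # Record personal and global bests.
        for i in range(pop_size):
            s = score(pos[i])
            if s > score(pbest[i]):
                pbest[i] = pos[i][:]
            if s > score(gbest):
                gbest = pos[i][:]
    return gbest

best = hybrid_search()
for name in ELEMENTS:
    x, y = position(best, name)
    print(f"{name:10s} ({x:5.1f}, {y:5.1f})")
print("score:", round(score(best), 2))
```

Treating rule violations as a heavy penalty, rather than discarding infeasible layouts, lets both strategies pass through near-miss arrangements on their way to valid ones; the penalty weight of 100 is arbitrary. Running the search with different seeds is one simple way to produce the "menu" of alternatives described above.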

How Well Does It Work in Practice?

To test whether these automated suggestions actually help, the team invited professional landscape architects and other users to review sample projects. Experts, unaware of which layouts were made by humans and which by the system, consistently scored the computer-assisted designs higher for spatial organization, function, and overall quality. Survey participants also reported high satisfaction, though they noted that fine-grained choices such as color palettes and material finishes still benefit from human taste and local knowledge. The models handled different seasons and sites reliably, though very small items like lights and benches, or highly seasonal plants, remained harder to detect accurately.

What This Means for Future Cities

In simple terms, the study shows that computers can now read landscape photos well enough to take on much of the tedious measuring, counting, and rule-checking that bogs down urban design. By automatically recognizing what is on the ground and exploring better layouts, the framework gives human designers more time to focus on creativity and community needs. While it will not replace landscape architects, it offers them a powerful assistant that can scale to entire districts or cities, helping deliver greener, more coherent public spaces with less guesswork and more evidence behind each design choice.

Citation: Zhang, H., Tang, N. & Sun, J. Automated landscape element recognition and layout optimization based on image segmentation and object detection. Sci Rep 16, 10841 (2026). https://doi.org/10.1038/s41598-026-45851-0

Keywords: urban landscape design, computer vision, image segmentation, layout optimization, smart cities