Clear Sky Science · en

A lightweight metallographic defect detection framework via structured priors and energy-aware attention under strong grain boundary interference

Why tiny flaws in steel matter

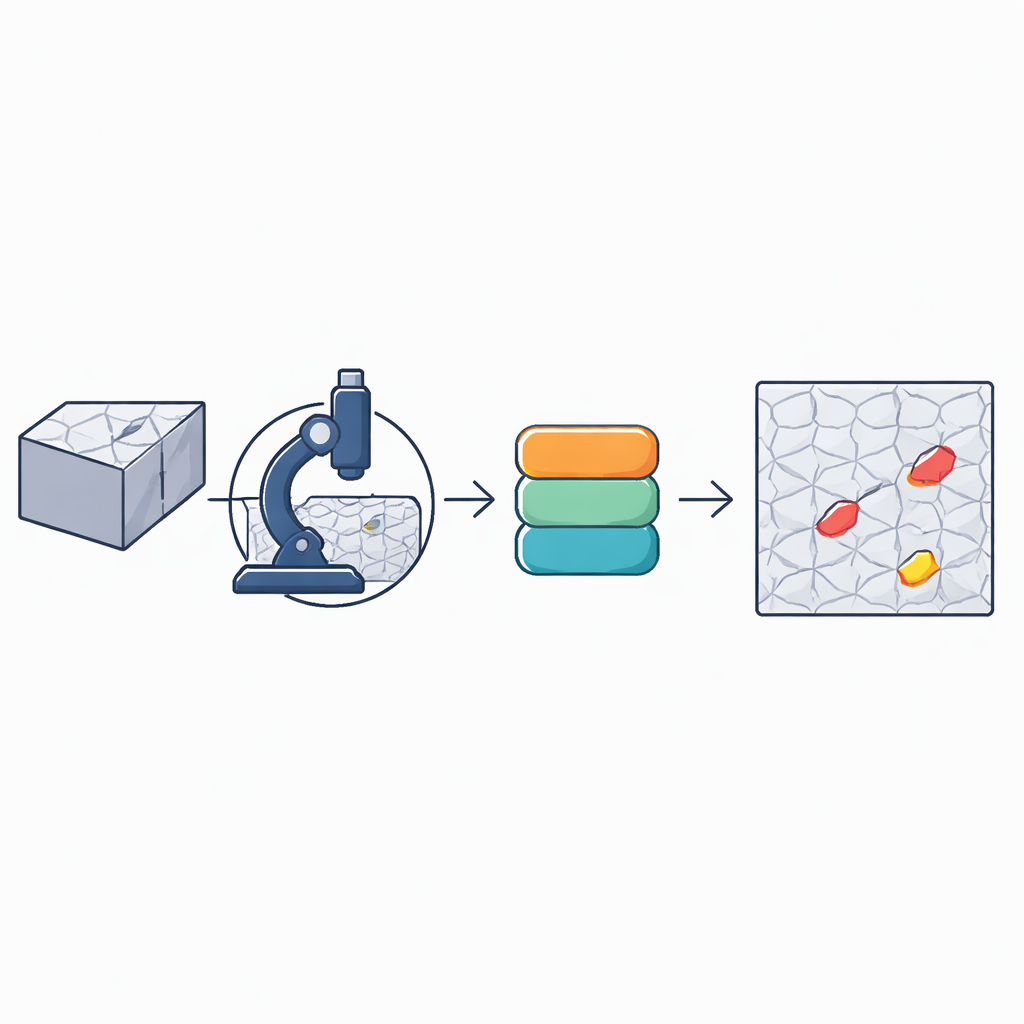

From car gears to high‑pressure pipes, many everyday machines rely on a common workhorse material: a low‑carbon steel often labeled AISI 1020. Before engineers trust this steel, they examine polished, etched slices under a microscope to judge the metal’s internal structure. But if the specimen surface itself is stained, scratched, or uneven, those preparation flaws can masquerade as real material problems. The paper introduces a new lightweight artificial‑intelligence tool that can spot these subtle preparation defects quickly and reliably, even when they are almost lost in a busy background of microscopic grain patterns.

The challenge of seeing faint flaws

Under high magnification, steel looks like a mosaic of small regions called grains, separated by intricate networks of grain boundaries. These boundaries form a dense, high‑contrast texture that easily overwhelms the faint signals from preparation defects such as water stains, leftover etching liquid, or slightly uneven surfaces. Standard computer‑vision models, including popular YOLO detectors, assume that objects stand out clearly from their surroundings. In metallography, the opposite often holds: the background is strong and structured, while the defects are weak and irregular in both shape and size. As the image is repeatedly downsampled on its way through a deep network, those weak signals are easily smoothed away, leading to missed detections or confusion between harmless texture and true flaws.
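The smoothing effect described above can be illustrated with a toy sketch. It is not taken from the paper: it assumes a one‑dimensional "image row", uses plain 2× average pooling as a crude stand‑in for the downsampling stages of a real network, and the names `avg_pool_1d` and `peak_deviation` are hypothetical helpers introduced only for this example.

```python
def avg_pool_1d(signal, k=2):
    """Average non-overlapping windows of length k (a stand-in for downsampling)."""
    return [sum(signal[i:i + k]) / k for i in range(0, len(signal) - k + 1, k)]


def peak_deviation(signal, baseline):
    """Largest absolute difference between any sample and the background level."""
    return max(abs(v - baseline) for v in signal)


# Assumed toy data: a flat background with one faint, single-pixel defect.
baseline = 0.5
row = [baseline] * 32
row[10] = baseline + 0.10

# Downsample three times and record how visible the defect remains at each stage.
stages = [row]
for _ in range(3):
    stages.append(avg_pool_1d(stages[-1]))

deviations = [peak_deviation(stage, baseline) for stage in stages]
for length, dev in zip((len(s) for s in stages), deviations):
    print(f"length {length:2d}: peak deviation {dev:.4f}")
```

In this toy setting the defect's contrast halves at every pooling stage, which is one simple way to picture why faint, small flaws tend to fade in deeper layers unless the architecture takes steps to preserve them.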

A tailored detection framework for metallography

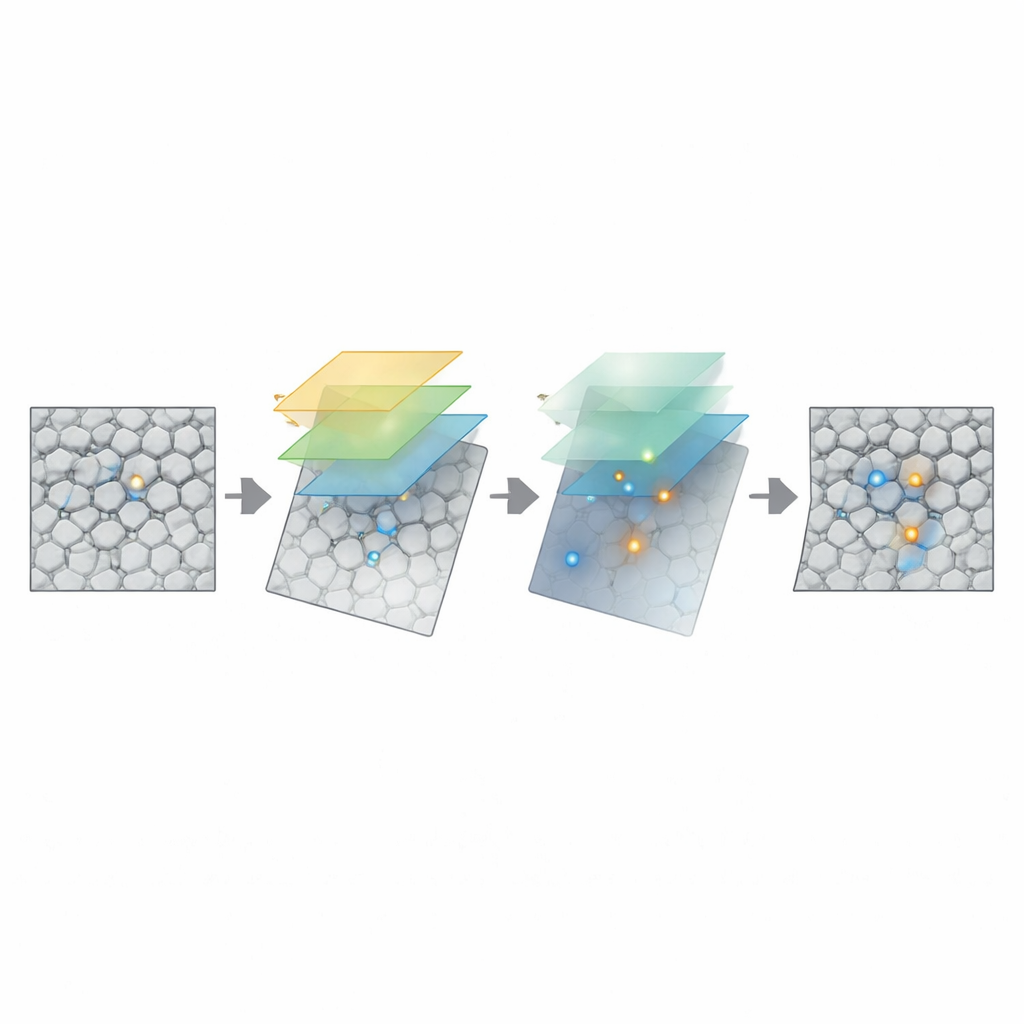

To tackle this, the authors build RSS‑YOLOv10, a customized offshoot of the recent YOLOv10 real‑time detector, tuned specifically for microscopic steel images. They start from the smallest “nano” version of YOLOv10 to keep the model compact and avoid overfitting to the complex grain patterns. On top of this, they redesign key blocks in the network’s feature extractor and feature‑fusion stages so that the system can handle extreme variations in defect size, from tiny pits to broad stains, without becoming computationally heavy. They also preserve YOLOv10’s end‑to‑end training strategy, which avoids the time‑consuming post‑processing step that normally filters out duplicate detections (non‑maximum suppression) and is well suited to fast industrial inspection.
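For context, the post‑processing step that earlier YOLO‑family detectors typically rely on is non‑maximum suppression (NMS), which discards overlapping duplicate boxes after the network has run. The sketch below is a generic greedy NMS in plain Python, shown only to make clear what an end‑to‑end detector no longer needs; it is not code from the paper, and the box coordinates, scores, and the 0.5 overlap threshold are assumed values chosen for illustration.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = a
    bx1, by1, bx2, by2 = b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: keep the best-scoring box, drop others that overlap it too much."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep box i only if it does not heavily overlap an already kept box.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


# Assumed example: two near-duplicate boxes on one defect, plus one separate box.
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.8, 0.7]
kept = nms(boxes, scores)
print(kept)
```

Each kept box is compared against every remaining candidate, so the cost of this step grows with the number of raw predictions. A detector trained to emit one box per object can skip it altogether.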

How the new components work together

The heart of RSS‑YOLOv10 is a new module called RepHMA, which replaces standard building blocks in the backbone network. RepHMA processes each image patch through several parallel branches that “look” at different neighborhood sizes, then merges them into a single, efficient block for use during actual detection. This multi‑scale view helps the model capture both tiny spots and larger, blurry regions. A second ingredient, the SimAM attention mechanism, acts like a filter that compares each tiny region to its surroundings and boosts those that differ strongly from the repetitive grain background. Importantly, SimAM does this without adding extra trainable parameters, preserving the model’s lightweight nature. Finally, a redesigned “SlimNeck” section fuses information from different resolution levels using specially chosen convolutions that maintain useful detail while cutting redundant computation.
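The parameter‑free filtering idea behind SimAM can be sketched in a few lines. The code below follows the commonly published closed‑form SimAM formulation, applied to a single feature channel in plain Python. It is an illustrative sketch, not the authors' implementation: the function names `simam_weights` and `apply_simam`, the regularization value `lam=1e-4`, and the toy checkerboard input are all assumptions, and how the mechanism is wired into RSS‑YOLOv10 is not described in this summary.

```python
import math


def simam_weights(channel, lam=1e-4):
    """Per-position attention weights for one 2-D feature channel (list of rows).

    Positions that deviate strongly from the channel mean get larger weights.
    No trainable parameters are involved; lam is a small fixed regularizer.
    """
    values = [v for row in channel for v in row]
    n = len(values) - 1
    mean = sum(values) / len(values)
    sq_dev = [[(v - mean) ** 2 for v in row] for row in channel]
    variance = sum(d for row in sq_dev for d in row) / n
    weights = []
    for dev_row in sq_dev:
        # Inverse "energy": high when a position stands out from the rest.
        inv_energy = [d / (4.0 * (variance + lam)) + 0.5 for d in dev_row]
        weights.append([1.0 / (1.0 + math.exp(-e)) for e in inv_energy])
    return weights


def apply_simam(channel, lam=1e-4):
    """Rescale every position of the channel by its SimAM-style weight."""
    weights = simam_weights(channel, lam)
    return [[v * w for v, w in zip(row, w_row)]
            for row, w_row in zip(channel, weights)]


# Assumed toy input: a repetitive checkerboard "texture" with one outlier value.
channel = [[0.4 if (i + j) % 2 == 0 else 0.6 for j in range(5)] for i in range(5)]
channel[2][2] = 1.0  # the position that differs from the repeating background

weights = simam_weights(channel)
refined = apply_simam(channel)
print(round(weights[2][2], 3), round(weights[0][0], 3))
```

In this toy channel the outlier at the center receives the largest weight, while the repeating background values are scaled down relative to it. The weights come entirely from the channel's own statistics, which is why the mechanism adds no trainable parameters.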

Building and testing on real steel data

Because existing metallographic image collections usually focus on ideal, defect‑free specimens, the team constructed its own benchmark called 20 S‑PDD. It contains over 1,200 microscope images of laboratory‑prepared specimens of grade 20 steel, with three types of preparation defects carefully outlined by experts: water stains, corrosive liquid residue, and uneven surface regions. The authors trained and evaluated RSS‑YOLOv10 on this dataset and compared it with the baseline YOLOv10n and several well‑known detectors, including Faster R‑CNN, SSD, YOLOv5, YOLOv8, and a real‑time transformer‑based model. They also tested the method on a separate public dataset of larger‑scale steel surface defects to see how well it generalizes beyond microscopic images.

What the results show

On the metallographic 20 S‑PDD dataset, RSS‑YOLOv10 achieved higher precision, recall, and overall detection accuracy than all comparison models, raising the mean average precision by nearly four percentage points over the YOLOv10n baseline while using even fewer floating‑point operations. It was especially effective at distinguishing stains and corrosive residues from the surrounding grain texture, reducing both missed defects and false alarms. On the separate NEU‑DET steel‑surface dataset, the model again outperformed the baseline, showing that its core design choices are robust to different kinds of textured metal images. Visualizations of the model’s internal “heat maps” confirm that it focuses tightly on actual defects while largely ignoring grain boundaries.

Why this matters for everyday practice

For metallography labs and quality‑control engineers, the proposed framework offers a way to automatically screen prepared specimens for preparation defects before deeper analysis of the steel’s true microstructure. Because RSS‑YOLOv10 is compact and efficient, it can run in real time on standard desktop hardware or embedded devices, making it practical for routine use alongside existing microscopes. Although the current work centers on a single steel grade and a limited set of specimens, the approach opens a path toward broader automated checks of metallographic quality, reducing human fatigue and inconsistency while protecting downstream measurements that underpin safety‑critical engineering decisions.

Citation: Shen, Z., Deng, Q., Wu, Z. et al. A lightweight metallographic defect detection framework via structured priors and energy-aware attention under strong grain boundary interference. Sci Rep 16, 10969 (2026). https://doi.org/10.1038/s41598-026-45556-4

Keywords: metallographic defect detection, steel microstructure, deep learning inspection, automated microscopy, YOLO object detection