Clear Sky Science · en

Combined machine learning - 3D physics based approach for building damage evaluation: the case of L’Aquila 2009

Why this matters for people living with earthquakes

After a strong earthquake, the most urgent question is which buildings are safe to re-enter and which must stay off limits. Traditionally, this requires slow, on-the-ground inspections and relies on broad shaking maps that may miss local quirks of the landscape and city layout. This study focuses on the devastating 2009 earthquake in L’Aquila, Italy, and shows how combining advanced computer models of the shaking with artificial intelligence can help quickly flag the most damaged buildings and better prepare for future quakes.

A closer look at one Italian city

The authors use L’Aquila as a real-world test case because the main shock was strong, well recorded, and extensively studied, and because the city’s geology has been mapped in exceptional detail. They work with data on about 3,000 buildings whose damage was carefully surveyed after the magnitude 6.1 event. For each structure, they know how badly it was damaged. They also know how it was built, how big it is, when it was constructed, and where it sits relative to the fault and the local terrain. This rich picture lets them explore which factors actually determine whether a building comes through a quake with only light damage or is pushed to the brink of collapse.

Simulating the underground to improve shaking estimates

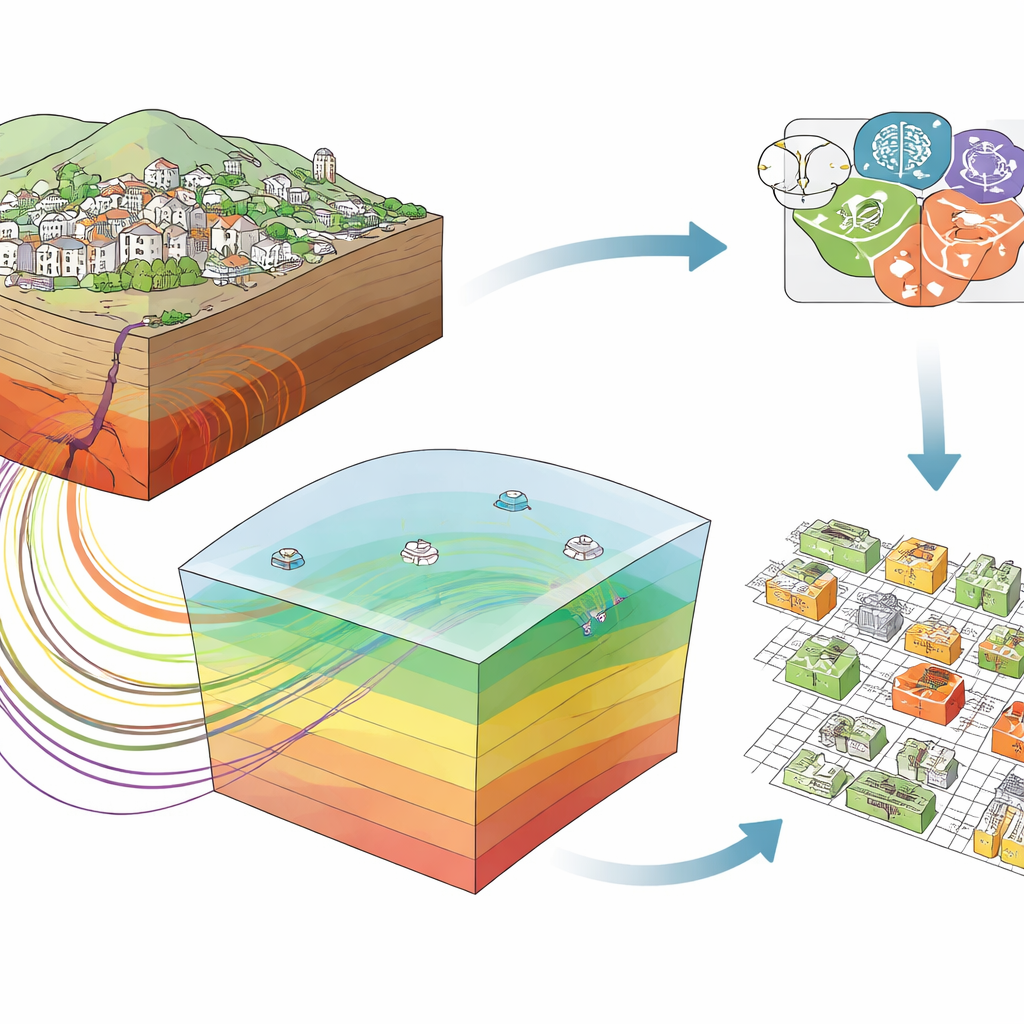

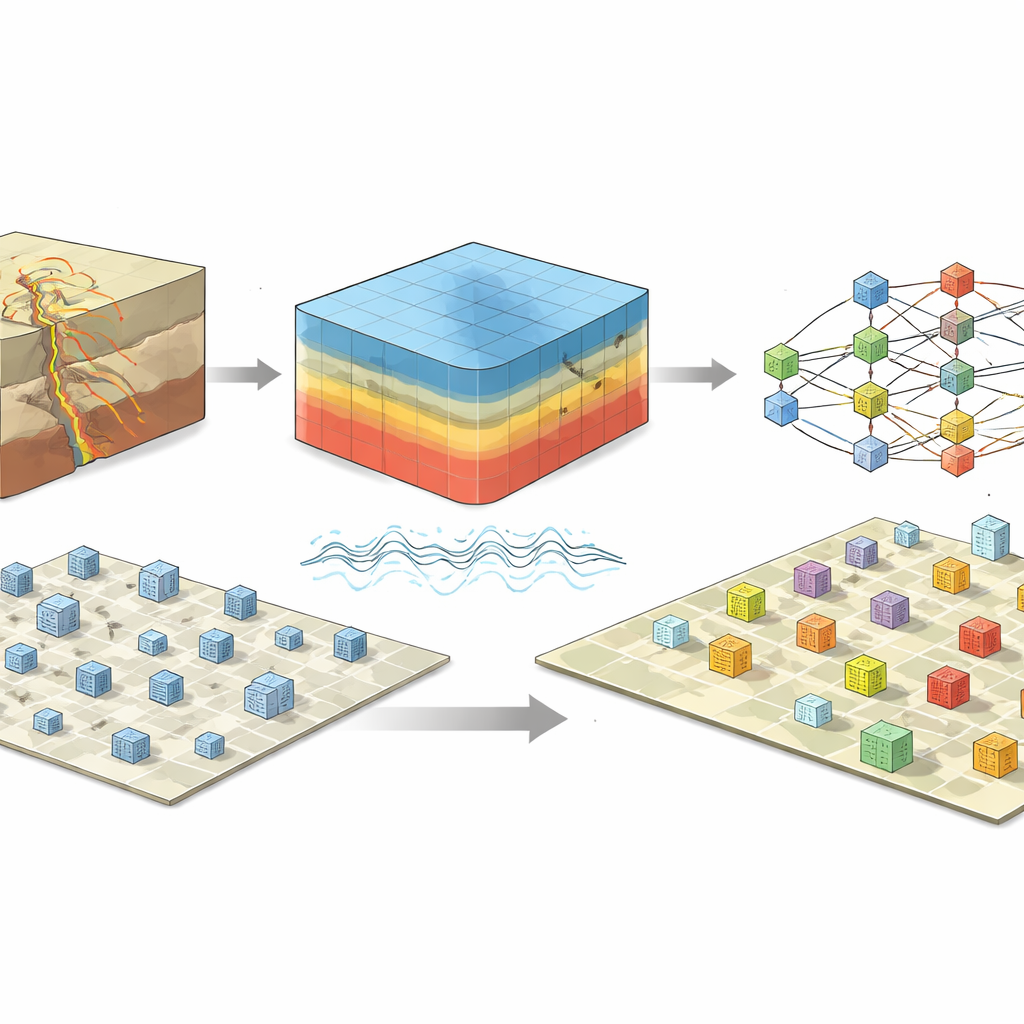

Standard earthquake damage tools often start from ShakeMaps. These combine recordings from a limited number of ground sensors with simplified formulas to estimate how hard the ground shook across a region. The team instead builds a detailed three-dimensional digital model of the crust and sedimentary basins around L’Aquila. The model extends to a depth of roughly 20 kilometers and spans about 60 kilometers in each horizontal direction. Using high-performance computing and a specialized simulation code, they model how seismic waves traveled from the fault through this complex underground landscape. Simulations of this kind are typically reliable only at lower frequencies, so they then extend the results to cover both low and high frequencies of motion. The outcome is a set of realistic measures of how fast the ground moved and how violently it accelerated, at the thousands of points where the buildings actually stand.
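The summary does not spell out which shaking measures are used. Peak ground acceleration (PGA) and peak ground velocity (PGV) are standard choices that match "how violently it accelerated" and "how fast the ground moved."

The sketch below assumes a simulated acceleration time series is available at a building's location. It shows how those two numbers could be extracted from such a trace. The function name `peak_ground_motion` and the sinusoidal test trace are hypothetical illustrations, not the authors' code or data.

```python
import math


def peak_ground_motion(acc, dt):
    """Return (PGA, PGV) for one acceleration trace sampled every dt seconds.

    Hypothetical helper. Velocity is obtained by trapezoidal integration,
    assuming the ground starts at rest (initial velocity of zero).
    """
    if len(acc) < 2:
        raise ValueError("need at least two samples")
    pga = max(abs(a) for a in acc)  # largest absolute acceleration
    vel = 0.0
    pgv = 0.0
    for a0, a1 in zip(acc, acc[1:]):
        vel += 0.5 * (a0 + a1) * dt  # trapezoid rule: area under a(t) over one step
        pgv = max(pgv, abs(vel))     # largest absolute velocity reached so far
    return pga, pgv


# Usage with a made-up 1 Hz sinusoidal trace (amplitude 1 m/s^2, 2 s long).
dt = 0.001
trace = [math.sin(2 * math.pi * 1.0 * i * dt) for i in range(2001)]
pga, pgv = peak_ground_motion(trace, dt)
print(f"PGA = {pga:.3f} m/s^2, PGV = {pgv:.4f} m/s")
```

Real simulated or recorded traces are usually baseline-corrected and filtered before integration. That step is left out here to keep the sketch short.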

Teaching a digital forest to recognize damage

Armed with this building-by-building picture of shaking, the researchers train machine learning models to predict damage levels. Specifically, they use ensembles of decision trees known as random forests. To make the problem more manageable, they regroup the original six damage grades into two or three broader classes, such as “light versus moderate-to-heavy” or “light-to-moderate versus heavy.”

Each model sees two kinds of features. Building-related features include height, footprint, and age. Site-related features include distance from the fault and local ground properties. Each model also receives shaking measures taken either from the simulations or from ShakeMaps.

The researchers fill in missing information, such as unknown construction years, using statistical imputation. They also balance uneven class sizes by generating synthetic examples of under-represented classes, so that rare but important heavily damaged cases are not ignored.
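The preparation steps described above are regrouping grades, filling gaps, and adding synthetic minority examples. The sketch below illustrates them under stated assumptions, and every name and threshold in it is hypothetical:

* **Grade numbering and cut-offs.** Damage grades are numbered 0 to 5. The cut-offs between classes are made up, because the summary does not say which grades fall into each class.
* **Imputation.** Median imputation is a simple stand-in for the authors' unspecified data-filling strategy.
* **Oversampling.** The synthetic examples use SMOTE-style interpolation between nearby minority samples. This is a common technique, but the summary does not name the method the authors used.

```python
import math
import random
import statistics
from collections import Counter


def regroup(grade, cutoffs, labels):
    """Map a damage grade (0-5 here) onto a broader class.

    cutoffs[i] is the highest grade (inclusive) still belonging to labels[i];
    anything above the last cutoff goes to labels[-1]. Cut-offs are hypothetical.
    """
    for upper, label in zip(cutoffs, labels):
        if grade <= upper:
            return label
    return labels[-1]


def impute_median(values):
    """Replace missing entries (None) with the median of the known values."""
    known = [v for v in values if v is not None]
    fill = statistics.median(known)
    return [fill if v is None else v for v in values]


def smote_like(minority, n_new, k=3, rng=None):
    """Create n_new synthetic points on segments between nearby minority samples."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    if rng is None:
        rng = random.Random(0)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        x = minority[i]
        # k nearest *other* minority samples, by Euclidean distance
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: math.dist(x, p))[:k]
        nb = rng.choice(neighbours)
        u = rng.random()  # random position along the segment from x to nb
        synthetic.append([xi + u * (ni - xi) for xi, ni in zip(x, nb)])
    return synthetic


def balance(X, y, k=3, seed=0):
    """Oversample every class up to the size of the largest class."""
    rng = random.Random(seed)
    counts = Counter(y)
    target = max(counts.values())
    X_out, y_out = [list(row) for row in X], list(y)
    for label, n in counts.items():
        if n < target:
            minority = [row for row, lab in zip(X, y) if lab == label]
            new = smote_like(minority, target - n, k=k, rng=rng)
            X_out.extend(new)
            y_out.extend([label] * len(new))
    return X_out, y_out


# Usage with a tiny made-up dataset: features are [construction year, footprint m^2].
grades = [0, 1, 2, 1, 4, 5]
y = [regroup(g, [3], ["light-to-moderate", "heavy"]) for g in grades]
years = impute_median([1950, None, 1972, 1930, 1965, None])
X = [[yr, area] for yr, area in zip(years, [120, 300, 95, 210, 180, 400])]
X_bal, y_bal = balance(X, y)
print(Counter(y_bal))
```

A few practical points apply to a pipeline like this:

* **Scale features first.** Features are normally standardized before the nearest-neighbour search, so that a large-valued column such as construction year does not dominate the distances.
* **Oversample only the training split.** Synthetic near-copies should never leak into the test set.
* **Off-the-shelf tools exist.** The imbalanced-learn library provides a ready-made `SMOTE` class. The balanced data could then be passed to a standard random forest, such as scikit-learn's `RandomForestClassifier`, via its `fit(X, y)` method.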

What the models reveal about risk

The analysis shows that the most influential predictors of damage are:

* the average surface area of the building or block;
* its distance from the part of the fault where most slip occurred;
* the local ground stiffness;
* and, crucially, the shaking measures computed from the physics-based simulations.

In contrast, the equivalent values drawn from ShakeMaps barely register among the top variables. When the models rely on the simulated shaking, they reach accuracies of around 80 percent on the simpler two-class problems. They also perform robustly on a separate test set of buildings that they never saw during training. This suggests that capturing the real three-dimensional path of seismic waves can substantially sharpen our ability to tell lightly damaged structures from seriously compromised ones. Smoothing that path over with broad regional formulas loses much of this information.
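The summary ranks predictors by influence without saying how the ranking is computed. Permutation importance is one widely used option that works with any model. It shuffles a single feature on the held-out test set and measures how much accuracy falls.

The following sketch shows the idea. The `predict` argument, the toy test set and the threshold classifier are all hypothetical stand-ins for a trained random forest and real building data.

```python
import random


def accuracy(predict, X, y):
    """Fraction of rows whose predicted class matches the true class."""
    hits = sum(1 for row, label in zip(X, y) if predict(row) == label)
    return hits / len(y)


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean drop in test accuracy when one feature column is shuffled at a time.

    A large drop means the model leans heavily on that feature; a drop of
    zero means shuffling it never changed any prediction.
    """
    rng = random.Random(seed)
    baseline = accuracy(predict, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the labels
            X_perm = [list(row[:j]) + [v] + list(row[j + 1:]) for row, v in zip(X, column)]
            drops.append(baseline - accuracy(predict, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return baseline, importances


def toy_model(row):
    """Hypothetical classifier: predicts class 1 whenever feature 0 exceeds 0.3."""
    return int(row[0] > 0.3)


# Usage with a made-up test set; the toy model ignores feature 1 entirely.
X_test = [[0.1, 5], [0.2, 3], [0.25, 9], [0.15, 1], [0.5, 2], [0.6, 8], [0.7, 4], [0.9, 6]]
y_test = [0, 0, 0, 0, 1, 1, 1, 1]
base, imp = permutation_importance(toy_model, X_test, y_test)
print(f"test accuracy = {base:.2f}, importances = {imp}")
```

scikit-learn offers the same idea as `sklearn.inspection.permutation_importance`. Its random forests also expose impurity-based scores through the `feature_importances_` attribute. The summary does not say which of these, if either, the authors used.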

What this means for future earthquakes

For non-specialists, the main takeaway is that pairing realistic computer simulations of how the ground actually moves with modern pattern-recognition algorithms can turn scattered measurements and building records into a practical tool for crisis response. In a future earthquake, a system built along these lines could rapidly highlight neighborhoods where heavy damage is most likely, guiding inspections, shelter planning, and longer-term rebuilding. The authors caution that their current model is tuned to a specific region and building stock, and must be tested and adapted before being used elsewhere. Still, their results point toward a future in which detailed digital twins of the subsurface, combined with smart data-driven models, help communities better understand and manage their earthquake risk.

Citation: Di Michele, F., Pera, D., Mazzieri, I. et al. Combined machine learning - 3D physics based approach for building damage evaluation: the case of L’Aquila 2009. Sci Rep 16, 10919 (2026). https://doi.org/10.1038/s41598-026-45377-5

Keywords: earthquake damage, machine learning, physics-based simulation, building risk, L’Aquila 2009