Clear Sky Science

Mathematical modeling of adaptive information security strategies using composite behavior models

Why smarter cyber defense matters

From hospital records to power grids, our lives now depend on digital systems that are under constant attack. Criminals and state-backed hackers no longer use single, blunt tricks; they weave long, stealthy campaigns that adapt as defenders react. This paper introduces a new way to mathematically model that cat-and-mouse game, aiming to give defenders tools that adjust in real time rather than relying on fixed rules or one-off machine learning models.

Limits of today’s protective walls

Most existing security tools look either for known fingerprints of past attacks or for simple statistical oddities in network traffic. Others use game theory or machine learning to anticipate threats. Each of these approaches captures only one slice of reality: they may model the attacker or the defender, but rarely both together, and they often assume behavior that does not change much over time. A large literature review in this study, covering 75 research papers from 2018 to 2025, showed that fewer than a third of existing models try to combine different behavior patterns, and only about a quarter support true real-time adaptation. In other words, most models treat cyber conflict as static and one-dimensional, even though real intrusions unfold over many stages and react to defenders’ moves.

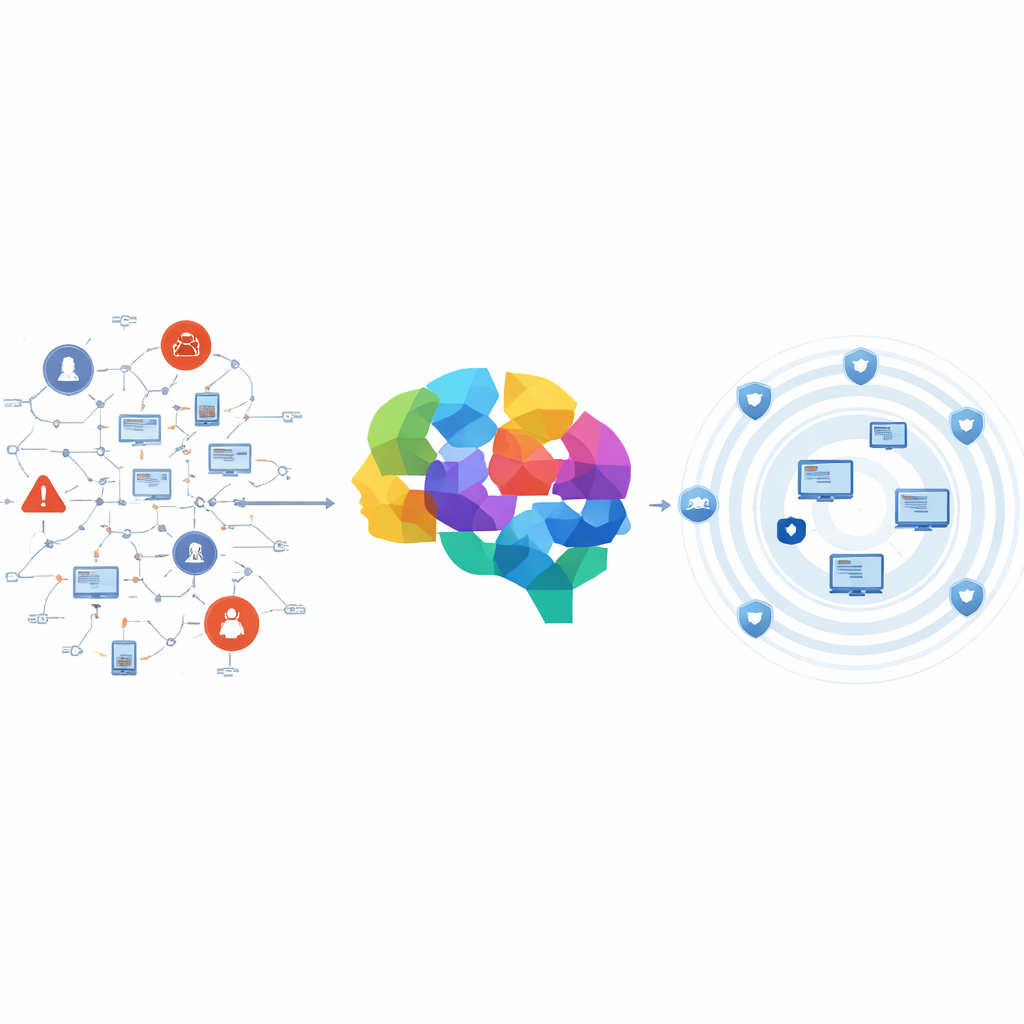

A unified view of attackers and defenders

The authors propose a composite behavior model that weaves many smaller models of both attackers and defenders into a single, dynamic picture. On the attacker side, it represents stages such as scanning a network, breaking in, moving sideways between systems, and maintaining a hidden foothold. On the defender side, it includes components such as anomaly detection, firewall tuning, and shifting computing resources to where they are needed most. All of these pieces are tied together by mathematical equations that describe how the state of the whole system changes over time, including uncertainty and random disturbances. Crucially, the defender does not get a perfect view of what is going on; instead, it sees noisy signals—like imperfect alerts and logs—and must infer what the attacker is likely doing and respond accordingly.
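The idea of inferring a hidden attacker from noisy signals can be illustrated with a small discrete Bayes filter. This is only a sketch under stated assumptions, not the paper's actual equations: the four stage names follow the description above, while the transition probabilities, the per-stage alert probabilities, and the function name `update_belief` are hypothetical values chosen for illustration.

```python
# Attacker stages named after the description in the text.
STAGES = ["scan", "break_in", "lateral_move", "foothold"]

# Hypothetical stage-to-stage transition probabilities (each row sums to 1).
TRANSITION = [
    [0.80, 0.20, 0.00, 0.00],
    [0.00, 0.70, 0.30, 0.00],
    [0.00, 0.00, 0.75, 0.25],
    [0.00, 0.00, 0.00, 1.00],
]

# Hypothetical probability that a sensor raises an alert in each stage.
ALERT_PROB = [0.10, 0.40, 0.60, 0.30]


def update_belief(belief, alert):
    """Run one predict-then-correct step of a discrete Bayes filter."""
    n = len(STAGES)
    # Predict: push the current belief through the attacker's transition model.
    predicted = [
        sum(belief[i] * TRANSITION[i][j] for i in range(n)) for j in range(n)
    ]
    # Correct: weight each stage by how well it explains the observation.
    likelihood = [p if alert else 1.0 - p for p in ALERT_PROB]
    weighted = [predicted[j] * likelihood[j] for j in range(n)]
    total = sum(weighted)
    return [w / total for w in weighted]


# The defender starts out certain the attacker is only scanning,
# then sees one quiet time step followed by three alerts.
belief = [1.0, 0.0, 0.0, 0.0]
for alert in [False, True, True, True]:
    belief = update_belief(belief, alert)

most_likely = STAGES[max(range(len(STAGES)), key=belief.__getitem__)]
```

The defender never observes the stage directly. It only maintains a probability for each stage and can base its response on that belief, which is the partial-visibility setting the paragraph describes.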

Turning math into moving defenses

To test their ideas, the authors built a detailed simulation environment using common cybersecurity datasets (NSL-KDD and UNSW-NB15) and realistic, synthetic attack campaigns inspired by the "kill chain" stages used by modern intruders. Attacks progress as probabilistic chains of events, with random timing and sensor noise added to mimic the messy conditions of real networks. Defender components use learning rules and feedback control to adjust thresholds, reconfigure defenses, and reassign resources as new evidence arrives. The model runs as a closed loop: attackers change tactics in response to defenses, defenses then adapt again, and so on, allowing the researchers to study how the whole system behaves over many thousands of time steps.
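The closed loop described above can be sketched in a few lines. This toy simulation is an assumption-laden illustration and not the authors' environment: it uses no real dataset, and the stage count, advance probability, noise level, target alert rate, feedback gain, and the rule that a detected attacker starts over are all hypothetical choices.

```python
import random


def simulate(steps=5000, noise=0.5, seed=0):
    """Toy attacker/defender loop with an adaptive alert threshold."""
    rng = random.Random(seed)
    stage = 0                 # 0 = no attack activity, 1..3 = deeper stages
    threshold = 1.5           # alert when the anomaly score exceeds this
    target_alert_rate = 0.2   # hypothetical alert budget for the defender
    gain = 0.05               # hypothetical feedback gain
    detections = attack_steps = false_alarms = 0

    for _ in range(steps):
        # Attacker: advance one stage with some probability (probabilistic chain).
        if stage < 3 and rng.random() < 0.1:
            stage += 1

        # Sensor: the score grows with the stage but is blurred by Gaussian noise.
        score = stage + rng.gauss(0.0, noise)
        alert = score > threshold

        if stage > 0:
            attack_steps += 1
            detections += alert
        else:
            false_alarms += alert

        # Defender feedback: nudge the threshold toward the target alert rate,
        # keeping it inside fixed bounds so it cannot drift without limit.
        threshold += gain * ((1.0 if alert else 0.0) - target_alert_rate)
        threshold = min(3.0, max(0.5, threshold))

        # Closing the loop: a detected attacker is evicted and must start over.
        if alert and stage > 0:
            stage = 0

    return {
        "detection_rate": detections / max(attack_steps, 1),
        "false_alarms": false_alarms,
        "final_threshold": threshold,
    }


result = simulate(steps=2000, noise=0.5, seed=1)
```

Rerunning `simulate` with a larger `noise` value gives a rough feel for how sensor corruption affects detection in this toy setting. The numbers it produces say nothing about the results reported in the study.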

What the simulations reveal

Across a wide range of scenarios, from straightforward intrusions to complex, slow-moving campaigns against simulated industrial control systems, the composite model consistently outperformed traditional non-adaptive defenses and several advanced benchmarks. It achieved detection rates near or above 90 percent in low-noise settings and stayed above roughly 85 percent even when attackers became more sophisticated or when monitoring data was heavily corrupted by noise. Compared with a baseline static model, it detected more attacks, reacted faster to changes in attacker behavior, and used computing resources more effectively, cutting false alarms while avoiding runaway cost. Statistical tests indicated that these gains were unlikely to be due to chance, and the system’s internal dynamics remained stable rather than spiraling into chaos as attackers and defenders adjusted to each other.

What this means for everyday security

For non-specialists, the key message is that cyber defense can be modeled more like an evolving ecosystem than a fixed wall. By mathematically describing how many different attacker and defender behaviors interact over time—and by accounting for uncertainty and partial visibility—the proposed framework shows a path to security systems that learn during an attack, not just before it. Such composite, adaptive models could underpin future tools that quietly reshape a network’s defenses as threats unfold, helping critical services stay online and trustworthy even as adversaries become more persistent and inventive.

Citation: Nuaim, A.A., Nuaim, A.A., Nadeem, M. et al. Mathematical modeling of adaptive information security strategies using composite behavior models. Sci Rep 16, 10755 (2026). https://doi.org/10.1038/s41598-026-45315-5

Keywords: adaptive cybersecurity, behavioral threat modeling, attack–defense dynamics, stochastic security systems, intrusion detection