Clear Sky Science · en

Predicting the performance of a graphene-based patch antenna using a machine learning model for terahertz applications

Why tiny antennas matter for tomorrow’s networks

As our phones, sensors, and wireless gadgets race toward ever higher data speeds, engineers are eyeing the terahertz band, with frequencies roughly a thousand times higher than today’s Wi‑Fi. At these extremes, building efficient antennas becomes very hard, and simulating each new design can take hours. This study shows how an ultra-thin material called graphene, combined with machine learning, can be used to design compact terahertz antennas that deliver high gain and are fast to optimize in software, paving the way for 6G networks and beyond.

A new kind of tiny signal booster

The authors design a microscopic “patch” antenna made from graphene, a single layer of carbon atoms, placed on a silicon block with another graphene layer acting as the ground. Despite being only tens of micrometers across—smaller than the width of a human hair—the antenna is engineered to operate across 1 to 5 terahertz, a range attractive for ultra‑high‑speed wireless links, sensing, and imaging. Computer simulations show that this compact device can support 11 distinct frequency bands within that window and reach a maximum gain of about 7.5 dBi, meaning it concentrates radio energy quite effectively for its size.

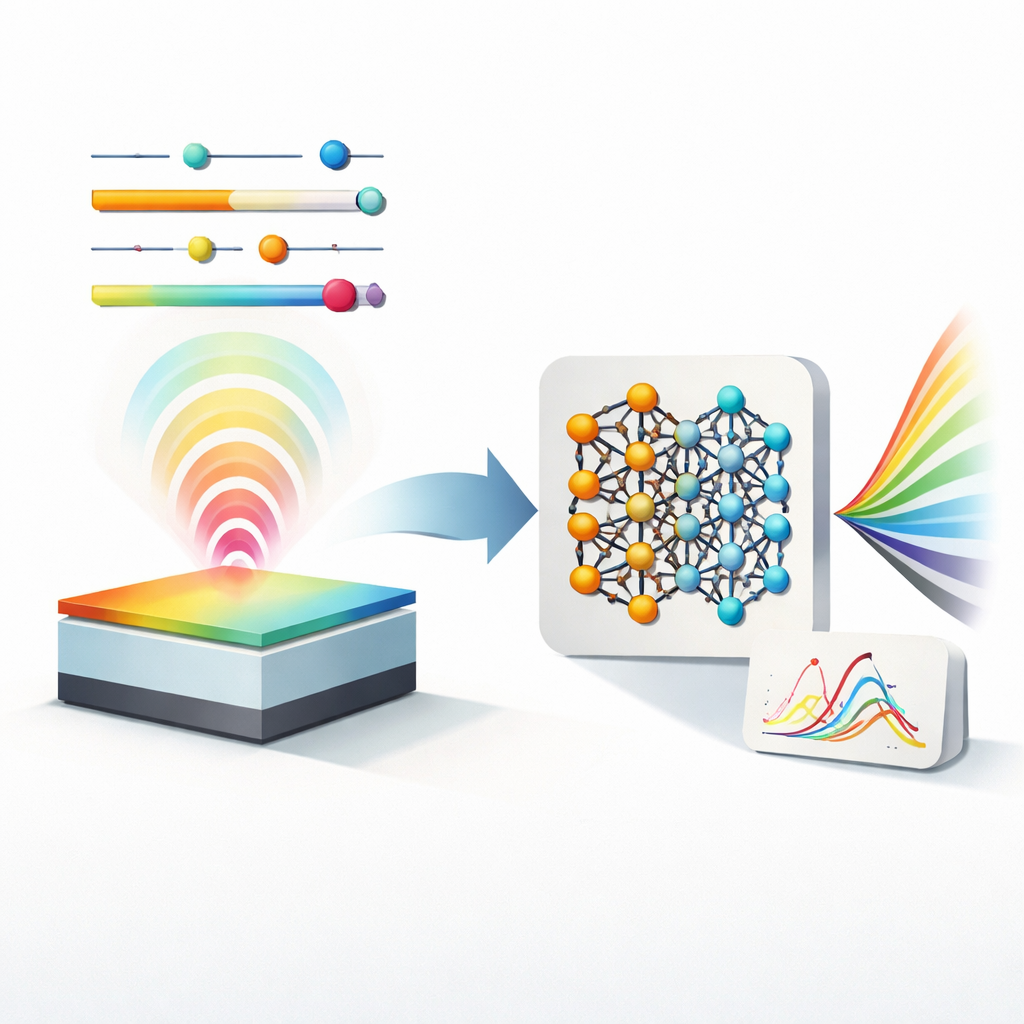

Tuning the material and the shape

What makes this antenna special is not only its size, but its tunability. Graphene’s electrical behavior can be adjusted by changing two key properties: chemical potential (which sets how many charge carriers are available to conduct) and relaxation time (how long, on average, those carriers keep moving before scattering). Alongside these, the team varies the length and width of the radiating patch. By sweeping these four knobs (two geometric, two material‑based) in a detailed simulation tool, they explore how each combination affects important performance measures: how much power is reflected back at the feed (S11), how well the antenna is matched to its feed (VSWR), how strongly it concentrates radiated power in its favored direction (gain), how efficiently it radiates, and how its beams spread in horizontal and vertical directions.
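S11 and VSWR are two views of the same quantity: the share of the wave that is reflected at the antenna's feed. As a minimal sketch using the standard transmission-line relations (the function names are illustrative and not taken from the paper), an S11 value in decibels can be converted to a reflection magnitude, a reflected-power fraction, and a VSWR:

```python
import math


def reflection_magnitude(s11_db: float) -> float:
    """Convert S11 in dB to the linear reflection coefficient magnitude."""
    return 10.0 ** (s11_db / 20.0)


def reflected_power_fraction(s11_db: float) -> float:
    """Fraction of incident power reflected back toward the source."""
    return reflection_magnitude(s11_db) ** 2


def vswr_from_s11(s11_db: float) -> float:
    """Voltage standing wave ratio for a passive load (S11 <= 0 dB)."""
    gamma = reflection_magnitude(s11_db)
    if gamma >= 1.0:
        return math.inf  # total reflection: no power is delivered
    return (1.0 + gamma) / (1.0 - gamma)


# Illustrative values only; these are not results from the study.
for s11_db in (-6.0, -10.0, -20.0):
    print(
        s11_db,
        round(reflected_power_fraction(s11_db), 3),
        round(vswr_from_s11(s11_db), 2),
    )
```

Under these relations, an S11 of -10 dB, a common rule-of-thumb threshold for a usable band, corresponds to 10% of the power being reflected and a VSWR of about 1.92.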

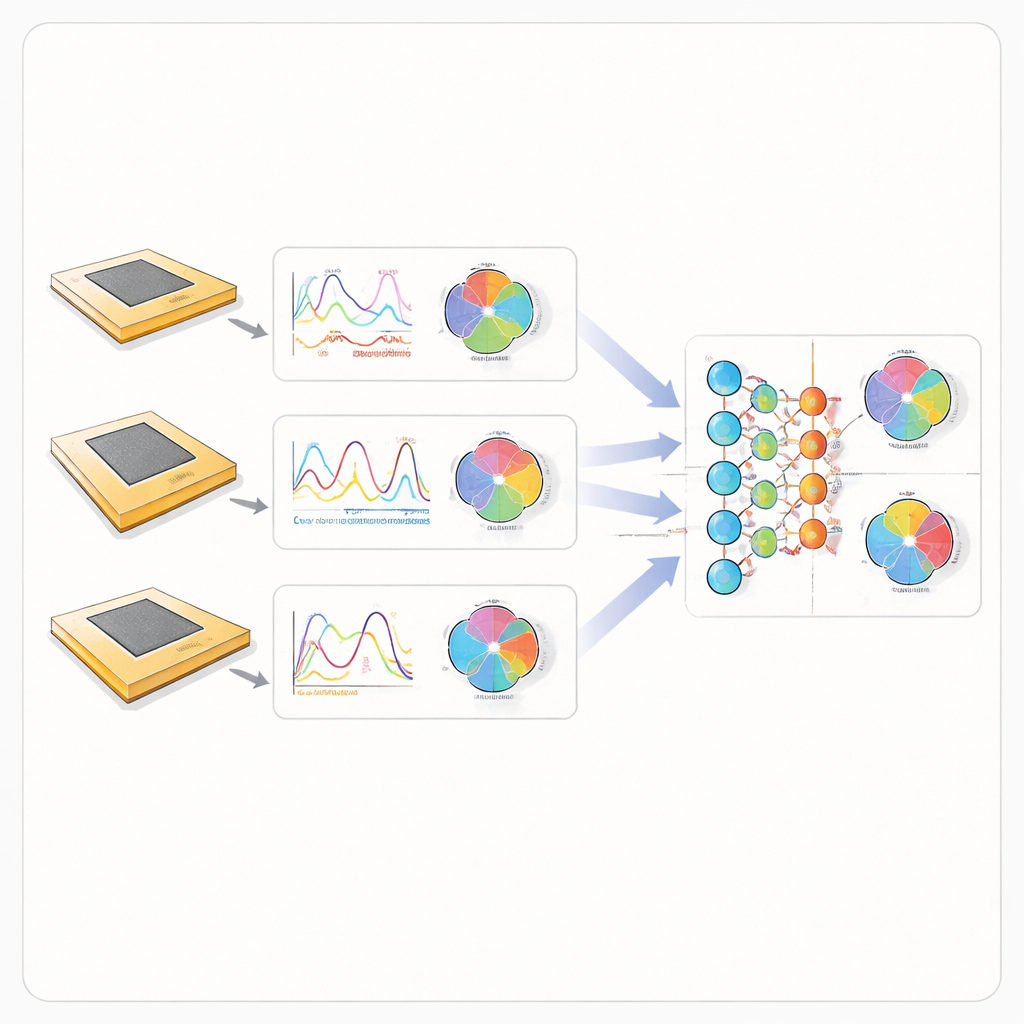

Letting machines learn the antenna’s behavior

Instead of running expensive simulations for every new design, the researchers build three machine learning models—an artificial neural network, a random forest, and a support vector machine—to learn the link between those four design knobs and seven performance outcomes. They first generate a large training set: 784 distinct antenna configurations, each evaluated at hundreds of frequency points, yielding rich curves and radiation patterns. These data feed the learning algorithms, which are trained and tested to see how well they can predict the full behavior of a new antenna design they have never “seen” before. The neural network uses a training method suited to modest data sizes, carefully avoiding overfitting while capturing highly nonlinear trends.
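The workflow described above can be sketched in a few lines, assuming scikit-learn is available. The data here are random placeholders passed through a made-up `toy_simulator` function, not the study's simulation results, and the network size, solver, and other hyperparameters are arbitrary choices rather than the authors' settings. Only two outputs are modeled to keep the example short.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import train_test_split
from sklearn.multioutput import MultiOutputRegressor
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

rng = np.random.default_rng(0)

# Placeholder design table: 784 rows x 4 knobs, each scaled to [0, 1].
# Columns stand in for patch length, patch width, chemical potential,
# and relaxation time. Real values would come from the simulation sweep.
X = rng.uniform(0.0, 1.0, size=(784, 4))


def toy_simulator(designs):
    """Hypothetical smooth, nonlinear stand-in for the EM solver."""
    length, width, mu_c, tau = designs.T
    gain = 5.0 + 2.0 * np.sin(3.0 * length) * mu_c + width
    efficiency = 0.5 + 0.4 * np.tanh(2.0 * tau - width)
    return np.column_stack([gain, efficiency])


y = toy_simulator(X)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

models = {
    # Assumed settings; the paper's architecture is not reproduced here.
    "neural_network": make_pipeline(
        StandardScaler(),
        MLPRegressor(hidden_layer_sizes=(32, 32), solver="lbfgs",
                     max_iter=2000, random_state=0),
    ),
    "random_forest": RandomForestRegressor(n_estimators=100, random_state=0),
    # SVR predicts one output at a time, so it is wrapped per output.
    "support_vector": make_pipeline(
        StandardScaler(), MultiOutputRegressor(SVR(C=10.0, epsilon=0.01))
    ),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    # score() returns R^2 averaged over the outputs on held-out designs.
    scores[name] = model.score(X_test, y_test)
    print(f"{name}: R^2 = {scores[name]:.3f}")
```

In a real workflow, `X` and `y` would come from the electromagnetic solver's parameter sweep, and each model would be tuned and validated separately.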

Speeding design without sacrificing accuracy

Once trained, all three models reproduce the simulated antenna behavior with high fidelity, achieving coefficients of determination (R², where 1 indicates perfect agreement with the simulation) between about 0.96 and 0.99 for most parameters. The neural network performs best, reaching an R² of up to 0.999 and delivering predictions in roughly 0.7 milliseconds for a given design, compared with the much longer time needed for a full electromagnetic simulation. It accurately matches not just single-number figures like gain, but also detailed multi‑band curves and two‑dimensional radiation patterns across the terahertz band. The random forest and support vector machine models also perform well, but are slower and slightly less precise than the neural network.
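The coefficient of determination compares a model's squared prediction error with the spread of the reference values around their mean. A minimal sketch of the standard definition in plain Python, using made-up numbers rather than the study's data:

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(y_true) != len(y_pred):
        raise ValueError("inputs must have the same length")
    mean_true = sum(y_true) / len(y_true)
    # Squared error between reference values and predictions.
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    # Spread of the reference values around their own mean.
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0.0:
        raise ValueError("R^2 is undefined when y_true is constant")
    return 1.0 - ss_res / ss_tot


# Hypothetical "simulated" versus "predicted" values for one output.
simulated = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]

score = r_squared(simulated, predicted)
print(round(score, 3))  # prints 0.98
```

A score of 1 means the predictions match the reference exactly, while a score near 0 means the model does no better than always predicting the mean.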

What this means for future wireless systems

In practical terms, this work shows that a carefully trained neural network can stand in for heavy‑duty physics simulations when designing graphene‑based terahertz antennas. Engineers can rapidly explore how tweaks to the antenna’s size or graphene properties will affect gain, efficiency, and beam shape, all in real time, instead of waiting for new simulations each time. That capability is crucial as researchers push toward dense arrays of such antennas for 6G, short‑range ultra‑fast links, and advanced sensing. While this study focuses on a single patch, the same method can be extended to more complex antenna arrays, potentially turning machine learning into a standard tool for crafting the hardware that will carry tomorrow’s data deluge.

Citation: Routhu, G., Abzal, S.M., Sarkar, M. et al. Predicting the performance of a graphene-based patch antenna using a machine learning model for terahertz applications. Sci Rep 16, 14622 (2026). https://doi.org/10.1038/s41598-026-44544-y

Keywords: graphene antennas, terahertz communications, machine learning design, 6G wireless, microstrip patch antenna