Clear Sky Science

A novel hybrid customized color correction and Recurrent Convolutional Neural Networks approach for underwater image enhancement

Why clearer underwater photos matter

Marine biologists surveying coral reefs, engineers inspecting underwater cables, and divers sharing videos from a weekend trip all face the same problem: underwater photos often look foggy, tinted blue-green, and short on detail. This study introduces a new way to clean up those images so that scenes beneath the surface appear closer to how we would see them in clear air, helping both human observers and computer systems make better sense of the undersea world.

The problem with taking pictures under water

Water is far more than just a denser version of air. As light travels through it, certain colors are absorbed and scattered more than others. Reds and yellows fade quickly with depth or distance, while blues and greens travel farther, which is why many underwater scenes look bluish and dull. Tiny particles in the water scatter light in all directions, washing out contrast and hiding fine details. These effects not only frustrate casual photographers but also weaken the performance of algorithms that try to find objects, map the seafloor, or monitor marine life from images and video.
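For readers who want to see this effect in numbers, the fading described above can be mimicked with a simplified image formation model that is widely used in underwater imaging: each color channel is dimmed by a factor exp(-beta * d) over a distance d and mixed with a background haze color. The Python sketch below is only an illustration. The coefficients in `BETA` and the haze color `BACKGROUND` are made-up values chosen to show the trend, not measurements from the study.

```python
import numpy as np

# Illustrative (not measured) attenuation coefficients per metre for R, G, B.
BETA = np.array([0.60, 0.12, 0.08])
# Illustrative haze colour added by scattering (bluish-green), values in [0, 1].
BACKGROUND = np.array([0.05, 0.45, 0.55])

def degrade(scene_rgb, distance_m):
    """Simplified model: I = J * t + B * (1 - t), with t = exp(-beta * d)."""
    t = np.exp(-BETA * distance_m)  # per-channel transmission
    return scene_rgb * t + BACKGROUND * (1.0 - t)

# A white object seen from increasing distances drifts toward the haze colour.
white = np.array([1.0, 1.0, 1.0])
for d in (1.0, 5.0, 10.0):
    print(d, np.round(degrade(white, d), 3))
```

With these assumed values the red channel collapses first, while green and blue remain strong, which matches the bluish cast described above.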

Existing tricks and why they fall short

Researchers have tried many strategies to fix underwater images. Some methods rely on physics-based formulas that describe how light is absorbed and scattered, attempting to reverse that process. Others adjust brightness and contrast, change color balances, or work in special color spaces that separate lightness from color. More recent techniques use deep learning, training neural networks on large collections of underwater images so the systems can learn what a “good” image looks like. However, many of these approaches are either hand-crafted pipelines with fixed steps or one-pass neural networks. They struggle when water conditions change, when lighting is uneven, or when there is strong noise, because they cannot easily adapt and refine their output over several passes.

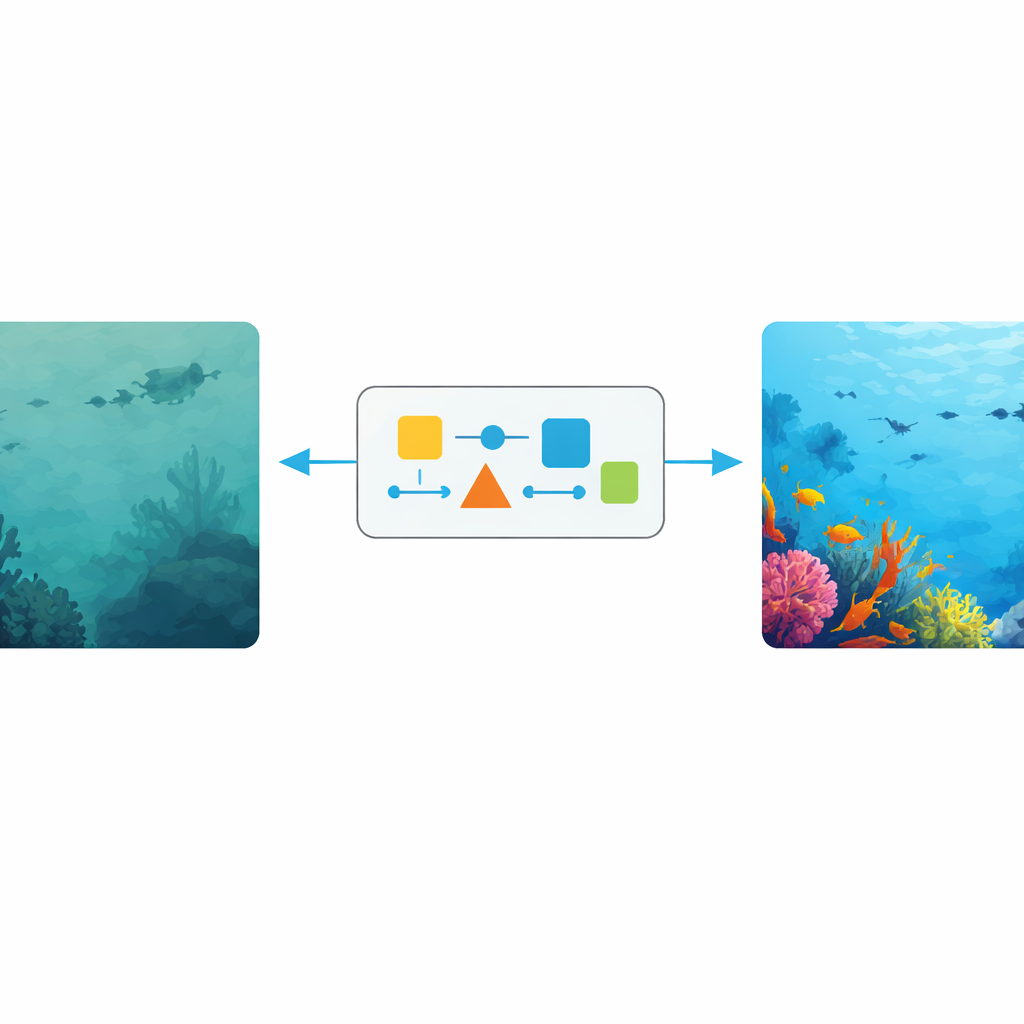

A hybrid path from murky to clear scenes

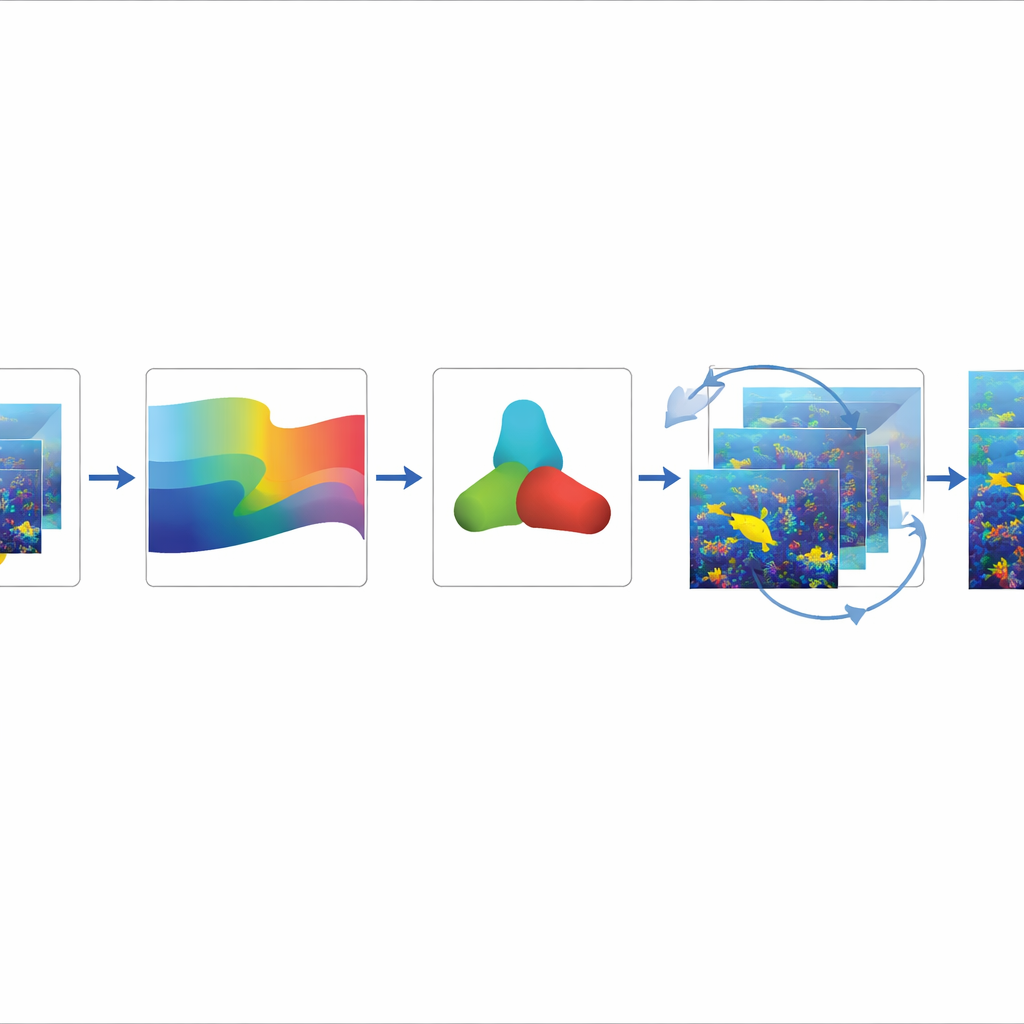

The authors propose a hybrid method that combines the strengths of classical color correction with a modern recurrent convolutional neural network, a type of deep learning model designed to repeatedly refine its predictions. First, a customized color correction stage analyzes how different wavelengths have been weakened and adjusts each color channel accordingly. Instead of blindly boosting reds or cutting blues, it estimates how severely light has been attenuated in that specific underwater setting and restores more natural color tones. The image is then converted into a color space that mirrors human perception and separates overall lightness from the color components, which makes it easier to correct distortions without destroying detail.
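The two ideas in this stage, per-channel correction followed by a lightness/color split, can be sketched in a few lines of Python. This is not the authors' customized correction, which this summary does not spell out. As stand-ins, the sketch assumes a plain gray-world balance (each channel is scaled so its average matches the overall average) and assumes CIELAB as the perceptual color space. The function names `gray_world_correct` and `srgb_to_lab` are hypothetical.

```python
import numpy as np

def gray_world_correct(img):
    """Assumed stand-in: scale each channel so its mean matches the overall mean."""
    means = img.reshape(-1, 3).mean(axis=0)
    gains = means.mean() / np.maximum(means, 1e-6)  # weak channels get larger gains
    return np.clip(img * gains, 0.0, 1.0)

# Standard sRGB (D65) to XYZ matrix and reference white.
_RGB_TO_XYZ = np.array([
    [0.4124564, 0.3575761, 0.1804375],
    [0.2126729, 0.7151522, 0.0721750],
    [0.0193339, 0.1191920, 0.9503041],
])
_WHITE_D65 = np.array([0.95047, 1.0, 1.08883])

def srgb_to_lab(img):
    """Convert sRGB values in [0, 1] to CIELAB (L = lightness, a and b = colour)."""
    linear = np.where(img <= 0.04045, img / 12.92, ((img + 0.055) / 1.055) ** 2.4)
    xyz = linear @ _RGB_TO_XYZ.T / _WHITE_D65
    delta = 6.0 / 29.0
    f = np.where(xyz > delta ** 3, np.cbrt(xyz), xyz / (3 * delta ** 2) + 4.0 / 29.0)
    lightness = 116.0 * f[..., 1] - 16.0
    a = 500.0 * (f[..., 0] - f[..., 1])
    b = 200.0 * (f[..., 1] - f[..., 2])
    return np.stack([lightness, a, b], axis=-1)

# Usage: a synthetic blue-green tinted image, corrected and then split into L, a, b.
rng = np.random.default_rng(0)
tinted = rng.random((8, 8, 3)) * np.array([0.2, 0.8, 0.9])
balanced = gray_world_correct(tinted)
lab = srgb_to_lab(balanced)
```

Once the image is in this form, lightness can be adjusted for contrast without shifting the colors, and the color components can be adjusted without touching detail carried by lightness.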

Letting the network revisit the image

After the initial color fix, the color-corrected image is passed through the recurrent convolutional neural network. Unlike a standard feed-forward network that analyzes an image once, this design loops its output back in, allowing the model to reconsider the scene across several iterations. With each pass, it sharpens edges, cleans up noise, and improves contrast while trying not to create artificial halos or over-enhanced regions. Additional processing steps smooth out noise in a way that protects boundaries between objects, and specialized handling of the blue and green channels helps correct the most common underwater color imbalance. The overall pipeline therefore moves from raw, tinted images to successively clearer and more accurate representations.
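The looping idea itself is simple to show: the same step is applied again and again, each time to its own previous output. The Python toy below illustrates only that feedback structure. It is not a trained network. The hypothetical `refine_step` is a fixed sharpening filter standing in for the learned convolutional layers, and the iteration count and `strength` value are arbitrary assumptions.

```python
import numpy as np

def box_blur(img):
    """3x3 mean filter with edge padding; expects a float array of shape (H, W, 3)."""
    padded = np.pad(img, ((1, 1), (1, 1), (0, 0)), mode="edge")
    h, w = img.shape[:2]
    acc = np.zeros_like(img)
    for dy in range(3):
        for dx in range(3):
            acc += padded[dy:dy + h, dx:dx + w]
    return acc / 9.0

def refine_step(current, strength=0.3):
    """Stand-in for the learned layers: add back a fraction of the local detail."""
    detail = current - box_blur(current)
    return np.clip(current + strength * detail, 0.0, 1.0)

def recurrent_refine(img, iterations=3):
    """Apply the same step repeatedly, feeding each output back in as the next input."""
    state = img
    history = [state]
    for _ in range(iterations):
        state = refine_step(state)
        history.append(state)
    return state, history

# Usage: a soft vertical edge becomes more pronounced with each pass.
edge = np.full((8, 8, 3), 0.3)
edge[:, 4:, :] = 0.7
refined, passes = recurrent_refine(edge, iterations=3)
```

In a real recurrent convolutional network the step would be a set of trained filters shared across iterations, so the model learns how much to change on each pass instead of following a fixed rule.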

Evidence from diverse underwater scenes

To judge how well the method works, the researchers tested it on a variety of public underwater image collections, spanning synthetic and real scenes, as well as still images and video. They evaluated quality with standard measures that compare the output with a reference image in terms of sharpness, structural similarity, and noise levels, and also with scores that do not require a perfect reference image, which matters in the ocean, where a truly “correct” view is rarely available. Across images of coral reefs, statues on the seabed, swimming fish, and divers, their approach consistently produced higher scores than competing techniques and delivered images that human viewers judged to be clearer, more colorful, and richer in detail.
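To give a feel for what a reference-based score looks like, the Python sketch below computes mean squared error (MSE) and peak signal-to-noise ratio (PSNR), two common measures of how far an enhanced image sits from a clean reference. Treating these as among the study's measures is an assumption, since this summary does not name the specific metrics used.

```python
import numpy as np

def mse(reference, estimate):
    """Mean squared error between two images of the same shape."""
    diff = reference.astype(np.float64) - estimate.astype(np.float64)
    return float(np.mean(diff ** 2))

def psnr(reference, estimate, max_value=1.0):
    """Peak signal-to-noise ratio in decibels; higher means closer to the reference."""
    err = mse(reference, estimate)
    if err == 0.0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / err))

# Usage: an estimate that is off by 0.1 everywhere on a [0, 1] intensity scale.
reference = np.zeros((4, 4, 3))
estimate = np.full((4, 4, 3), 0.1)
score = psnr(reference, estimate)
```

Scores of this kind need a clean reference, which is why they suit synthetic test sets, while the no-reference scores mentioned above are needed for real ocean footage.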

What this means for seeing under the sea

In simple terms, the study shows that carefully fixing color first and then letting a smart network repeatedly polish the result leads to underwater pictures that look more natural and reveal more information. While the method can struggle in very dark conditions and today’s quality scores are not perfect reflections of human judgment, this hybrid approach already offers a practical boost for tasks such as object detection, inspection, and environmental monitoring below the surface. With further refinements and better ways to rate image quality, tools like this could become standard components of underwater cameras and robots, giving us a clearer window into the oceans.

Citation: Natarajan, D., Sudhakaran, P. & Bereznychenko, V. A novel hybrid customized color correction and Recurrent Convolutional Neural Networks approach for underwater image enhancement. Sci Rep 16, 14006 (2026). https://doi.org/10.1038/s41598-026-44535-z

Keywords: underwater imaging, image enhancement, color correction, deep learning, marine vision