Clear Sky Science · en

Sketch recognition model based on improved CycleGAN network and dual attention mechanism

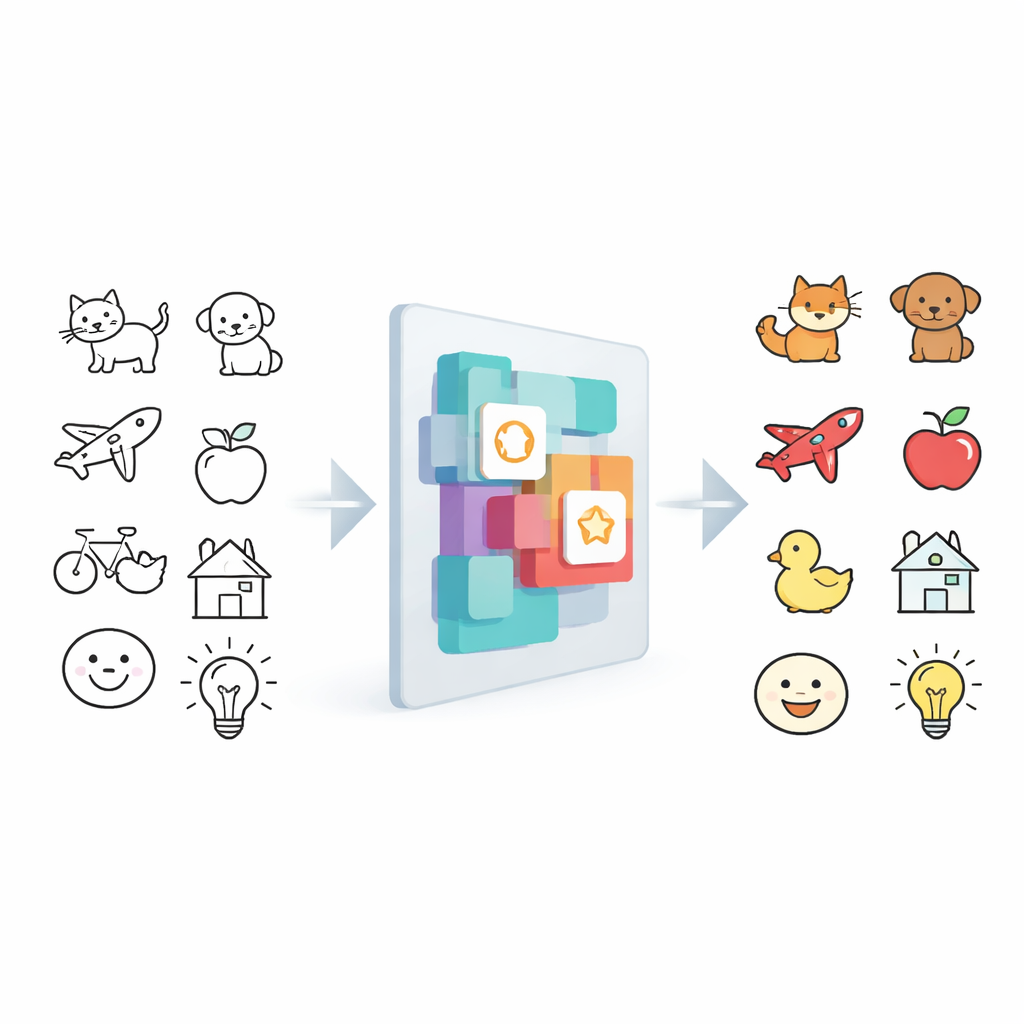

Teaching Computers to Understand Doodles

From napkin sketches to whiteboard doodles, quick drawings are one of the most natural ways people share ideas. But for computers, these sparse lines are surprisingly hard to interpret. This paper presents a new artificial intelligence model that recognizes hand-drawn sketches with accuracy above 97% on two standard benchmarks, bringing us closer to apps that can instantly turn rough doodles into polished images, searchable icons, or interactive designs.

Why Sketches Are So Hard for Machines

Unlike full-color photos, sketches are made of just a few strokes. Different people draw the same object in wildly different ways, and important details can be missing, faint, or unevenly placed on the page. Traditional recognition systems rely on carefully crafted rules or standard image features, and they often mistake subtle line variations for meaningful differences. As a result, they may confuse similar objects, like a fox and a dog, or struggle with messy, casual drawings. Researchers have turned to deep learning to learn patterns directly from data, but even modern systems can stumble when sketches are too simple, noisy, or varied.

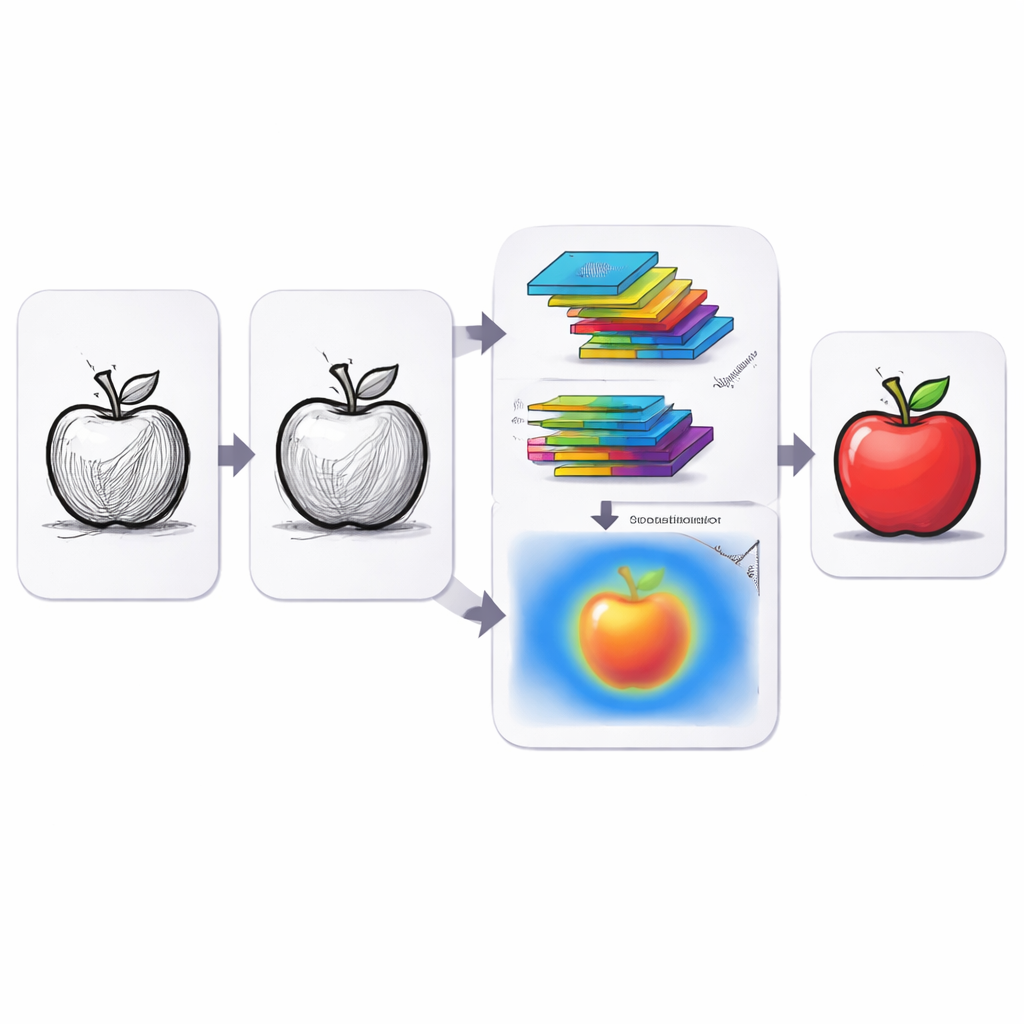

A Smarter Way to Look at Line Drawings

The authors tackle these challenges with a model that treats sketch understanding as a two-step process: first, make the sketch easier for the computer to “see,” and then focus its attention on the most informative parts. At the heart of their approach is an improved version of a powerful image-translation framework known as CycleGAN. Instead of just looking at the drawing once, the network passes it through multiple directional filters that view the strokes from several angles, capturing edges and contours more completely. A brightness balancing module then evens out light and dark areas so that differences in shading or poor lighting do not confuse the system. Together, these steps turn raw doodles into richer internal representations that highlight the underlying structure of the object.
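To make the idea of directional filtering and brightness balancing concrete, here is a minimal Python sketch. It is not the paper's implementation: the four 3x3 kernels, the function names, and the simple min-max balancing rule are all assumptions chosen for illustration, since this summary does not specify the actual filters or the balancing module.

```python
# Illustrative sketch only: these kernels and the min-max balancing rule are
# assumptions chosen for clarity, not the filters described in the paper.

# One 3x3 line-detection kernel per stroke direction (hypothetical choice).
DIRECTIONAL_KERNELS = {
    "horizontal": [[-1, -1, -1], [2, 2, 2], [-1, -1, -1]],
    "vertical": [[-1, 2, -1], [-1, 2, -1], [-1, 2, -1]],
    "diagonal_down": [[2, -1, -1], [-1, 2, -1], [-1, -1, 2]],
    "diagonal_up": [[-1, -1, 2], [-1, 2, -1], [2, -1, -1]],
}


def apply_kernel(image, kernel):
    """Slide a 3x3 kernel over a 2D list of pixels (no padding)."""
    rows, cols = len(image), len(image[0])
    response = []
    for r in range(rows - 2):
        response_row = []
        for c in range(cols - 2):
            total = 0.0
            for i in range(3):
                for j in range(3):
                    total += image[r + i][c + j] * kernel[i][j]
            response_row.append(total)
        response.append(response_row)
    return response


def directional_responses(image):
    """Return one response map per stroke direction."""
    return {
        name: apply_kernel(image, kernel)
        for name, kernel in DIRECTIONAL_KERNELS.items()
    }


def balance_brightness(image):
    """Stretch pixel values so the darkest maps to 0.0 and the brightest to 1.0."""
    values = [v for row in image for v in row]
    low, high = min(values), max(values)
    if high == low:
        # A flat image carries no contrast to stretch.
        return [[0.0 for _ in row] for row in image]
    return [[(v - low) / (high - low) for v in row] for row in image]


# A 5x5 toy sketch containing a single vertical stroke in the middle column.
vertical_stroke = [[0, 0, 1, 0, 0] for _ in range(5)]
stroke_maps = directional_responses(balance_brightness(vertical_stroke))
```

On this toy input the vertical kernel responds strongly along the stroke while the horizontal kernel stays at zero, which is the sense in which several directional views capture contours more completely than a single pass.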

Teaching the Network What to Pay Attention To

Even with better features, a sketch still contains a mix of helpful strokes and distracting details. To separate the signal from the noise, the model uses a dual attention mechanism inspired by how humans focus their gaze. One part, called channel attention, looks across different sets of extracted features and boosts those that best distinguish one category from another, such as the circular outline of a wheel or the beak of a bird. The other part, spatial attention, concentrates on specific regions of the sketch, emphasizing where the most informative strokes lie while downplaying blank or messy areas. These two forms of attention work together so that the model not only sees more but also knows what to ignore.
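The two forms of attention described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the authors' module: real attention blocks use small learned layers to compute their weights, whereas this version gates directly on averages, and the function names are hypothetical.

```python
import math

# Simplified illustration: learned layers are omitted (an assumption), so each
# gate is just a sigmoid of an average. Features are a list of channels, and
# each channel is a 2D list of numbers.


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def channel_attention(features):
    """Scale each channel by a gate computed from its global average."""
    weights = []
    for channel in features:
        values = [v for row in channel for v in row]
        weights.append(sigmoid(sum(values) / len(values)))
    scaled = [
        [[v * w for v in row] for row in channel]
        for channel, w in zip(features, weights)
    ]
    return scaled, weights


def spatial_attention(features):
    """Scale each location by a gate computed from the average across channels."""
    n = len(features)
    rows, cols = len(features[0]), len(features[0][0])
    mask = [
        [sigmoid(sum(features[k][r][c] for k in range(n)) / n) for c in range(cols)]
        for r in range(rows)
    ]
    scaled = [
        [[features[k][r][c] * mask[r][c] for c in range(cols)] for r in range(rows)]
        for k in range(n)
    ]
    return scaled, mask


def dual_attention(features):
    """Apply channel attention first, then spatial attention."""
    refined, channel_weights = channel_attention(features)
    refined, spatial_mask = spatial_attention(refined)
    return refined, channel_weights, spatial_mask


# Toy input: one active channel with a strong response in a single corner,
# and one channel that is entirely blank.
toy_features = [[[0.0, 8.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 0.0]]]
refined, channel_weights, spatial_mask = dual_attention(toy_features)
```

In this toy case the active channel receives a larger weight than the blank one, and the spatial mask is highest at the corner where the strong response sits. Applying the channel step before the spatial step is a common ordering, assumed here rather than taken from the paper.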

Putting the Model to the Test

After extracting and refining sketch features, the system passes them into a compact classifier that blends global averaging with additional convolution layers to make the final decision about what the sketch represents. The researchers trained and evaluated their model on two widely used sketch collections: TU-Berlin, with 20,000 drawings of everyday objects, and QuickDraw, with millions of casual doodles collected from online players. To keep the test realistic, they resized images, removed noise, and split the data into separate training and testing groups. Across these benchmarks, the new model consistently outperformed existing methods, achieving accuracy above 97% on both datasets and beating several state-of-the-art competitors in precision, recall, and a combined score known as the F1 measure.
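For readers unfamiliar with the metrics named above, the following Python sketch shows how precision, recall, and the F1 measure are computed for one category. The labels are made-up toy data, not results from the paper, and the function name is hypothetical.

```python
def precision_recall_f1(true_labels, predicted_labels, positive):
    """Compute precision, recall, and F1 for a single category."""
    pairs = list(zip(true_labels, predicted_labels))
    # Correctly recognized sketches of the category.
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    # Other sketches wrongly assigned to the category.
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    # Sketches of the category that the model missed.
    fn = sum(1 for t, p in pairs if t == positive and p != positive)

    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Toy example (invented labels): a model that sometimes confuses foxes and dogs.
true_labels = ["fox", "fox", "dog", "dog", "fox"]
predicted_labels = ["fox", "dog", "dog", "fox", "fox"]
fox_scores = precision_recall_f1(true_labels, predicted_labels, "fox")
```

Precision asks how many sketches labeled "fox" really were foxes, recall asks how many real foxes were found, and F1 balances the two. For multi-category benchmarks these per-category scores are typically averaged; how the paper averages them is not stated in this summary.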

What This Means for Everyday Tools

For non-experts, the technical details reduce to a simple message: this model makes computers much better at understanding rough drawings. By redesigning how the system extracts lines, evens out brightness, and directs its attention, the authors show that machines can reliably recognize even sparse, quirky sketches. This opens the door to drawing-based search engines, design software that turns quick doodles into polished artwork, and more natural ways to interact with devices without precise mouse clicks or professional art skills. While the system can still confuse very similar categories, future work combining sketch analysis with language cues may close that gap, making freehand doodling a truly universal interface between people and machines.

Citation: Wang, Y., Xie, L. & Huang, M. Sketch recognition model based on improved CycleGAN network and dual attention mechanism. Sci Rep 16, 14014 (2026). https://doi.org/10.1038/s41598-026-44146-8

Keywords: sketch recognition, deep learning, CycleGAN, attention mechanism, human-computer interaction