Clear Sky Science · en

Distance-based temporal similarity metrics for adaptive channel selection in multi-modal EEG-fNIRS BCI frameworks

Helping Brains Talk to Machines

For people who cannot move or speak, brain–computer interfaces promise a way to communicate using only their thoughts. But turning raw brain signals into reliable control commands is like trying to hold a conversation in a crowded stadium: there is a lot of data and even more noise. This study presents a simple but powerful way to slim down those data streams so computers can respond faster, without losing what the brain is trying to say.

Why Too Many Wires Slow Things Down

Modern brain–computer systems often use two kinds of noninvasive sensors on the scalp. Electroencephalography (EEG) records tiny electrical pulses from neurons, while functional near-infrared spectroscopy (fNIRS) tracks blood and oxygen changes linked to brain activity. Together they form a “hybrid” system that is more accurate than either alone, but at a cost: dozens of sensors over long recording times produce huge, unwieldy datasets. Searching for the best subset of sensors by trial and error would require testing astronomically many combinations, far beyond what present-day computers can handle in real time.
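To give a sense of scale, the short calculation below counts how many non-empty sensor subsets an exhaustive search would have to score. It uses the channel counts reported later in this article (30 EEG, 36 fNIRS, 64 EEG for the speller); the helper name `n_subsets` is only illustrative.

```python
def n_subsets(n_channels: int) -> int:
    """Number of non-empty subsets of n_channels sensors."""
    return 2 ** n_channels - 1

eeg_only = n_subsets(30)       # 30 EEG channels
hybrid = n_subsets(30 + 36)    # 30 EEG + 36 fNIRS channels searched jointly
speller = n_subsets(64)        # 64 EEG channels

print(f"EEG only: {eeg_only:.2e}")
print(f"Hybrid:   {hybrid:.2e}")
print(f"Speller:  {speller:.2e}")
```

Even the smallest case is over a billion candidate subsets, each of which would need its own trained and evaluated classifier.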

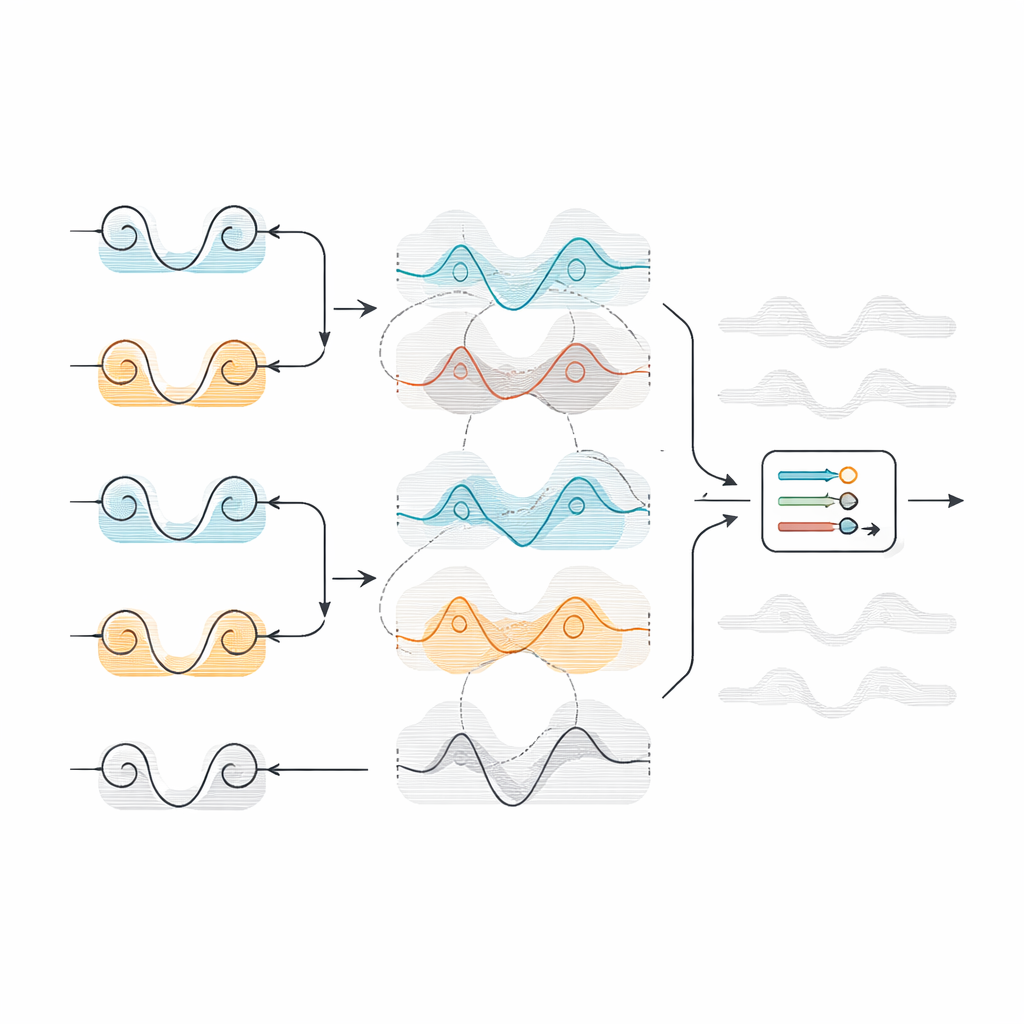

Seeing When Neighboring Signals Say the Same Thing

The authors tackle this problem with an intuitive idea: if two neighboring sensors on the head tell almost the same story over time, you do not need both. They pair up nearby electrodes and compute a distance that measures how different the two time-varying signals are. If the distance is large, both channels are kept because they likely carry distinct information. If it is small, the signals are very similar and one channel is discarded as redundant. To decide where to draw this line, the team compares each pair’s distance to an overall threshold, computed either from the average value (Mean) or from the middle value (Median) of all distances.
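The pruning rule described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the article does not name the distance measure, so Euclidean distance is an assumption, as are the choice to drop the second channel of a redundant pair, the handling of ties, and the function name `prune_channels`.

```python
import numpy as np

def prune_channels(signals, pairs, threshold="median"):
    """Return sorted indices of channels kept after pairwise pruning.

    signals: array of shape (n_channels, n_samples)
    pairs:   list of (i, j) index pairs of neighboring channels
    """
    # Assumption: Euclidean distance between the two time series.
    distances = np.array([np.linalg.norm(signals[i] - signals[j])
                          for i, j in pairs])
    # Global cutoff from the Mean or the Median of all pair distances.
    cutoff = np.median(distances) if threshold == "median" else np.mean(distances)

    kept = set(range(signals.shape[0]))
    for (i, j), d in zip(pairs, distances):
        if d <= cutoff:
            # Similar pair: treat as redundant and drop one channel.
            # Assumption: the second channel of the pair is the one dropped.
            kept.discard(j)
    return sorted(kept)

# Toy example: channels 0 and 1 are near-duplicates, 2 and 3 are opposites.
t = np.linspace(0, 1, 100)
signals = np.vstack([
    np.sin(2 * np.pi * t),
    np.sin(2 * np.pi * t) + 0.01,
    np.cos(2 * np.pi * t),
    -np.cos(2 * np.pi * t),
])
print(prune_channels(signals, pairs=[(0, 1), (2, 3)]))  # [0, 2, 3]
```

Channels that appear in no pair are always kept in this sketch, and a channel survives only if none of its pairs flags it as redundant.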

Testing the Shortcut on Different Mental Tasks

To see if this shortcut really works, the researchers applied it to two open datasets. One contained EEG and fNIRS recordings while volunteers imagined moving their hands or solved mental arithmetic problems. The other was a classic “speller” task, where users focus on flashing letters so a distinct brain wave, the P300 response, reveals their choice. After cleaning the signals, the team extracted simple statistical features from each channel, or used short time windows directly, and then trained three well-known machine-learning tools to classify the tasks. They compared performance with and without their channel reduction method.
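As a rough sketch of the two input styles mentioned above, the snippet below turns one trial into either per-channel summary statistics or a stack of short time windows. The article does not list which statistics were used, so mean, standard deviation, minimum, and maximum are assumptions, as are the function names and window parameters.

```python
import numpy as np

def channel_features(trial):
    """Per-channel summary statistics for one trial.

    trial: array of shape (n_channels, n_samples)
    Returns a 1-D vector: all means, then stds, then minima, then maxima.
    """
    # Assumption: these four statistics stand in for the unnamed features.
    return np.concatenate([
        trial.mean(axis=1),
        trial.std(axis=1),
        trial.min(axis=1),
        trial.max(axis=1),
    ])

def sliding_windows(trial, width, step):
    """Cut a trial into short windows of shape (n_windows, n_channels, width)."""
    starts = range(0, trial.shape[1] - width + 1, step)
    return np.stack([trial[:, s:s + width] for s in starts])

trial = np.arange(12.0).reshape(2, 6)   # 2 channels, 6 samples (toy data)
print(channel_features(trial).shape)     # (8,)
print(sliding_windows(trial, width=4, step=2).shape)  # (2, 2, 4)
```

Either output can be passed to a standard classifier; with fewer channels, both the feature vector and the windows shrink proportionally.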

Keeping the Important Brain Areas

The method cut the number of channels by more than half across all tasks: roughly 15–17 out of 30 EEG channels and about 18–24 out of 36 fNIRS channels in the first dataset, and only about 31–40 out of 64 EEG channels in the speller. Crucially, accuracy stayed the same or even improved. For the speller, accuracy reached about 94% with a lightweight classifier; for hybrid arithmetic tasks, it climbed to around 73%. When the team plotted how often each sensor was kept, the surviving channels lined up with what brain science would predict: motor areas for imagined movement, prefrontal regions for arithmetic, and parietal–occipital zones for the P300 response. In other words, the algorithm was automatically homing in on functionally important regions without being told where they were.
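One simple way to produce the "how often was each sensor kept" view is to count, across runs or participants, the fraction of times each channel survives pruning. The sketch below assumes the kept channels are available as lists of indices; the function name and the toy data are hypothetical.

```python
from collections import Counter

def selection_frequency(kept_per_run, n_channels):
    """Fraction of runs in which each channel index was kept."""
    counts = Counter(ch for kept in kept_per_run for ch in set(kept))
    return [counts[ch] / len(kept_per_run) for ch in range(n_channels)]

# Hypothetical kept-channel lists from three runs over three channels.
runs = [[0, 2], [0, 1], [0, 2]]
print(selection_frequency(runs, n_channels=3))
```

Channels with frequencies near 1 are the ones the procedure consistently retains, which is what allows them to be compared against known functional brain regions.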

Choosing the Right Way to Handle Noise

An important twist in the study is how the threshold is set. Using the Median distance, which is less swayed by extreme values, often yielded more stable and sometimes higher accuracy than using the Mean, especially for noisy or high-demand tasks such as mental arithmetic and the P300 speller. Statistical tests showed that this choice is not cosmetic: for those tasks, Median-based thresholds clearly outperformed Mean-based ones, while for simpler movement imagery the difference was minor. At the same time, models that used the reduced channel sets could make decisions within about a tenth to a fifth of a second, a dramatic speedup compared with several seconds when every channel was included.
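A small numerical example shows why the two thresholds can behave differently. The distances below are made up for illustration and are not taken from the study: four ordinary channel pairs and one pair with an extreme distance, as might come from a noisy electrode.

```python
import numpy as np

# Hypothetical pair distances; the last one is an outlier.
distances = np.array([1.0, 1.2, 0.9, 1.1, 9.0])

mean_cutoff = distances.mean()        # pulled upward by the outlier (about 2.64)
median_cutoff = np.median(distances)  # middle value, unaffected by the outlier (1.1)

# Pairs at or below the cutoff would be treated as redundant.
redundant_mean = int((distances <= mean_cutoff).sum())
redundant_median = int((distances <= median_cutoff).sum())

print(redundant_mean, redundant_median)  # 4 3
```

With the Mean, a single extreme pair raises the cutoff enough that every ordinary pair is flagged as redundant, whereas the Median keeps the cutoff among the typical distances.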

What This Means for Future Brain Interfaces

In plain terms, this work shows that you can discard more than half of the sensors in a hybrid EEG–fNIRS system, keep the most informative ones, and still match or beat the original accuracy, all while bringing response times down to a range suitable for real-time use. By relying on straightforward distance calculations instead of heavy optimization schemes or deep learning, the proposed framework is easier to implement on portable devices with limited computing power. For future brain–computer interfaces designed to leave the lab and enter homes, clinics, or even wearable patches, this kind of smart channel pruning could be a key step toward making thought-controlled technology both practical and widely accessible.

Citation: Alhudhaif, A. Distance-based temporal similarity metrics for adaptive channel selection in multi-modal EEG-fNIRS BCI frameworks. Sci Rep 16, 13702 (2026). https://doi.org/10.1038/s41598-026-44052-z

Keywords: brain-computer interface, EEG, fNIRS, channel selection, P300 speller