Clear Sky Science

Uncertainty-aware feature-weighted ensemble framework for heart disease prediction

Why spotting heart trouble early matters

Heart disease is still the world’s top killer, yet most people are diagnosed only after symptoms become serious. Doctors increasingly turn to computer programs to sift through routine test results and medical records, looking for early warning signs that might be easy for humans to miss. But many of today’s artificial intelligence tools act like overconfident students: they give a single yes-or-no answer without admitting when they are unsure. This paper presents a smarter system that not only predicts heart disease more accurately, but also knows when to raise its hand and say, in effect, “I’m not certain—please double‑check.”

How today’s smart tools fall short

Most existing prediction systems for heart problems learn from tables of patient information—such as age, blood pressure, cholesterol and chest pain history—and then apply machine‑learning models to decide who is at risk. Two big weaknesses limit their usefulness in real clinics. First, they usually treat every input detail as equally important. In reality, some factors carry far more weight than others, and weaker but still useful signals can be drowned out in the noise. Second, most systems offer no sense of uncertainty; they output a single probability or class label even when the underlying data are confusing. In high‑stakes medicine, that kind of blind confidence can translate into missed diagnoses or unnecessary alarm.

Breaking the problem into clearer pieces

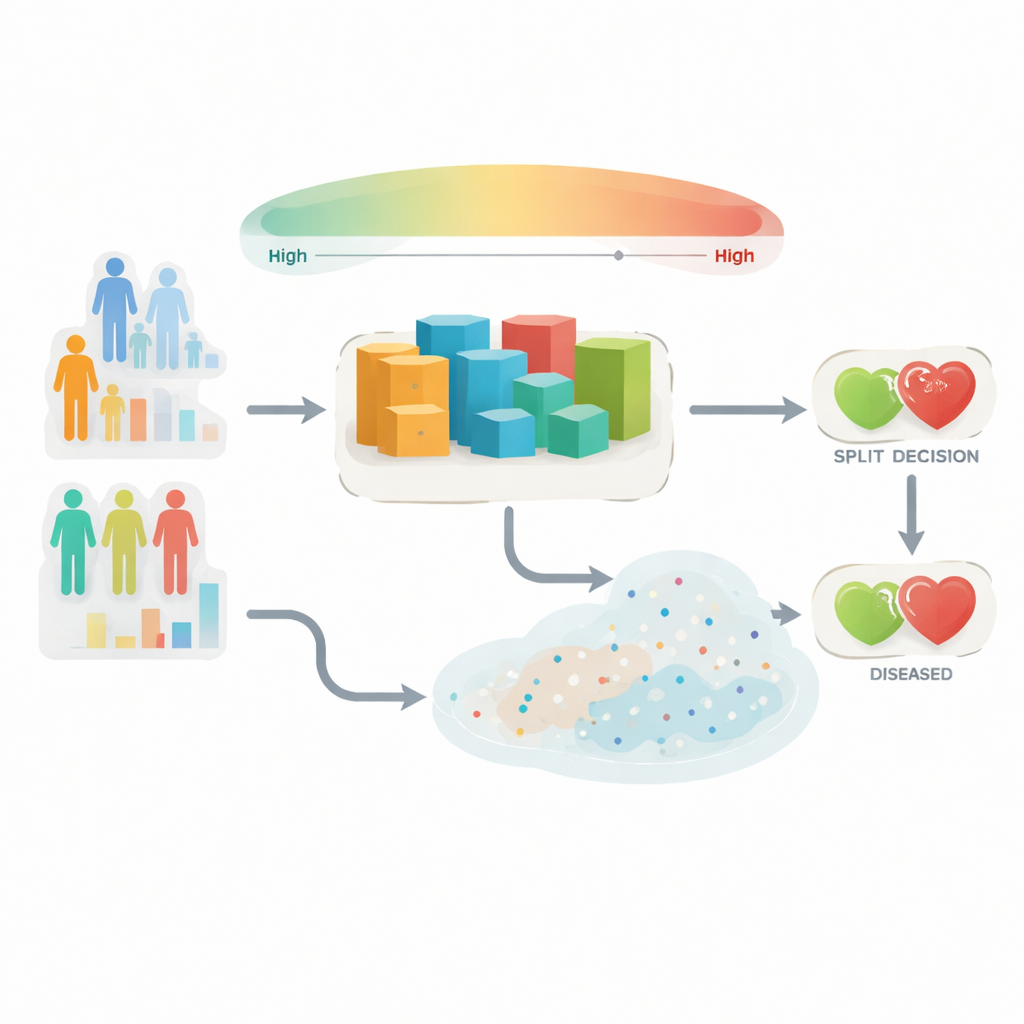

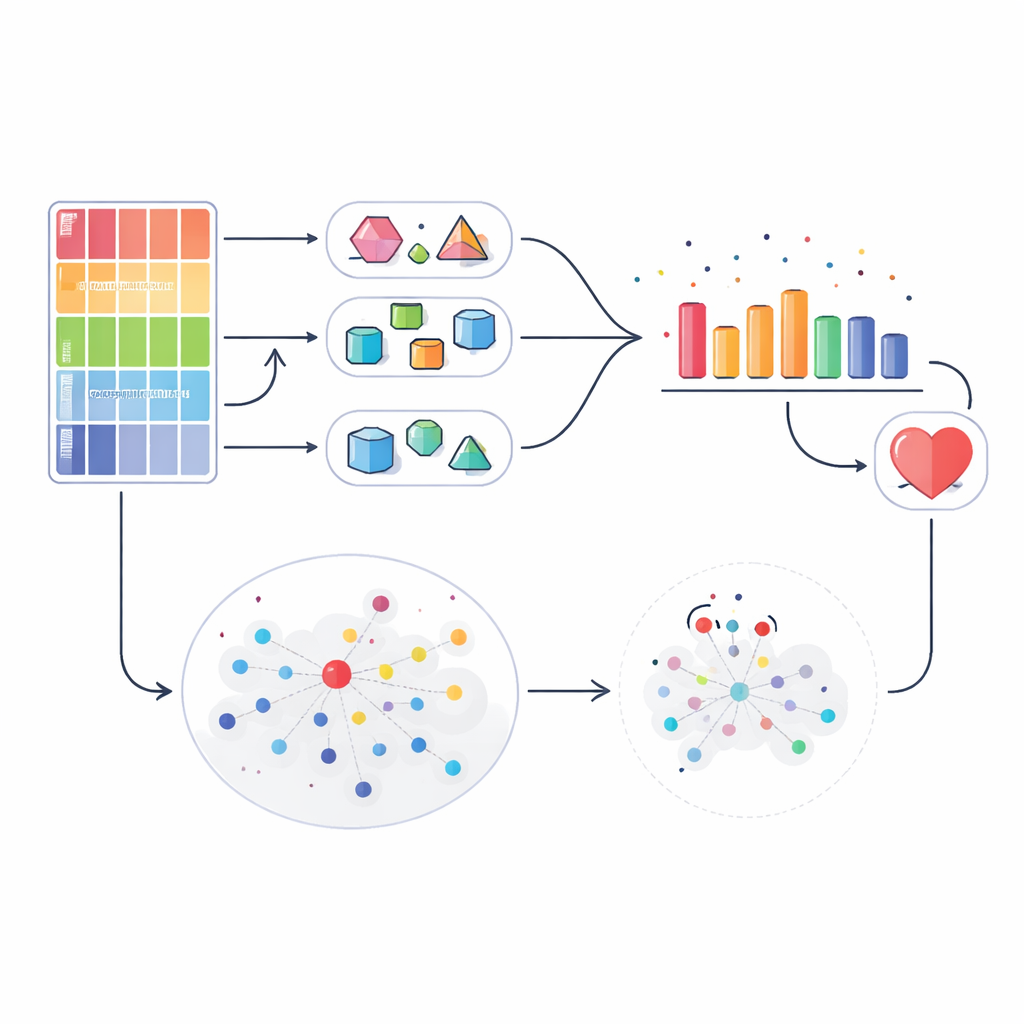

The authors propose an approach called the Uncertainty‑Aware Feature‑Weighted Ensemble (UAFE) to tackle both limitations. The method starts by ranking all clinical measurements according to how informative they are for detecting heart disease, using a well‑known tree‑based algorithm as a guide. It then splits these measurements into three groups: highly informative, moderately informative and long‑tail features that may help only in special cases. Different prediction models are trained on different combinations of these groups. Some focus only on the strongest signals, while others see a broader picture that includes weaker patterns. By mixing these specialized and general models, the system can capture both clear‑cut risk factors and subtle combinations that matter only for certain patients.
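
To make the grouping step concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the authors' code: the importance scores are invented, the feature names are placeholders, the 30%/40% cut-offs are arbitrary, and the three model roles ("specialist", "intermediate", "generalist") are hypothetical labels for the kind of combinations the paragraph describes.

```python
def group_features(importance, high_frac=0.3, mid_frac=0.4):
    """Rank features by importance and split them into three tiers.

    high_frac and mid_frac are illustrative cut-offs (assumptions),
    not values taken from the paper.
    """
    ranked = sorted(importance, key=importance.get, reverse=True)
    n_high = max(1, round(len(ranked) * high_frac))
    n_mid = max(1, round(len(ranked) * mid_frac))
    return {
        "high": ranked[:n_high],
        "moderate": ranked[n_high:n_high + n_mid],
        "long_tail": ranked[n_high + n_mid:],
    }


# Hypothetical importance scores, standing in for the output of a
# tree-based ranking step. The numbers are made up for illustration.
importance = {
    "chest_pain": 0.25, "max_heart_rate": 0.18, "st_depression": 0.15,
    "age": 0.12, "cholesterol": 0.10, "blood_pressure": 0.08,
    "sex": 0.05, "fasting_sugar": 0.04, "resting_ecg": 0.02, "slope": 0.01,
}

tiers = group_features(importance)

# Each (hypothetical) model sees a different combination of tiers:
# some only the strongest signals, others the broader picture.
model_inputs = {
    "specialist": tiers["high"],
    "intermediate": tiers["high"] + tiers["moderate"],
    "generalist": tiers["high"] + tiers["moderate"] + tiers["long_tail"],
}

for name, features in model_inputs.items():
    print(name, features)
```

In a real pipeline, each entry of `model_inputs` would select the columns used to train one member of the ensemble.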

Teaching the system to doubt itself

Once the ensemble of models is trained, UAFE pays close attention to how much the models agree with one another for each new patient. If all models give similar risk estimates, the system treats that case as reliable and combines their opinions using weights based on past performance. If the models strongly disagree, UAFE interprets this as high uncertainty. In those situations, it deliberately “smooths” the influence of any single model so that no overconfident outlier dominates the final decision. For especially doubtful cases, the system goes a step further: it looks at a small neighborhood of past patients with similar measurements and checks how many of them actually had heart disease. This local comparison acts as a second opinion that can nudge borderline predictions in a safer direction.
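
The logic of this step can be sketched in a few lines of Python. Everything specific here is an assumption made for illustration: measuring disagreement as the standard deviation of the models' risk estimates, the thresholds `tau` and `tau_high`, the blending factors `alpha` and `beta`, and the toy patient data. The paper's actual formulas may differ.

```python
import math
from statistics import pstdev


def combine(probs, perf_weights, tau=0.15, alpha=0.5):
    """Weighted average of model risks, smoothed when models disagree."""
    spread = pstdev(probs)  # disagreement between the models
    total = sum(perf_weights)
    weights = [w / total for w in perf_weights]
    if spread > tau:
        # Pull weights toward equal shares so no single model dominates.
        uniform = 1 / len(probs)
        weights = [(1 - alpha) * w + alpha * uniform for w in weights]
    risk = sum(w * p for w, p in zip(weights, probs))
    return risk, spread


def neighbor_rate(x, past_X, past_y, k=3):
    """Share of the k most similar past patients who had heart disease."""
    nearest = sorted(range(len(past_X)), key=lambda i: math.dist(x, past_X[i]))
    return sum(past_y[i] for i in nearest[:k]) / k


def predict(probs, perf_weights, x, past_X, past_y, tau_high=0.25, beta=0.5):
    """Combine the models, then consult neighbors for very doubtful cases."""
    risk, spread = combine(probs, perf_weights)
    if spread > tau_high:
        second_opinion = neighbor_rate(x, past_X, past_y)
        risk = (1 - beta) * risk + beta * second_opinion
    return risk, spread


# Toy data (invented): two scaled measurements per past patient.
past_X = [(0.1, 0.2), (0.2, 0.1), (0.8, 0.9), (0.9, 0.8), (0.7, 0.7)]
past_y = [0, 0, 1, 1, 1]
perf_weights = [0.5, 0.3, 0.2]  # hypothetical past-performance weights

agree_risk, agree_spread = predict(
    [0.80, 0.82, 0.78], perf_weights, (0.8, 0.8), past_X, past_y)
doubt_risk, doubt_spread = predict(
    [0.90, 0.20, 0.40], perf_weights, (0.8, 0.8), past_X, past_y)

print(f"agreeing models: risk={agree_risk:.3f}, spread={agree_spread:.3f}")
print(f"disagreeing models: risk={doubt_risk:.3f}, spread={doubt_spread:.3f}")
```

The returned `spread` doubles as a simple uncertainty score: a large value marks a case that may deserve human review.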

How well the new idea works in practice

The researchers tested UAFE on a widely used heart disease dataset and compared it against standard methods, including logistic regression, several popular tree‑based models, a deep‑learning system, and a conventional voting ensemble. Across five standard measures of quality, UAFE came out on top, achieving about 87% overall accuracy and an especially strong ability to catch true heart disease cases while keeping false alarms in check. It cut the number of missed diagnoses nearly in half compared with strong individual models. The team also examined how sensitive the method is to the choice of internal settings, such as how features are grouped and how many cases are treated as “uncertain,” and found that performance stayed robust across a wide range of values.

Staying reliable across different hospitals

A common worry with AI tools is that they may perform well only where they were trained, then stumble when moved to a new hospital with slightly different patients or recording habits. To probe this, the authors ran a leave‑one‑center‑out analysis across four international clinical sites. In each round, they trained UAFE on three centers and tested it on the fourth, mimicking deployment in a new clinic. The framework consistently beat a strong baseline model and kept a healthy margin in its ability to separate patients with and without heart disease. This suggests that the uncertainty‑aware design helps the system avoid overfitting to quirks of any single hospital and instead latch onto more universal disease patterns.
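
The rotation behind a leave-one-center-out analysis is simple to write down. The Python sketch below shows only the data splitting, not the model training; the site labels and patient records are placeholders invented for illustration.

```python
def leave_one_center_out(records):
    """Yield (held_out_center, train, test) once per center."""
    centers = sorted({r["center"] for r in records})
    for held_out in centers:
        train = [r for r in records if r["center"] != held_out]
        test = [r for r in records if r["center"] == held_out]
        yield held_out, train, test


# Placeholder records: two patients from each of four hypothetical sites.
records = [
    {"center": site, "patient_id": f"{site}-{i}"}
    for site in ("site_A", "site_B", "site_C", "site_D")
    for i in range(2)
]

for held_out, train, test in leave_one_center_out(records):
    # A model would be trained on `train` and scored on `test` here.
    print(f"held out {held_out}: {len(train)} training, {len(test)} test")
```

Because the held-out center never contributes training data, each round's score reflects how the model handles patients from a site it has not seen.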

What this means for patients and doctors

In everyday language, the study shows that it is possible to build a computer assistant that not only predicts heart disease risk more accurately, but also knows when its own judgment may be shaky. By paying extra attention to the most telling medical details, blending several complementary models, and double‑checking borderline cases against similar patients, UAFE reduces the chance of missing people who quietly harbor serious heart problems. At the same time, its built‑in uncertainty score can be used to flag cases that deserve closer human review rather than automatic trust. While the system still needs to be tested on larger and more varied patient groups, and extended to richer data like scans and heart rhythm traces, it represents a concrete step toward AI tools that clinicians can rely on—not just for answers, but for honest estimates of confidence.

Citation: Wang, X., Fan, Y., Yu, M. et al. Uncertainty-aware feature-weighted ensemble framework for heart disease prediction. Sci Rep 16, 13321 (2026). https://doi.org/10.1038/s41598-026-42419-w

Keywords: heart disease prediction, medical AI, ensemble learning, diagnostic uncertainty, clinical decision support