Clear Sky Science

3DViT-GAT: a unified atlas-based 3D vision transformer and graph learning framework for major depressive disorder detection using structural MRI data

Why brain scans may change how we spot depression

Major depressive disorder affects hundreds of millions of people, yet doctors still diagnose it mainly by talking with patients and observing behavior. That approach is essential, but it can be subjective and inconsistent. This study asks whether detailed brain scans, combined with modern artificial intelligence, can offer a more objective aid to diagnosis by teaching a computer to recognize subtle structural changes in the brain that are linked to depression.

Looking inside the brain with modern imaging

The researchers focus on structural MRI, a type of scan that reveals the anatomy of the brain in fine detail, down to tiny three‑dimensional units called voxels. Earlier computer methods often examined each voxel separately or relied on handcrafted summaries created by experts. While useful, these strategies may miss broader patterns that span multiple brain regions. The team instead aims to automatically learn those patterns across the whole brain, using a large public dataset that includes more than two thousand people with and without diagnosed depression, collected at many hospitals and research sites.

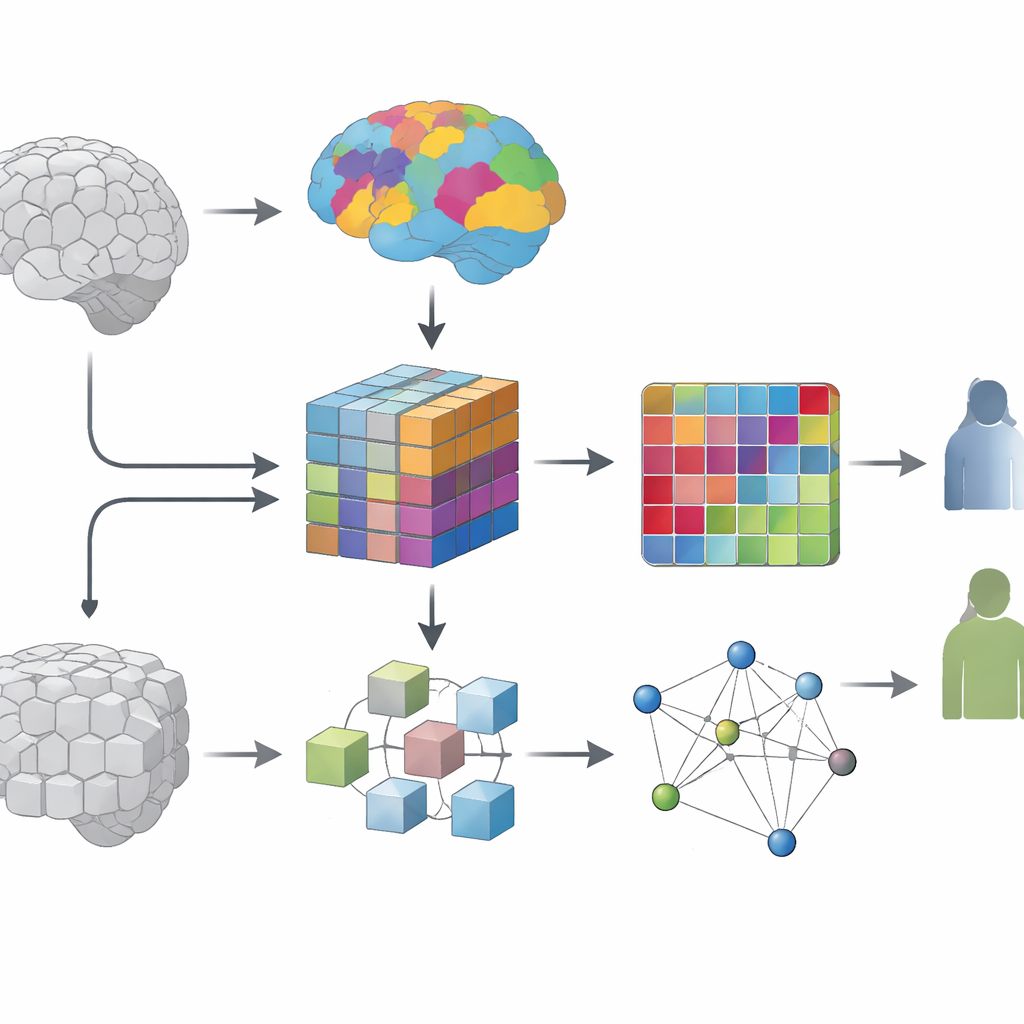

Teaching a model to see brain regions, not just pixels

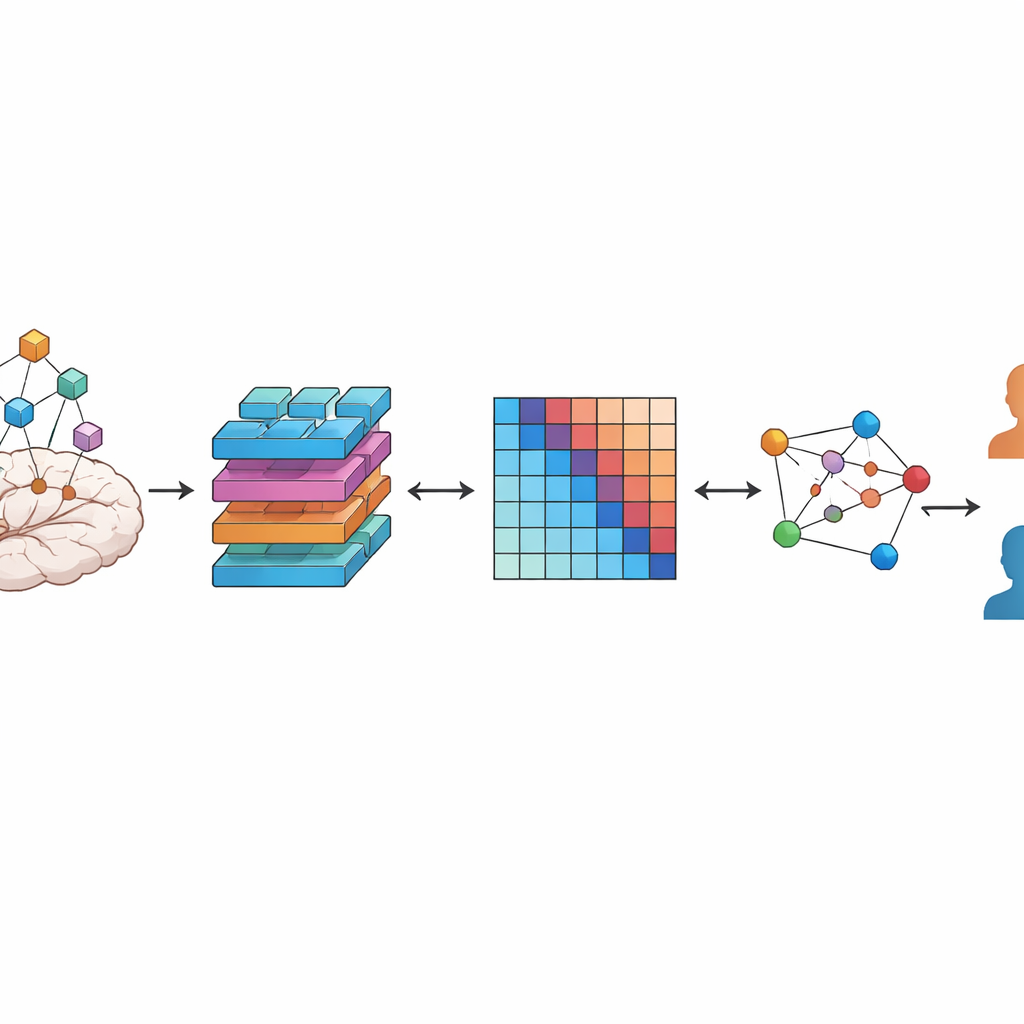

A key question in this work is how to break the brain into meaningful pieces for the computer to analyze. One option is to chop the scan into uniform cubes, like cutting bread into identical dice. Another is to follow existing brain maps, or atlases, that group voxels into regions known to share similar structure or function. The authors systematically compare these two strategies. In both cases, they use a type of deep‑learning model called a vision transformer, adapted to work on 3D data, to turn each region into a compact numerical fingerprint that captures both local detail and longer‑range context inside that region.
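
To make the contrast between the two strategies concrete, here is a minimal sketch in plain Python. It is not the authors' code: the function names, the tiny 4×4×4 "scan", and the made-up two-region atlas are all assumptions chosen for illustration. It only shows how the same voxels end up grouped differently, and it leaves out the vision transformer that would then turn each group into a fingerprint.

```python
from collections import defaultdict


def cube_patches(shape, side):
    """Group voxel coordinates into uniform cubes with edge length `side`."""
    nx, ny, nz = shape
    groups = defaultdict(list)
    for x in range(nx):
        for y in range(ny):
            for z in range(nz):
                # Integer division assigns each voxel to the cube that contains it.
                groups[(x // side, y // side, z // side)].append((x, y, z))
    return dict(groups)


def atlas_regions(atlas):
    """Group voxel coordinates by atlas label; label 0 is treated as background."""
    groups = defaultdict(list)
    for voxel, label in atlas.items():
        if label != 0:
            groups[label].append(voxel)
    return dict(groups)


def toy_atlas(shape):
    """Build a hypothetical atlas with two irregular regions (labels 1 and 2)."""
    nx, ny, nz = shape
    atlas = {}
    for x in range(nx):
        for y in range(ny):
            for z in range(nz):
                if x < 1:
                    label = 1  # a thin slab
                elif y < 2:
                    label = 2  # a larger block
                else:
                    label = 0  # background, ignored by atlas_regions
                atlas[(x, y, z)] = label
    return atlas


shape = (4, 4, 4)
cubes = cube_patches(shape, side=2)
regions = atlas_regions(toy_atlas(shape))

print("cubes:", len(cubes), "groups of equal size")
print("atlas:", {label: len(voxels) for label, voxels in regions.items()})
```

In this toy case the cubes are all the same size and ignore anatomy, while the atlas regions differ in size and shape because they follow the (here invented) region boundaries.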

Turning regions into a network of connections

Brains are not just collections of isolated areas; they form networks. To capture this, the team builds a personalized network for every participant. Each node in this network is one brain region, and the strength of the connection between two nodes reflects how similar their learned fingerprints are. The result is a graph that summarizes how a person’s brain regions relate to each other structurally. A second type of AI model, a graph attention network, then learns which regions and connections carry the most information for distinguishing depressed patients from healthy volunteers, effectively highlighting the most informative pathways.
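
The idea of linking regions by the similarity of their fingerprints can be sketched as follows. This is an illustration under stated assumptions, not the paper's implementation: cosine similarity is assumed as the similarity measure, the cutoff of 0.5 is arbitrary, and the three short "fingerprints" are invented. The graph attention network that would run on top of this graph is not shown.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def similarity_graph(fingerprints, threshold=0.5):
    """Return a symmetric weighted adjacency matrix over brain regions.

    An edge between regions i and j is kept only if their similarity
    reaches `threshold` (an assumed, illustrative cutoff).
    """
    n = len(fingerprints)
    adjacency = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            weight = cosine(fingerprints[i], fingerprints[j])
            if weight >= threshold:
                adjacency[i][j] = weight
                adjacency[j][i] = weight
    return adjacency


# Hypothetical fingerprints for three regions of one participant.
fingerprints = [
    [1.0, 0.0],  # region A
    [1.0, 0.1],  # region B, very similar to A
    [0.0, 1.0],  # region C, unlike A and B
]
adjacency = similarity_graph(fingerprints)
for row in adjacency:
    print([round(w, 3) for w in row])
```

Here regions A and B end up strongly connected, while region C stays isolated because its fingerprint points in a different direction. Each participant would get their own matrix of this kind.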

How well the system works and what it reveals

Across extensive tests using stratified 10‑fold cross‑validation, the combined transformer‑plus‑graph system reached an accuracy of about 81.5 percent, with high sensitivity (correctly identifying people with depression) and solid specificity (correctly identifying healthy controls). Importantly, versions of the model that used atlas‑defined brain regions consistently outperformed those based on uniform cubes. Because the two approaches had similar computational complexity, the gain is unlikely to come from extra computation. This suggests that building in existing anatomical knowledge, rather than treating the brain as a uniform block, helps the model find clearer, more robust signals linked to depression. Follow‑up analyses of the trained models pointed to fronto‑parietal, sensorimotor, occipital, cerebellar, and frontal‑insular regions, echoing prior studies that link these areas to mood, thought, and movement changes in depression.
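
For readers unfamiliar with these evaluation terms, the sketch below shows what stratified folds and the three reported metrics mean in practice. Everything in it is hypothetical: the labels and predictions are invented, 1 is assumed to mean "depression" and 0 "healthy control", and the fold assignment is a simplified, unshuffled version of what standard libraries do. It does not reproduce the study's numbers.

```python
def stratified_folds(labels, k):
    """Split sample indices into k folds with similar class proportions.

    Simplified sketch: indices of each class are dealt out round-robin,
    without the shuffling a real pipeline would normally apply.
    """
    by_class = {}
    for index, label in enumerate(labels):
        by_class.setdefault(label, []).append(index)

    folds = [[] for _ in range(k)]
    offset = 0
    for label in sorted(by_class):
        for position, index in enumerate(by_class[label]):
            folds[(position + offset) % k].append(index)
        # Continue dealing where the previous class stopped, so fold sizes stay even.
        offset += len(by_class[label])
    return folds


def diagnostic_metrics(y_true, y_pred):
    """Compute accuracy, sensitivity, and specificity for binary labels (1 = patient)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # patients correctly flagged
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # controls correctly cleared
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # controls wrongly flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # patients missed
    return {
        "accuracy": (tp + tn) / len(pairs) if pairs else float("nan"),
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        "specificity": tn / (tn + fp) if (tn + fp) else float("nan"),
    }


# Hypothetical cohort: 12 patients and 8 controls, split into 4 folds.
labels = [1] * 12 + [0] * 8
folds = stratified_folds(labels, k=4)
for fold in folds:
    print(sum(labels[i] for i in fold), "patients of", len(fold), "samples")

# Hypothetical predictions on one held-out set.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 1, 1]
metrics = diagnostic_metrics(y_true, y_pred)
print(metrics)
```

Stratifying keeps the patient-to-control ratio roughly the same in every fold, so no single test fold is dominated by one group. Sensitivity and specificity are reported alongside accuracy because accuracy alone can hide a model that does well on one group and poorly on the other.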

What this means for the future of diagnosis

To a non‑specialist, the takeaway is that the authors have created a brain‑scan analysis pipeline that does two things at once: it respects the known layout of the brain while letting powerful AI models discover new patterns in how regions relate to one another. Their results show that this atlas‑guided, network‑based view of the brain can detect depression from MRI scans more accurately than many earlier approaches. Although the method is not ready to replace clinical evaluation, it moves the field closer to objective imaging‑based tools that could one day support earlier and more reliable diagnosis, help track treatment effects, and deepen our understanding of how depression alters the brain’s structure and networks.

Citation: Alotaibi, N.M., Alhothali, A.M. & Ali, M.S. 3DViT-GAT: a unified atlas-based 3D vision transformer and graph learning framework for major depressive disorder detection using structural MRI data. Sci Rep 16, 11595 (2026). https://doi.org/10.1038/s41598-026-42108-8

Keywords: major depressive disorder, brain MRI, deep learning, vision transformers, graph neural networks