Clear Sky Science · en

A non-intrusive framework using acoustic signals and deep learning for boiling diagnostics in visual-limited environments

Listening to boiling to keep machines safe

In many advanced facilities, from medical isotope factories to particle accelerators, metal parts are cooled by water that boils at their surfaces. If this boiling suddenly becomes too intense, parts can overheat and fail. The places where this happens are often buried deep under water and behind thick shielding, which makes it hard or impossible to watch the boiling directly with cameras. This study shows how engineers can instead listen to the sounds that boiling makes and use artificial intelligence to turn those sounds into a real-time gauge of how close a system is to unsafe heating.

Why boiling is hard to watch

Boiling is not just a kitchen curiosity; it is a workhorse for removing huge amounts of heat from powerful machines. At the Isotope Production Facility in Los Alamos, for example, a proton beam bombards metal targets to make medical and research isotopes, and cool water rushes through narrow passages to carry away heat. Near the hot walls, small bubbles form, grow, and leave the surface in a process called subcooled flow boiling. If the heat load passes a critical limit, this gentle bubbling can flip into a crisis state where the surface is blanketed in vapor, cutting off cooling and threatening the hardware. Because the targets sit deep under water and inside radiation shielding, engineers cannot rely on normal visual tools to track bubble behavior or measure how close the system is to this critical state.

Turning sound into a window on boiling

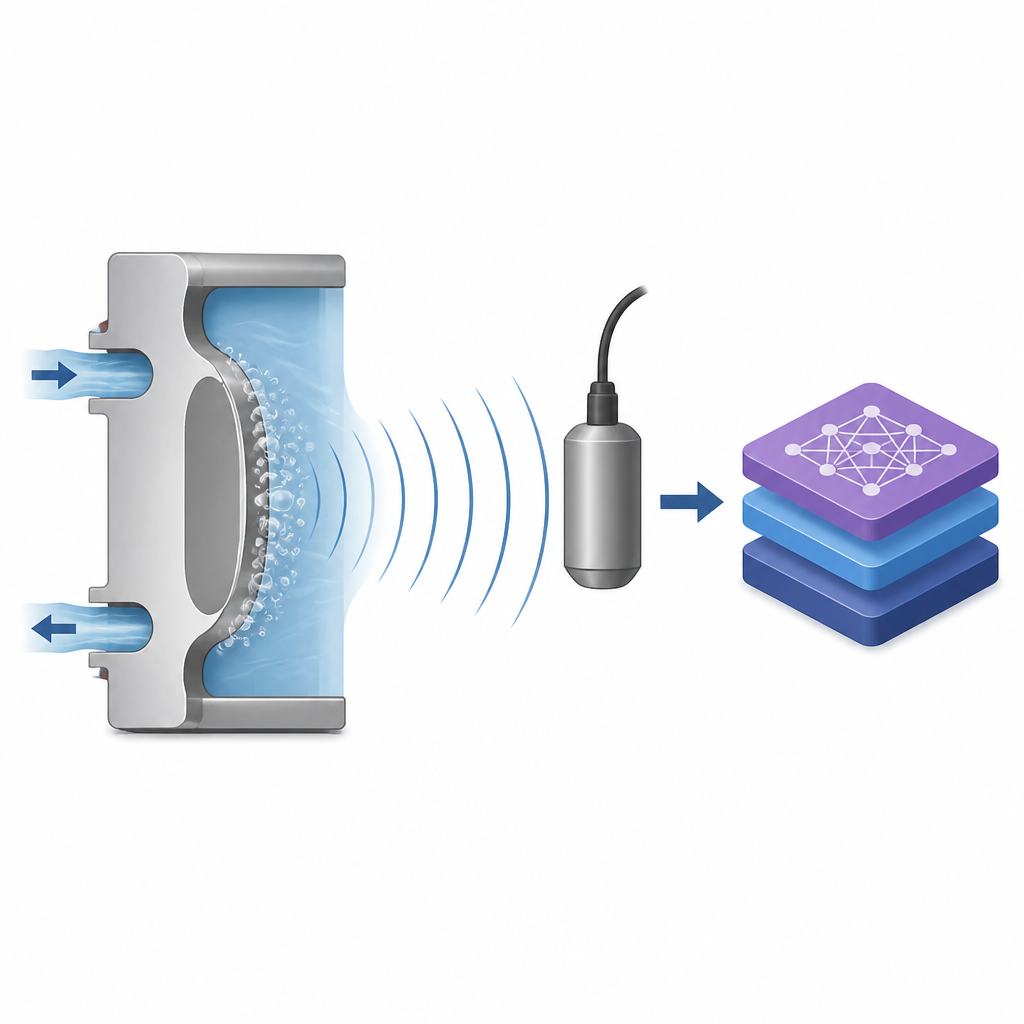

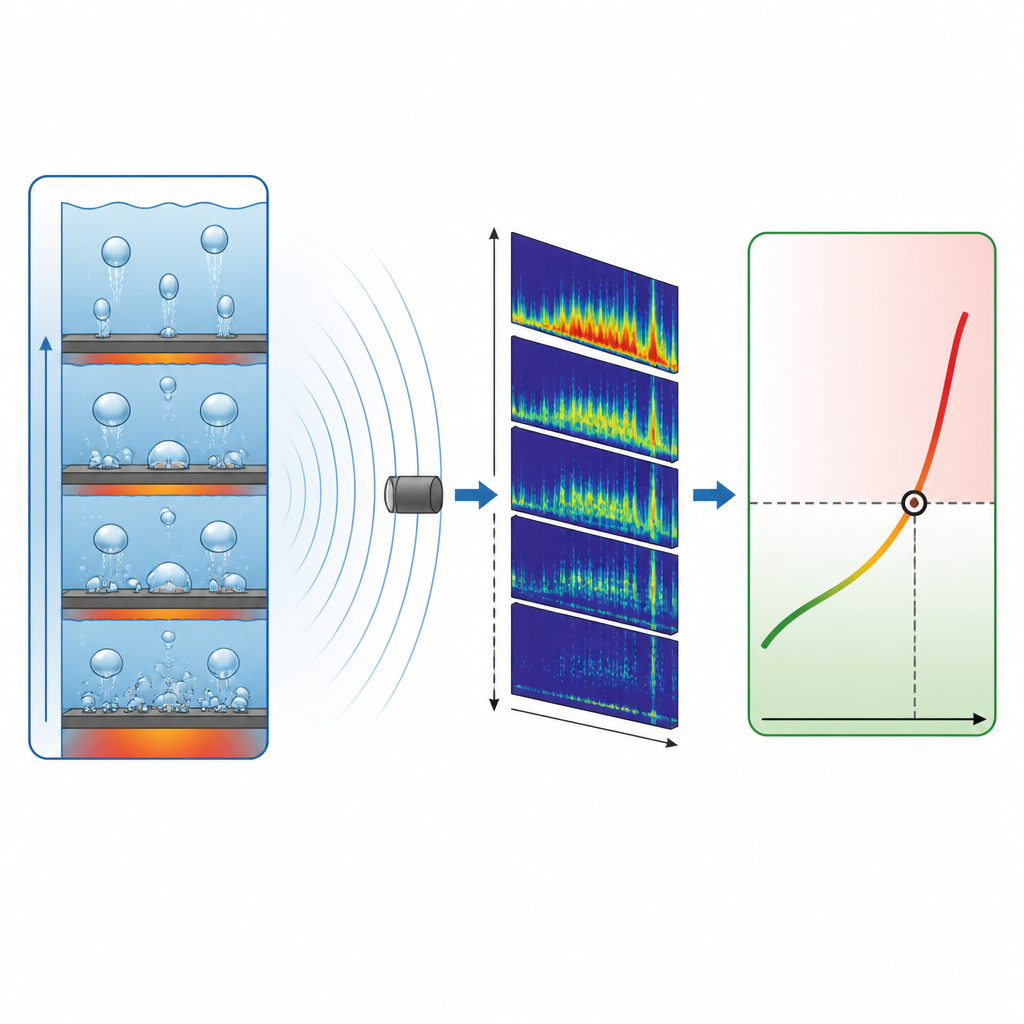

The authors built a test loop that mimics one of the narrow water passages in the real facility, with a heated nickel disk standing in for a beam window or target. They placed sensitive underwater microphones, called hydrophones, at various distances from the boiling region to capture the tiny pressure waves created as bubbles are born and collapse. At the same time, high-speed video and embedded thermometers recorded what was actually happening at the wall: heat flow, surface temperature, and bubble behavior such as size, frequency, and how many sites were active. By comparing the visual and temperature data with the sound recordings, the team created a rich training set that links specific patterns of boiling to specific sound signatures.

Teaching a neural network to read boiling sounds

To make sense of the complex audio, the researchers converted each sound signal into a picture-like form that shows how the strength of different frequencies changes over time. They then subtracted background noise measured when no boiling was present, leaving mainly the features tied to bubble activity. These images were fed into a convolutional neural network, a type of deep learning model that is very good at finding patterns in pictures. The network was trained to predict six key quantities directly from sound: the heat leaving the wall, how much hotter the wall is than the water, and several measures that describe bubble behavior at the surface. Once trained, the model successfully reproduced these values for new data, even when tested across a range of heat loads and under different flow conditions than it had seen before.
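The first two steps described above, turning a recording into a time-frequency image and removing the no-boiling background, can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' pipeline: the function names `log_spectrogram` and `subtract_background`, the frame length, the sampling rate, the choice to subtract in decibels and clip at zero, and the synthetic 1 kHz tone standing in for bubble noise are all hypothetical.

```python
import numpy as np

def log_spectrogram(signal, frame_len=256, hop=128):
    """Return a (frames, frequency bins) array of log-power values in dB."""
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    # Slice the recording into overlapping, windowed frames.
    frames = np.stack([signal[i * hop : i * hop + frame_len] * window
                       for i in range(n_frames)])
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    return 10.0 * np.log10(power + 1e-12)  # small offset avoids log(0)

def subtract_background(boiling_spec, background_spec):
    """Remove the time-averaged no-boiling spectrum from every frame.

    Assumption: subtraction is done in dB and negative values are clipped to 0.
    """
    baseline = background_spec.mean(axis=0, keepdims=True)
    return np.clip(boiling_spec - baseline, 0.0, None)

# Synthetic stand-in data (assumed, not measured): broadband noise for the
# quiet loop, and the same noise level plus a 1 kHz tone for "boiling".
fs = 8000                      # assumed sampling rate in Hz
t = np.arange(fs) / fs         # one second of samples
rng = np.random.default_rng(0)
background = 0.05 * rng.standard_normal(fs)
boiling = 0.05 * rng.standard_normal(fs) + 0.5 * np.sin(2 * np.pi * 1000 * t)

image = subtract_background(log_spectrogram(boiling), log_spectrogram(background))
peak_bin = int(image.mean(axis=0).argmax())
peak_hz = peak_bin * fs / 256  # convert bin index to frequency
```

The resulting `image` array is the kind of picture-like input a convolutional network could take. In a setup like the one described, the final layer of such a network would have six outputs, one per predicted quantity.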

Testing noise and linking to safety models

Real industrial sites are noisy, so the team checked how well their approach holds up when the sound is contaminated. They added artificial white noise to the recordings, gradually lowering the signal-to-noise ratio until the boiling sounds were almost buried. The neural network kept its accuracy down to the point where the sound and noise were about equal in strength, which corresponds to a signal-to-noise ratio of roughly 0 decibels. Next, the predicted bubble statistics were fed into a computational fluid-flow model that simulates how heat and vapor move in the channel, including the onset of the dangerous critical heat flux point. When driven by sound-based predictions instead of camera-based measurements, the model produced nearly the same boiling curves and critical limits, staying within the uncertainty bands of prior visual benchmarks.
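A noise test of this kind can be sketched as follows. This is a minimal illustration, not the authors' code: the helper names `add_white_noise` and `measured_snr_db`, the Gaussian noise model, and the 50 Hz sine used as a clean signal are all assumptions. The idea is to scale the noise so that the ratio of signal power to noise power matches a chosen value in decibels.

```python
import math
import random

def mean_power(samples):
    """Average squared amplitude of a sequence of samples."""
    return sum(x * x for x in samples) / len(samples)

def add_white_noise(signal, snr_db, seed=0):
    """Return signal plus Gaussian white noise scaled to the requested SNR."""
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, 1.0) for _ in signal]
    # SNR in dB is 10 * log10(P_signal / P_noise), so solve for P_noise.
    target_noise_power = mean_power(signal) / (10 ** (snr_db / 10))
    scale = math.sqrt(target_noise_power / mean_power(noise))
    return [s + scale * n for s, n in zip(signal, noise)]

def measured_snr_db(clean, noisy):
    """Recover the SNR from a clean signal and its contaminated copy."""
    residual = [n - c for c, n in zip(clean, noisy)]
    return 10 * math.log10(mean_power(clean) / mean_power(residual))

# Assumed stand-in for a recording: a 50 Hz sine sampled at 1 kHz.
clean = [math.sin(2 * math.pi * 50 * i / 1000) for i in range(1000)]

# Sweep from a clean recording (20 dB) down to equal signal and noise (0 dB).
sweep = {snr: add_white_noise(clean, snr) for snr in (20, 10, 0)}
```

At 0 dB the added noise carries the same average power as the signal, which is the "about equal in strength" condition described above. Each entry in `sweep` could then be converted to a spectrogram and passed to the trained model to see where accuracy starts to fall off.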

How this helps future high-power systems

The study demonstrates that listening to boiling can stand in for seeing it, at least within the tested conditions. By combining hydrophones with deep learning, engineers can estimate how hard a surface is being pushed, how bubbles behave near the wall, and how close the system is to a boiling crisis, all without placing fragile cameras or sensors in harsh radiation zones. The method remains reliable under moderate changes in water temperature, flow rate, and hydrophone placement, and keeps working when background noise is about as strong as the boiling sound itself. With further refinement and broader testing, this sound-based approach could become a practical tool for real-time, non-intrusive safety monitoring in reactors, accelerators, and other high-power devices where direct visual access is limited.

Citation: Huang, PH., Seong, J.H., Castro-Aguilar, J.M. et al. A non-intrusive framework using acoustic signals and deep learning for boiling diagnostics in visual-limited environments. Sci Rep 16, 14934 (2026). https://doi.org/10.1038/s41598-026-41757-z

Keywords: boiling acoustics, deep learning, critical heat flux, subcooled flow boiling, thermal safety