Clear Sky Science · en

U-Trans: a foundation model for seismic waveform representation and enhanced downstream earthquake tasks

Why smarter quake listening matters

Earthquakes can strike without warning, but the vibrations they send through the Earth carry a wealth of information. Turning those shaking signals into fast, reliable answers (Where did it happen? How big was it? Which fault moved?) is the job of modern earthquake monitoring systems. This study introduces a new way to "listen" to seismic waves: a general-purpose AI model designed to improve many different earthquake tasks at once and to work well even when labeled data are scarce.

A new common brain for quake data

Most existing AI tools for earthquakes are specialists: one network picks the arrival of key waves, another estimates magnitude, a third finds the location, and so on. They are often trained on a single region and struggle when moved elsewhere. The authors propose a different strategy inspired by foundation models in language and vision: build one large model, called U-Trans, that learns a rich internal representation of seismic waveforms from millions of examples, then share that representation with many downstream tools. Instead of replacing existing models, U-Trans acts as a common "feature engine" that feeds them extra, informative signals.

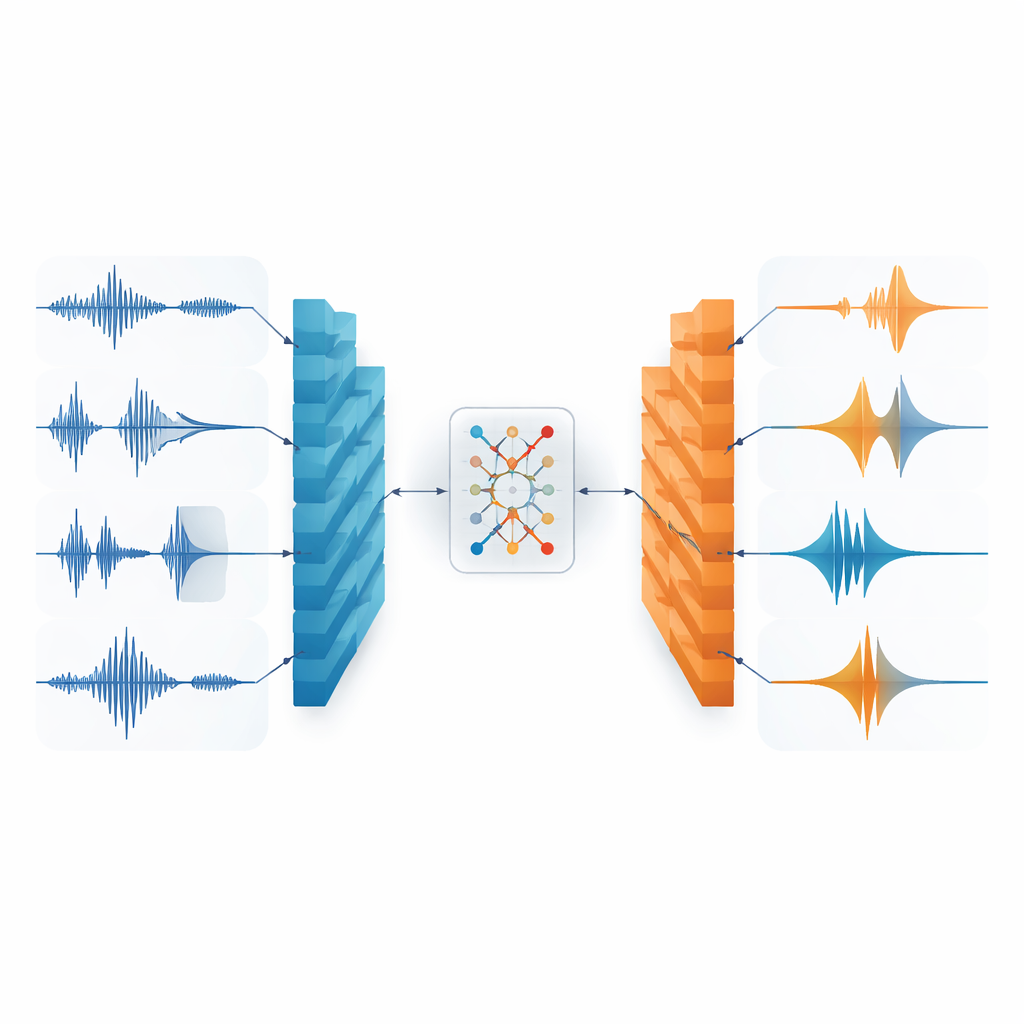

Teaching the model by hiding pieces

To train U-Trans, the researchers do not need human labels like event time or magnitude. Instead, they use a self-supervised task: take real three-component seismograms from several global datasets, deliberately remove up to about a third of their content in both time and frequency, and ask the network to reconstruct what is missing. Architecturally, U-Trans combines a U-shaped encoder–decoder, which captures fine local wiggles in the traces, with a compact transformer module in the middle that learns long-range relationships across the waveform. Learning to "fill in the blanks" forces the model to internalize the underlying physics of P- and S-waves and to distinguish meaningful signals from noise.
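The "hide and reconstruct" idea can be illustrated with a small sketch. It is not the authors' code: the function names (`mask_time`, `mask_frequency`, `make_training_pair`), the single contiguous window per domain, and the toy sine-wave "seismogram" are all assumptions made for illustration, and a plain discrete Fourier transform from the standard library stands in for whatever time-frequency transform the study actually uses.

```python
import cmath
import math
import random


def mask_time(trace, max_fraction=1 / 3, rng=random):
    """Zero one contiguous window covering at most max_fraction of the samples."""
    n = len(trace)
    width = rng.randint(1, max(1, int(n * max_fraction)))
    start = rng.randint(0, n - width)
    masked = list(trace)
    masked[start:start + width] = [0.0] * width
    return masked


def dft(x):
    """Naive discrete Fourier transform (fine for short toy traces)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]


def mask_frequency(trace, max_fraction=1 / 3, rng=random):
    """Zero one contiguous band of at most max_fraction of the positive-frequency bins.

    Assumes the trace has at least 8 samples.
    """
    n = len(trace)
    spectrum = dft(trace)
    half = n // 2
    width = rng.randint(1, max(1, int(half * max_fraction)))
    start = rng.randint(1, half - width)
    for k in range(start, start + width):
        spectrum[k] = 0.0
        spectrum[n - k] = 0.0  # mirror bin, so the inverse transform stays real
    # Inverse DFT back to the time domain, keeping the real part.
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n)
                for k in range(n)).real / n
            for t in range(n)]


def make_training_pair(seismogram, rng=random):
    """Return (corrupted, target) for a three-component seismogram.

    The network would see `corrupted` and be asked to output `target`.
    """
    corrupted = [mask_frequency(mask_time(c, rng=rng), rng=rng) for c in seismogram]
    return corrupted, seismogram


# Toy three-component record: three sine waves, 64 samples each (illustrative only).
rng = random.Random(0)
n = 64
seismogram = [[math.sin(2 * math.pi * f * t / n) for t in range(n)] for f in (3, 5, 8)]
corrupted, target = make_training_pair(seismogram, rng)
```

No labels appear anywhere in this loop: the original waveform is its own training target, which is why this kind of training can draw on very large unlabeled archives.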

Hidden patterns that track key wave arrivals

After training on roughly 2.5 million seismograms, U-Trans can faithfully rebuild corrupted waveforms, showing that it has captured the essential structure of the data. When the authors inspect the latent features (essentially the compressed picture the model forms of each waveform), they find that these features light up around the arrival times of P- and S-waves, the main wave types used in earthquake monitoring. For records that contain only noise and no real event, the latent patterns are diffuse and unstructured. A separate visualization technique shows that the model’s internal representations naturally cluster earthquake signals away from noise, even though it was never explicitly told which was which.

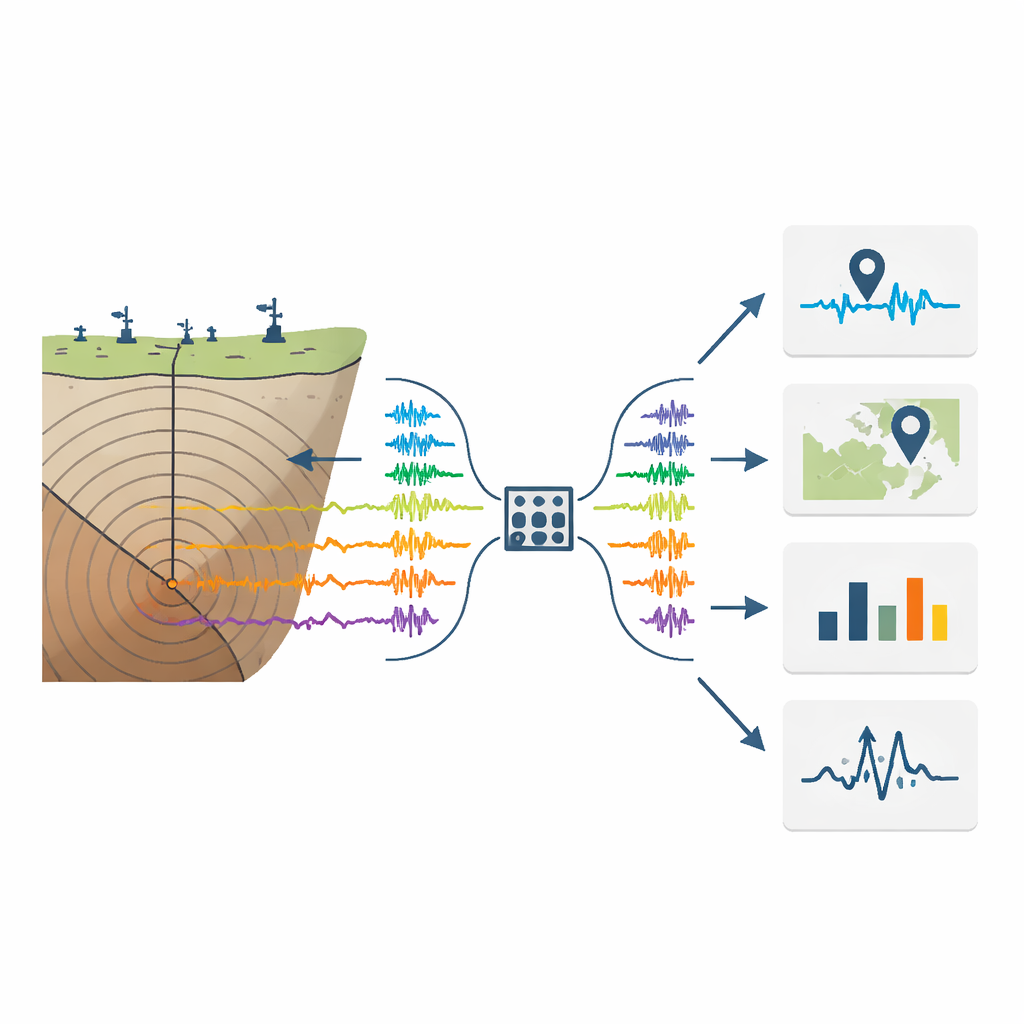

Boosting many earthquake tasks at once

To test whether these learned features are actually useful, the authors plug U-Trans into several established deep-learning tools: one for picking P- and S-wave arrivals, one for locating events from single-station data, one for estimating magnitude, and one for classifying polarity, that is, whether the first motion of a P-wave is upward or downward. For each task, they add U-Trans’s latent features as a fourth input channel alongside the raw three-component seismogram and fine-tune the combined system. Across datasets from California, Texas, Italy, and Japan, including regions not used in the original training, this simple addition consistently reduces errors. Picks of wave arrival times become sharper, distances and depths are estimated more accurately, magnitude predictions align better with catalog values, and polarity classification improves, even when only a small fraction of labeled data is available.
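The "fourth input channel" step amounts to stacking one extra trace onto the three seismogram components before they enter a downstream model. The sketch below shows only that stacking. The helper names (`resample_linear`, `add_latent_channel`) are hypothetical, and the linear interpolation used to stretch the latent trace to the waveform length is an assumption, since this summary does not say how the two are aligned.

```python
def resample_linear(values, n_out):
    """Linearly interpolate a 1-D sequence to n_out samples."""
    n_in = len(values)
    if n_in == n_out:
        return list(values)
    if n_in == 1 or n_out == 1:
        return [values[0]] * n_out
    out = []
    for i in range(n_out):
        pos = i * (n_in - 1) / (n_out - 1)  # fractional index into `values`
        lo = int(pos)
        hi = min(lo + 1, n_in - 1)
        frac = pos - lo
        out.append(values[lo] * (1 - frac) + values[hi] * frac)
    return out


def add_latent_channel(seismogram, latent):
    """Stack a latent-feature trace onto a three-component seismogram.

    seismogram: three equal-length lists of samples (one per component).
    latent:     a 1-D latent trace, possibly shorter than the waveform.
    Returns four equal-length channels, ready for a downstream model.
    """
    if len(seismogram) != 3:
        raise ValueError("expected exactly three components")
    n = len(seismogram[0])
    if any(len(component) != n for component in seismogram):
        raise ValueError("all components must have the same length")
    fourth = resample_linear(latent, n)
    return [list(component) for component in seismogram] + [fourth]


# Toy example: 4-sample components and a 2-sample latent trace (illustrative only).
three_channels = [[0.0, 1.0, 0.0, -1.0], [0.5, 0.5, 0.5, 0.5], [1.0, 0.0, -1.0, 0.0]]
four_channels = add_latent_channel(three_channels, [0.0, 1.0])
```

Because the downstream model only gains one extra input channel, its architecture can otherwise stay unchanged, which fits the article's description of U-Trans as an add-on rather than a replacement.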

What this means for future quake monitoring

The study shows that a single, self-supervised foundation model can learn a general "language" of seismic shaking that benefits many different monitoring tasks. By focusing on reconstructing partially hidden waveforms, U-Trans naturally emphasizes the wave arrivals that seismologists care about most, then passes that distilled information to downstream models. In practical terms, this approach promises more accurate and robust earthquake catalogs, better performance in regions with limited training data, and a flexible framework that can be extended as new tasks arise. For the public, it is a step toward faster, more reliable assessments of when, where, and how strongly the Earth has just moved.

Citation: Saad, O.M., Chen, Y. & Alkhalifah, T. U-Trans: a foundation model for seismic waveform representation and enhanced downstream earthquake tasks. Sci Rep 16, 12657 (2026). https://doi.org/10.1038/s41598-026-41454-x

Keywords: earthquake monitoring, seismic waveforms, deep learning, foundation models, self-supervised learning