Clear Sky Science

AI-driven multimodal imaging fusion using swin transformer and optimized tensor fusion networks for pneumonia detection

Why smarter pneumonia checks matter

Pneumonia can turn a simple cough into a life‑threatening emergency, especially for children, older adults, and people with weak immune systems. Doctors usually spot it by examining chest X‑rays or CT scans, but reading thousands of such images a year is demanding and sometimes uncertain, particularly in crowded or under‑resourced hospitals. This paper presents a new artificial intelligence (AI) system that looks at lung images from multiple sources at once, explains what it is seeing, and even estimates how risky a patient’s condition might be—aiming to support faster, more reliable care rather than replace doctors.

Bringing different lung images together

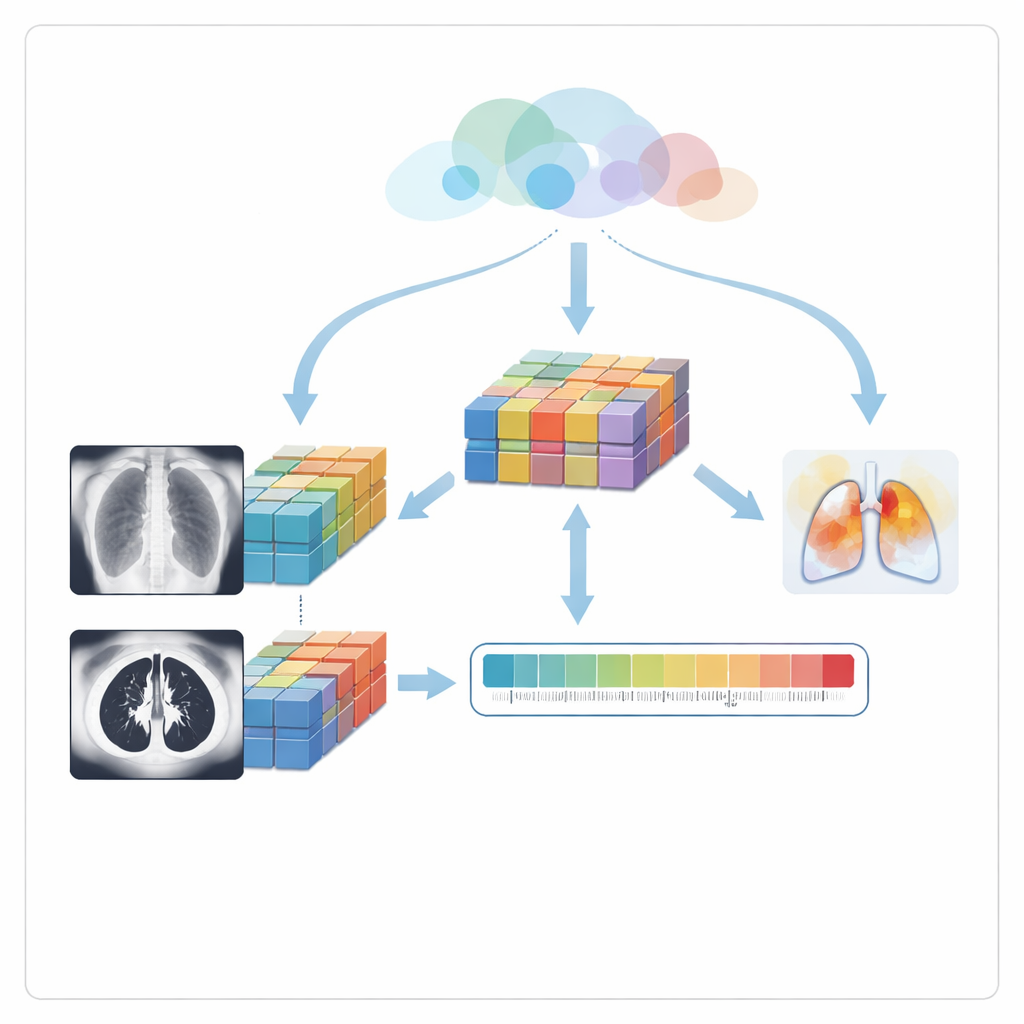

The authors focus on two common scan types: chest X‑rays, which are cheap and widely available, and CT scans, which provide more detailed cross‑sections of the lungs. Instead of treating these as separate worlds, the system learns from both. First, a specialized image‑processing step cleans each picture, removing noise and boosting subtle bright spots and hazy regions that often signal early pneumonia. This makes faint disease patterns more visible to the AI and, indirectly, to clinicians who later review the system’s explanations.

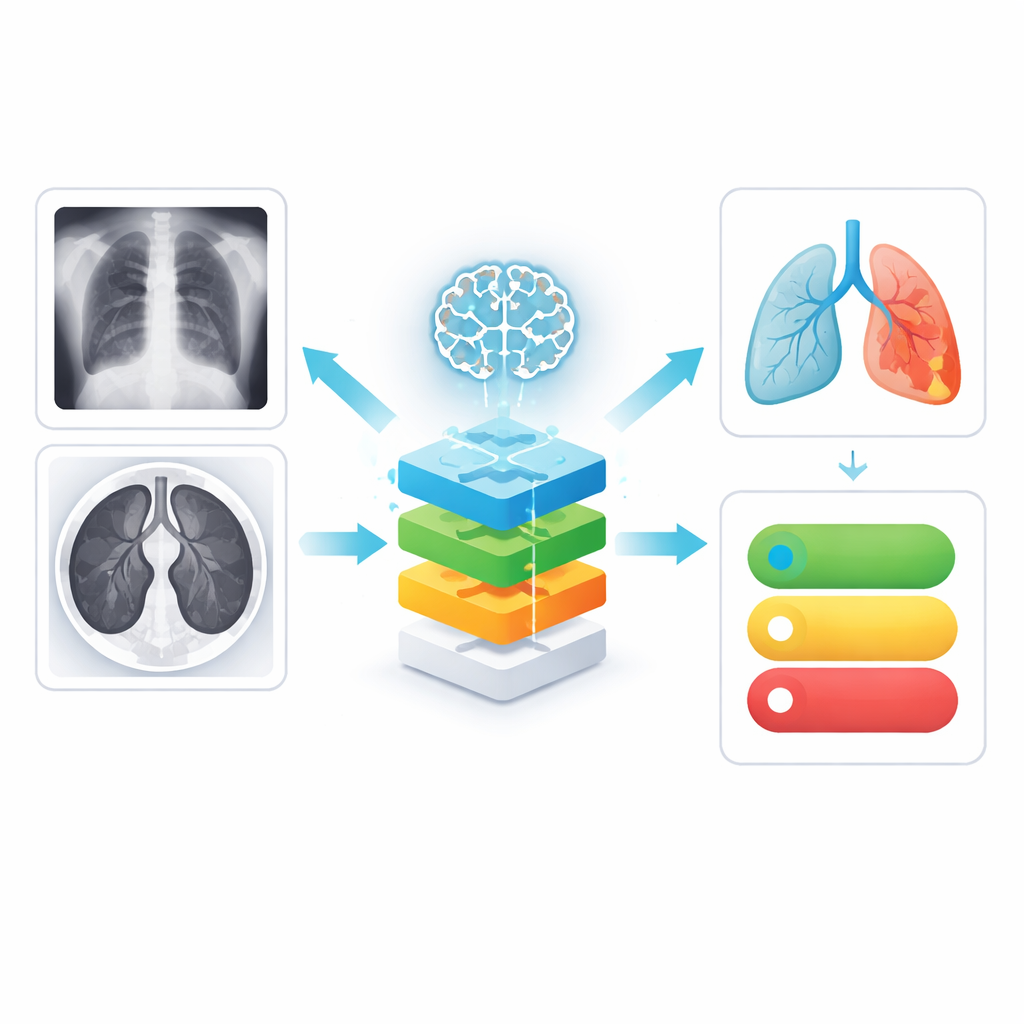

How the AI learns patterns of disease

After cleaning, each image is passed to a modern vision model called a Swin Transformer. Unlike traditional convolutional methods that scan an image with fixed filters, this model divides the picture into many small windows, shifts those windows from one layer to the next so that neighboring regions can exchange information, and gradually builds a layered understanding of shapes and textures, from fine lung details to broader patterns across the chest. Separate copies of this model analyze X‑rays and CT scans, producing rich summaries of each image that capture both local blemishes and global structure, such as patchy opacities or fluid‑filled areas that tend to accompany pneumonia.
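The windowing idea can be illustrated with a toy example. In the published Swin Transformer design, each layer splits the feature map into non-overlapping windows, and alternate layers shift the window grid so that information can cross window borders. The sketch below shows only that partition-and-shift step on a tiny grid of numbers; the function names, the 4×4 grid, and the window size of 2 are illustrative assumptions, not the configuration used in the paper.

```python
def cyclic_shift(grid, shift):
    """Roll a 2-D list up and left by `shift` cells, wrapping around the edges."""
    h, w = len(grid), len(grid[0])
    return [[grid[(r + shift) % h][(c + shift) % w] for c in range(w)]
            for r in range(h)]


def partition_windows(grid, window):
    """Split a 2-D list into non-overlapping window x window tiles (row-major)."""
    h, w = len(grid), len(grid[0])
    if h % window or w % window:
        raise ValueError("grid size must be a multiple of the window size")
    tiles = []
    for top in range(0, h, window):
        for left in range(0, w, window):
            tiles.append([row[left:left + window]
                          for row in grid[top:top + window]])
    return tiles


# Toy 4x4 "feature map" whose cells are numbered 0..15 (hypothetical data).
grid = [[r * 4 + c for c in range(4)] for r in range(4)]

# Regular layer: four separate 2x2 windows.
regular = partition_windows(grid, 2)

# Shifted layer: roll the grid by one cell first, then partition again.
shifted = partition_windows(cyclic_shift(grid, 1), 2)

print(regular[0])  # cells that share the first regular window
print(shifted[0])  # cells that share the first shifted window
```

After the shift, the first window groups cells 5, 6, 9, and 10, which sat in four different windows in the regular layer. Alternating the two layouts is what lets local windows add up to a picture of the whole chest.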

Combining views and handling uncertainty

The next challenge is to merge what the AI has learned from the two imaging types. Instead of simply averaging scores, the system uses a tensor fusion network that mathematically pairs every feature from X‑rays with every feature from CT scans, capturing how patterns in one view reinforce or contradict patterns in the other. Because this can create an overwhelming number of combinations, an optimization method inspired by zebra herd movement trims away redundant or unhelpful links, keeping only the most informative ones. This fused representation is then sent into a Bayesian neural network, which not only predicts whether pneumonia is present but also estimates how confident it is. Repeating the prediction several times with slight internal variations lets the model measure its own uncertainty—a crucial clue for doctors deciding when to trust the output or look more closely.
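A minimal sketch of these two ideas, under stated assumptions, might look like the following. The fusion step takes the outer product of the two feature vectors, each extended with a constant 1 (a common tensor fusion convention, assumed here rather than confirmed by the paper) so that single-modality features survive next to the pairwise products. The uncertainty step uses a toy classifier whose weights are randomly perturbed on every pass, as a crude stand-in for a Bayesian neural network. The feature values, weights, noise level, and function names are all hypothetical, and the zebra-inspired pruning step is omitted.

```python
import math
import random


def tensor_fusion(xray_feats, ct_feats):
    """Pair every X-ray feature with every CT feature via an outer product.

    Each vector gets a leading 1.0 (an assumption of this sketch) so the
    result also contains the original single-modality features.
    """
    x = [1.0] + list(xray_feats)
    c = [1.0] + list(ct_feats)
    return [xi * cj for xi in x for cj in c]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def mc_predict(fused, weights, n_samples=200, noise=0.1, seed=0):
    """Repeat a noisy prediction; return the mean probability and its spread."""
    rng = random.Random(seed)
    probs = []
    for _ in range(n_samples):
        # Perturb each weight slightly: a toy substitute for sampling
        # weights from a learned posterior distribution.
        z = sum(f * (w + rng.gauss(0.0, noise))
                for f, w in zip(fused, weights))
        probs.append(sigmoid(z))
    mean = sum(probs) / len(probs)
    variance = sum((p - mean) ** 2 for p in probs) / len(probs)
    return mean, math.sqrt(variance)


# Hypothetical feature summaries: 2 X-ray features and 3 CT features.
fused = tensor_fusion([0.8, 0.1], [0.5, 0.9, 0.3])
weights = [0.2] * len(fused)  # placeholder weights, not trained values

mean_prob, spread = mc_predict(fused, weights)
print(len(fused), mean_prob, spread)
```

The fused vector has (2 + 1) × (3 + 1) = 12 entries, which shows how quickly the number of combinations grows and why a pruning step is useful for realistic feature sizes. A large spread across the repeated predictions signals a case that deserves a closer look.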

Showing doctors where the model is looking

To avoid a “black box” diagnosis, the system uses a technique called Grad‑CAM to highlight regions of each scan that most influenced its decision. These highlights appear as color overlays on X‑ray and CT images, typically lighting up cloudy or consolidated lung areas known to radiologists. The authors then go one step further: they measure how well these highlighted regions overlap with the actual lung area, turning this into a visual consistency score. Finally, a risk module combines three ingredients—the predicted chance of pneumonia, the model’s uncertainty, and this visual consistency—into a single risk score ranging from low to high. When the score passes a preset threshold, the system is designed to trigger early alerts so that high‑risk patients can be prioritized.
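The scoring logic described above can be sketched as follows. This is an illustration only: the summary does not give the exact formula, so the heatmap threshold, the weights, the choice to let higher uncertainty raise the score, and the alert threshold of 0.7 are all assumptions, as are the function names and the toy data.

```python
def visual_consistency(heatmap, lung_mask, threshold=0.5):
    """Fraction of strongly highlighted pixels that fall inside the lung mask."""
    hot = 0
    inside = 0
    for heat_row, mask_row in zip(heatmap, lung_mask):
        for heat, in_lung in zip(heat_row, mask_row):
            if heat >= threshold:
                hot += 1
                if in_lung:
                    inside += 1
    return inside / hot if hot else 0.0


def risk_score(prob, uncertainty, consistency, weights=(0.6, 0.2, 0.2)):
    """Weighted blend of the three ingredients, clipped to the range [0, 1].

    The weights are hypothetical; so is the decision to let uncertainty
    push the score up so that doubtful cases are reviewed sooner.
    """
    w_prob, w_unc, w_cons = weights
    score = w_prob * prob + w_unc * uncertainty + w_cons * consistency
    return min(1.0, max(0.0, score))


# Toy 3x3 heatmap (values in [0, 1]) and lung mask (1 = inside the lung).
heatmap = [
    [0.9, 0.2, 0.1],
    [0.7, 0.8, 0.1],
    [0.0, 0.6, 0.3],
]
lung_mask = [
    [1, 1, 0],
    [1, 1, 0],
    [0, 0, 0],
]

consistency = visual_consistency(heatmap, lung_mask)
score = risk_score(prob=0.9, uncertainty=0.1, consistency=consistency)
alert = score >= 0.7  # hypothetical alert threshold

print(consistency, score, alert)
```

In this toy case three of the four highlighted pixels lie inside the lung, so the consistency is 0.75 and the blended score crosses the assumed alert threshold. A real system would need these weights and thresholds tuned and validated on clinical data.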

What the results mean for patients

Tested on public X‑ray and CT datasets, the framework outperformed several widely used deep‑learning models, achieving high accuracy while also providing uncertainty estimates and clear visual cues. Although the data did not include matched scans from the same patients and came from limited sources, the work shows that a carefully designed multimodal AI can do more than simply label images: it can fuse different views of the lungs, say how sure it is, and show exactly where it sees trouble. For patients, such systems could translate into quicker diagnoses, better triage in crowded hospitals, and more targeted follow‑up, especially in regions where expert radiologists are scarce.

Citation: Sikindar, S., Raghavendran, C.V. & Madhavi, G. AI-driven multimodal imaging fusion using swin transformer and optimized tensor fusion networks for pneumonia detection. Sci Rep 16, 12611 (2026). https://doi.org/10.1038/s41598-026-41427-0

Keywords: pneumonia detection, medical imaging AI, chest X-ray, CT scan, risk assessment