Clear Sky Science · en

Automatic text readability assessment for educational content based on graph representation learning

Why It Matters for Teachers and Learners

When teachers choose a reading passage, they face a delicate balancing act: the text must be challenging enough to promote growth, but not so difficult that students give up. This paper introduces a new artificial intelligence method that can estimate how hard a passage is to read, especially for educational materials. By looking beyond simple counts of words and sentences to the deeper structure of language, the system aims to help match the right text to the right reader more accurately than traditional readability formulas.

Limits of Old-Fashioned Readability Scores

For decades, schools have relied on formulas like Flesch–Kincaid that use surface clues—such as sentence length and syllable counts—to judge difficulty. These methods are easy to compute but blind to many aspects of real-world complexity. A short science paragraph packed with technical terms or a sentence with a twisted structure might still be labeled “easy” because its words are short and its sentences are brief. As a result, teachers may unintentionally assign material that is too dense for some students or overly simple for others, especially in content-rich subjects like science and social studies.

Looking Inside the Sentence

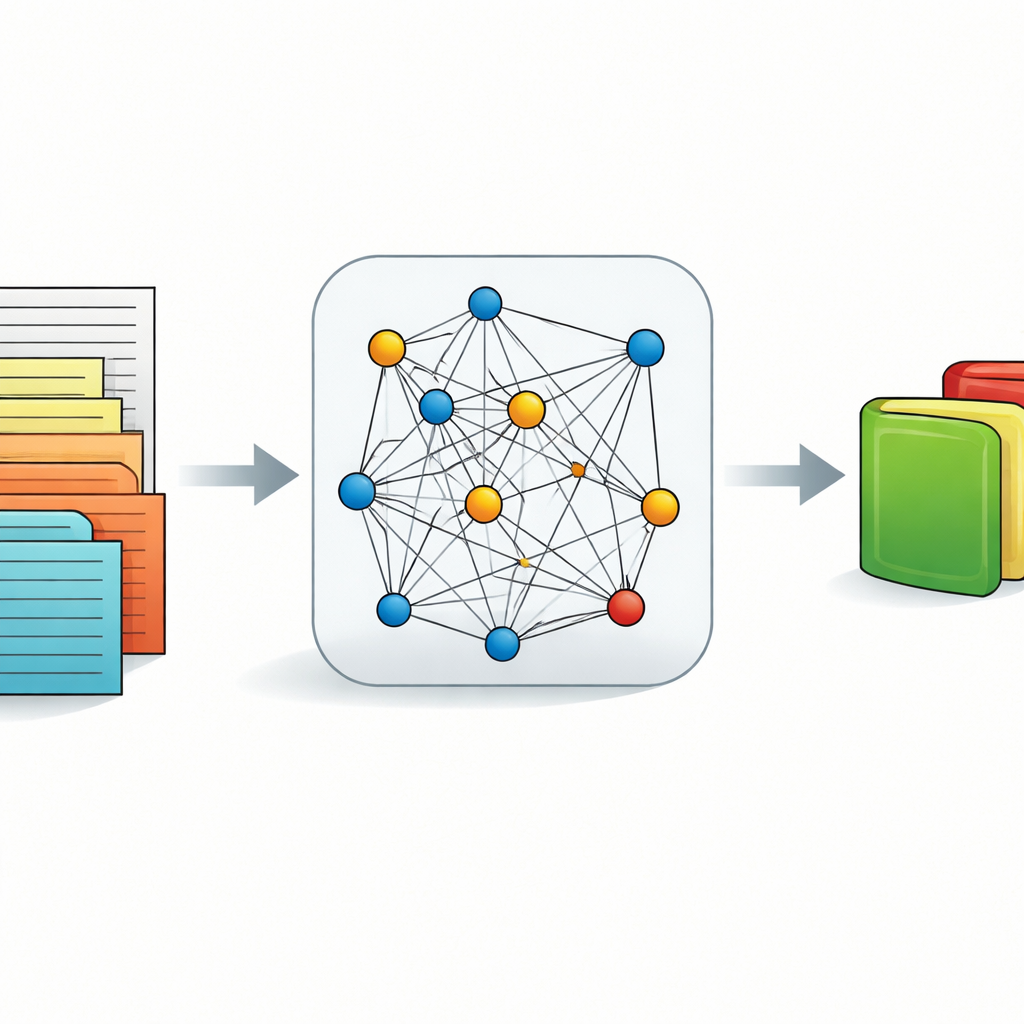

The authors propose a different approach that treats each sentence like a network. Every word becomes a point (or node), and the grammatical links between words—such as subject–verb or verb–object—become connections (edges). Crucially, the strength of each connection depends not only on distance in the sentence but also on what kinds of words sit in between. A long stretch filled with content words such as nouns, verbs, and adjectives suggests a mentally demanding leap; a shorter path or one filled mostly with small function words suggests an easier step. Psycholinguistic research shows that these long, content-heavy detours strain working memory and slow understanding, so the model uses them as signals of higher difficulty.

Teaching a Network to Read the Network

To make use of this sentence-as-network idea, the study employs a type of neural network designed for graphs, called a Graph Convolutional Network. Before the graph model runs, another AI engine (similar to widely used systems like BERT) creates a rich numerical representation of each word that reflects its meaning in context. The graph network then passes information along the connections between words, blending meaning and structure to form a single summary representation of the entire passage. This summary is fed into a final layer that outputs a continuous readability score rather than a simple grade band, allowing for finer distinctions between texts.

To squeeze the best performance from the system, the authors use Bayesian optimization, a strategy that automatically searches for good settings of many “knobs” at once. These include how strongly different parts of speech should influence connection strength, how many graph layers to use, and how fast the model should learn. Instead of hand-tuning these choices, the optimization procedure systematically tests and refines them based on validation results.

How Well It Works in Practice

The model is tested on the CLEAR dataset, a large collection of roughly 5,000 short passages with expert-assigned readability scores and movie-style content ratings (G, PG, PG-13, and R). Using a rigorous cross-validation scheme, the system explains about 97% of the variation in these scores, a level of accuracy that surpasses both classic feature-based methods and strong modern baselines built on transformer models alone. The method also performs well when applied to a Persian dataset originally built for classifying texts into easy, medium, and hard levels: passages within the same difficulty group tend to receive similar predicted scores, suggesting that what the model learns about structure in English carries over to another language.

What This Means for Classrooms

For educators and curriculum designers, the main takeaway is that readability is about more than long words and long sentences. The way information is threaded through a sentence—the number of detours and the kinds of words that fill them—plays a major role in how easily students can follow along. By modeling texts as networks of connected words and using graph-based AI to read those networks, this study offers a more precise, flexible tool for estimating reading difficulty. While it does not replace human judgment or account for every nuance of literature and social science prose, it can serve as a powerful decision aid, helping teachers select and adapt texts that better fit their students’ skills and support more inclusive learning.

Citation: Zhang, L., Abhani, J., B, J. et al. Automatic text readability assessment for educational content based on graph representation learning. Sci Rep 16, 11308 (2026). https://doi.org/10.1038/s41598-026-41313-9

Keywords: readability assessment, educational texts, graph neural networks, natural language processing, text difficulty