Clear Sky Science

Federated learning with continual update for privacy-preserving clinical event prediction across distributed hospitals using MCN-GNN

Why this matters for your health

Modern hospitals collect vast amounts of digital health records, but strict privacy rules and trust issues make it hard for them to pool data and learn from it together. This paper presents a way for many hospitals to jointly train powerful prediction tools for conditions like heart failure, stroke, liver disease, kidney disease, and diabetes—without ever sharing raw patient data. It also tackles a hidden problem in many artificial intelligence systems: they tend to “forget” old knowledge when new information arrives.

Many hospitals, one shared brain

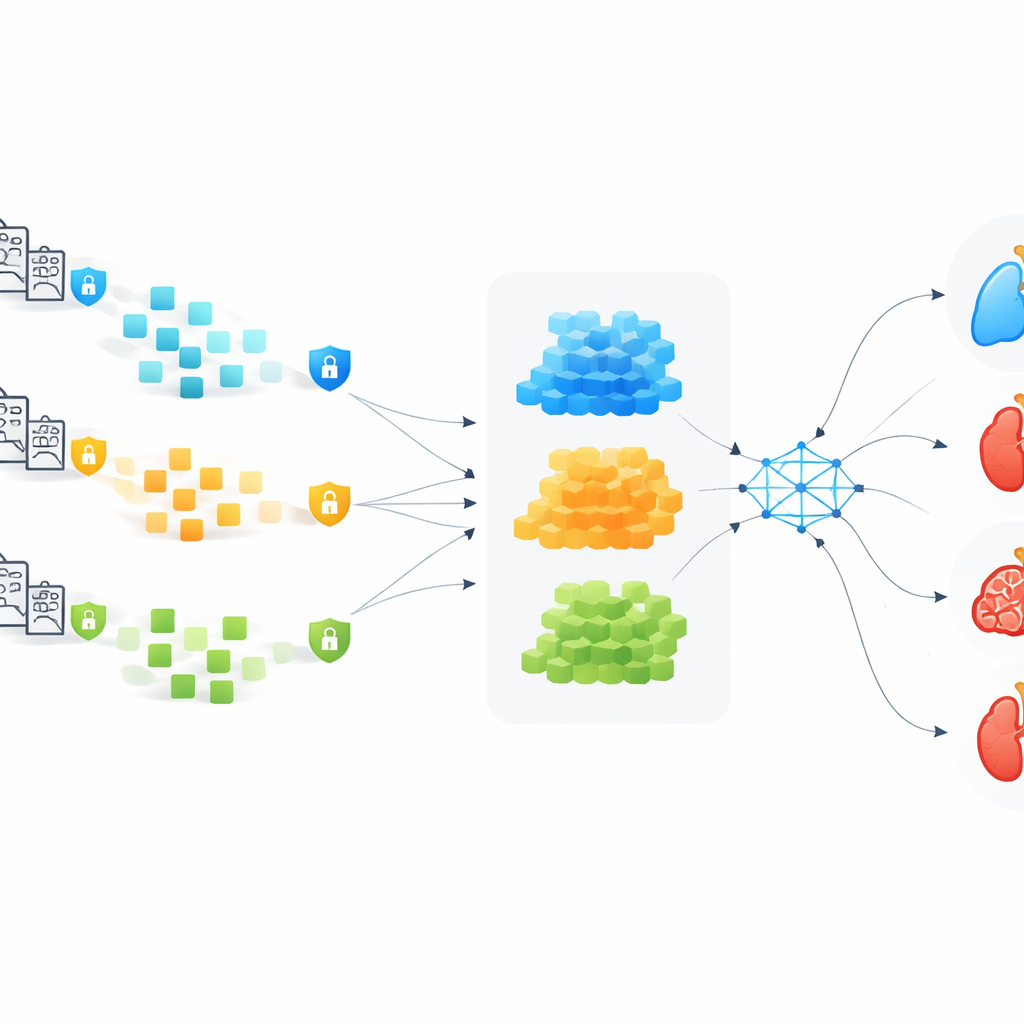

The authors build on an idea called federated learning: instead of sending patient records to a central server, each hospital trains its own local model and sends only the learned updates to a shared global model. In this work, dozens of hospitals can each focus on different problems—for example, one predicts heart failure, another stroke, another cirrhosis—but all contribute to a stronger common model. This setup keeps data inside each hospital’s walls while still allowing everyone to benefit from the combined experience of thousands of patients.
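The aggregation step described above can be pictured with a minimal sketch. It assumes the server combines hospital parameters with a weighted average in the style of the well-known FedAvg algorithm; the summary does not say which aggregation rule the paper actually uses. The function name, the weighting by patient count, and the numbers are all hypothetical.

```python
from typing import List


def federated_average(updates: List[List[float]], sample_counts: List[int]) -> List[float]:
    """Combine per-hospital parameter vectors into one global vector.

    Assumption: each hospital is weighted by how many patients it trained on
    (FedAvg-style). The paper's actual rule may differ.
    """
    if not updates or len(updates) != len(sample_counts):
        raise ValueError("need one sample count per hospital update")
    total = sum(sample_counts)
    averaged = [0.0] * len(updates[0])
    for params, count in zip(updates, sample_counts):
        for i, value in enumerate(params):
            averaged[i] += value * count / total
    return averaged


# Hypothetical example: three hospitals, two model parameters each.
hospital_updates = [[0.2, -0.1], [0.4, 0.3], [0.0, 0.1]]
patients_per_hospital = [100, 300, 100]

# Only these parameter vectors leave the hospitals, never patient records.
global_params = federated_average(hospital_updates, patients_per_hospital)
```

In this toy run the second hospital holds most of the patients, so the global parameters land closer to its values than to the others.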

Turning medical histories into networks

To make the most of electronic health records, the team converts each patient’s information into a network of events over time. In this network, medical features—such as age, blood pressure, lab results, and symptoms—are treated as points, and links between them capture how earlier events influence later ones. This structure, called a temporal‑causal graph, is designed to tease out cause‑and‑effect patterns rather than simple correlations. A specialized graph‑based model then learns from these networks while using a “mean‑centering” step to keep different features from blending together too much, which is a common weakness of many graph methods.
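To illustrate why a mean-centering step helps, here is a small sketch under stated assumptions. It treats "mean-centering" as subtracting the average feature vector across all nodes after one round of neighbor averaging, a common remedy for the blending problem (often called over-smoothing). The summary does not give the paper's exact formulation, so the function names, the toy graph, and the feature values are hypothetical.

```python
from typing import Dict, List, Tuple

Features = Dict[str, List[float]]


def aggregate(features: Features, edges: List[Tuple[str, str]]) -> Features:
    """Average each node's features with those of nodes pointing into it."""
    incoming = {node: [node] for node in features}  # each node keeps its own signal
    for src, dst in edges:
        incoming[dst].append(src)
    return {
        node: [
            sum(features[s][d] for s in sources) / len(sources)
            for d in range(len(features[node]))
        ]
        for node, sources in incoming.items()
    }


def mean_center(features: Features) -> Features:
    """Subtract the per-dimension mean over all nodes (assumed centering step)."""
    nodes = list(features)
    dim = len(features[nodes[0]])
    means = [sum(features[n][d] for n in nodes) / len(nodes) for d in range(dim)]
    return {n: [features[n][d] - means[d] for d in range(dim)] for n in nodes}


# Hypothetical patient graph: earlier events point to later ones.
node_features = {
    "age": [0.9, 0.1],
    "blood_pressure": [0.4, 0.6],
    "lab_result": [0.2, 0.8],
}
temporal_edges = [("age", "blood_pressure"), ("blood_pressure", "lab_result")]

smoothed = aggregate(node_features, temporal_edges)
centered = mean_center(smoothed)
```

Neighbor averaging pulls node features toward a shared value; removing the common mean afterwards keeps only what distinguishes one node from another.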

Keeping secrets while models travel

Even if hospitals only send model updates, those updates can still leak private information if an attacker analyzes them cleverly. To prevent this, the authors encrypt each hospital’s model changes using a scheme that allows the central server to perform computations directly on scrambled numbers without ever seeing the underlying values. A special scaling technique keeps the extra mathematical “noise” under control, so the encrypted training remains accurate. Before any hospital’s updates are accepted, a reinforced digital signature checks that the sender is genuine and that the updates have not been tampered with. All major steps—registration, training, aggregation, and updates—are recorded on a blockchain‑style ledger to provide an audit trail that no single party can quietly rewrite.
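The idea of computing on "scrambled numbers" can be shown with a toy example. The sketch below uses the Paillier cryptosystem, a classic additively homomorphic scheme, as a stand-in; the summary does not name the paper's scheme, and the noise-controlling scaling step it mentions is not modeled here. The primes are deliberately tiny and insecure, and the fixed-point `SCALE` is an assumption made so that decimal updates can be encoded as integers.

```python
import math
import random

# Toy key material: far too small for real use, chosen only for readability.
P, Q = 1009, 1013
N = P * Q
N_SQ = N * N
G = N + 1
LAMBDA = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # private key
MU = pow(LAMBDA, -1, N)                               # private key
SCALE = 1000  # assumed fixed-point scale for decimal model updates


def encode(x: float) -> int:
    """Map a signed decimal to an integer modulo N."""
    return round(x * SCALE) % N


def decode(v: int) -> float:
    """Inverse of encode; values above N/2 represent negatives."""
    if v > N // 2:
        v -= N
    return v / SCALE


def encrypt(m: int) -> int:
    r = random.randrange(1, N)
    while math.gcd(r, N) != 1:
        r = random.randrange(1, N)
    return (pow(G, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    u = pow(c, LAMBDA, N_SQ)
    return ((u - 1) // N * MU) % N


def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the hidden plaintexts."""
    return (c1 * c2) % N_SQ


# Three hospitals encrypt one model update each (hypothetical values).
ciphertexts = [encrypt(encode(u)) for u in (0.125, -0.05, 0.3)]

# The server sums the updates without decrypting any of them.
encrypted_sum = ciphertexts[0]
for c in ciphertexts[1:]:
    encrypted_sum = add_encrypted(encrypted_sum, c)

# Only a holder of the private key can read the aggregate.
aggregate_update = decode(decrypt(encrypted_sum))
```

The server in this sketch never handles a plaintext update; it only multiplies ciphertexts, and the result decrypts to the sum of the three hidden values.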

Making sure learning never goes stale

Real‑world hospitals do not stand still: new diseases appear, guidelines change, and patient populations shift. A big challenge for learning systems is “catastrophic forgetting,” where absorbing new patterns erases what was learned earlier. The authors introduce a continual‑update method that carefully mixes older knowledge with fresh information every time the global model is sent back to the hospitals. Older examples are replayed less often over time, but never completely disappear, helping each hospital keep what it has already mastered while still adapting to new trends.
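The replay behavior described above, where older examples appear less often but never vanish, can be sketched as a decaying probability with a floor. This is an illustrative assumption, not the paper's formula: the exponential decay, the floor value, the blending weight, and every name below are hypothetical.

```python
from typing import List


def replay_probability(age_in_rounds: int, initial: float = 0.5,
                       decay: float = 0.8, floor: float = 0.05) -> float:
    """Chance that an example of a given age is replayed in this round.

    Decays with age but is clamped at `floor`, so old examples never
    drop out entirely.
    """
    return max(floor, initial * decay ** age_in_rounds)


def blend(old_params: List[float], new_params: List[float],
          keep_old: float = 0.3) -> List[float]:
    """Mix a hospital's earlier parameters with the fresh global ones."""
    return [keep_old * o + (1 - keep_old) * n
            for o, n in zip(old_params, new_params)]


# Replay chance for examples that are 0, 1, 2, ... 19 rounds old.
schedule = [replay_probability(age) for age in range(20)]

# A hospital keeps 30% of its earlier parameters when the global model arrives.
updated_local = blend([1.0, 0.0], [0.0, 1.0])
```

The floor is what guards against forgetting in this sketch: without it, the replay chance for early data would shrink toward zero as rounds accumulate.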

How well does it work in practice?

The framework was tested using five publicly available datasets covering heart failure, stroke, cirrhosis, chronic kidney disease, and diabetes. Across these different tasks, the system achieved prediction accuracies around 99%, clearly outperforming a range of existing deep‑learning and graph‑based models. It also showed strong resistance to various cyber‑attacks, maintained fair performance across hospitals with very different types of data, and scaled to many training rounds without overwhelming communication or computing costs.

What this means for patients and clinicians

In plain terms, this work shows that hospitals can jointly build highly accurate early‑warning tools for serious clinical events while keeping patient records safely behind their own firewalls. By combining privacy‑preserving training, robust security checks, continual learning, and transparent logging, the proposed system could support real‑time risk alerts and more personalized care plans across large hospital networks—without forcing a trade‑off between medical insight and data privacy.

Citation: Jagdeesh, K., Kanimozhi, N., Sardar, T.H. et al. Federated learning with continual update for privacy-preserving clinical event prediction across distributed hospitals using MCN-GNN. Sci Rep 16, 12608 (2026). https://doi.org/10.1038/s41598-026-40964-y

Keywords: federated learning, electronic health records, clinical event prediction, privacy-preserving AI, graph neural networks