Clear Sky Science

Enhanced image encryption with deep generative models using a self-attention mechanism

Why hiding medical pictures matters

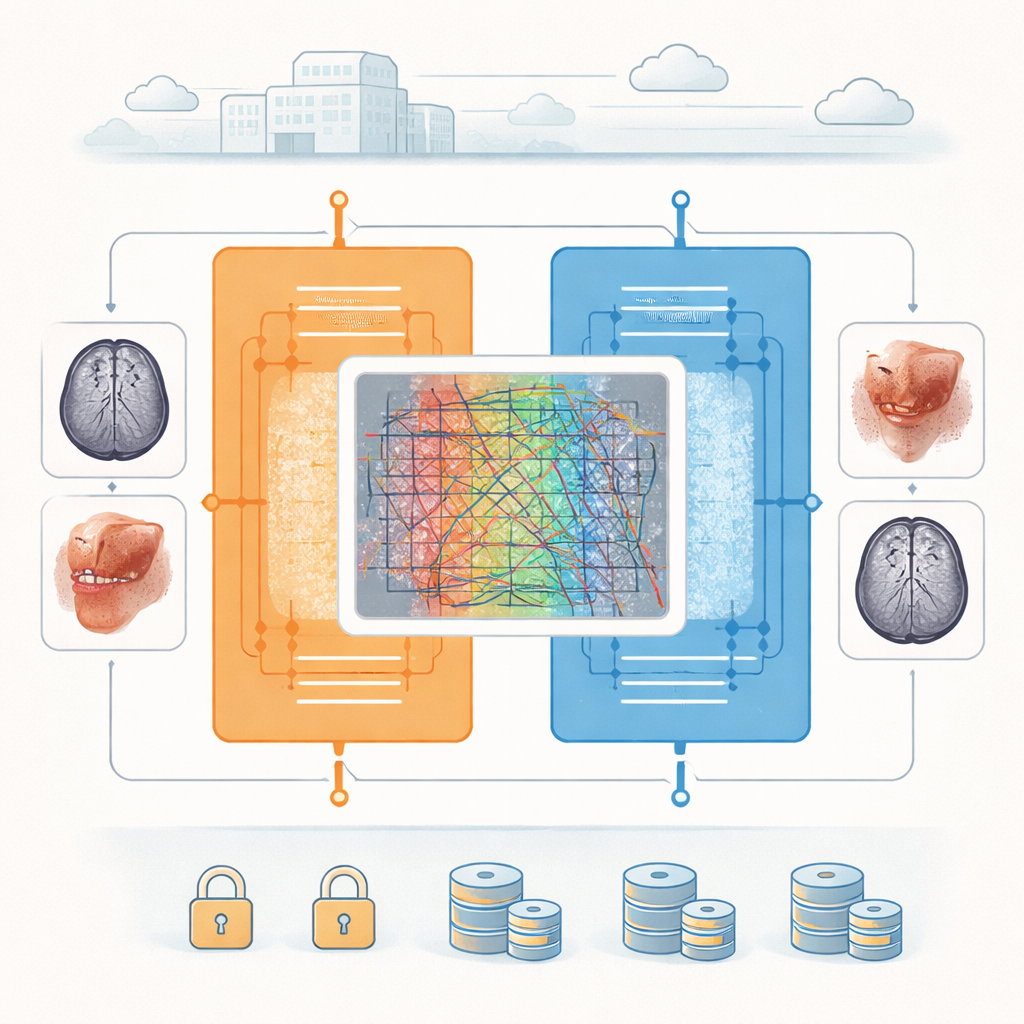

Hospitals now send and store millions of medical images—from brain scans to skin photos—across networks every day. These pictures can reveal intimate details about a person’s health, so they must be protected from prying eyes while still remaining clear enough for doctors to read. This paper introduces a new way to scramble medical images using modern artificial intelligence, aiming to make stolen data useless to an attacker while keeping decrypted images sharp enough for diagnosis.

A smarter lock for digital pictures

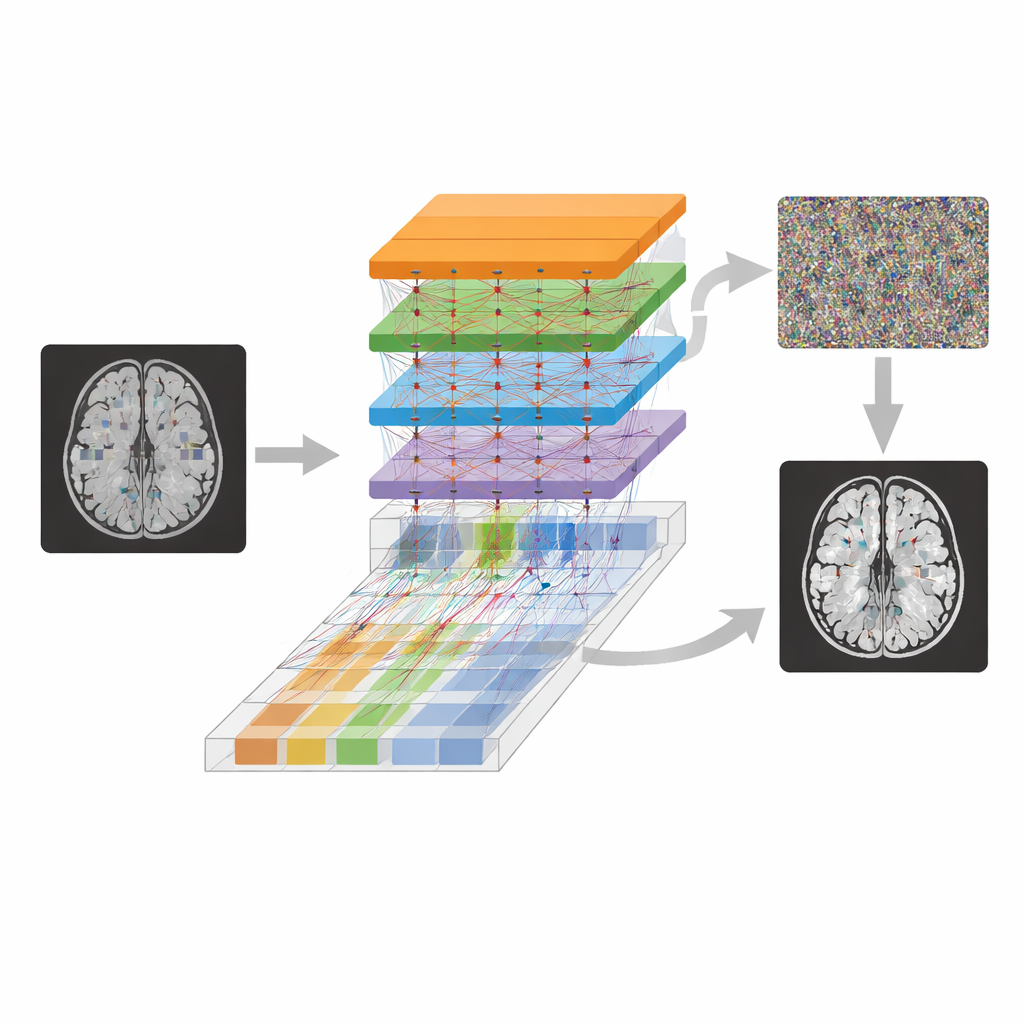

The authors build their protection system on a family of deep-learning models called generative networks, which are designed to learn how images look and then create new ones. Their setup uses a pair of neural networks that work like a lock and key: one network encrypts an ordinary medical image so that it looks like random noise, and a second network reverses the process to recover the original. Two additional networks act as critics, constantly judging whether encrypted images look unlike any real scan and whether decrypted images are close enough to the originals. This push‑and‑pull training forces the system to hide sensitive details while still being able to bring them back faithfully.

Letting the network pay attention

To strengthen both security and image quality, the researchers add a mechanism known as self‑attention inside the heart of the model. Instead of looking only at small neighborhoods of pixels, the attention module allows the system to connect distant regions of an image—for example, relating one part of a brain scan to another far away. Multiple attention “heads” examine the image from different perspectives and then combine their findings. This global view helps spread information across the entire encrypted picture, breaking the tight pixel‑to‑pixel links that attackers often exploit, while preserving the structures that matter when the image is later restored.
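For readers who want to see the idea in miniature, here is a rough sketch of self-attention with two heads, written in plain Python. It is not the authors' network: the four "positions", the tiny 2×2 projection matrices, and the function names are all made up for illustration, and the heads are simply concatenated rather than passed through the learned output layer a real model would use.

```python
import math

def softmax(row):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    return [list(col) for col in zip(*m)]

def self_attention(x, w_q, w_k, w_v):
    """Scaled dot-product self-attention over all positions in x.

    x has one row per image position; w_q, w_k, w_v are projection
    matrices (hypothetical toy values here, learned in a real model).
    Returns the mixed features and the attention weights.
    """
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # every position scored against every other
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights

def multi_head(x, heads):
    """Run several heads and concatenate their outputs position by position."""
    outputs = [self_attention(x, *h)[0] for h in heads]
    return [sum((o[i] for o in outputs), []) for i in range(len(x))]

# Four toy "positions" with two features each (illustrative values only).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
identity = [[1.0, 0.0], [0.0, 1.0]]
swap = [[0.0, 1.0], [1.0, 0.0]]

_, attn = self_attention(x, identity, identity, identity)
combined = multi_head(x, [(identity, identity, identity), (swap, identity, identity)])

for row in attn:
    print([round(w, 3) for w in row])
```

Each printed row shows how strongly one position draws on every other position, near or far. All the weights are positive, which is the "global view" described above: no position is limited to its immediate neighbors.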

Turning learned weights into secret keys

In traditional encryption, a short numerical key controls how data is scrambled. Here, the role of the key is played by all the learned weights of the neural networks, which determine exactly how images are transformed. The two main generators together contain tens of millions of adjustable values, and the critics add millions more. During training on large sets of brain MRI scans and skin‑cancer images, these parameters are gradually tuned so that encryption and decryption work well. The sheer number of values produces an enormous key space, making it practically impossible for an attacker to guess or brute‑force the correct configuration without access to the trained models stored securely in a hospital environment.
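A back-of-the-envelope calculation gives a feel for the scale. The numbers below are assumptions chosen for illustration, not figures from the paper: a hypothetical 20 million weights (in line with "tens of millions") stored as 32-bit values. The result is a raw count of possible settings, an upper bound rather than a measured security level.

```python
import math

def key_space_bits(num_params, bits_per_param=32):
    """Raw size of the key, in bits, if every stored weight bit counts."""
    return num_params * bits_per_param

def key_space_decimal_digits(num_params, bits_per_param=32):
    """Roughly how many decimal digits the number 2**bits would have."""
    return key_space_bits(num_params, bits_per_param) * math.log10(2)

# Hypothetical model size, assumed for illustration only.
HYPOTHETICAL_PARAMS = 20_000_000

bits = key_space_bits(HYPOTHETICAL_PARAMS)
digits = key_space_decimal_digits(HYPOTHETICAL_PARAMS)
ratio_to_256_bit_key = bits // 256

print(f"raw key length: {bits:,} bits")
print(f"possible settings: a number with about {digits:,.0f} decimal digits")
print(f"that is {ratio_to_256_bit_key:,} times the length of a 256-bit key")
```

Under these assumptions the raw key is 640 million bits long, which is 2.5 million times the length of a conventional 256-bit key.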

Putting the system to the test

The team subjects their method to a broad set of checks that mirror real‑world threats. Statistically, the encrypted images look almost perfectly random: measures such as entropy sit near their theoretical maximum, and neighboring pixels lose the strong correlations found in natural scenes. When the same picture is encrypted with two nearly identical keys, the results differ dramatically, showing that even tiny key changes lead to very different encrypted images. At the same time, decrypted images closely match the originals, with high scores on standard quality metrics that measure noise and structural similarity. The system also fares well when parts of the encrypted image are blocked or damaged; up to moderate levels of missing data, the recovered scans remain visually reliable. Finally, the authors compare their approach to several earlier deep‑learning‑based encryption schemes and find that their design achieves an unusually strong balance: it provides near‑maximal randomness and resistance to differential attacks while preserving medical detail.
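The kinds of checks described here can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the authors' evaluation code: a smooth gradient stands in for a natural image, seeded random noise stands in for an encrypted image, and the function names are invented. The metrics themselves (Shannon entropy, neighboring-pixel correlation, the percentage of differing pixels known as NPCR, and the peak signal-to-noise ratio, PSNR) are standard measures of this type, though the summary does not list exactly which ones the paper reports.

```python
import math
import random
from collections import Counter

def shannon_entropy(image):
    """Entropy in bits per pixel; 8.0 is the maximum for 8-bit images."""
    pixels = [p for row in image for p in row]
    n = len(pixels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(pixels).values())

def horizontal_correlation(image):
    """Pearson correlation between each pixel and its right-hand neighbor."""
    xs = [row[i] for row in image for i in range(len(row) - 1)]
    ys = [row[i + 1] for row in image for i in range(len(row) - 1)]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    var_x = sum((a - mx) ** 2 for a in xs)
    var_y = sum((b - my) ** 2 for b in ys)
    return cov / math.sqrt(var_x * var_y)

def npcr(img_a, img_b):
    """Percentage of pixel positions whose values differ between two images."""
    pairs = [(a, b) for ra, rb in zip(img_a, img_b) for a, b in zip(ra, rb)]
    return 100.0 * sum(a != b for a, b in pairs) / len(pairs)

def psnr(original, restored, peak=255):
    """Peak signal-to-noise ratio in decibels; higher means a closer match."""
    pairs = [(a, b) for ra, rb in zip(original, restored) for a, b in zip(ra, rb)]
    mse = sum((a - b) ** 2 for a, b in pairs) / len(pairs)
    return float("inf") if mse == 0 else 10 * math.log10(peak ** 2 / mse)

SIZE = 64
rng = random.Random(0)  # fixed seed so the toy data is reproducible

# Stand-ins only: a smooth gradient for a natural image, noise for cipher images.
smooth = [[2 * (r + c) for c in range(SIZE)] for r in range(SIZE)]
noise_a = [[rng.randrange(256) for _ in range(SIZE)] for _ in range(SIZE)]
noise_b = [[rng.randrange(256) for _ in range(SIZE)] for _ in range(SIZE)]
slightly_off = [[p + 1 for p in row] for row in smooth]  # every pixel off by one

print("entropy      smooth vs noise:", shannon_entropy(smooth), shannon_entropy(noise_a))
print("correlation  smooth vs noise:", horizontal_correlation(smooth), horizontal_correlation(noise_a))
print("NPCR between two noise images:", npcr(noise_a, noise_b))
print("PSNR of a slightly altered image:", psnr(smooth, slightly_off))
```

A well-scrambled image should behave like the noise stand-in: entropy close to 8 bits per pixel, neighbor correlation close to zero, and nearly every pixel changed when the key changes. A faithful decryption should behave like the last line, with a high PSNR against the original.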

What this means for patient privacy

For non‑specialists, the take‑home message is that the authors have built an AI‑driven lock that can strongly scramble medical pictures yet still restore them with near‑original clarity. By weaving self‑attention into a pair of generative networks and treating the learned model parameters as massive secret keys, their method makes it extremely hard for outsiders to reconstruct patient images from intercepted data. Although the approach is more computationally demanding than lightweight schemes aimed at tiny devices, it is well suited to hospital servers and medical gateways where security and diagnostic quality matter most. As such systems mature and become more efficient, they could form a key part of how future hospitals keep sensitive scans safe without sacrificing the information doctors need to care for their patients.

Citation: Karmouni, I., Ghouate, N.E., Tahiri, M.A. et al. Enhanced image encryption with deep generative models using a self-attention mechanism. Sci Rep 16, 11200 (2026). https://doi.org/10.1038/s41598-026-38780-5

Keywords: medical image encryption, deep learning security, generative models, self-attention, health data privacy