Clear Sky Science · en

Leveraging haze-aware features for improved image clarity and detection accuracy with an optimized DCNN-YOLOv8 network

Why Clear Vision in Fog Matters

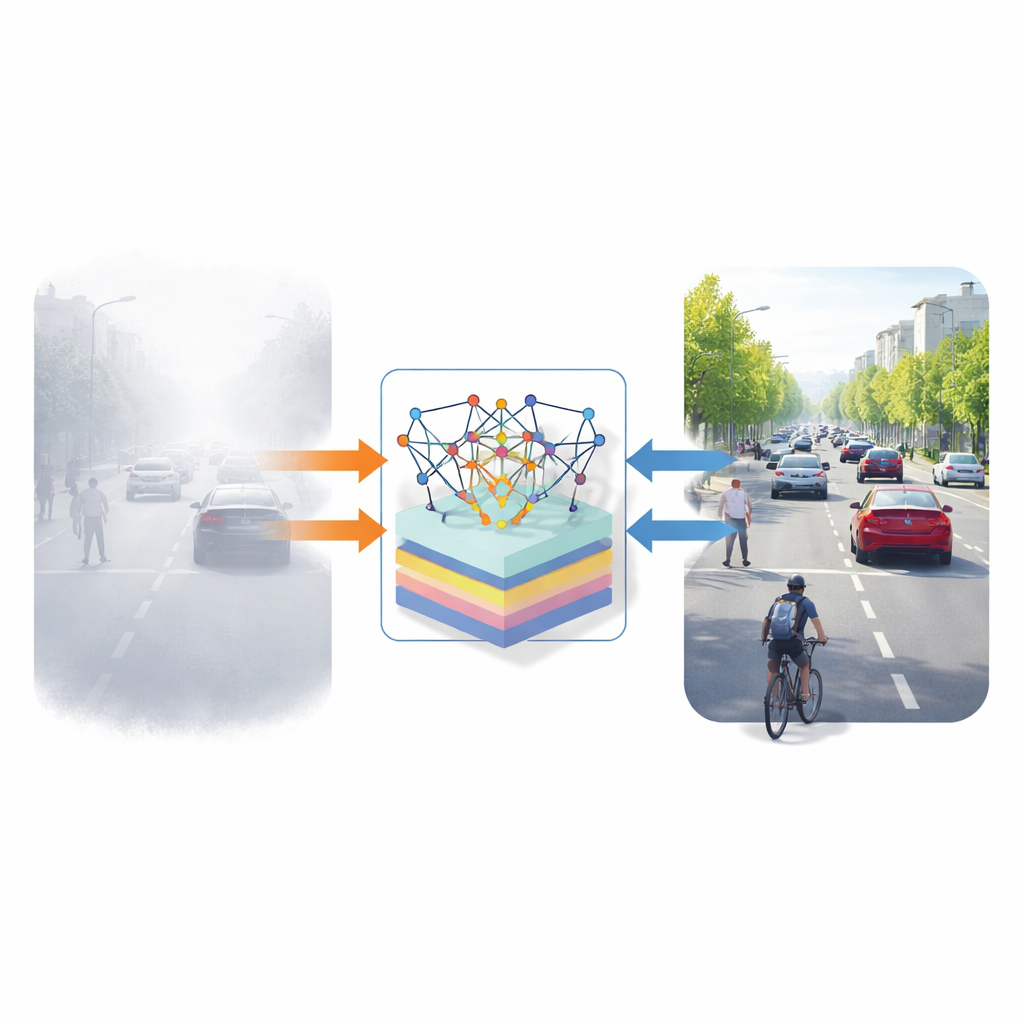

Driving on a foggy morning or watching a hazy security camera feed, we instinctively feel how dangerous hidden cars or pedestrians can be. Many modern systems—self-driving cars, road surveillance, even satellite mapping—depend on computers that can “see” clearly. But haze and fog confuse today’s vision algorithms, making them miss important objects. This paper presents a new approach that teaches computers not only to clean up hazy images but also to judge how good the cleanup is and then detect objects more reliably in difficult weather.

Seeing Through the Mist

Outdoor images taken in haze suffer from dull colors, low contrast, and blurred edges because tiny particles in the air scatter and absorb light. That means distant cars fade into the background and road signs become hard to distinguish. Many image “dehazing” methods have been proposed, yet real-world performance is still uneven: some methods restore contrast but distort colors; others sharpen details but remain unreliable across changing weather or locations. A major missing piece is a robust way for the computer itself to assess the visual quality of dehazed images, especially when no perfectly clear reference image is available.
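The loss of contrast described above is often explained with the standard atmospheric scattering model, in which the observed intensity is `clear * t + airlight * (1 - t)` and the transmission `t = exp(-beta * depth)` falls with distance. The summary does not state this formula, so the sketch below is background under that assumption. The function name `add_haze` and the values chosen for `beta` and `airlight` are illustrative only.

```python
import math

def add_haze(clear, depth_m, beta=0.02, airlight=0.9):
    """Apply the atmospheric scattering model to one intensity in [0, 1].

    beta (scattering coefficient per metre) and airlight (brightness of the
    haze itself) are illustrative values, not taken from the paper.
    """
    t = math.exp(-beta * depth_m)            # fraction of scene light that survives
    return clear * t + airlight * (1.0 - t)  # attenuated scene light plus scattered airlight

# A dark car next to a bright road sign, viewed at increasing distance.
dark_car, bright_sign = 0.2, 0.8
for depth in (0, 50, 200):
    contrast = add_haze(bright_sign, depth) - add_haze(dark_car, depth)
    print(f"depth={depth:>3} m  contrast={contrast:.3f}")
```

Under this model the difference between any two intensities shrinks by the factor `t`, which is why distant objects fade toward the uniform brightness of the haze.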

Teaching the Computer What a Good Image Looks Like

The authors tackle this by building a pipeline that starts with haze-aware feature extraction tailored to foggy scenes. For each input image, the system computes a new type of descriptor called Haze aware Structural Pixel Neighbor (HSPN) features, which summarize how each pixel relates to its neighbors in terms of brightness and texture. These are combined with measures of average brightness, local variation, and color balance, as well as statistics that capture how haze alters the natural distribution of image intensities. Together, these features give the system a compact yet rich description of how strongly haze is degrading structure and color in a scene.
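As a rough illustration of this kind of descriptor, the sketch below computes a few simple statistics for a tiny RGB image: average brightness, intensity variance, a neighbor-difference score, and a color-imbalance measure. The summary does not give the HSPN formula, so `neighbor_diff` is only a hypothetical stand-in for a pixel-neighbor feature, and `haze_features` and its dictionary keys are names assumed here for illustration.

```python
from statistics import mean, pvariance

def haze_features(img):
    """img: list of rows; each row is a list of (r, g, b) tuples in [0, 1]."""
    h, w = len(img), len(img[0])
    gray = [[sum(px) / 3.0 for px in row] for row in img]
    flat = [v for row in gray for v in row]

    # Hypothetical neighbor feature: mean absolute difference between each
    # pixel and its right and bottom neighbors (not the paper's HSPN).
    diffs = []
    for y in range(h):
        for x in range(w):
            if x + 1 < w:
                diffs.append(abs(gray[y][x] - gray[y][x + 1]))
            if y + 1 < h:
                diffs.append(abs(gray[y][x] - gray[y + 1][x]))

    channel_means = [mean(px[c] for row in img for px in row) for c in range(3)]
    return {
        "brightness": mean(flat),
        "variance": pvariance(flat),
        "neighbor_diff": mean(diffs) if diffs else 0.0,
        "color_imbalance": max(channel_means) - min(channel_means),
    }

# A 2x2 checkerboard, then the same scene blended toward a bright haze value.
clear = [[(0.1, 0.1, 0.1), (0.9, 0.9, 0.9)],
         [(0.9, 0.9, 0.9), (0.1, 0.1, 0.1)]]
hazy = [[tuple(0.3 * c + 0.7 * 0.8 for c in px) for px in row] for row in clear]

print(haze_features(clear))
print(haze_features(hazy))
```

In this toy case the hazy image comes out brighter and with smaller neighbor differences than the clear one, which is the kind of signal a quality-prediction network could learn from.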

A Smarter Learning Engine Under the Hood

These haze-aware features are fed into a deep convolutional neural network (DCNN) that predicts an objective “quality score” for each image. To train this network effectively, the authors introduce a new optimization strategy called the Chronological Chimp Optimization Algorithm (CChOA). Inspired by group hunting behavior in chimpanzees and enhanced with a time-aware mechanism, CChOA draws on both past and present candidate solutions to guide training, which helps the network avoid getting trapped in poor local optima. In practice, the authors report that the quality-prediction network converges faster, reaches lower training error, and is more stable than when it is trained with standard optimizers or the original chimp-based method.
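The summary gives no update equations for CChOA, so the following is not the authors' algorithm. It is a minimal sketch of the general idea: a population-based search in which candidates move relative to the four best solutions (loosely echoing chimp-style hunting roles) and are also pulled toward the previous iteration's leader as a simple "time-aware" term. The function `chimp_like_search`, the `memory` weight, and the toy `sphere` objective are all assumptions made for illustration.

```python
import random

def sphere(x):
    """Toy objective standing in for a network's training loss."""
    return sum(v * v for v in x)

def chimp_like_search(objective, dim=5, pop_size=12, iters=60, memory=0.3, seed=0):
    rng = random.Random(seed)
    pop = [[rng.uniform(-5, 5) for _ in range(dim)] for _ in range(pop_size)]
    best = min(pop, key=objective)[:]   # best solution seen so far
    initial_best = objective(best)
    prev_leader = best[:]               # leader remembered from the previous iteration

    for it in range(iters):
        a = 2.0 * (1 - it / iters)                # exploration factor shrinks from 2 toward 0
        leaders = sorted(pop, key=objective)[:4]  # four guiding solutions
        new_pop = []
        for x in pop:
            cand = []
            for d in range(dim):
                # Take one step relative to each leader, then average the steps.
                steps = [lead[d] - a * rng.uniform(-1, 1) * abs(lead[d] - x[d])
                         for lead in leaders]
                current = sum(steps) / len(steps)
                # Assumed time-aware term: blend in the previous iteration's leader.
                cand.append((1 - memory) * current + memory * prev_leader[d])
            new_pop.append(cand)
        prev_leader = leaders[0][:]
        pop = new_pop
        gen_best = min(pop, key=objective)
        if objective(gen_best) < objective(best):
            best = gen_best[:]
    return best, initial_best, objective(best)

best, start, end = chimp_like_search(sphere)
print(f"best loss at start: {start:.4f}, after search: {end:.4f}")
```

Because the best solution seen so far is always kept, the final loss can never be worse than the starting one. How much it improves depends on the objective and the settings.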

From Better Images to Better Detection

Once the system has improved and scored image quality, the enhanced images are passed to a customized version of YOLOv8, a fast object-detection network. The authors adapt YOLOv8 for foggy conditions in three ways: they refine its loss function to localize small, overlapping objects more precisely, add an Inception-style module to capture details at multiple scales, and insert a spatial pyramid pooling block that helps the network understand both local detail and broader context. Trained and tested on two benchmark haze datasets (RESIDE and FRIDA), the integrated pipeline delivers clearer images and more reliable detection than several existing dehazing and quality-assessment techniques, and it outperforms baseline YOLOv8 in detection accuracy under fog.
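The summary does not say which loss the authors use. As background, box-localization losses in detectors of this family are typically built on intersection over union (IoU), the overlap between a predicted box and the true box. The sketch below, with the assumed helper names `iou` and `iou_loss`, shows why small objects are demanding: the same two-pixel error costs a small box far more overlap than a large one.

```python
def iou(box_a, box_b):
    """Intersection over union for boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)  # zero when boxes do not overlap
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def iou_loss(pred, target):
    """Plain IoU loss: 0 for a perfect match, 1 for no overlap."""
    return 1.0 - iou(pred, target)

# The same 2-pixel horizontal shift applied to a small and a large box.
small = iou_loss((2, 0, 6, 4), (0, 0, 4, 4))
large = iou_loss((2, 0, 42, 40), (0, 0, 40, 40))
print(f"small-box loss: {small:.3f}, large-box loss: {large:.3f}")
```

Refinements of this basic loss usually add terms for center distance or box shape so that the gradient stays informative even when boxes barely overlap.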

What This Means for Real-World Vision Systems

In simple terms, the study shows that computers can become much better at spotting objects in fog if they first learn to understand what good image quality looks like in those conditions and if their learning process is carefully optimized. By combining haze-aware features, a time-sensitive optimization strategy, and a tuned detection network, the proposed system significantly boosts clarity and detection scores on standard metrics. This kind of integrated approach could make future surveillance cameras, driver-assistance systems, and remote-sensing tools safer and more dependable when the weather is at its worst.

Citation: Saini, A., Gill, N.S. & Gulia, P. Leveraging haze-aware features for improved image clarity and detection accuracy with an optimized DCNN-YOLOv8 network. Sci Rep 16, 14213 (2026). https://doi.org/10.1038/s41598-026-38265-5

Keywords: image dehazing, object detection, deep learning, computer vision in fog, YOLOv8