Clear Sky Science

TrustNet: a lightweight network with integrated uncertainty quantification and quantitative explainable AI for ischemic stroke detection in CT images

Why smarter stroke scans matter

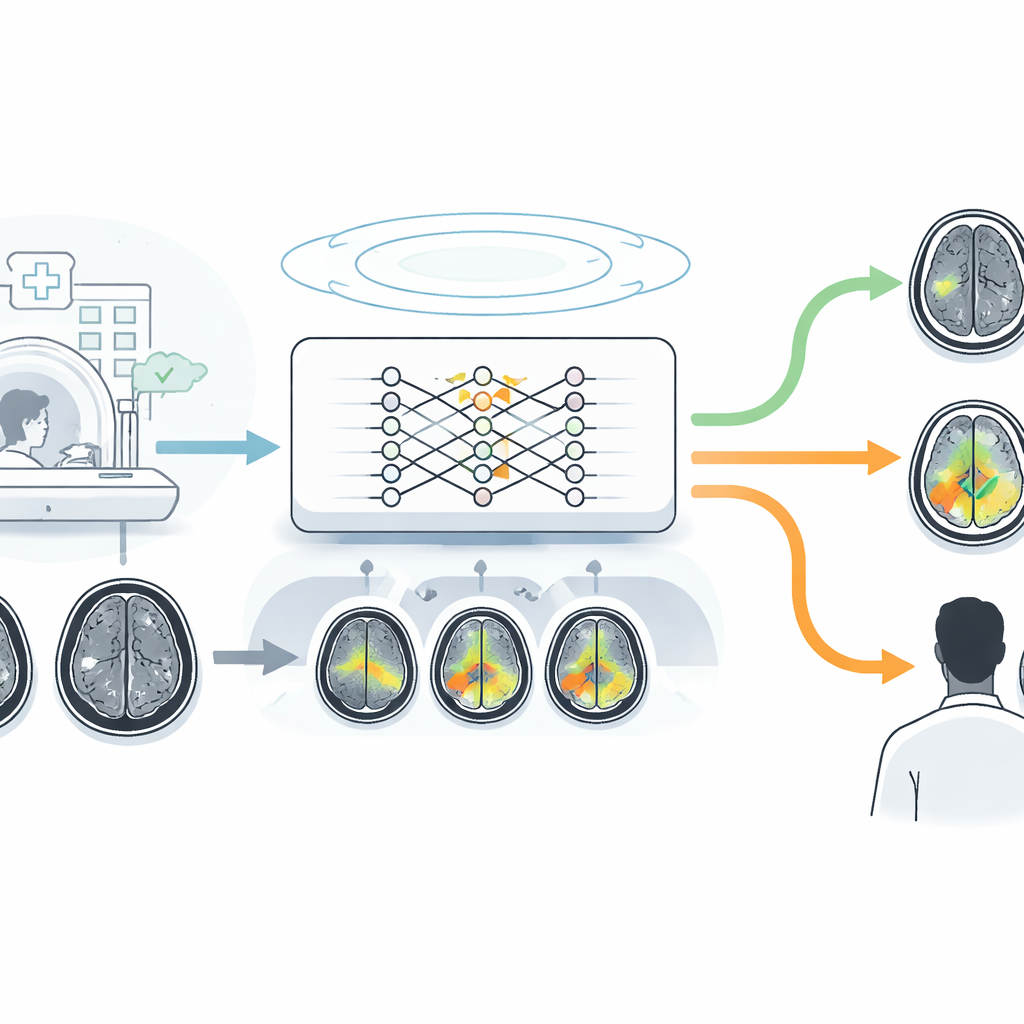

When someone has a stroke, every minute of delay can cost brain tissue and, ultimately, quality of life. Doctors often rely on quick CT scans of the head to decide who needs urgent treatment, but the early signs of ischemic stroke on these images are subtle and easy to miss, even for experts. Many artificial intelligence systems can spot patterns in scans, yet they typically act as opaque “black boxes” and give no indication of how sure they are. This study introduces a new AI approach, called TrustNet, designed not just to be accurate, but also to show where it is looking and how confident it is, so that clinicians can decide when to trust it and when to be cautious.

A small tool for a big emergency

TrustNet is a compact computer vision system built to distinguish normal brain CT scans from those showing ischemic stroke, a type of stroke caused by blocked blood vessels. Unlike many heavyweight image-analysis networks that demand powerful hardware, TrustNet uses a lightweight design with just 0.66 million adjustable parameters, making it suitable for hospitals with limited computing resources and for potential bedside or mobile use. The researchers trained and tested it on a carefully curated private collection of 2,023 CT images from an Indian hospital, evenly split between healthy and stroke cases, and then challenged it further using a public dataset from an online repository to see how well it would generalize beyond its home institution.

Teaching the network to know when it might be wrong

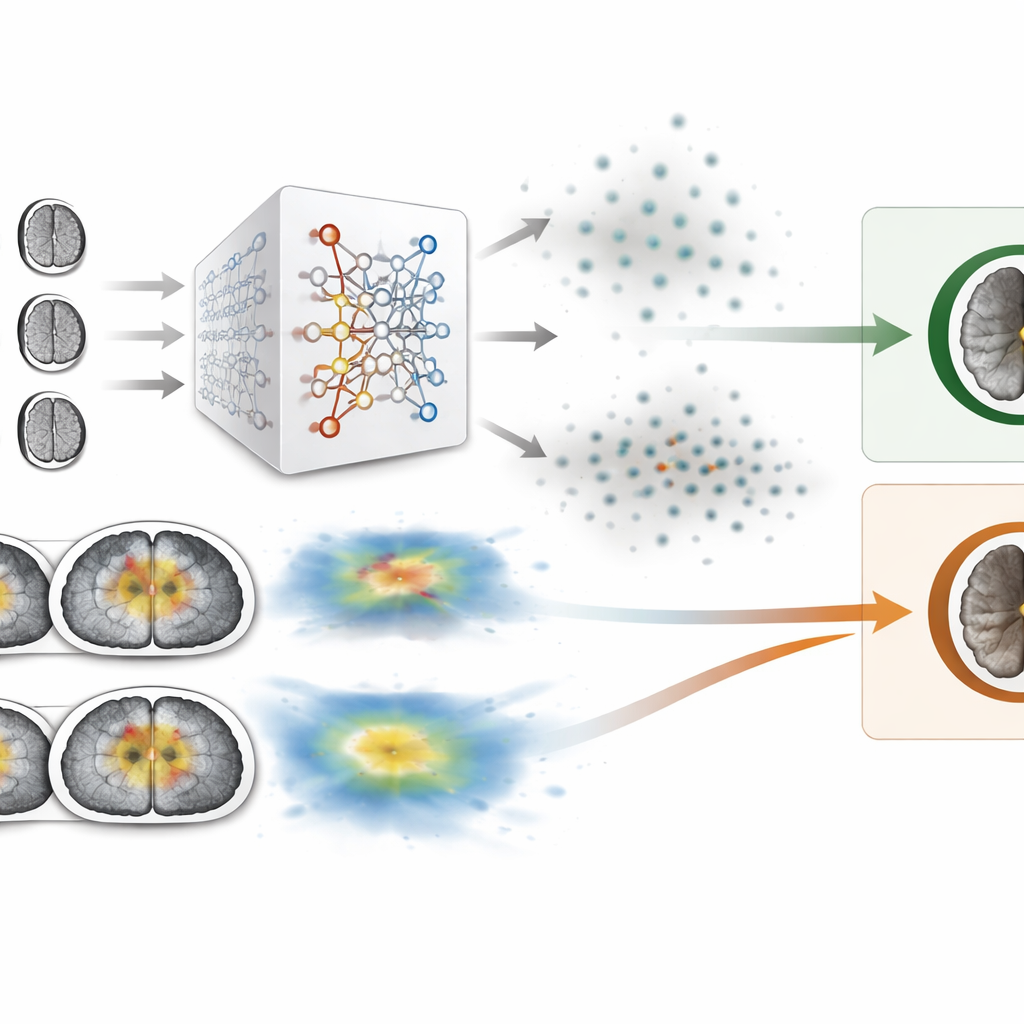

A key innovation in this work is that TrustNet is built to estimate its own uncertainty. Instead of producing a single rigid answer for each scan, the system runs the same image through itself multiple times while randomly dropping some of its internal connections. By looking at how much these repeated runs disagree, the model can assign each prediction a numerical measure of uncertainty, ranging from very sure to highly doubtful. If a case falls above a chosen uncertainty threshold, it is flagged for expert review rather than being treated as a reliable automatic decision. This simple mechanism sharply reduced both missed strokes and false alarms, boosting test accuracy from about 88% in the baseline setup to nearly 95% in patient-wise evaluation, with 100% specificity and 100% precision on the private cohort.
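The repeated-runs idea can be sketched in a few lines of Python. This is only an illustration, not the authors' code: the tiny `toy_stochastic_model` stands in for the real network, and the number of passes, the dropout rate, the use of standard deviation as the disagreement measure, and the review threshold are all assumptions chosen for the example.

```python
import math
import random
from statistics import mean, pstdev


def toy_stochastic_model(features, weights, drop_rate, rng):
    """Hypothetical stand-in for a network whose dropout stays on at test time."""
    total = 0.0
    for x, w in zip(features, weights):
        if rng.random() >= drop_rate:            # this connection survives
            total += x * w / (1.0 - drop_rate)   # rescale the kept connections
    return 1.0 / (1.0 + math.exp(-total))        # sigmoid -> P(stroke)


def mc_dropout_predict(features, weights, passes=30, drop_rate=0.3, seed=0):
    """Run the same input several times and summarise the spread of outputs."""
    rng = random.Random(seed)
    probs = [toy_stochastic_model(features, weights, drop_rate, rng)
             for _ in range(passes)]
    # Mean = final probability; standard deviation = disagreement between runs.
    return mean(probs), pstdev(probs)


def triage(features, weights, threshold=0.15, **kwargs):
    """Label the case, and flag it for expert review if the runs disagree."""
    prob, uncertainty = mc_dropout_predict(features, weights, **kwargs)
    return {
        "label": "stroke" if prob >= 0.5 else "normal",
        "prob": prob,
        "uncertainty": uncertainty,
        "needs_review": uncertainty > threshold,  # assumed threshold value
    }


if __name__ == "__main__":
    print(triage([3.0, -3.0, 2.0, -2.0], [1.0, 1.0, 1.0, 1.0], drop_rate=0.5))
```

In a real system the threshold would be tuned on held-out data, since it trades the number of cases sent to a radiologist against the number of errors that slip through.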

Making machine reasoning visible on the image

Beyond confidence scores, the authors wanted to see whether TrustNet was “looking” at the right parts of the brain when it called a scan normal or abnormal. They used a visualization technique that overlays a colored heatmap onto the CT image, highlighting regions that most influenced the decision. To move beyond purely qualitative inspection, they developed a new, simple metric that averages the intensity of these heatmaps. They found that correctly classified scans tended to show focused, moderate-intensity highlights over plausible lesion areas, whereas misclassified scans often lit up large, scattered regions with very high intensity. By setting a threshold on this heatmap-intensity measure, the system could detect some unreliable explanations and correct a subset of errors, providing a second safety net on top of the uncertainty estimate.
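As a rough illustration of the heatmap-intensity check, the sketch below averages a heatmap and flags it when the average is unusually high. The function names, the assumption that heatmap values lie between 0 and 1, and the threshold of 0.5 are hypothetical; the summary does not give the exact formula or cut-off, and the error-correction step the authors describe is not reproduced here.

```python
def mean_heatmap_intensity(heatmap):
    """Average of all heatmap values (assumed to be scaled to the range 0..1)."""
    values = [v for row in heatmap for v in row]
    if not values:
        raise ValueError("heatmap is empty")
    return sum(values) / len(values)


def explanation_is_suspect(heatmap, threshold=0.5):
    """Flag a heatmap whose average intensity exceeds an assumed threshold."""
    return mean_heatmap_intensity(heatmap) > threshold


# A small, focused highlight of moderate intensity (toy 4x4 example).
focused = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.6, 0.6, 0.0],
    [0.0, 0.6, 0.6, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]

# A large, very intense highlight covering the whole image.
diffuse = [[0.9] * 4 for _ in range(4)]

if __name__ == "__main__":
    print(explanation_is_suspect(focused))  # low average: not flagged
    print(explanation_is_suspect(diffuse))  # high average: flagged
```

A plain average cannot tell a small, bright spot from a wide, faint glow, so it works best as a coarse warning sign alongside the uncertainty estimate.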

How the new system stacks up

The team compared TrustNet with a range of well-known image-recognition networks, from classic deep models like VGG and ResNet to more recent efficient designs. Many of these competitors either required far more memory and computation or showed weaknesses such as overfitting or poor balance between sensitivity and precision. TrustNet achieved performance on par with, or better than, much larger models, while remaining compact and fast. On slice-level tests of the private data, it reached nearly 99% accuracy, and on the external public dataset it achieved over 98% accuracy after adding both uncertainty and explanation modules. Calibration tests, which check whether the model’s stated confidence aligns with its actual correctness, indicated that TrustNet’s probabilities were reasonably well matched to reality, especially after a simple post-processing step.
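To make the idea of calibration concrete, here is a minimal sketch of one common calibration measure, the expected calibration error (ECE). The summary does not say which measure the authors used, so ECE and the choice of ten bins are assumptions made for illustration. The function groups predictions by stated confidence and compares each group's average confidence with the fraction it actually got right.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between stated confidence and observed accuracy."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need two non-empty lists of equal length")

    # Sort each prediction into an equal-width confidence bin.
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[index].append((conf, 1.0 if ok else 0.0))

    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(k for _, k in members) / len(members)
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece


if __name__ == "__main__":
    # Ten predictions at 90% confidence, nine of them right: well calibrated.
    print(expected_calibration_error([0.9] * 10, [True] * 9 + [False]))
    # Two predictions at 100% confidence, one of them wrong: overconfident.
    print(expected_calibration_error([1.0, 1.0], [True, False]))
```

A value near zero means the stated confidence can be taken roughly at face value; larger values mean the model is over- or under-confident.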

What this means for stroke care

For a layperson, the central message is that this study moves stroke-detection AI closer to what clinicians actually need in practice: not just a yes-or-no answer from a mysterious algorithm, but a quick, visual indication of where the model sees trouble and an honest estimate of how sure it is. TrustNet demonstrates that it is possible to build a small, efficient system that spots ischemic stroke on CT scans with high accuracy while also flagging uncertain cases and exposing its reasoning on the image itself. Although the work so far focuses on a simple two-way choice—stroke or no stroke—and comes mainly from one hospital, it shows a promising path toward more transparent and trustworthy AI tools that can support, rather than replace, human judgment in time-critical stroke care.

Citation: Inamdar, M.A., Gudigar, A., Raghavendra, U. et al. TrustNet: a lightweight network with integrated uncertainty quantification and quantitative explainable AI for ischemic stroke detection in CT images. Sci Rep 16, 9861 (2026). https://doi.org/10.1038/s41598-026-37169-8

Keywords: ischemic stroke, brain CT, medical AI, uncertainty quantification, explainable AI