Clear Sky Science · en

Energy optimized scheduling in wireless sensor networks (WSNs) using hybrid bio-inspired reinforcement learning approach

Smarter Sensors for a Connected World

From farm fields to factory floors, wireless sensors quietly watch over our power grids, crops, bridges, and even disaster zones. But there is a catch: these tiny devices run on small batteries and are often scattered in places that are hard to reach. Replacing dead batteries across thousands of sensors is costly or impossible. This paper explores a new way to decide which sensors should be awake and which can safely sleep, so that the whole network lasts longer, stays reliable, and uses as little energy as possible.

The Challenge of Always-On Monitoring

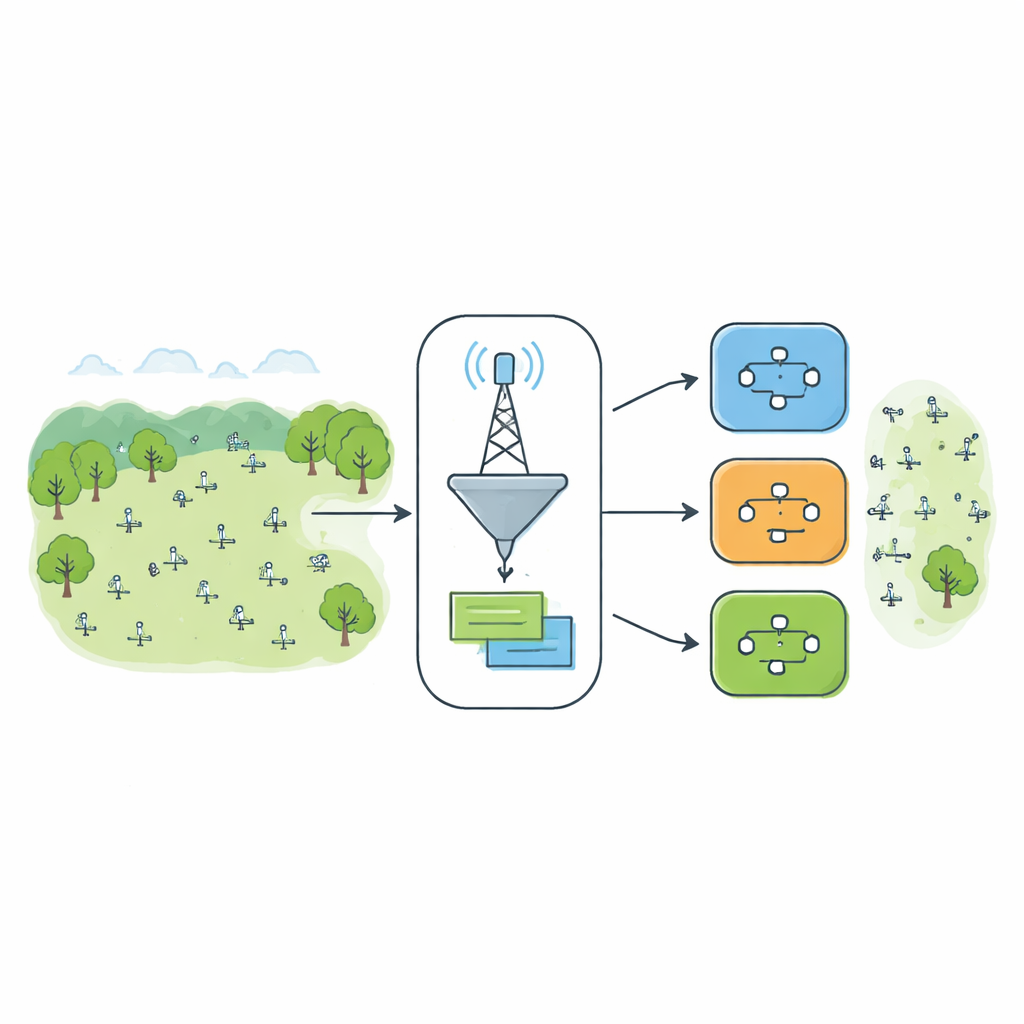

Wireless sensor networks are like invisible blankets spread over an area, sampling temperature, motion, pollution, or other signals and sending them back to a base station. If every sensor is left switched on all the time, the blanket is thick but short-lived: batteries drain quickly and the network dies early. If too many sensors are turned off, coverage becomes patchy and messages may no longer reach the base station. The central challenge is to keep just enough sensors active, in the right places and at the right times, to maintain good coverage and connectivity while stretching battery life as much as possible.
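The trade-off above can be made concrete with a simple scoring function. The sketch below is illustrative only and is not the cost function used in the paper. It assumes a disc sensing model with a hypothetical radius `SENSING_RADIUS`, treats every awake sensor as costing one unit of energy, and combines uncovered area with the share of awake sensors using made-up weights `w_cov` and `w_energy`.

```python
import math

# Hypothetical disc sensing model: a target point counts as covered if it lies
# within SENSING_RADIUS of at least one awake sensor.
SENSING_RADIUS = 1.5


def coverage_fraction(sensors, active, points):
    """Fraction of target points covered by at least one active sensor."""
    covered = 0
    for px, py in points:
        if any(on and math.hypot(px - sx, py - sy) <= SENSING_RADIUS
               for (sx, sy), on in zip(sensors, active)):
            covered += 1
    return covered / len(points)


def schedule_cost(sensors, active, points, w_cov=0.7, w_energy=0.3):
    """Weighted cost that penalizes uncovered area and the share of awake sensors.

    The weights w_cov and w_energy are illustrative assumptions.
    """
    uncovered = 1.0 - coverage_fraction(sensors, active, points)
    energy_share = sum(active) / len(active)
    return w_cov * uncovered + w_energy * energy_share


if __name__ == "__main__":
    # Two sensors share the same spot; the third watches a distant corner.
    sensors = [(0.0, 0.0), (0.0, 0.0), (4.0, 4.0)]
    points = [(0.0, 0.0), (1.0, 0.0), (4.0, 4.0), (4.0, 3.0)]
    print(schedule_cost(sensors, [1, 0, 1], points))  # one of the pair asleep
    print(schedule_cost(sensors, [1, 1, 1], points))  # redundant pair awake
```

With these assumed numbers, waking the second co-located sensor adds energy cost without adding any coverage, which is the kind of redundancy a scheduler tries to avoid.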

Learning from Ants, Birds, and Experience

Researchers have long borrowed ideas from nature to tackle this sort of problem. Ant-inspired methods send virtual "ants" through the network, gradually strengthening good paths the way real ants lay down pheromone trails. Bird-inspired methods treat each possible arrangement of active sensors as a "particle" in a flock that moves toward better solutions over time. These approaches work well in many settings, but they struggle when the network is changing—when batteries deplete, sensors fail, or the layout shifts. They tend to follow fixed rules and cannot easily adjust their behavior once the environment moves away from what they were tuned for.
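To make the pheromone idea concrete, here is a minimal sketch of a simplified ant-style search that decides which sensors to keep awake. It is not the authors' implementation. The probability mapping in the hypothetical `build_schedule` helper, the evaporation rate `rho`, the `deposit` amount, and the rule of reinforcing only the best schedule found so far are all assumptions chosen for readability, and the bird-inspired (particle swarm) half is not shown.

```python
import random


def build_schedule(pheromone, rng, scale=0.5):
    """One 'ant' walks the sensor list and decides which sensors to switch on.

    A sensor with more pheromone is more likely to be chosen. The mapping
    tau / (tau + scale) is a hypothetical choice made for this sketch.
    """
    return [1 if rng.random() < tau / (tau + scale) else 0 for tau in pheromone]


def evaporate_and_deposit(pheromone, best_active, rho=0.1, deposit=1.0):
    """Evaporate every trail, then reinforce sensors in the best schedule so far."""
    return [(1.0 - rho) * tau + (deposit if on else 0.0)
            for tau, on in zip(pheromone, best_active)]


def aco_schedule(n_sensors, cost_fn, n_ants=10, n_iters=30, seed=0):
    """Return the lowest-cost on/off schedule found and its cost."""
    rng = random.Random(seed)
    pheromone = [1.0] * n_sensors
    best, best_cost = None, float("inf")
    for _ in range(n_iters):
        for _ in range(n_ants):
            candidate = build_schedule(pheromone, rng)
            cost = cost_fn(candidate)
            if cost < best_cost:
                best, best_cost = candidate, cost
        # Trails fade over time; sensors in the best schedule get stronger trails.
        pheromone = evaporate_and_deposit(pheromone, best)
    return best, best_cost


if __name__ == "__main__":
    target = [1, 0, 1, 0, 0]

    def toy_cost(schedule):
        # Hypothetical objective: number of sensors that differ from a known-good pattern.
        return sum(a != t for a, t in zip(schedule, target))

    print(aco_schedule(len(target), toy_cost))
```

Here `cost_fn` stands in for any schedule-scoring function, such as one that weighs coverage against energy; the toy objective only exists so the sketch can run on its own.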

A Hybrid Brain That Switches Strategies

The authors propose a hybrid approach called RL-HAPSO that adds a new twist: it not only uses ant-like and bird-like search, but also learns when to call on each of them. In an offline phase, one part of the system chooses a set of energy-efficient sensors to activate, while another rearranges how those active sensors share the work to reduce overlap and maintain coverage. On top of this, a learning module watches how well the network is doing—how much of the area is covered, how much energy is left, and how much redundancy there is. Based on this snapshot, it decides at each scheduling step whether to rely mainly on the ant-like search, the bird-like refinement, or the combined hybrid. Over time, this meta-level learner discovers which choice tends to work best under different conditions.
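The meta-level learner can be pictured as a small reinforcement-learning agent whose possible actions are the three search strategies. The sketch below uses plain tabular Q-learning with epsilon-greedy exploration. This summary does not specify the paper's actual learner, state encoding, or reward, so the three-bin discretization, the learning parameters `alpha`, `gamma`, and `epsilon`, and the names `StrategySelector` and `ACTIONS` are all assumptions.

```python
import random
from collections import defaultdict

# Hypothetical action set: which search strategy to run at this scheduling step.
ACTIONS = ("ant", "bird", "hybrid")


def discretize(coverage, energy_left, redundancy, bins=3):
    """Map a network snapshot (each value in [0, 1]) to a small discrete state."""
    def to_bin(x):
        return min(int(x * bins), bins - 1)
    return (to_bin(coverage), to_bin(energy_left), to_bin(redundancy))


class StrategySelector:
    """Tabular Q-learning over strategy choices (an illustrative stand-in only)."""

    def __init__(self, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
        # One Q-value per action for every state seen so far, starting at zero.
        self.q = defaultdict(lambda: [0.0] * len(ACTIONS))
        self.alpha, self.gamma, self.epsilon = alpha, gamma, epsilon
        self.rng = random.Random(seed)

    def choose(self, state):
        """Return an action index: explore at random, otherwise pick the best-known."""
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(ACTIONS))
        values = self.q[state]
        return values.index(max(values))

    def update(self, state, action, reward, next_state):
        """Standard one-step Q-learning update."""
        target = reward + self.gamma * max(self.q[next_state])
        self.q[state][action] += self.alpha * (target - self.q[state][action])
```

In a scheduling loop one would, at each step, discretize the current coverage, remaining energy, and redundancy, call `choose`, run the selected search (`ACTIONS[index]`), and pass a reward to `update`; using the drop in schedule cost as that reward is another assumption. Over many steps the table comes to favor whichever strategy has tended to pay off in each kind of network state.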

Putting the System to the Test

To find out whether this learning-driven scheduler is more than just an elegant idea, the team ran extensive simulations on networks with different layouts: randomly scattered sensors, orderly grids, and clustered groups that mimic real deployments. They compared four schedulers: a purely ant-based method, a purely bird-based method, a fixed hybrid of the two, and their new learning-guided hybrid. They measured how much energy each method used, how well it covered the area, how quickly it settled on a stable pattern, and how consistent the results were across many repeated runs. The learning-guided method not only reached good solutions faster but also delivered a lower overall cost, meaning better coverage with less wasted energy, while keeping performance stable even as nodes failed or energy levels dropped.

What This Means for Everyday Technology

In plain terms, the study shows that wireless sensor networks can manage themselves more intelligently by treating their own scheduling rules as something to be learned, not fixed in advance. RL-HAPSO acts like a smart conductor for an unseen orchestra of sensors, deciding which instruments should play and which can rest, and even which style of decision-making works best at a given moment. This allows sensor networks to last longer, react to changing conditions, and still provide trustworthy data for applications such as smart agriculture, critical infrastructure monitoring, and emergency response. As the Internet of Things expands, approaches like this point the way toward sensor systems that are not just connected, but truly adaptive and resource-aware.

Citation: Sarobin, M.V.R., Akil, S., Shalu, S.B. et al. Energy optimized scheduling in wireless sensor networks (WSNs) using hybrid bio-inspired reinforcement learning approach. Sci Rep 16, 13109 (2026). https://doi.org/10.1038/s41598-026-35077-5

Keywords: wireless sensor networks, energy-efficient scheduling, reinforcement learning, bio-inspired optimization, Internet of Things