Clear Sky Science

Multimodal and Hyperspectral Dataset for Segmentation of Bulky Waste using VIS, IR, NIR, and Terahertz Imaging

Why smarter waste sorting matters

Bulky household junk, from broken wardrobes to sagging sofas, is often rich in reusable wood. Yet much of it still ends up burned or landfilled, because machines struggle to tell wood apart from plastics, metals, and padding, especially when these materials are stacked or hidden inside one another. This article introduces WoodVIT, a detailed image dataset built to help artificial intelligence “see” more clearly into such messy piles, so that future sorting systems can recycle more wood safely and efficiently.

Looking at trash with new kinds of eyes

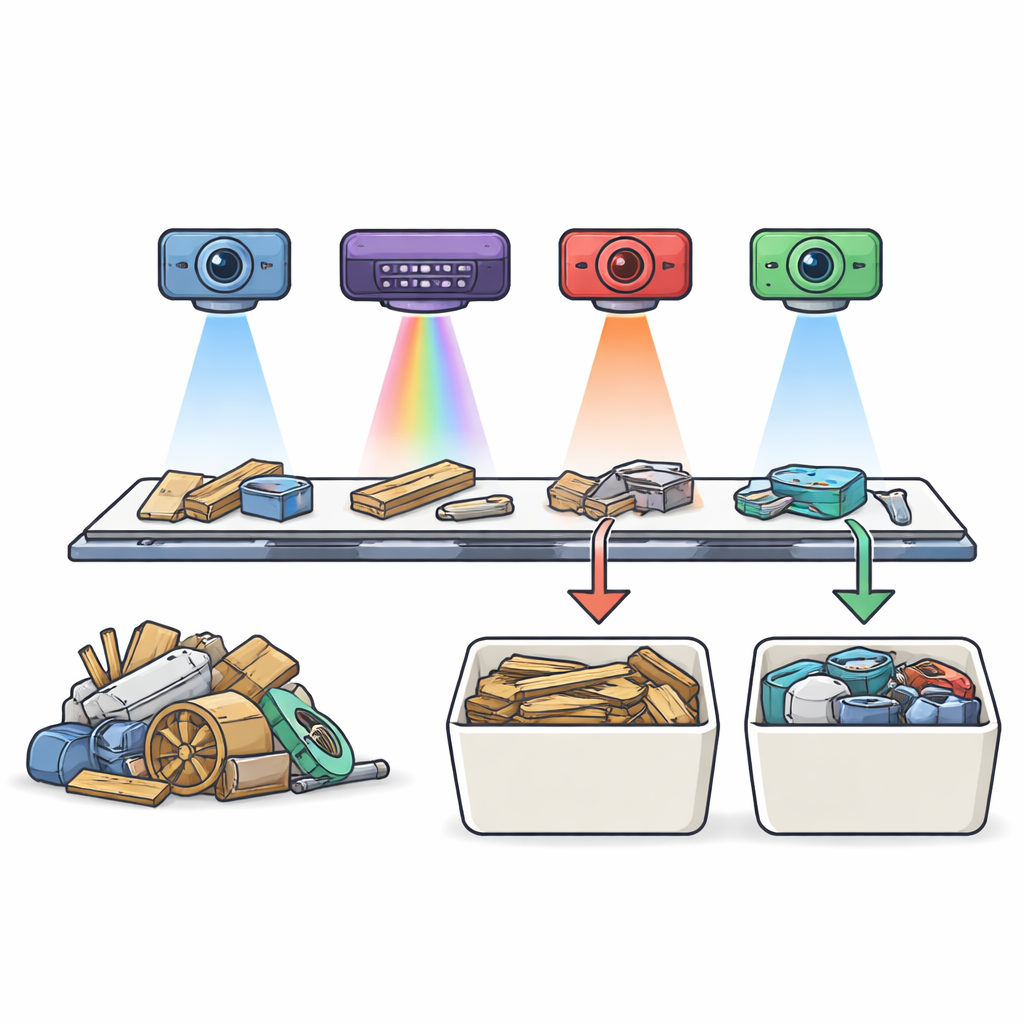

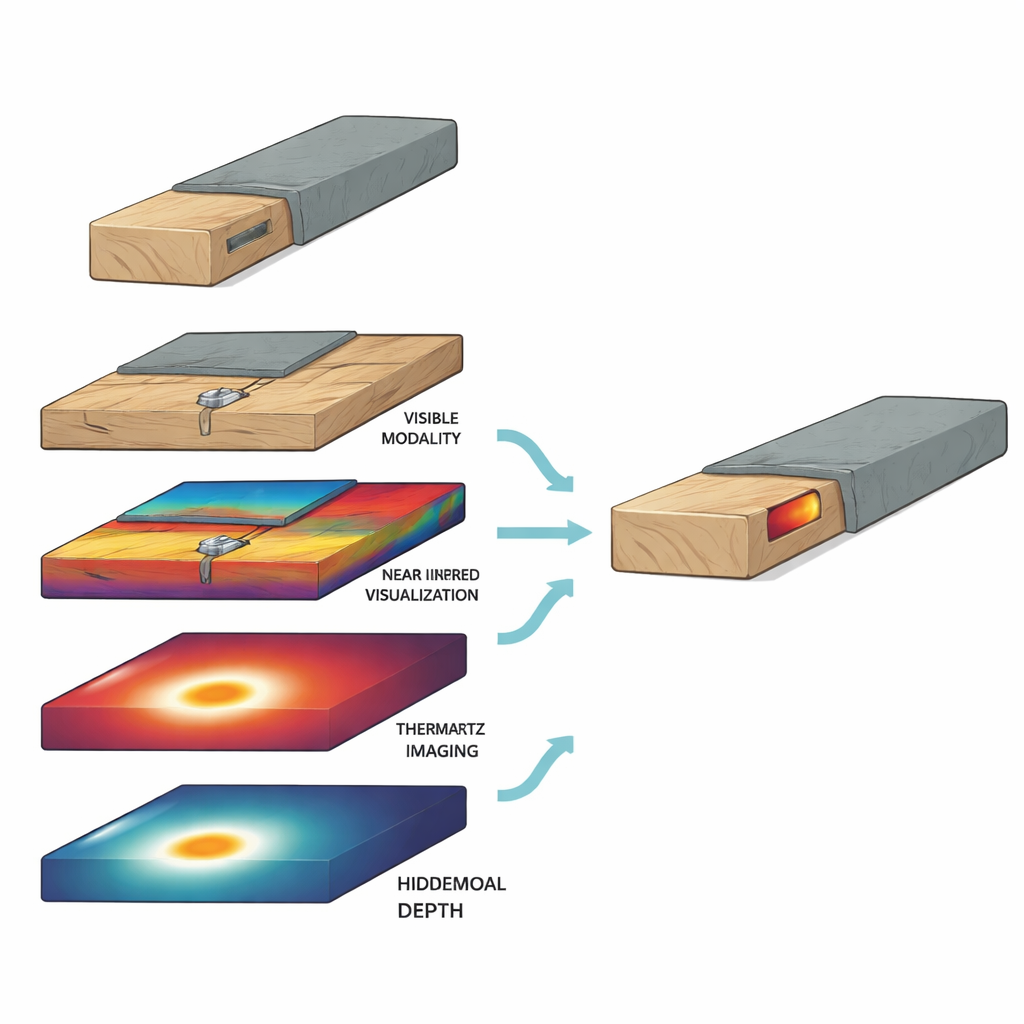

Conventional recycling machines usually rely on cameras that see roughly what our eyes see. That works well for clean, single objects, but bulky waste is messy: wood can be painted, covered in fabric, wrapped in plastic, or reinforced with metal. The authors tackle this by combining four different “views” of the same waste items. They use a visible-light camera (ordinary color images), a near‑infrared camera that captures material‑specific spectral fingerprints, a thermal camera that watches how objects heat up and cool down, and a terahertz sensor that can sense structures buried beneath the surface. Each technology captures different physical properties, and together they offer a fuller picture than any one sensor alone.

From broken furniture to data for machines

To build the dataset, the team collected crushed furniture and other bulky leftovers from a local waste facility. They placed these mixed pieces on standardized boards that traveled under the four sensors on a conveyor belt, mimicking an industrial sorting line. Every board was imaged once by each sensor, and the four images were then carefully aligned so that each pixel in one image matched the same physical point in the others. Human annotators drew detailed outlines on the color images, marking wood, metals, plastics, minerals, upholstery, and several “covered” situations such as metal hidden under wood or wood hidden under fabric. These labels were transferred to the other sensor views, producing 56 fully aligned scenes and 22,659 small image patches ready for training and testing machine‑learning models.
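
Because every pixel in the aligned images refers to the same physical point, a single annotation mask can label all sensor views at once, and each scene can then be cut into small patches. The Python sketch below illustrates that idea with NumPy. It is not the authors' pipeline: the helper `extract_patches`, the patch size, the stride, and the rule that a patch counts as “wood” when at least half of its pixels are annotated as wood are all hypothetical choices made for illustration.

```python
import numpy as np


def extract_patches(cube, mask, patch=32, stride=32, wood_frac=0.5):
    """Cut a co-registered scene into square patches with wood/non-wood labels.

    cube: (H, W, C) array holding all channels of one aligned scene.
    mask: (H, W) boolean array, True where annotators marked wood.
    patch, stride, wood_frac: hypothetical settings chosen for illustration.
    """
    h, w, _ = cube.shape
    patches, labels = [], []
    for y in range(0, h - patch + 1, stride):
        for x in range(0, w - patch + 1, stride):
            patches.append(cube[y:y + patch, x:x + patch])
            # Because the sensors are aligned pixel by pixel, the same mask
            # window labels this patch in every modality.
            wood_share = mask[y:y + patch, x:x + patch].mean()
            labels.append(int(wood_share >= wood_frac))
    return np.stack(patches), np.array(labels)


# Toy scene: 64 x 96 pixels, 5 channels, left half annotated as wood.
cube = np.zeros((64, 96, 5), dtype=np.float32)
mask = np.zeros((64, 96), dtype=bool)
mask[:, :48] = True
X, y = extract_patches(cube, mask)
print(X.shape, y.tolist())  # (6, 32, 32, 5) [1, 1, 0, 1, 1, 0]
```

The middle column of patches is exactly half wood, so its label flips depending on the threshold; how borderline patches are handled is a design choice any real pipeline has to make explicitly.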

Teaching computers to spot wood and hidden hazards

The central task in WoodVIT is simple to state: decide whether each small patch of an image is “wood” or “non‑wood.” Under the hood, this involves handling 717 channels of information per patch across the four sensors. The authors tested several neural‑network models on this task, training them either on single sensors or on all sensors combined. Models using only color images did reasonably well, but those that fused information from all four sensors performed better and more consistently. While thermal and terahertz data alone were harder to learn from, they became valuable when combined with color and near‑infrared views, especially in tricky scenes where wood is coated, stacked, or hiding metal parts.
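
One common way to fuse sensors is to stack their co-registered channels into a single tall input per pixel (often called early fusion), so that a network sees every channel at once, while a single-sensor model receives only one sensor's slice. The Python sketch below shows this with NumPy under stated assumptions: the helper `fuse`, the sensor names, the small per-sensor channel counts in the example, and the per-channel standardization step are hypothetical and are not taken from the paper. In WoodVIT the combined stack has 717 channels.

```python
import numpy as np


def fuse(modalities, use):
    """Early fusion: stack co-registered patches along the channel axis.

    modalities: dict mapping a sensor name to an (N, H, W, C_sensor) array.
    use: sensor names to include, e.g. ["vis"] for a single-sensor model.
    """
    parts = []
    for name in use:
        x = modalities[name].astype(np.float32)
        # Assumed preprocessing: standardize every channel over the batch so
        # sensors with very different value ranges (e.g. temperatures vs.
        # reflectances) are on a comparable scale before they are combined.
        mean = x.mean(axis=(0, 1, 2), keepdims=True)
        std = x.std(axis=(0, 1, 2), keepdims=True) + 1e-6
        parts.append((x - mean) / std)
    return np.concatenate(parts, axis=-1)


# Hypothetical channel counts, much smaller than the real 717-channel stack.
rng = np.random.default_rng(0)
mods = {
    "vis": rng.random((4, 8, 8, 3)),
    "nir": rng.random((4, 8, 8, 10)),
    "ir": 290 + 10 * rng.random((4, 8, 8, 1)),  # kelvin-like values
    "thz": rng.random((4, 8, 8, 2)),
}
print(fuse(mods, ["vis"]).shape)                      # (4, 8, 8, 3)
print(fuse(mods, ["vis", "nir", "ir", "thz"]).shape)  # (4, 8, 8, 16)
```

Without some form of scaling, a thermal channel measured in hundreds of kelvin could dominate reflectance channels between 0 and 1, which is one plausible reason a fused model needs care before it outperforms a color-only one.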

Making sense of occlusion and complex scenes

A distinctive feature of WoodVIT is its focus on realistic, “non‑ideal” situations. The dataset includes boards where metal screws are embedded inside wood, or where wooden frames are wrapped in foam or fabric. For these covered cases, the researchers built the ground truth in two steps: they first imaged and labeled the base layer, then added the cover, re‑imaged, and merged the labels. This careful design makes it possible to judge how well different sensing combinations reveal what lies beneath the surface. The authors also explored pixel‑level segmentation using a popular neural‑network design that outlines wood regions within each patch. Both color and near‑infrared inputs produced accurate outlines, showing that the data support not only yes/no decisions but also detailed maps of where the wood actually is.
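
The two-step labeling of covered scenes can be pictured as overlaying two label maps: the annotation of the base layer and the annotation made after the cover was added. The Python sketch below merges them into combined “covered” classes with NumPy. The class IDs, the `COVERED` lookup table, and `merge_layers` are hypothetical; the dataset's actual label encoding and merging rules may differ.

```python
import numpy as np

# Hypothetical class IDs for illustration only.
BACKGROUND, WOOD, METAL, UPHOLSTERY = 0, 1, 2, 3
# (base class, cover class) -> combined "covered" class
COVERED = {
    (METAL, WOOD): 10,       # metal hidden under wood
    (WOOD, UPHOLSTERY): 11,  # wood hidden under fabric or foam
}


def merge_layers(base, cover):
    """Merge a base-layer label map with the label map taken after covering."""
    # The visible (cover) label wins wherever something was placed on top.
    merged = np.where(cover != BACKGROUND, cover, base)
    # Where a known material sits under a known cover, use the combined class.
    for (under, over), combined in COVERED.items():
        merged[(base == under) & (cover == over)] = combined
    return merged


base = np.array([[METAL, WOOD, BACKGROUND], [WOOD, BACKGROUND, METAL]])
cover = np.array([[WOOD, UPHOLSTERY, UPHOLSTERY], [BACKGROUND, WOOD, BACKGROUND]])
print(merge_layers(base, cover).tolist())  # [[10, 11, 3], [1, 1, 2]]
```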

What this means for future recycling

For non‑specialists, the key message is that smarter recycling is not just about building a better camera — it is about combining many ways of seeing into a single, coherent view. WoodVIT provides the raw material for that: a publicly available, carefully labeled collection of images that captures how real bulky waste looks across visible, infrared, and terahertz bands. By enabling researchers to train and compare advanced algorithms on the same challenging, multimodal data, this work lays the groundwork for next‑generation sorting systems that can recover more usable wood, spot hidden metal contaminants, and ultimately make bulky‑waste recycling cleaner, safer, and more efficient.

Citation: Bihler, M., Roming, L., Čibiraitė-Lukenskienė, D. et al. Multimodal and Hyperspectral Dataset for Segmentation of Bulky Waste using VIS, IR, NIR, and Terahertz Imaging. Sci Data 13, 498 (2026). https://doi.org/10.1038/s41597-026-07053-1

Keywords: bulky waste recycling, multimodal imaging, hyperspectral data, wood sorting, sensor fusion