Clear Sky Science

A Benchmark X-ray Dataset for Pediatric Supracondylar Humerus Fractures with Improved YOLOv11-Based Detection

Why this matters for kids with broken elbows

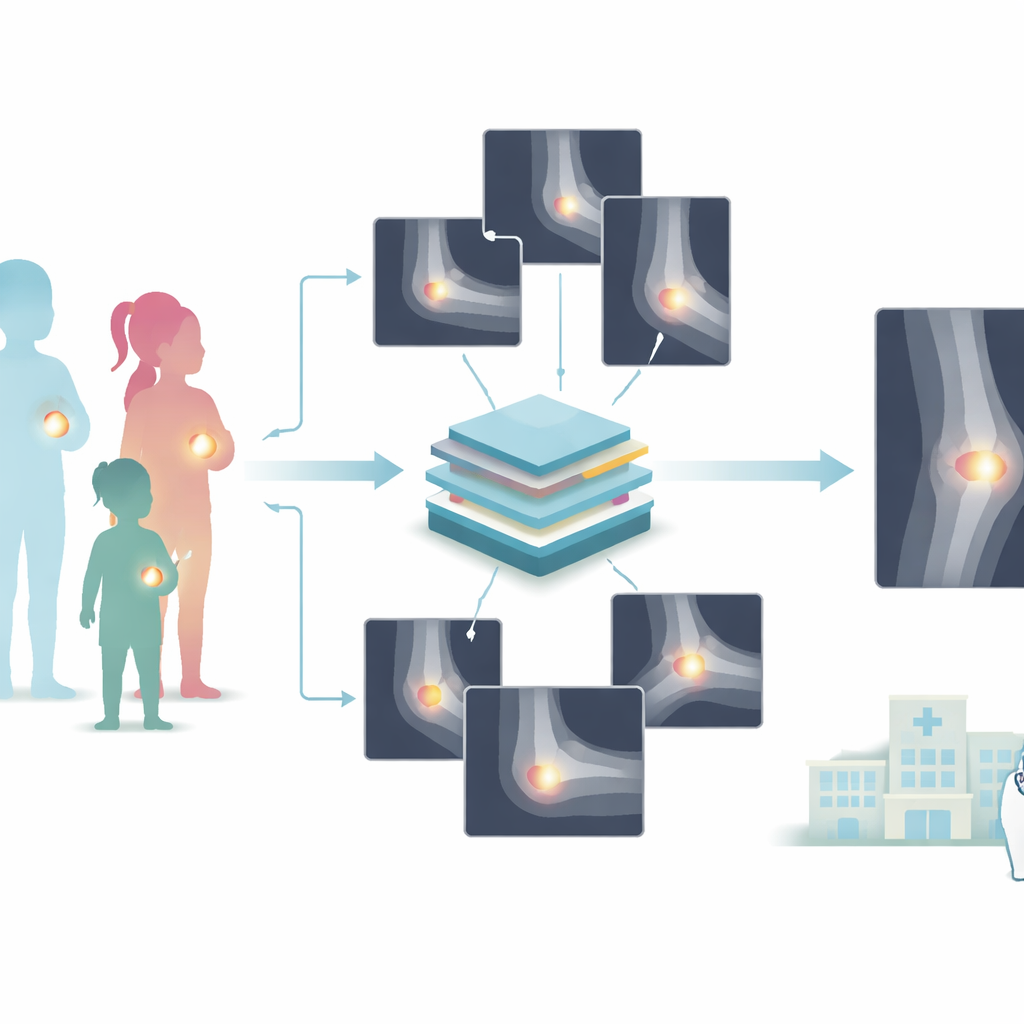

When a child falls and hurts an elbow, doctors often rely on X-ray images to decide whether the bone is broken and how serious the injury is. Some fractures around the elbow are tiny, hard to see, and easy to miss even for experienced clinicians. This study introduces a large, carefully checked collection of children’s elbow X-rays and a smarter computer-vision system designed to spot these tricky breaks, with the goal of supporting faster and more reliable care in emergency rooms and clinics.

A closer look at a common childhood injury

Among all elbow injuries in children, breaks just above the elbow joint, called supracondylar humerus fractures, are the most frequent. They usually happen when a child falls on an outstretched hand. If these fractures are not recognized and treated quickly, they can lead to serious complications, including damage to nerves and blood vessels, muscle swelling that threatens circulation, and bone healing in the wrong position that affects arm function. Today, diagnosis depends on human experts interpreting elbow X-rays, which can be challenging because children’s bones are still forming and fracture lines may be faint or hidden among normal structures.

Building a high-quality X-ray library

To address this challenge, the researchers created a new open dataset called PediaSHF-DX. It contains 10,325 elbow X-ray images from 5,163 pediatric patients seen at a large children’s hospital over an 11-year period. These images capture a wide range of ages, views, and real-world imaging conditions, making the collection realistic and varied. For the core training set, two senior orthopedic surgeons independently marked the exact regions of fractures on 2,015 images, then cross-checked and reconciled any differences. This double-blind, dual-review process was designed to reduce personal bias and ensure that the “ground truth” used to train computer models closely matches clinical reality. The remaining 8,310 images were kept separate to test how well algorithms trained on the labeled subset would perform on new, unseen cases.
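One way to picture the cross-checking step is as an overlap test between the two surgeons' marked regions. The sketch below is only an illustration under stated assumptions: the summary does not say how disagreements were detected, so the box format, the `needs_reconciliation` helper, and the 0.5 overlap threshold are all hypothetical. It uses intersection over union (IoU), a standard overlap measure in object detection.

```python
from typing import Tuple

# Hypothetical box format: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    overlap_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    overlap_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = overlap_w * overlap_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def needs_reconciliation(box_a: Box, box_b: Box, threshold: float = 0.5) -> bool:
    """Flag an image for joint review when the two boxes overlap too little.

    The 0.5 threshold is an illustrative assumption, not a value from the study.
    """
    return iou(box_a, box_b) < threshold


# Made-up annotations from two reviewers for the same image.
surgeon_1 = (100.0, 120.0, 180.0, 200.0)
surgeon_2 = (110.0, 130.0, 190.0, 210.0)
print(round(iou(surgeon_1, surgeon_2), 3), needs_reconciliation(surgeon_1, surgeon_2))
```

In a workflow like this, images whose boxes agree closely could be accepted as they are, while flagged images would go back to both reviewers for discussion.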

Teaching a computer to see tiny cracks

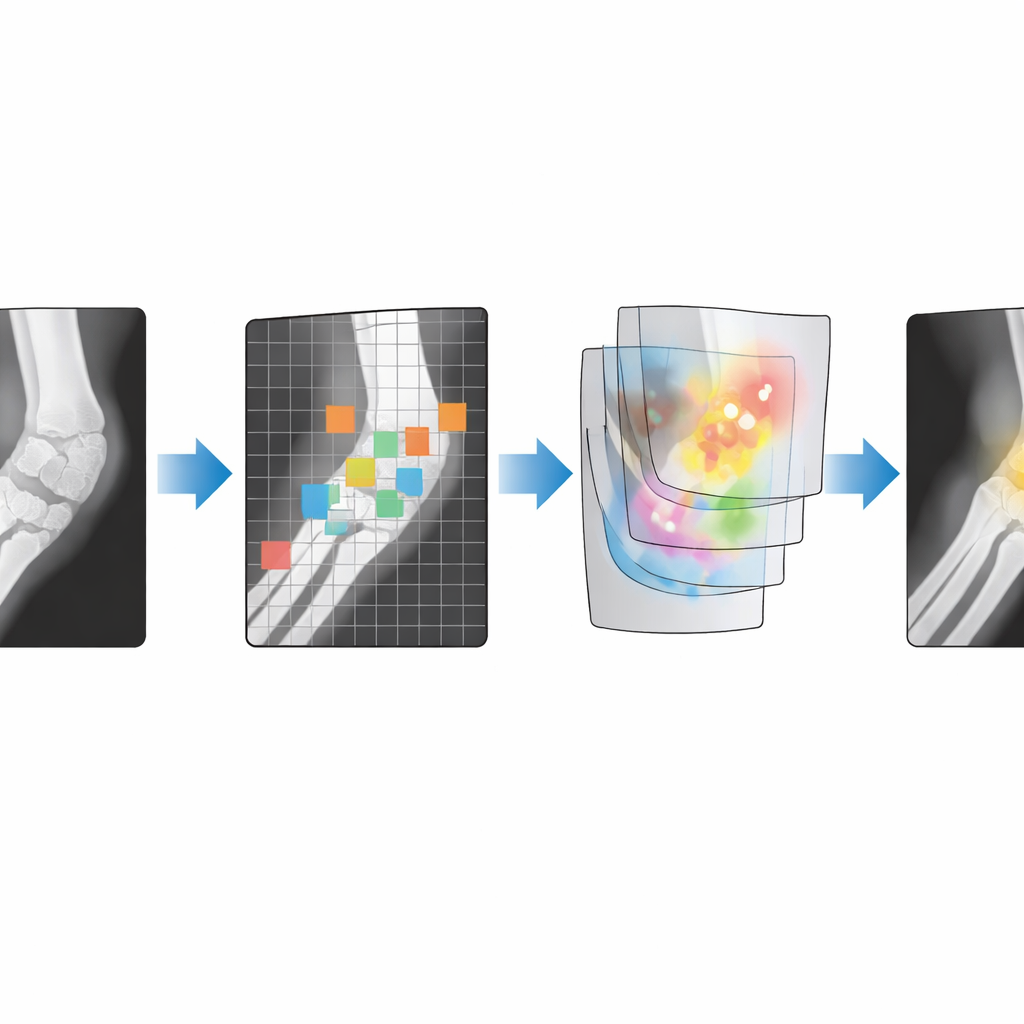

On top of this dataset, the team built an improved version of a popular object-detection system known as YOLOv11. Children’s elbow fractures are often small, have blurry edges, and may run in the same direction as the normal internal patterns of bone, so a standard algorithm can struggle to distinguish them from background detail. To overcome this, the authors redesigned parts of the model’s inner structure. They added an upgraded feature-extraction block that builds richer internal representations of the image at lower computational cost, and they embedded a “local attention” mechanism that steers the network toward small patches and fine textures rather than spreading its attention across the entire image. They also adjusted how information flows between layers so that details at different scales are combined more effectively.
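The general idea of local attention can be shown with a toy example. The sketch below is not the authors' module: it is a minimal, assumption-laden illustration in which each cell of a small grid of numbers looks only at its immediate neighbours, with no learned parameters. The function name `local_attention` and the `radius` parameter are invented for this example.

```python
import math
from typing import List

Grid = List[List[float]]


def local_attention(features: Grid, radius: int = 1) -> Grid:
    """Windowed self-attention over a 2D grid of scalar features.

    Each position attends only to cells within `radius` steps of itself
    (a window clipped at the image borders). Queries, keys and values are
    the raw features, a simplification: real networks learn projections.
    """
    h, w = len(features), len(features[0])
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            q = features[i][j]
            neighbours = [
                features[y][x]
                for y in range(max(0, i - radius), min(h, i + radius + 1))
                for x in range(max(0, j - radius), min(w, j + radius + 1))
            ]
            scores = [q * k for k in neighbours]
            top = max(scores)  # subtract the max for numerical stability
            weights = [math.exp(s - top) for s in scores]
            total = sum(weights)
            out[i][j] = sum(wt * v for wt, v in zip(weights, neighbours)) / total
    return out


# A 5x5 "image" that is blank except for one bright spot in the corner.
grid = [[0.0] * 5 for _ in range(5)]
grid[0][0] = 5.0
result = local_attention(grid, radius=1)
print(result[1][1], result[4][4])
```

Because each cell sees only its own window, the bright spot influences its neighbours but leaves distant cells untouched. That is the property that makes local attention attractive for small, faint features such as hairline fracture lines.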

How well does the system perform?

Using the curated training images, the improved model was trained and then evaluated on the large independent test set. During training, all measures of error steadily dropped and leveled off, suggesting that the system learned meaningful patterns without overfitting. When compared with other well-known detection methods, such as Faster R-CNN (a widely used two-stage detector) and DETR (a transformer-based system), the enhanced YOLO model achieved the highest accuracy in identifying fracture regions. Careful “ablation” tests, in which components are added or removed one at a time, showed that each design change, especially the local attention module and the adjusted information pathways, provided measurable gains. Combined, these two changes boosted performance more than either did alone, indicating that the dataset is detailed enough to reveal the benefits of subtle architectural tweaks.

Sharing data and tools for wider use

Beyond the model itself, the authors paid close attention to ethics and openness. All images were converted from their original hospital format, stripped of personal identifiers, and screened for image quality before inclusion. The dataset is organized into clearly labeled folders for raw training images, surgeon annotations, validation images, and model output, and everything—data as well as code for preprocessing, training, and visualization—is made freely available on a public platform. This structure is intended to make it easy for other researchers to reproduce the study or to develop their own algorithms for pediatric fracture detection and related tasks.

What this means for real-world care

For non-specialists, the key message is that the study delivers both a large, trustworthy set of pediatric elbow X-rays and an AI system tuned to pick up small, easy-to-miss fractures. While such tools are not meant to replace doctors, they can serve as a second pair of eyes, highlighting suspicious regions on an image and helping reduce missed injuries in busy clinics or hospitals with fewer specialists. By openly sharing the PediaSHF-DX dataset and the associated code, the authors aim to accelerate the development of reliable, child-focused imaging AI that supports safer, more consistent fracture diagnosis around the world.

Citation: Xiong, Z., Zheng, K., Chen, H. et al. A Benchmark X-ray Dataset for Pediatric Supracondylar Humerus Fractures with Improved YOLOv11-Based Detection. Sci Data 13, 641 (2026). https://doi.org/10.1038/s41597-026-07029-1

Keywords: pediatric fractures, elbow X-ray, medical imaging AI, object detection, clinical dataset