Clear Sky Science · en

Data-In-situ Computing with One-Pixel-Multiple-Memristor Architecture for Neuromorphic Sequential Vision

Why faster vision matters

Every time a camera in a phone, robot or self-driving car records the world, it must first capture images, then ship them off to a separate chip for analysis. This back-and-forth wastes time and energy, especially for video streams. The study behind this article explores a new kind of electronic “eye” that can both store and process visual information close to where light first lands, taking inspiration from how the human brain handles moving scenes.

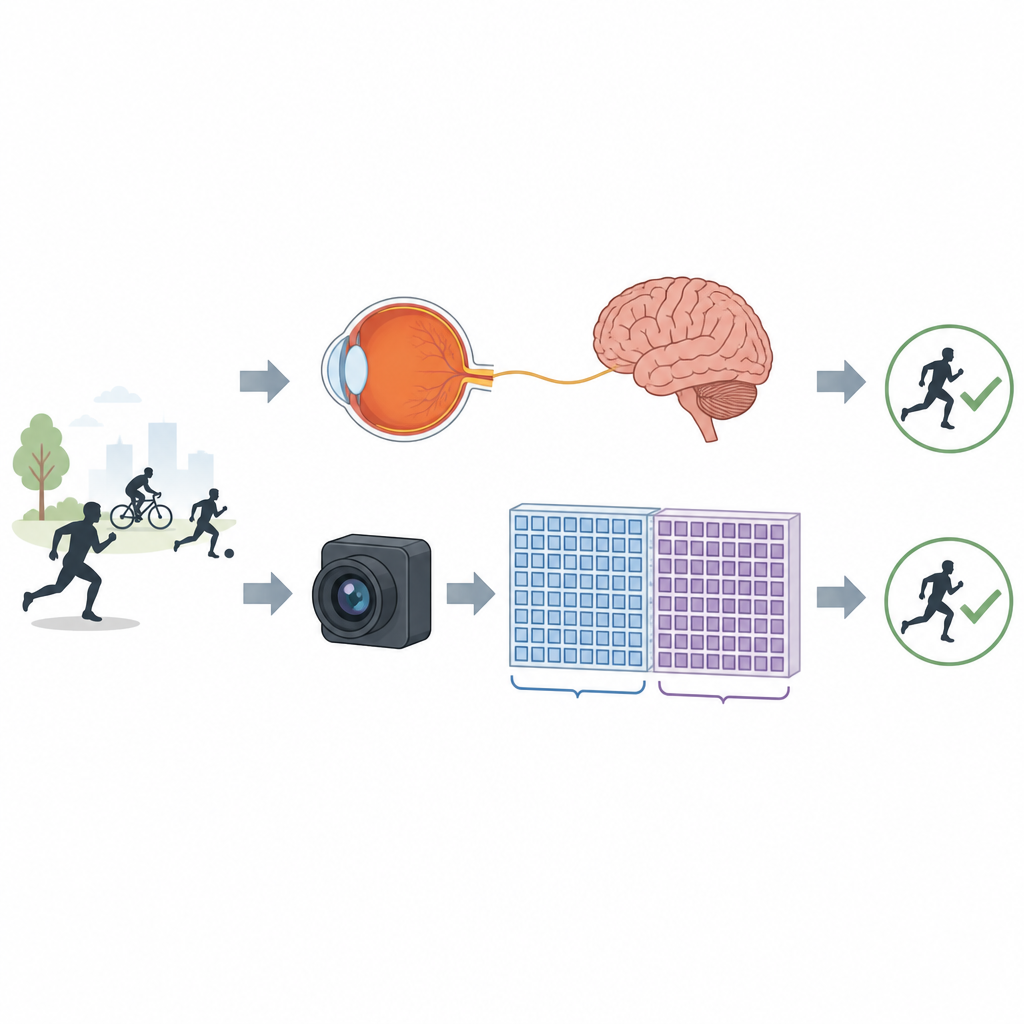

How our eyes and brain handle motion

In people, the eye turns light into tiny electrical pulses that travel along nerves to the brain. There, a kind of short-term visual memory holds recent images and does some quick pre-sorting before deeper recognition happens. This early filtering cuts down how much information needs to move around, helping the brain stay both fast and energy-efficient. The new work borrows this idea, aiming to give artificial vision systems their own local visual working memory.

A new pixel and memory partnership

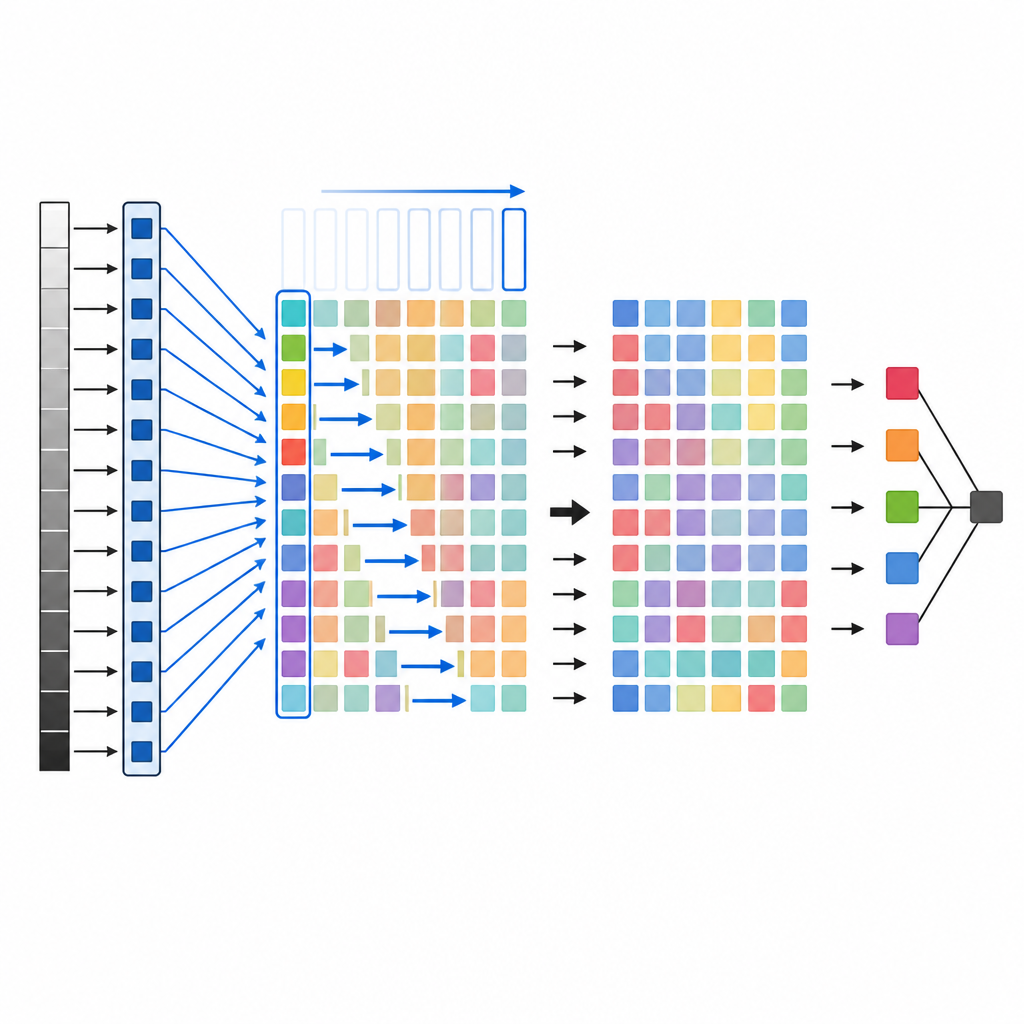

The researchers built a hardware system in which each light-sensing pixel is linked to many tiny memory elements on a chip. These elements, called memristors, can store a range of values rather than just on and off, which makes them well suited to holding shades of brightness. In the design, a simple analog circuit converts the pixel’s light signal into a voltage that directly programs several memristors at once. This one-pixel-to-many-memristors layout creates a compact map of the scene directly in the memory grid, similar to how nerve fibers from the retina fan out to many brain cells.
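The idea of one pixel programming several multi-level memory cells can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `pixel_to_memristors`, the count of four cells per pixel and the 16 storage levels are hypothetical and are not taken from the study.

```python
import numpy as np

def pixel_to_memristors(brightness, n_cells=4, levels=16):
    """Map one pixel brightness (0..1) onto several multi-level cells.

    Assumption: every cell linked to the pixel receives the same
    programming voltage, so all cells end up at the same level.
    """
    brightness = float(np.clip(brightness, 0.0, 1.0))
    # Snap the brightness to the nearest of the available levels.
    level = int(np.round(brightness * (levels - 1)))
    # Return normalized conductances, one entry per memristor.
    return np.full(n_cells, level / (levels - 1))

dark = pixel_to_memristors(0.0)
bright = pixel_to_memristors(1.0)
grey = pixel_to_memristors(0.4)
```

A binary memory cell would correspond to `levels=2`; the extra levels are what let a single cell hold a shade of grey.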

Rolling through images quickly

To capture moving pictures efficiently, the team introduced a “rolling exposure” strategy. Instead of grabbing an entire frame and sending it away, the system writes one column of pixels into the memristor array, then swiftly moves to the next column until the full image is stored. A special single-pulse method programs many memristors in parallel, trading a little precision for a large gain in speed. Tests with simple human-action silhouettes and a portrait image show that the images restored from the chip keep key shapes and faces clear enough for reliable recognition, even though some small noise is present.
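The column-by-column write can be mimicked with a small simulation. The sketch below is a toy model, not the authors' procedure: the function name `rolling_exposure_write`, the Gaussian noise model and the `noise_std` value are assumptions chosen only to show how a parallel single-pulse write might leave small errors in the stored image.

```python
import numpy as np

def rolling_exposure_write(frame, noise_std=0.02, seed=0):
    """Store a frame (values in 0..1) one column at a time.

    Assumption: a single-pulse write programs every cell in a column
    at once and leaves a small random error on each stored value.
    """
    rng = np.random.default_rng(seed)
    rows, cols = frame.shape
    array = np.zeros((rows, cols))
    for c in range(cols):
        # One parallel write per column, then move on to the next column.
        write_error = rng.normal(0.0, noise_std, size=rows)
        array[:, c] = np.clip(frame[:, c] + write_error, 0.0, 1.0)
    return array

# A tiny 8x8 "silhouette": a bright block on a dark background.
frame = np.zeros((8, 8))
frame[2:6, 3:5] = 1.0

stored = rolling_exposure_write(frame)
max_error = np.abs(stored - frame).max()
```

With `noise_std=0.0` the stored array equals the input exactly, which makes the precision-for-speed trade easy to see by varying that one parameter.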

Thinking where the images live

Most current smart vision hardware still separates sensing, storage and computing. In contrast, this system performs part of the “thinking” directly inside the same memristor array that holds the image. The researchers apply carefully chosen voltage patterns across the stored picture, letting the grid itself carry out the basic math steps of a neural network. Only the condensed results then travel to a second memristor block that finishes the classification. In tests on a well-known human-action dataset, the hardware recognized motions such as running, jumping and walking with 95.7 percent accuracy, close to the accuracy of computer simulations.
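The "basic math step" a memristor grid performs is a multiply-and-add: by Ohm's law each cell passes a current equal to its conductance times the applied voltage, and the currents sharing a wire sum together. The sketch below illustrates that principle with made-up numbers. The names `crossbar_currents` and `second_block_weights`, and all values, are hypothetical and do not reproduce the network used in the study.

```python
import numpy as np

def crossbar_currents(conductances, voltages):
    """Column currents of a crossbar: I_j = sum_i V_i * G_ij."""
    return voltages @ conductances

# Stored image as normalized conductances: a vertical bar in the middle column.
stored_image = np.array([
    [0.0, 1.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 1.0, 0.0],
])

# Voltage pattern applied to the rows (a hypothetical filter).
voltage_pattern = np.array([1.0, 1.0, 1.0])

# First stage: computed inside the array that holds the image.
features = crossbar_currents(stored_image, voltage_pattern)

# Second stage: only the condensed features move to another block.
second_block_weights = np.array([
    [1.0, 0.0],
    [0.0, 1.0],
    [1.0, 0.0],
])
scores = crossbar_currents(second_block_weights, features)
predicted_class = int(np.argmax(scores))
```

Here nine stored values are reduced to three currents before anything leaves the first array, which is the data-movement saving the approach relies on.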

Why this approach could reshape machine eyes

Because sensing, short-term storage and early processing are tightly woven together, the new architecture greatly cuts the need to shuttle data between separate chips. The authors estimate that their design can reduce the time delay for image capture and storage about 2,000-fold and cut the energy for image processing about 160-fold, compared with a typical digital system using standard memory. For everyday users, that could someday translate into smaller, cooler and more responsive cameras and vision-guided gadgets that watch the world more like we do, taking only what they need from each moment in time.

Citation: Sun, Y., Tong, P., Shen, J. et al. Data-In-situ Computing with One-Pixel-Multiple-Memristor Architecture for Neuromorphic Sequential Vision. Nat Commun 17, 4244 (2026). https://doi.org/10.1038/s41467-026-70860-y

Keywords: neuromorphic vision, memristor, in-memory computing, sequential images, energy-efficient AI