Clear Sky Science

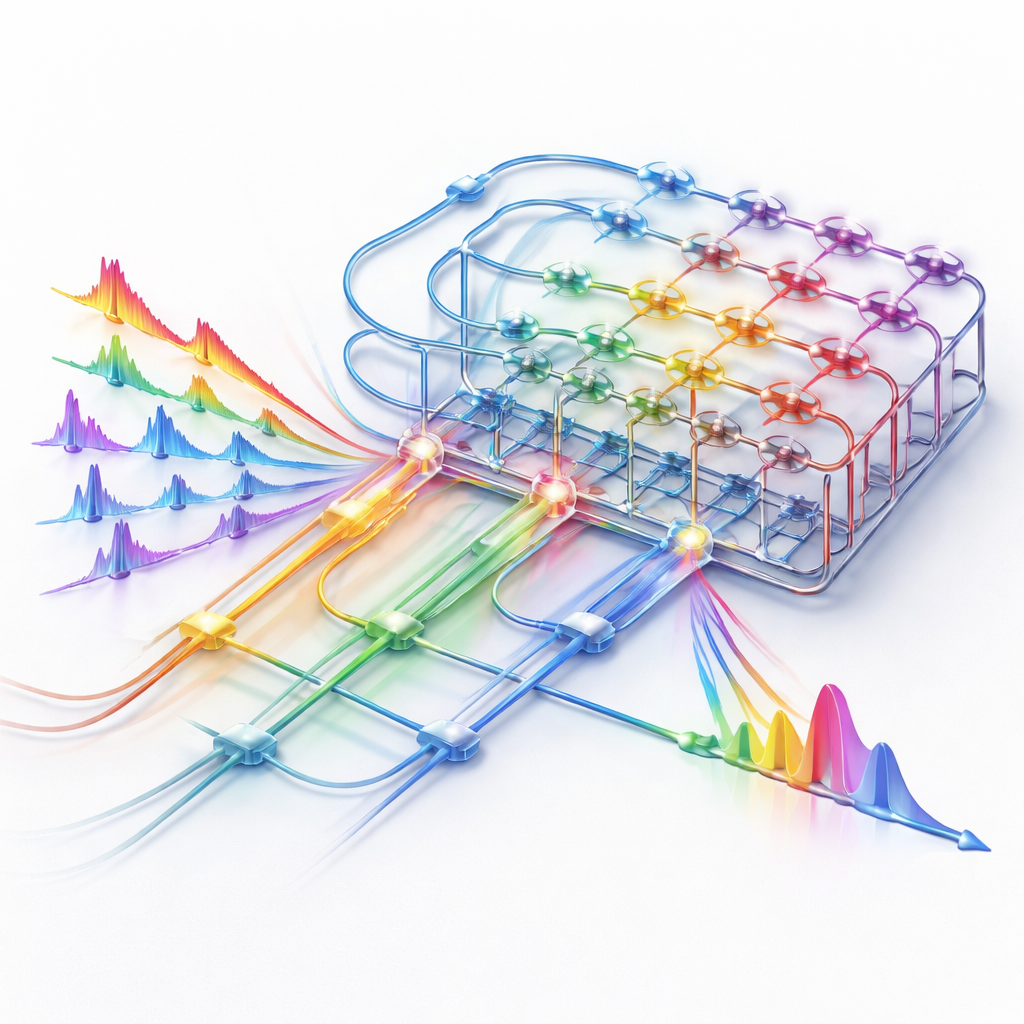

Integrated photonic 3D tensor processing engine

Why Faster Thinking Machines Matter

From self-driving cars to medical scanners and virtual reality, our world increasingly relies on computers that can understand complex, three-dimensional data in real time. Today’s artificial intelligence systems are powerful, but the electronic chips that drive them are straining under the demand for ever larger, faster neural networks. This paper presents a new way to handle such 3D data using light instead of electricity, promising quicker, more efficient “thinking” machines that could eventually make cars safer, diagnoses faster, and online experiences more immersive.

From Flat Pictures to 3D Worlds

Many familiar AI systems work on flat images—two-dimensional grids of pixels—using what are known as convolutional neural networks. But modern sensors, such as medical scanners and laser-based LiDAR on autonomous vehicles, capture full 3D scenes over time. These richer data sets are naturally described as “tensors,” or multi-dimensional arrays. Processing them with 3D neural networks is extremely powerful but also extremely demanding: the amount of computation and memory needed grows rapidly with each added dimension. Conventional electronic accelerators like GPUs and TPUs are mostly built to handle flat, two-dimensional matrix operations, so they must constantly reshape and shuffle 3D data, wasting time, energy, and memory.

Letting Light Do the Heavy Lifting

The researchers introduce an integrated photonic 3D tensor processing engine that performs a key step of 3D neural networks directly with light. Instead of repeatedly moving data between memory and electronic processors, their system sends information as optical signals that travel through tiny waveguides and resonators on a chip. Three different “axes” are used to encode and process data at once: the color (wavelength) of the light, the time at which pulses pass through, and the physical paths they take across the chip. By interleaving these three dimensions, the system can handle full 3D convolution operations without chopping them into many smaller tasks or relying on bulky electronic control hardware.
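For readers who want to see what a "3D convolution" actually computes, here is a minimal reference sketch in plain Python. It is not the authors' optical method: it only shows the arithmetic that the photonic engine is described as carrying out in light. The function name `conv3d_valid`, the tiny 3×3×3 volume, and the all-ones kernel are illustrative assumptions, and the mapping of the three loops onto wavelength, time, and path is not specified in this summary.

```python
def conv3d_valid(volume, kernel):
    """Direct 3D convolution without padding (cross-correlation form,
    as commonly used in neural networks). Inputs are nested lists."""
    depth, height, width = len(volume), len(volume[0]), len(volume[0][0])
    kd, kh, kw = len(kernel), len(kernel[0]), len(kernel[0][0])
    out = []
    for z in range(depth - kd + 1):
        plane = []
        for y in range(height - kh + 1):
            row = []
            for x in range(width - kw + 1):
                # Each output value is a weighted sum over a small 3D block.
                acc = 0.0
                for dz in range(kd):
                    for dy in range(kh):
                        for dx in range(kw):
                            acc += volume[z + dz][y + dy][x + dx] * kernel[dz][dy][dx]
                row.append(acc)
            plane.append(row)
        out.append(plane)
    return out


# Hypothetical toy data: a 3x3x3 volume holding the values 0..26
# and a 2x2x2 kernel of ones (so each output is a block sum).
volume = [[[float(9 * z + 3 * y + x) for x in range(3)] for y in range(3)] for z in range(3)]
kernel = [[[1.0] * 2 for _ in range(2)] for _ in range(2)]

result = conv3d_valid(volume, kernel)
print(result[0][0][0])  # 52.0
```

Even this toy case needs 64 multiply-and-add steps (8 outputs, 8 products each), which gives a feel for why the workload grows so quickly once a third dimension is added.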

Built-In Optical Memory and Synchronization

A central challenge in high-speed computing is keeping many channels of data precisely aligned in time. Traditional systems use complex electronic clock circuits and large memory buffers to do this. Here, the team solves the problem entirely in the optical domain. They add two optical memory units, made of tunable delay lines, before and after the main computing block. These delay lines act like adjustable waiting rooms for pulses of light, letting the system “cache” data and synchronize channels simply by changing how long each pulse travels on the chip. The delays can be tuned with picosecond (trillionth-of-a-second) precision and support effective clock rates up to around 200 billion operations per second, all without extra electronic timing hardware.
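The idea of synchronizing channels with delays can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the arrival times below are made up, the helper `alignment_delays_ps` is hypothetical, and a 20-gigabaud symbol rate is used purely to set a familiar time scale (one symbol every 50 picoseconds).

```python
def alignment_delays_ps(arrival_ps):
    """Return the extra delay (in picoseconds) each channel needs so that
    all channels line up with the latest-arriving one."""
    latest = max(arrival_ps)
    return [latest - t for t in arrival_ps]


# Assumed symbol rate: 20 gigabaud, i.e. one symbol every 1000 / 20 = 50 ps.
symbol_period_ps = 1000 // 20

# Hypothetical arrival times of four channels, in picoseconds.
arrivals_ps = [0, 12, 37, 50]

delays_ps = alignment_delays_ps(arrivals_ps)
aligned_ps = [t + d for t, d in zip(arrivals_ps, delays_ps)]

print(delays_ps)   # [50, 38, 13, 0]
print(aligned_ps)  # [50, 50, 50, 50]
```

In this toy example the earliest channel waits exactly one symbol period, while the others need delays of only a few tens of picoseconds, which is why picosecond-level tuning matters at these speeds.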

Smarter Light Circuits for Heavy Math

At the heart of the computing block is a grid of tiny ring-shaped optical resonators that control how strongly each light channel contributes to the final result—analogous to the adjustable weights in a neural network. The authors use a special dual-ring design on a multilayer photonic platform that makes these elements less sensitive to temperature changes and manufacturing imperfections, while offering a broad, flat optical response. That means the rings can handle high-speed signals with less distortion and maintain precise weight settings—better than 7 bits of effective precision—using simple calibration. In tests, the chip successfully performed four-channel matrix multiplications at symbol rates up to 30 gigabaud, demonstrating both speed and accuracy.
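To give a rough sense of what "7 bits of precision" means for a four-channel matrix multiplication, the sketch below rounds each weight to one of 128 evenly spaced levels and compares the result with the exact answer. This is a simplified stand-in, not the chip's behavior: real analog precision is set by noise and calibration rather than by rounding, and the weight range of -1 to 1, the matrix values, and the function names `quantize` and `matvec` are all assumptions made for illustration.

```python
def quantize(w, bits=7):
    """Round a weight in [-1, 1] to the nearest of 2**bits evenly spaced levels."""
    steps = 2 ** bits - 1          # 127 steps between -1 and 1 for 7 bits
    step = 2.0 / steps
    w = max(-1.0, min(1.0, w))     # clip to the assumed weight range
    return round((w + 1.0) / step) * step - 1.0


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


# Hypothetical 4x4 weight matrix and 4-channel input, all within [-1, 1].
weights = [
    [0.50, -0.25, 0.10, 0.80],
    [-0.33, 0.66, -0.90, 0.05],
    [0.12, 0.34, 0.56, -0.78],
    [-1.00, 0.00, 0.45, 0.99],
]
inputs = [0.9, -0.4, 0.7, 0.2]

exact = matvec(weights, inputs)
approx = matvec([[quantize(w) for w in row] for row in weights], inputs)
max_error = max(abs(a - b) for a, b in zip(exact, approx))

# Worst case: each of the 4 weights is off by half a step, inputs at most 1.
error_bound = 4 * (2.0 / 127) / 2
print(max_error <= error_bound)  # True
```

Under these assumptions, each output of the four-channel product can differ from the exact value by at most about 0.016, small compared with outputs that can range over several units.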

Real-World Test with 3D Laser Sensing

To show that their engine is useful beyond laboratory benchmarks, the team applied it to a practical 3D recognition problem: distinguishing pedestrians from vehicles in LiDAR point cloud data. They used a compact 3D neural network similar to known real-time models, trained its parameters digitally, and then offloaded the crucial 3D convolution step to the photonic engine. Operating at a 20-gigabaud symbol rate, the optical system produced feature maps that closely matched digital calculations and achieved a classification accuracy of about 97 percent—essentially the same as a traditional computer, but with the heavy 3D math carried out in light.

What This Means for Everyday Technology

In simple terms, this work shows that it is possible to build a compact optical “math engine” that directly tackles the hardest part of 3D AI workloads, while using less memory, fewer electronic components, and potentially much less energy than current designs. By keeping data caching, timing alignment, and computation all in the optical domain, the approach reduces complexity and opens a path to higher speeds and greater parallelism. As photonic integration improves and on-chip light sources and amplifiers mature, such 3D tensor engines could become key building blocks in future devices for autonomous driving, medical imaging, video analytics, and immersive virtual environments—quietly using beams of light to help machines see and understand our 3D world in real time.

Citation: Wu, Y., Ni, Z., Li, X. et al. Integrated photonic 3D tensor processing engine. Light Sci Appl 15, 154 (2026). https://doi.org/10.1038/s41377-026-02183-y

Keywords: photonic computing, 3D neural networks, optical accelerators, LiDAR recognition, tensor processing